How Autofocus (Often) Works

Clarke’s Law: Any sufficiently advanced technology is indistinguishable from magic. Arthur C. Clarke

Clark’s Law: Any sufficiently advanced cluelessness is indistinguishable from malice. J. Porter Clark

My Apologies

Nothing in online forums demonstrates the two laws above better than discussions involving camera autofocus systems. Clarke’s law because very, very few people have the slightest grasp of how autofocus works. Clark’s law because since they don’t understand autofocus, when the topic comes up the Three Rules of Online Discussion (1 – Establish blame early, 2 – Repeat it Loudly, 3 – Repeat it Often) take hold. So most discussions that start with “My camera (or this lens) doesn’t autofocus well” quickly end up in “because you’re a bad photographer” or “because that equipment sucks” perseveration.

Not that I really understood autofocus. Sure, I knew some things that are obviously true: phase detection AF is fast, contrast detection is slow; f/2.8 lenses focus more accurately than slower lenses; third party lenses tend to have more problems than manufacturer’s lenses. But since I didn’t understand how autofocus really worked, I didn’t understand why those things were true.

So, I thought I’d just go read about it on the internet and educate myself. Except (and you can’t really imagine my shock here) there wasn’t diddly-squat on the internet to read. A couple of short articles and blogs, at least one of which had some major errors that even I could see. A couple of marketing pieces by camera manufacturers full of claims but with few specifics. Nothing else. I had reached the end of the internet, at least as far as SLR autofocus was concerned.

So there it was—a vacuum waiting to be filled. My chance to write the definitive article on a topic had finally arrived, since, by definition, the only article would be the best article. (I guess it would also be the worst article, but I try to keep a positive attitude). Never one to let lack of knowledge stop me from writing on a topic, I decided to proceed at all speed.

But all speed wasn’t so fast. The camera manufacturers, understandably, don’t advertise how they make their autofocus systems work, they just advertise that theirs is different, somehow, and much better than anyone else’s. So I spent a month collecting and reading books, journal articles, even patent filings and then proceeded to write a comprehensive piece on autofocus.

This isn’t it. That ran about 14 pages and even I fell asleep halfway through proofreading it. This is the condensed, abridged, Cliff Notes version of that article. To get it to a reasonable length, I’ll have to leave out the optics and physics involved, but I’ve done my best to keep things accurate and included references at the end for those of you who want to see those things.

Let’s start with the simpler (and more accurate) of the two common types of autofocus systems used in SLRs: contrast assessment autofocus.

Contrast Assessment Autofocus

Contrast assessment is just that: the camera’s computer evaluates the histogram it sees from the sensor, moves the lens a bit and then reevaluates to see if there is more or less contrast. If contrast has increased, it continues moving the lens in that direction until contrast is maximized. If contrast decreased, it moves the lens in the other direction. Repeat as needed until contrast is maximized (which basically means moving slightly past perfect and then backing up once contrast has started to decrease again). A perfectly focused picture should be the one with the highest contrast.
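The move, assess, move loop above can be sketched in a few lines of Python. This is only an illustration, not any manufacturer's algorithm: `measure_contrast` is a hypothetical stand-in for "drive the lens to this position and score the contrast in the autofocus area", and the step sizes and 0 to 100 position scale are made up.

```python
def contrast_autofocus(measure_contrast, start, step=8.0, min_step=0.5,
                       lo=0.0, hi=100.0):
    """Hill-climb toward the lens position with the highest contrast.

    measure_contrast(position) is a hypothetical callback (an assumption,
    not a real camera API) that returns a contrast score at that position.
    """
    position = start
    best = measure_contrast(position)
    direction = 1          # first guess; it may well be the wrong way
    moves = 0
    while step >= min_step:
        candidate = min(hi, max(lo, position + direction * step))
        score = measure_contrast(candidate)
        moves += 1
        if score > best:
            # Contrast went up: keep going the same way.
            position, best = candidate, score
        else:
            # Contrast went down: we passed the peak (or guessed wrong),
            # so back up and take smaller steps.
            direction = -direction
            step /= 2
    return position, moves


def simulated_contrast(position, peak=37.3):
    """Made-up scene: contrast is highest when the lens sits at `peak`."""
    return 1.0 / (1.0 + (position - peak) ** 2)


position, moves = contrast_autofocus(simulated_contrast, start=80.0)
print(position, moves)
```

Note how many lens moves it takes to settle, including the first one in the wrong direction. That back-and-forth is the price of only being able to ask "better or worse?".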

If your camera shows a Live View histogram, you can contrast autofocus the right type of image (a shot where everything is at similar distance) to some degree by simply manually focusing until the histogram shows maximal contrast. In the camera’s contrast-detection autofocus, of course, only the small area marked as the autofocus detector is actually assessed, not the entire sensor. This both lets you choose the object you want to focus on and means the camera’s computer doesn’t have to process the entire image’s contrast, just the autofocus points.

Disadvantages of contrast assessment

The major drawback to contrast assessment autofocus is that it is slow. The pattern of move – assess – move – assess takes time and the camera may well start by moving focus in the wrong direction and then have to reverse itself. Because it is slow and offers no predictive possibilities, contrast detection is inappropriate for action or sports shooting. The slowness can be aggravating even for stills and portraits. Contrast assessment also requires a bit better light than phase-detection autofocus, and obviously it requires an area of good contrast in the image.

Advantages of contrast assessment

Contrast assessment autofocus does have some advantages though, that have not only kept it in use, but are now increasing how much it is used. The first advantage is that it’s simpler. It doesn’t require the additional sensors and chips that phase detection autofocus requires. Simplicity lowers cost and (along with the fact that autofocus speeds are not as critical) is the main reason contrast detection is used in point and shoot cameras. (The other reason is that point and shoot cameras by nature have a large depth-of-field, so accurate focus is not as critical, either.)

Simplicity also reduces size. Mirrorless systems place a priority on small size, and the contrast detection system does not require the additional light paths, prisms, mirrors and lenses that a phase detection system does. This is a critical advantage for the small interchangeable lens cameras we’re starting to see enter the market, all of which use contrast detection.

The second advantage is that contrast assessment can use the image sensor itself to determine focus. There is not a separate light path with prisms, mirrors, etc. that all could be less than perfectly calibrated to the sensor. During contrast assessment autofocus, the sensor is evaluating the actual image it receives, not a separate image that should be (and should be doesn’t mean the same as is) accurately calibrated to the sensor.

For this reason contrast detection, when using the image sensor, provides more reliably accurate autofocus than does the more common phase detection system. The key phrase here is “when using the image sensor for contrast detection”. Olympus and Sony standard-sized (not mirrorless) SLRs use a second, smaller sensor in a separate light path to generate the Live View image and provide contrast assessment. Like any calibrated system, it’s possible for the second sensor to not be accurately calibrated to the image sensor.

In summary then, contrast detection is simpler, cheaper, smaller, and theoretically more accurate than phase detection autofocus. But it is much, much slower. Camera companies are working hard to speed up contrast detection autofocus, and are making some strides, but for the near future it will remain slower.

Phase Detection Focusing

Basic Principle

The basic design of phase detection (AKA phase matching) autofocus was originated by Honeywell in the 1970s, but was first widely used in the Minolta Maxxum 7000 camera. Honeywell sued Minolta for patent infringement and won, so all camera manufacturers had to pay Honeywell for the rights to phase detection autofocus.

Phase detection uses the principle that when a point is in focus, the light rays coming from it will equally illuminate opposite sides of the lens (it is ‘in phase’). If the lens is focused in front of or behind the point in question, the light rays at the edge of the lens arrive in a different position (out of phase).

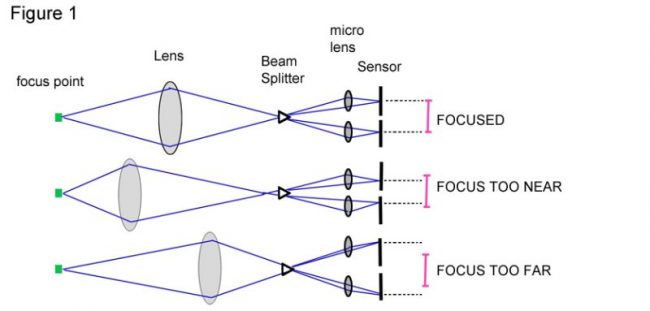

There are different ways to determine if the light is in or out of phase, but most current systems use mirrors, lenses, or a prism (beam splitter) to split the rays coming from opposite edges of the lens into two rays and secondary lens systems to refocus these rays on a linear sensor (usually CCD). The autofocus sensor produces a signal showing where the light rays from the opposite edges of the lens strike. If the image is properly focused, the rays from each side strike the sensor a certain distance apart. If the lens is focused in front of or behind the object, light rays from opposite sides will strike too close together or too far apart (Figure 1).

Please note: the preceding paragraph and figure are a very superficial synopsis of how phase detection works. A full treatment would take two pages of physics and formulas, plus alternative methods. But for practical, “how it works” purposes, it is accurate.

It’s obvious from figure 1 that phase detection can immediately tell the camera that the lens is focused too near or too far from the object of interest, so one of the disadvantages we saw with contrast detection (the camera doesn’t know which way to move the focus) is already overcome – instead of moving back and forth and deciding which direction has more contrast, phase detection tells the camera: that way.
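To make the "that way" idea concrete, here is a minimal Python sketch. It assumes the autofocus sensor hands back two brightness profiles, one from each side of the lens, and finds how far one is shifted relative to the other. The function names, the in-focus reference separation and the test signal are all hypothetical, and which sign means front focus versus back focus depends on the optical layout, so the sketch only reports "too far apart" or "too close together".

```python
def best_shift(left, right, max_shift):
    """Return the pixel shift of `right` relative to `left` that minimises
    the mean absolute difference over the overlapping samples."""
    n = len(left)
    best, best_err = 0, float("inf")
    for shift in range(-max_shift, max_shift + 1):
        total, count = 0.0, 0
        for i in range(n):
            j = i + shift
            if 0 <= j < n:
                total += abs(left[i] - right[j])
                count += 1
        err = total / count
        if err < best_err:
            best, best_err = shift, err
    return best


def focus_error(left, right, in_focus_shift=0, max_shift=10):
    """Compare the measured separation with the (assumed) in-focus one.

    The sign of the result says which way to drive the lens; its size
    says roughly how far. No back-and-forth searching is needed.
    """
    error = best_shift(left, right, max_shift) - in_focus_shift
    if error == 0:
        verdict = "in focus"
    elif error > 0:
        verdict = "rays too far apart"
    else:
        verdict = "rays too close together"
    return error, verdict


# Made-up line-sensor readings: the same bright bar, displaced by 3 pixels.
left = [0] * 20 + [1] * 5 + [0] * 20
right = [0] * 23 + [1] * 5 + [0] * 17
print(focus_error(left, right))
```

One reading gives both direction and distance, which is exactly what contrast detection cannot do.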

Less obvious is the computing that goes on. Each autofocus lens has a chip that has already told the camera, for example, “I’m a 50mm f/1.4 lens and my focus element is located at 20% less than infinity” or something similar. When you push the shutter button halfway down, several steps take place:

- The camera reads the phase detection sensor, looks up a huge data array programmed in its chips that describes the properties of all of the manufacturer’s lenses, does some calculations and tells the lens something like “Move your autofocus this much toward infinity”.

- The lens contains a sensor and chips that either measure the amount of current applied to the focusing motor, or actually measure how far the focusing element has moved, and send a signal to the camera, saying: “almost there”.

- The camera rechecks the phase detection, may send some fine-tuning signal to the lens, may even recheck a 3rd or 4th time until perfect focus is indicated. If things don’t work as planned, the infamous “hunting” may occur, but usually not.

- Once the camera has confirmed focus, it tells the lens not to move anymore and then sends that little ‘beep’ and light signal we all know and love, and we push the shutter.

The whole process takes a tiny fraction of a second. It’s fast.
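The steps above amount to a short closed loop: measure, command a move, recheck. Here is a rough Python sketch of that loop. Everything in it is an assumption made for illustration: the `SimulatedLens` class, its `gain` (how much of a commanded move the motor actually delivers), the tolerance and the four-move limit. A real camera works from its lens data tables, which are not public.

```python
class SimulatedLens:
    """Hypothetical lens whose motor delivers only a fraction (`gain`)
    of each commanded move."""

    def __init__(self, position, gain=0.9):
        self.position = position
        self.gain = gain

    def move(self, amount):
        self.position += amount * self.gain
        return self.position   # the lens reports where it ended up


def focus(lens, target, tolerance=0.05, max_moves=4):
    """Measure, command a move, recheck; give up after max_moves moves.

    `target - lens.position` stands in for the phase-detection reading.
    Returns (focus_confirmed, number_of_moves).
    """
    moves = 0
    while True:
        error = target - lens.position
        if abs(error) <= tolerance:
            return True, moves      # focus confirmed: beep
        if moves == max_moves:
            return False, moves     # never settled
        lens.move(error)
        moves += 1


print(focus(SimulatedLens(position=0.0, gain=0.9), target=10.0))
print(focus(SimulatedLens(position=0.0, gain=0.2), target=10.0))
```

With a lens that does nearly what it is told, the loop settles after a couple of rechecks. With one that badly undershoots every command, the same loop runs out of attempts, which is one simplified way to picture why the camera and lens have to agree so closely.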

System Design

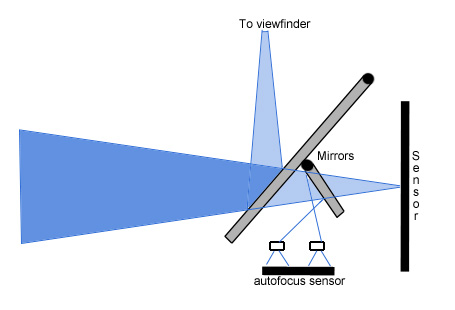

Obviously the autofocus sensors can’t be in front of the image sensor, so the manufacturers use a partially transparent area in the mirror to allow some light to pass through and reflect from a secondary mirror onto the autofocus assembly (Figure 2), which is usually located in the bottom of the mirror box (Figure 3) along with the exposure metering sensors.

Figure 2: light pathway to the autofocus sensor

Figure 3: Location of autofocus sensor (red arrow) in Canon 5D, reproduced from Canon, USA

Sensor Types

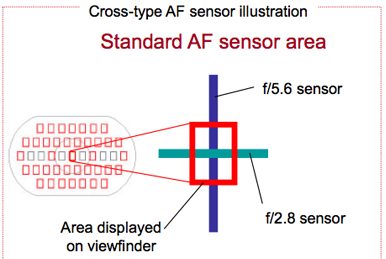

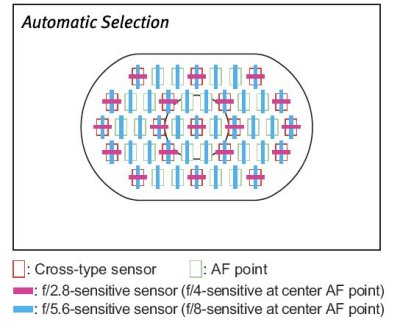

Each phase detection sensor can assess only a small, linear part of the image. Horizontal sensors detect vertical features best, and most images contain a preponderance of vertical features, so horizontal sensors predominate. There are also some vertical sensors, usually arrayed in a cross (Figure 4) or “H” shape with horizontal sensors. A few cameras even contain diagonal sensors.

Some of the autofocus sensors (almost always the center), by means of different refracting lenses and sensor size, are more accurate than other sensors, especially when wide aperture lenses are used. Many of these high accuracy sensors are only active when an f/2.8 or wider aperture lens is used. In Figure 4, for example, this sensor would be a more accurate cross-type sensor when an f/2.8 lens is mounted, but only a less accurate linear sensor with a lens of lesser aperture. As a general rule, higher end and newer bodies will have more sensors, and more of those sensors will be the higher accuracy type.

Figure 4: Cross-type sensor

In the very first autofocus systems (and some current medium format cameras) there was only one sensor in the center of the image. As processing power and engineering prowess have been applied, more and more sensors were added. Most cameras have at least seven or nine and some as many as 52 separate sensors. We can select one of them, all of them, a group of them, whatever is best for the type of images we’re shooting. We can tell the camera which sensor(s) we want to use. Or we can let the camera tell us. (The camera is always courteous enough to let us know which sensors it chose. My cameras are also telepathic and will immediately choose whichever ones I didn’t want to use, which is why I pick my own.)

These multiple sensors, along with the camera’s computer, can do some other remarkable things. By sensing which sensors are in focus on a moving subject and how that changes, both in distance from the lens and across sensors over brief intervals in time, the camera can predict where that moving subject will be in the future. This is the basis of AI Servo autofocus, which, I’m afraid, is a subject too complex for this article. I’m treading water as fast as I can just describing how the camera focuses on that pot of flowers on the porch.
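Just to show the flavor of "predicting where the subject will be", here is the simplest possible version in Python: take the last two distance readings, work out the closing speed, and project forward over the delay before the shutter opens. Real tracking systems are far more elaborate than this. The function name, the sample values and the 50 ms lag are all made up for illustration.

```python
def predict_distance(samples, lag):
    """Linearly extrapolate subject distance.

    samples: list of (time_in_seconds, distance_in_metres), oldest first.
    lag: seconds between the last reading and the moment of exposure.
    """
    (t0, d0), (t1, d1) = samples[-2], samples[-1]
    velocity = (d1 - d0) / (t1 - t0)   # negative means approaching
    return d1 + velocity * lag


# A runner 20 m away, then 19 m away a tenth of a second later.
readings = [(0.0, 20.0), (0.1, 19.0)]
print(predict_distance(readings, lag=0.05))
```

The lens is then driven to the predicted distance rather than the last measured one, so the subject is in focus when the picture is actually taken.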

The Effect of Lens Aperture

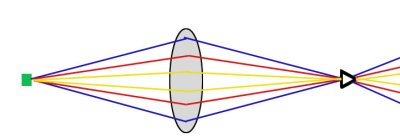

No matter what the sensor type, however, it will usually be more accurate with a wider aperture lens. Remember, during autofocus the camera automatically opens the lens to its widest aperture, only closing it down to the aperture you’ve chosen just before the shutter curtain opens. Phase detection autofocus is more accurate when the light beams are entering from a wider angle. In the schematic below beams from an f/2.8 lens (blue) would enter at a wider angle than those of an f/4 lens (red), which are still wider than an f/5.6 lens (yellow). By f/8, only the most accurate sensors (usually only the center point on the more expensive bodies) can function at all, but even then focus may be slow and inaccurate. This is the reason our f/5.6 lenses stop autofocusing when we try to add a teleconverter, which changes them to f/8 or f/11 lenses.
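The arithmetic behind those last two points is easy to check. The sketch below uses two textbook approximations that are my additions, not something from the references: a teleconverter multiplies the f-number by its magnification, and for a lens focused at infinity the half-angle of the light cone reaching the focal plane is roughly atan(1 / (2N)) for f-number N.

```python
import math


def effective_f_number(f_number, teleconverter=1.0):
    """A teleconverter multiplies the f-number by its magnification."""
    return f_number * teleconverter


def cone_half_angle_deg(f_number):
    """Approximate half-angle (degrees) of the light cone at the focal
    plane for a lens focused at infinity: atan(1 / (2N))."""
    return math.degrees(math.atan(1.0 / (2.0 * f_number)))


for n in (2.8, 4.0, 5.6, 8.0):
    print(f"f/{n}: half-angle about {cone_half_angle_deg(n):.1f} degrees")

# An f/5.6 lens behind 1.4x and 2x teleconverters.
print(effective_f_number(5.6, 1.4), effective_f_number(5.6, 2.0))
```

The cone narrows from roughly 10 degrees at f/2.8 to under 4 degrees at f/8, and an f/5.6 lens lands at about f/8 with a 1.4x converter and about f/11 with a 2x, past the point where most sensors can work.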

Advantages of phase detection autofocus

We’ve already covered the major advantages of phase detection autofocus:

- It’s incredibly fast compared to contrast detection, fast enough for moving subjects.

- The camera can use the sensor array to evaluate a subject’s movement and realize the moving object is the subject of interest, giving us the AI Servo autofocus all sports shooters complain about.

There are several less commonly used benefits. The sensor array can be used to assess the image’s depth of field, giving an “electronic depth of field” preview. The camera can be set to take a picture when something enters the autofocus point (called trap autofocus, but few cameras offer this feature). If the sensors sense random movement in an otherwise static subject, they can flash a notice that camera shake is affecting the image. But speed and AI Servo are what it’s all about.

Disadvantages of phase detection autofocus

I once heard the Porsche 911 described as “An interesting concept that through remarkably intense engineering became a superb automobile”. The description fits phase detection autofocus well. The systems are amazingly complex and require a remarkable amount of engineering to work as well as they do.

First and foremost, the system requires physical calibration. The light path to the imaging sensor must be calibrated with the light path to the autofocus sensor, so what is in focus for the autofocus sensor is also exactly in focus for the imaging sensor. Each lens contains chips that provide feedback to the camera, telling it exactly what position the focusing element is in and how far it moves for a given input to the lens motor. This must agree completely, so that the lens actually moves exactly where the camera told it to move, and so the camera knows exactly what position it’s in. If any of these systems aren’t calibrated perfectly, autofocus becomes inaccurate. Even if they are calibrated perfectly at the factory, if they expand and contract slightly with heat or cold they may become inaccurate, at least temporarily.

Second, the system requires software calibration. As mentioned earlier, the camera manufacturers have very complex algorithms and database tables programmed into each lens and each camera that provide this information, and which they protect from public knowledge. So, a Nikon D3, for example, knows exactly how much current must be applied for how long to the ultrasonic motor in a 70-200 f/2.8 VR lens to move the focus from 6 feet in front of the camera to 12 feet in front. And that a very different amount of current will be needed to move it from 12 feet to 30 feet, or to move the focus of a 50 f/1.4 the same distance, etc. etc. Because of this, autofocus can sometimes be improved by a firmware update, and firmware updates are often issued after a new lens is released. The update contains new algorithms for that lens.

Also because of this, third-party lenses may not be quite as accurate as manufacturers’ lenses at times, and third-party manufacturers sometimes have to ‘rechip’ their lenses to work with certain cameras. The big guys have yet to tell the little guys: “we’ll be happy to issue a firmware update so our camera works well with your lens”. Instead the third-party guys have to take the manufacturer’s cameras and lenses, decode the signals they send back and forth, and then encode a chip in their lens translating those signals in a way that makes their lens work properly. And they have to accept the data arrays the manufacturers have designed for their own lenses, which might not be ideal for their autofocus gearing and motors. I have no firsthand knowledge of this process, but I suspect this is why certain third-party lenses seem to autofocus well with cameras of one brand, but not as well with another. And, at least theoretically, this would explain why a change in a manufacturer’s autofocus system could render third-party lenses obsolete, or at least require that they get a new chip, such as recently occurred with the Sigma 120-300 f/2.8 and the Nikon D3x.

As mentioned above, lens aperture can also affect phase-detection autofocus accuracy. Usually this matters little, because the smaller aperture lens will have a greater depth of field. There is a point, however, where the aperture is too small for the sensor to autofocus accurately, usually at f/5.6 or f/8. (Remember that the camera automatically opens the lens aperture to maximum during autofocus, so the aperture you’ve set the lens at doesn’t matter, it’s the maximum aperture the lens can achieve that matters.) It also is the reason that f/2.8 lenses can sometimes autofocus in more difficult conditions than lenses of lesser aperture.

Since the autofocus sensors only receive light when the mirror is down, phase detection sensors stop working when you actually take the picture, and don’t start working again until the mirror has returned to its normal position. This is why phase detection autofocus doesn’t work during Live View and may contribute to why AI Servo autofocus can lose accuracy during a series of rapid-fire shots. When you listen to how fast a D3 or 1DMkIV is shooting at maximum FPS, it’s rather amazing that any of the images are in focus, really. But our expectations are high these days.

There are other issues, of course, but most of them we don’t think about. For example, linear (not circular) polarizing filters interfere with phase detection. You don’t see linear polarizing filters much these days, but every so often someone buys one because it’s so inexpensive and then wonders why their camera isn’t autofocusing accurately. Phase detection can also struggle with certain patterns in an image: things like checkerboards and grids can cause a phase detection system to melt down, but are handled easily by contrast detection.

Live View

I mention Live View focusing separately because it seems to be driving the camera manufacturers back to improving contrast detection AF, and into creating hybrid AF systems. As mentioned, contrast detection does have some advantages already and overcoming its disadvantages could improve autofocusing for all of us.

As mentioned above, Olympus and Sony have systems that split the light beam, sending part to the viewfinder and part to a secondary image sensor. This system allows phase detection autofocus to remain enabled even during Live View. But it adds the possibility that Live View focusing is not absolutely accurate, since the sensor being used to focus is not the imaging sensor.

Canon has described a system that uses phase detection to focus the lens initially, then uses contrast detection to fine tune autofocus, which could have significant advantages for still and macro work (Ishikawa, et al). Nikon has apparently applied for a patent that designates certain pixels on the image sensor to be used in what apparently is a phase detection type autofocus (Kusaka). This may provide the best of both worlds.

We shall see. But what is apparent is that, for the first time in over a decade, changes to autofocus systems may be revolutionary rather than evolutionary.

References

- Focusing in a Flash: Scientific American. August, 2000. p82-83.

- Goldberg, Norman: Camera Technology. The Dark Side of the Lens. Academic Press, 1992.

- Ishikawa, et al.: Camera System and Lens Apparatus. United States Patent 6,603,929 B2

- Kusaka; Yosuke: Image sensor, focus detection device, focus adjustment device and image-capturing apparatus. United States Patent application 20090167927

- Kabza, K. J.: Evolution of the Live Preview in Digital Photography. Digital Camera Info.

- Kingslake, Rudolf: Optics in Photography. SPIE Optical Engineering Press, 1992.

- Predictive Autofocus Tracking Systems Nikon

- Ray, Sidney: Autofocus and Focus Maintenance Methods. In: Ray, Sidney: Applied Photographic Optics, 3rd ed. Focal Press, 2004.

- Ray, Sidney: Camera Features. In: The Manual of Photography, 9th ed. Focal Press, 2008.

- Wareham, Lester: Canon AF System

- Understanding Autofocus Cambridge In Colour

- Wikipedia: Autofocus

- Wikipedia: Live Preview

Author: Roger Cicala

I’m Roger and I am the founder of Lensrentals.com. Hailed as one of the optic nerds here, I enjoy shooting collimated light through 30X microscope objectives in my spare time. When I do take real pictures I like using something different: a Medium format, or Pentax K1, or a Sony RX1R.
