There Is No Perfect Lens Test, Either

In my last article, I wrote about the fact that every copy of a given lens has some sample variation. This affects lens reviews, whether lab-based or photography-based, because the copy the reviewer tested will be a bit different from the copy you buy. I suggested that if you want a feel for what the lens you purchase is likely to be like, you had best compare several different reviews. That should give you an idea of how much variation exists.

Photographs are really the best way to evaluate a lens’ performance, but you have to look at a few dozen, minimum, to do it. That takes a lot of time and a fair amount of bandwidth. Looking at online-size jpgs is worthless unless all you do with your images is post online-size jpgs. You need to download at least full-size jpgs (preferably RAW files) and look at them at 50% magnification to get a good idea of a lens’ performance.

Lab testing, with its numbers, gives us nice, quick overviews of lens performance. It’s useful for lens reviews so that you can compare one lens to another. It’s useful for people like me who have to test lenses to make sure the optics are OK, since it eliminates some of the human variability that comes with looking at images of a test chart.

But each type of lab test has its own strengths and weaknesses that nobody ever talks about. This matters if we’re going to compare several different reviews of a lens, because we should have some idea of what the reviewers are actually analyzing. As with any scientific test, if you don’t grasp the testing methods being used, you can’t really understand the results.

I’ll give you one teaser before we start. I don’t trust test results for lenses of 24mm or wider (full-frame equivalent). Period. Including most of mine. I’ll get into why in just a bit.

The Types of Lab Tests

Basically there are two types of lab testing used for reviews: computerized target analysis and optical bench testing.

Computerized Target Analysis

Computerized target analysis, using either Imatest (used by Lensrentals.com, Photozone, Lenstip, and others) or DxO Analytics (used by DPReview, DxOMark, SLRGear), is the most commonly used lab test. Both programs work from carefully aligned and lit photographs of a specific test chart. The program then analyzes the image file and determines things like resolution, distortion, vignetting, etc.

There are differences between the two programs. DxO Analytics is proprietary (and I don’t own it so my comments are secondhand) but it analyzes round dots rather than lines, and results are given in ‘blur units’. What the exact definition of a ‘blur unit’ is remains somewhat debated outside of DxO (1,2).

Imatest analyzes slanted lines and other patterns and can be used with a number of different charts (which generate somewhat different results). Resolution is given in line pairs per mm or line pairs per image height at a given MTF level, usually MTF50 (the spatial frequency at which contrast has fallen to 50%).
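
As a rough illustration of how those two units relate, here is a minimal Python sketch. It assumes the standard relationship that line pairs per picture height equal line pairs per mm multiplied by the sensor height in mm. The 24 mm full-frame height is standard; the 15.6 mm APS-C height and the MTF50 value are illustrative assumptions, not Imatest output.

```python
FULL_FRAME_HEIGHT_MM = 24.0  # 36 x 24 mm sensor
APS_C_HEIGHT_MM = 15.6       # assumed APS-C sensor height (varies by maker)

def lp_per_mm_to_lp_per_ph(lp_per_mm, sensor_height_mm):
    """Line pairs per picture height = line pairs per mm x sensor height in mm."""
    return lp_per_mm * sensor_height_mm

def lp_per_ph_to_lp_per_mm(lp_per_ph, sensor_height_mm):
    """Inverse conversion, from line pairs per picture height back to lp/mm."""
    return lp_per_ph / sensor_height_mm

mtf50_lp_mm = 40.0  # hypothetical MTF50 result
for name, height in (("full frame", FULL_FRAME_HEIGHT_MM), ("APS-C", APS_C_HEIGHT_MM)):
    print(f"{name}: {lp_per_mm_to_lp_per_ph(mtf50_lp_mm, height):.0f} lp/ph")
```

The same lp/mm figure spans fewer line pairs per picture height on a smaller sensor, so numbers quoted in different units, or measured on different formats, aren't directly comparable without converting.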

We don’t know all of the differences between the two programs, but each should ‘internally agree’ even if the two don’t necessarily ‘externally agree’. In other words, if one person using DxO decides Lens A is sharper than Lens B, all the DxO testers should find the same thing. It is possible (although unlikely) that Imatest might find Lens B is sharper than Lens A, but in theory all Imatest users should then find the same thing.

In practice, though, Imatesters may not agree nearly as much as DxO testers. Why? Because Imatest’s flexibility allows much more variation. There are several different test charts you can use (and depending on the chart, what is defined as the corner of the image differs a lot). You can analyze RAW files or jpgs (which have some in-camera sharpening applied). Imatest gives vertical and horizontal resolution separately, indicating astigmatism, and the reviewer can average the numbers, use the higher number, or lower number, etc.

There are a number of other test charts that can be analyzed in Imatest; these are just the most common examples. This can be a critical difference when looking at lens reviews, by the way. It’s cheap and easy to buy an inkjet-printed (or even home-printed) test chart and shoot Imatest. The reality is that unless the chart is a high-quality linotype print (which is quite expensive, $400 to $1,000 each), the chart limits the program’s abilities. With really high-resolution cameras, like the Nikon D800, Imatest now recommends using backlit charts printed on film.

Using a lesser chart doesn’t invalidate the results, but it certainly puts a ceiling on them. When some testers find a new, very sharp, very high resolution lens ‘isn’t much better’ than others they’ve tested, I sometimes wonder if they’re nearing the ceiling of their chart’s capabilities.
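
One way to picture that ceiling: for linear imaging components, the system MTF is commonly approximated as the product of the component MTFs (chart, lens, sensor). The sketch below assumes Gaussian-shaped MTF curves and made-up MTF50 values in lp/mm at the image plane; it illustrates the multiplication only and is not a model of any real chart, lens, or camera.

```python
import math

def gaussian_mtf(freq, mtf50):
    """Assumed Gaussian MTF shape, scaled so that MTF(mtf50) == 0.5."""
    return math.exp(-math.log(2) * (freq / mtf50) ** 2)

def system_mtf(freq, component_mtf50s):
    """Cascaded components: multiply the individual MTFs at this frequency."""
    result = 1.0
    for mtf50 in component_mtf50s:
        result *= gaussian_mtf(freq, mtf50)
    return result

def system_mtf50(component_mtf50s):
    """For Gaussian components the product is also Gaussian, so MTF50 has a closed form."""
    return 1.0 / math.sqrt(sum(1.0 / m ** 2 for m in component_mtf50s))

# Hypothetical MTF50 values (lp/mm at the sensor).
SENSOR = 70.0
GOOD_CHART, POOR_CHART = 300.0, 80.0
LENS_A, LENS_B = 60.0, 90.0

for chart in (GOOD_CHART, POOR_CHART):
    a = system_mtf50([LENS_A, SENSOR, chart])
    b = system_mtf50([LENS_B, SENSOR, chart])
    print(f"chart MTF50 {chart:.0f}: lens A {a:.1f}, lens B {b:.1f}, gap {b - a:.1f}")
```

With these hypothetical numbers, the measured gap between the two lenses shrinks when the weaker chart is used, which is the ceiling effect described above.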

Disadvantages of Target Analysis

The above stuff is just hair-splitting that shows why different testers get slightly different results. There are several real problems, though, that affect all testing done with target analysis.

It tests a camera-lens system, not just a lens. That’s generally not a big deal. If you’re interested in Canon cameras, you’re probably interested in Canon lenses. But it causes some problems. If you’re looking at a lens that’s been available for several years, the reviews may have been done on an older, lower-resolution camera. That can make the lens seem worse than it is compared to lenses reviewed on newer, higher-resolution cameras. It can also be a problem if you want to buy a third-party lens in one mount but can only find reviews of it on another mount.

Focus Distance – At Lensrentals we’ve got targets ranging in size from 24″ to 85″ in diameter. But even with the very largest targets, we’re testing wide-angle lenses very close to the target. For example, a 24mm lens is tested at about 6 feet shooting distance, and a 14mm lens at about 3 feet. Assuming that the sharpest lens at 3 feet is also the sharpest lens at infinity is, well, weak. There have been several examples where target analysis reviews say a wide-angle lens is just great, but in the field, shot at infinity, photographers unanimously say it’s not that great. And now you know why I don’t really trust these tests on wide-angle lenses.
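
To see why a chart forces wide-angle lenses in close, here is a minimal sketch using the pinhole approximation (valid when the distance is much larger than the focal length): distance ≈ chart height × focal length ÷ sensor height. It assumes the chart just fills the height of a 24 mm-tall full-frame sensor; the 72-inch chart height is hypothetical, and real setups frame the chart differently.

```python
FULL_FRAME_HEIGHT_MM = 24.0

def distance_to_fill_height(chart_height_in, focal_length_mm, sensor_height_mm=FULL_FRAME_HEIGHT_MM):
    """Approximate lens-to-chart distance (same unit as chart_height_in) for the chart
    to span the frame height. Pinhole approximation; ignores distortion and close-focus effects."""
    return chart_height_in * focal_length_mm / sensor_height_mm

CHART_HEIGHT_IN = 72.0  # hypothetical chart height in inches

for focal in (14, 24, 50, 85, 200):
    d_in = distance_to_fill_height(CHART_HEIGHT_IN, focal)
    print(f"{focal:>3} mm lens: about {d_in / 12:.1f} feet")
```

Because the distance grows linearly with focal length, any chart small enough to fit in a room puts ultra-wide lenses only a few feet away.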

The same problem comes up with macro lenses. My first Imatest results showed that the Nikon 105mm f/2.8 Micro just wasn’t that great. But guess what? Even with my smallest charts I was shooting it at an 8-foot distance. When we developed techniques using high-resolution backlit Imatest targets shot at 1-foot distances, more appropriate for a macro lens, it turned out to be a great lens. I ended up writing a blog post saying, yet again, that I was wrong (or rather, less correct than I had hoped to be).

Until we paint test targets on billboards (don’t laugh, I looked into it), we’ll still be testing wide angle lenses at very close distances.

Field Curvature – When I focus on the center of the image in a lens with field curvature (most lenses have a bit), the corners aren’t in best focus. If I change focus a bit, I can get higher numbers for the corners, but drop resolution in the center. Some reviewers use the corner numbers when the center is in best focus (that’s my habit, since I think it reflects real-world photography best). Others think it more appropriate to give you the best possible corner numbers, more like what you’d get if you were focusing off-center. Probably giving both would be the best way to do it, but I’m not sure anyone does that.
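
The two reporting habits can be made concrete with a small sketch. It assumes hypothetical through-focus MTF50 readings (focus offset in microns mapped to lp/mm) for the center and one corner of a lens with some field curvature, and reports the corner both ways.

```python
# Hypothetical through-focus readings: focus offset (microns) -> MTF50 (lp/mm).
CENTER = {-40: 30.0, -20: 42.0, 0: 50.0, 20: 41.0, 40: 29.0}
CORNER = {-40: 15.0, -20: 22.0, 0: 30.0, 20: 36.0, 40: 31.0}

def best_focus(curve):
    """Return (focus_offset, mtf50) at the peak of a through-focus curve."""
    return max(curve.items(), key=lambda item: item[1])

def corner_report(center, corner):
    center_focus, center_mtf = best_focus(center)
    corner_focus, corner_best = best_focus(corner)
    return {
        "center_mtf50": center_mtf,
        "corner_at_center_focus": corner[center_focus],  # focus set for the center
        "corner_at_own_best_focus": corner_best,         # refocused for the corner
        "corner_focus_shift_um": corner_focus - center_focus,
    }

print(corner_report(CENTER, CORNER))
```

Once the through-focus data exists, reporting both corner numbers costs only one extra lookup.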

Summary of Computerized Target Analysis

When you look at a review using computerized target analysis you should check the testing methods to see exactly how they got those results (and why the results differ).

– What camera was used? Test the same lens on a Nikon D700 and D800 and you’ll get wildly different results.

– What was the shooting distance? Not many people (myself included) list this with every test, but you can assume a wide-angle is tested very close up, a standard lens at 10 to 20 feet, and a 200mm lens at 30 feet or so.

– What part of the test target is considered ‘the corner’? This can vary from really near the corner to 2/3 of the way between the corner and center.

– What are the corner numbers, really? Is it an average of all corners, the best, the worst, the verticals, etc?

– Did they give you astigmatism data? Not many people do because it makes things complex, but it would be nice if the Imatest reviewers mentioned it. I assume (and I may be wrong) that DxO data doesn’t give astigmatism readings, since it’s analyzing small dots, not angled lines.

– Did they report corner results at best corner focus, or corner results with the center in best focus?
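
Here is a small sketch of how much those choices can move the reported ‘corner number’. The four corner readings are hypothetical MTF50 values for horizontal and vertical lines, and the gap between the two directions is used as a crude astigmatism indicator; that is an assumption for illustration, not how Imatest or DxO report it.

```python
from statistics import mean

# Hypothetical corner readings: (horizontal-line MTF50, vertical-line MTF50) in lp/mm.
CORNERS = {
    "top_left": (38.0, 30.0),
    "top_right": (35.0, 33.0),
    "bottom_left": (40.0, 28.0),
    "bottom_right": (24.0, 31.0),
}

COMBINE = {"average": mean, "best": max, "worst": min}

def corner_score(readings, per_corner="average", across_corners="average"):
    """Combine the two line directions per corner, then combine the corners."""
    per = [COMBINE[per_corner](pair) for pair in readings.values()]
    return COMBINE[across_corners](per)

def astigmatism_gaps(readings):
    """Crude astigmatism indicator: difference between the two line directions."""
    return {name: abs(h - v) for name, (h, v) in readings.items()}

for per in COMBINE:
    for across in COMBINE:
        print(f"{per:>7} per corner, {across:>7} across corners: "
              f"{corner_score(CORNERS, per, across):.1f}")
print(astigmatism_gaps(CORNERS))
```

The same four corners can be honestly reported as anything from the worst-of-worst to the best-of-best figure, which is why the methods section matters.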

None of this stuff makes computerized target analysis bad. It’s actually really good and has several advantages over other testing methods. BUT, and there’s always a but, it’s giving data for certain conditions, and often incomplete data at that. It’s a really useful starting point, but it’s not an exhaustive report of all the lens’ characteristics.

Optical Bench Testing

I know what you’re thinking. With all of these limitations for target analysis, why not just give optical bench reports? Well, the first reason is pretty simple. A good Imatest lab costs $10,000 to $15,000. (DxO is a lot more expensive – it’s sold as a complete package, including the testing room and lighting.) But an optical bench costs from $50,000 to $350,000 depending upon its capabilities.

Still, an optical bench gives us two huge advantages over target analysis:

- It tests the lens at infinity focus, not close up, which can be more real-world.

- It eliminates the variable of the camera body, so you can compare, for example, a Leica lens to a Nikon lens.

It has another advantage for someone like me who has to check a lot of lenses: it’s very automated. Instead of multiple images taken of an Imatest target with careful alignment and manual focus bracketing, you mount the lens, push a button, and the machine does the rest.

How it Works

Optical benches can be oriented either horizontally or vertically. The lens is mounted in a holder and collimated light (parallel rays, like an object at infinity) is shined through a test target onto the lens. A camera at the other end of the lens receives the image and a computer analyzes it to determine the MTF of the lens at that point. The optical bench can tilt either the lens or the light source and camera to examine the lens at various angles off-axis.

The projected target is usually a grid of crossed lines so that both tangential (at right angles to the radius of the lens) and sagittal (along the radius from the center to the edge of the lens) MTF can be measured. Occasionally a pinhole or other type of reticle is used.

When measuring a lens at one point, the bench takes measurements at multiple focusing distances (a motor varies the distances by as little as 1 micron), the computer measures each one and plots the best. The bench then tilts the lens a few degrees and repeats the process again and again. The process is fairly quick and in a minute or two the information is plotted as a graph showing a ‘cut’ from one side of the lens to the other.
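
The sweep just described can be sketched as two nested loops: for each field angle, step through focus positions and keep the best reading. This is a minimal sketch with a made-up lens model (peak MTF falling off-axis and an assumed quadratic field curvature), not any bench manufacturer’s software. Recording where the best focus landed at each angle also gives a rough field-curvature map.

```python
import math

def model_mtf(angle_deg, focus_um):
    """Hypothetical lens: peak MTF drops off-axis and best focus shifts with angle
    (an assumed quadratic field curvature)."""
    peak = 0.8 - 0.01 * abs(angle_deg)
    best_focus_um = 0.2 * angle_deg ** 2
    return peak * math.exp(-((focus_um - best_focus_um) / 15.0) ** 2)

def bench_cut(measure, angles, focus_positions):
    """At each field angle, sweep focus and keep the highest reading."""
    cut = []
    for angle in angles:
        mtf, focus = max((measure(angle, f), f) for f in focus_positions)
        cut.append((angle, focus, mtf))
    return cut

ANGLES = range(-12, 13, 3)          # degrees off-axis, one side of the lens to the other
FOCUS_POSITIONS = range(-40, 41)    # 1 micron steps

for angle, focus, mtf in bench_cut(model_mtf, ANGLES, FOCUS_POSITIONS):
    print(f"{angle:+3d} deg: best MTF {mtf:.3f} at {focus:+d} um")
```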

To give you an idea, here’s a quick video showing the bench rotating the lens to various positions, aligning the target reticle automatically, and then the computer screen as it takes a dozen images at each location while altering the focus.

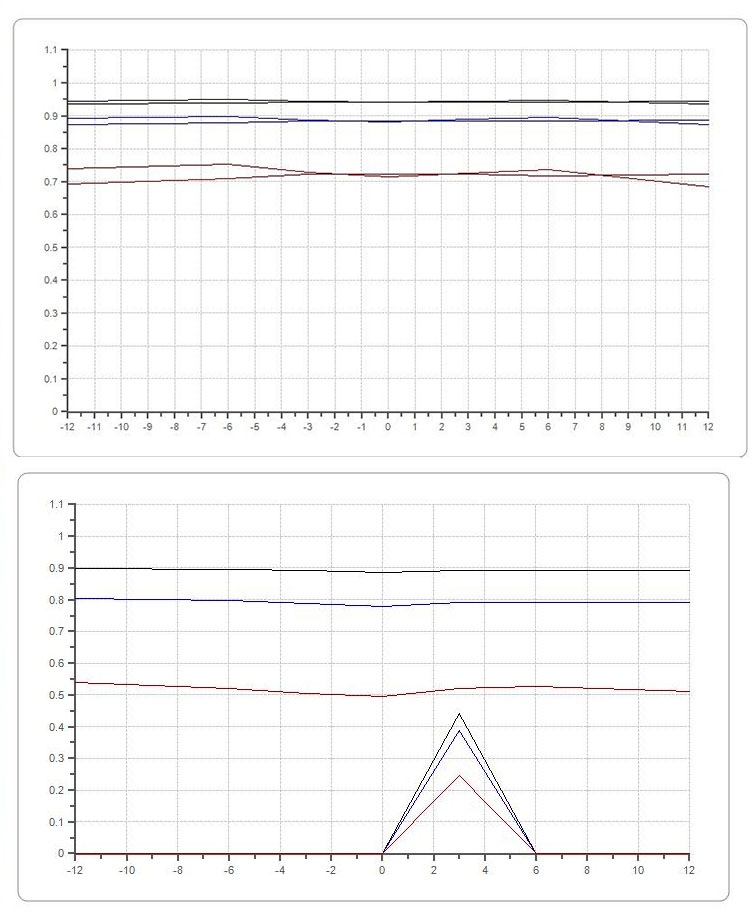

The bench does not give a full two-dimensional picture like Imatest or DxO does; it measures a single line across the lens from one side to the other. In the screen shot below, the MTF at 10, 20, and 50 lines is shown from -12 degrees to 12 degrees field of view across the lens.
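
To relate a bench’s field angle to a position on the sensor, an ideal rectilinear lens places a point at angle θ a distance f·tan(θ) from the image center. A minimal sketch, assuming a distortion-free lens and a 36 × 24 mm sensor; the focal lengths are hypothetical examples, not the lens in the screen shot.

```python
import math

FULL_FRAME_HALF_DIAGONAL_MM = math.hypot(36.0, 24.0) / 2  # about 21.6 mm

def image_height_mm(focal_length_mm, field_angle_deg):
    """Distance from the image center for an ideal, distortion-free rectilinear lens."""
    return focal_length_mm * math.tan(math.radians(field_angle_deg))

def fraction_of_half_diagonal(focal_length_mm, field_angle_deg):
    """0.0 = image center, 1.0 = full-frame corner."""
    return image_height_mm(focal_length_mm, field_angle_deg) / FULL_FRAME_HALF_DIAGONAL_MM

for focal in (24, 50, 85):
    print(f"{focal} mm at 12 deg: {image_height_mm(focal, 12):.1f} mm off-axis, "
          f"{fraction_of_half_diagonal(focal, 12):.0%} of the way to the corner")
```

The same ±12 degree sweep reaches much closer to the corner on a longer lens, which is worth keeping in mind when comparing bench plots across focal lengths.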

Notice there are two same-color plots for each MTF frequency. One shows the vertical line measurements, the other the horizontal lines. Since this is a single cut across the lens, the horizontal lines represent the sagittal MTF, while the vertical lines show the tangential MTF. This lets us see the degree of astigmatism quite nicely (all lenses have some).

One weakness of the optical bench is that it reads just a single line from one side of the lens to the other. More advanced machines allow you to rotate the lens and repeat the measurements several times. This gives us a two-dimensional look at the lens, which can be very important when we’re testing lenses to make sure they’re within spec. A lens can be tilted or decentered in a way that looks OK in one plane (say from side-to-side), but if you rotate the lens 45 or 90 degrees in the mount the readings can be quite different. The examples below show readings for one lens taken at 0, 45, and 90 degrees rotation.
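
A minimal sketch of why rotating the lens helps: compare the readings on opposite sides of each cut and flag rotations where they differ a lot. The readings, the ±10 degree positions, and the 15% threshold are all hypothetical; real acceptance criteria depend on the lens spec and aren’t given here.

```python
# Hypothetical MTF readings at -10 and +10 degrees off-axis, for each lens rotation (degrees).
READINGS = {
    0: {"minus": 0.52, "plus": 0.50},
    45: {"minus": 0.55, "plus": 0.38},
    90: {"minus": 0.49, "plus": 0.51},
}

def asymmetry(minus, plus):
    """Relative difference between the two sides of the same cut."""
    return abs(minus - plus) / max(minus, plus)

def flag_rotations(readings, threshold=0.15):
    """Return the rotations whose side-to-side imbalance exceeds an (assumed) threshold."""
    return [rot for rot, r in sorted(readings.items())
            if asymmetry(r["minus"], r["plus"]) > threshold]

for rot, r in sorted(READINGS.items()):
    print(f"rotation {rot:>2} deg: asymmetry {asymmetry(r['minus'], r['plus']):.0%}")
print("suspect rotations:", flag_rotations(READINGS))
```

In this made-up case only the 45 degree cut looks lopsided, so a single 0 degree cut would have missed the problem.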

One thing that has to be considered, though, is that the optical bench can overread things a bit. For example, the lens above looks awful on the bench, but really had just a bit of right upper corner softness when tested optically on test charts. Truth is, our inspection techs missed it, and I would have too if I hadn’t been looking for it very carefully.

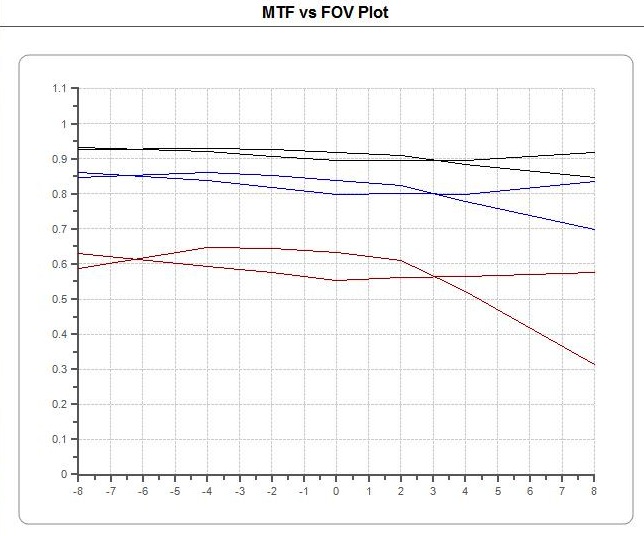

Here’s another example that should give some idea of what a really bad lens looks like on the bench. The upper print out is from a good copy, the lower one from a copy that was obviously soft.

Here’s another example combined with a real-world shot. Below is a 100mm Macro lens that, according to the optical bench, looks to have a lot of astigmatism (notice how the two lines at each frequency don’t line up).

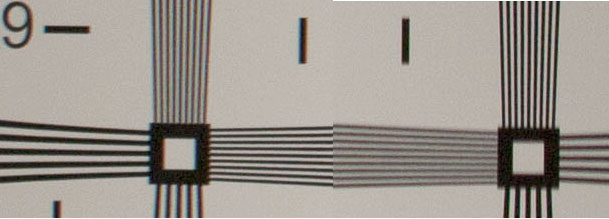

In reality, it is noticeable, but not horrible. Here are 100% crops of the lower corners of an ISO 12233 chart photographed with that same lens (this was the worst location for this lens). You can see that the right lower corner has softer horizontal lines, while the left corner has softer vertical lines.

In practice, you might notice the lower corners were just a bit soft when taking pictures, but you probably would not.

Disadvantages of the Optical Bench

The weaknesses of the optical bench tend to be almost the opposite of the weaknesses of computerized target analysis.

It tests just a lens, not a system. – That’s better in some ways. Using a bench we could, for example, compare the Canon, Tamron, Nikon, and Sigma 24-70 f/2.8 lenses and calculate which is best. But this isn’t really as useful as you might think. For one thing, you’re going to shoot whichever lens you choose on a certain camera body. The camera-plus-lens combination is probably a more real-world test than simply testing the lens itself.

Different sensor microlenses may make the corners or edges look much different on a certain camera than they appear to the bench. (If you don’t understand how this is so, find someone who shoots rangefinder lenses on an NEX camera.) And we won’t even get into cameras that pre-process RAW images and how in-camera distortion correction might change resolution. So in some ways, seeing the camera-lens combination tested might be more real-world than optical bench results.

Focus Distance – Infinity focus distance is great and much more appropriate for lenses that will be used to shoot landscapes, etc. But it might be more appropriate to test a portrait lens at a reasonably close distance rather than infinity. Similarly, testing macro lenses at infinity is probably worse than my original tests done at 8-foot distances. There are some optical benches that can also test at closer focusing distances, but they are extremely expensive unless they only test on-axis.

It’s Less Intuitive – If you scroll back up to the Imatest and DxO test charts above, you can look at them in two dimensions like you would a picture taken through the lens. If the right lower corner of the Imatest readings is poor, the lens is poor in the right lower corner. Looking at several lines taken at various angles with the optical bench just isn’t the same.

Far Corners and Wide Angle Lenses – Depending upon its price, an optical bench is limited in how far off-axis it can evaluate a lens. The best one I know of is the Trioptics Imagemaster, which has a 94 degree field of view (equivalent to about a 20mm lens on a full-frame camera). Most are not quite that wide, and a 50 degree field of view (about a 45mm lens) is more common.
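
Those equivalences follow from the diagonal field of view of a rectilinear lens on a 36 × 24 mm sensor, FOV = 2·atan(d / 2f), with d ≈ 43.3 mm. A minimal sketch, assuming a distortion-free rectilinear lens focused at infinity:

```python
import math

FULL_FRAME_DIAGONAL_MM = math.hypot(36.0, 24.0)  # about 43.3 mm

def diagonal_fov_deg(focal_length_mm, diagonal_mm=FULL_FRAME_DIAGONAL_MM):
    """Diagonal field of view of an ideal rectilinear lens focused at infinity."""
    return math.degrees(2 * math.atan(diagonal_mm / (2 * focal_length_mm)))

def focal_length_for_fov(fov_deg, diagonal_mm=FULL_FRAME_DIAGONAL_MM):
    """Inverse of diagonal_fov_deg: the full-frame focal length covering fov_deg."""
    return diagonal_mm / (2 * math.tan(math.radians(fov_deg) / 2))

for fov in (94, 50):
    print(f"{fov} deg diagonal field ~ {focal_length_for_fov(fov):.1f} mm on full frame")
```

By this formula a 94 degree field works out to roughly 20mm and a 50 degree field to roughly 46mm, in line with the rounded figures above.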

This isn’t a problem for screening lenses because the bench is so sensitive it detects decentering without needing to get anywhere near the corners. For lab-testing reviewers, though, it’s a deal breaker. They have to give you corner information, even if it’s corner information at a 4 foot focusing distance.

Lens Size and Focal Length – Imatest and DxO can be used with lenses of any size as long as you have proper support (and a long enough room). As you can tell from the video, the small optical bench I’ve been able to afford isn’t going to be usable with a very large lens. It will handle a 24-70 f/2.8 size lens just fine, but a 70-200 f/2.8 lens is too big to fit.

A larger, vertical bench will be able to handle reasonably large lenses (300 f/2.8 size), but at a cost similar to a small house. There are even bigger benches that can handle almost any size lens, but those cost truly amazing amounts of money. There are also some limitations for focal lengths depending upon optical paths and physical size of the machine. Few optical benches can test lenses over 300mm focal length, and many can only handle 150mm or less.

None of this stuff makes optical bench analysis bad. It’s actually really good and has several advantages over other testing methods. BUT, and there’s always a but, it’s giving data for certain conditions, and often incomplete data at that. It’s a really useful starting point, but it’s not an exhaustive report of all the lens’ characteristics.

What’s the Bottom Line?

To put it in uber-geek terms, both of the testing methods we’ve discussed analyze the point spread function (3,4) to calculate MTF. Target analysis tests the entire imaging system; the bench tests just the lens. The bench measures at infinity; target analysis usually measures closer (sometimes much closer). Target analysis measures the entire imaging field, while the bench measures a single line across it. Each has its advantages and disadvantages.
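
For the uber-geek relationship itself: the MTF is the magnitude of the Fourier transform of the line spread function (the PSF integrated along one direction), normalized to 1 at zero frequency. The sketch below applies a plain discrete Fourier transform to a synthetic Gaussian line spread function and interpolates MTF50. It is a textbook illustration under those assumptions; real slanted-edge software and optical benches add oversampling, windowing, noise handling, and other steps omitted here.

```python
import math

def gaussian_lsf(sigma, n=64):
    """Synthetic line spread function, sampled once per pixel and centered in the window."""
    center = n // 2
    return [math.exp(-0.5 * ((i - center) / sigma) ** 2) for i in range(n)]

def mtf_from_lsf(lsf):
    """DFT magnitude of the LSF, normalized to 1 at zero frequency.
    Returns (cycles_per_sample, mtf) pairs from 0 up to Nyquist."""
    n = len(lsf)
    total = sum(lsf)
    curve = []
    for k in range(n // 2 + 1):
        re = sum(v * math.cos(2 * math.pi * k * i / n) for i, v in enumerate(lsf))
        im = -sum(v * math.sin(2 * math.pi * k * i / n) for i, v in enumerate(lsf))
        curve.append((k / n, math.hypot(re, im) / total))
    return curve

def mtf50(curve):
    """Linearly interpolate the first frequency where the MTF falls through 0.5."""
    for (f0, m0), (f1, m1) in zip(curve, curve[1:]):
        if m0 >= 0.5 > m1:
            return f0 + (m0 - 0.5) * (f1 - f0) / (m0 - m1)
    return None

curve = mtf_from_lsf(gaussian_lsf(sigma=2.0))
print(f"MTF50 ~ {mtf50(curve):.4f} cycles/sample")
```

For a Gaussian LSF the exact answer is known in closed form, MTF50 = sqrt(ln 2 / 2) / (π σ) cycles per sample, which makes it a convenient check on the numerical version.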

For testing purposes, I’ll be using both Imatest and an optical bench. Having the ability to check both infinity and near focus is a huge plus (I’ve seen a number of lenses that were out of spec at one distance but not another). For wide-angle lenses, the optical bench is probably better for screening, even if it has limitations for lens reviews. Its ability to detect astigmatism is very helpful for wide-aperture prime lenses, and the fact that it tests at numerous focusing distances really helps with lenses that have field curvature. For screening purposes, the bench is faster, easier to set up, and easier to change from one focal length to another. That’s less of an issue for a lens review, where one lens is tested exhaustively.

For macro lenses, physically large lenses, and lenses with very long focal lengths, the optical bench is not particularly useful and Imatest will continue to be our test of choice. Imatest is also a more logical choice for lenses likely to be used at fairly close distances. An 85mm lens, for example, is tested at 12-17 feet in our Imatest lab, which is probably where most portrait photographers are going to use it.

For lens reviewers, though, the ability to test at infinity focusing distance is a huge plus for many lenses. I know there are a few lenses that have amazing test results using computer target analysis, but that don’t seem to perform as well as expected in the real world. I suspect when we look at some of those on an optical bench, we’ll see their performance is more ‘tuned’ for closer focusing distances. Doing some comparison testing using both methods should be fun.

The bottom line, though, is that we should read lab tests realizing what is being tested. A lab test may seem to say (as I have done on occasion), “this is an amazingly sharp lens.” What that actually means is “this is an amazingly sharp lens at this focus distance, measuring this area of the lens but not that area, giving you the average number for the 4 corners or edges (or the best corner, or whatever), averaging tangential and sagittal resolution (or just using one of the two), etc.” Depending on what was tested and what you shoot, your mileage will almost certainly vary.

Roger Cicala

Lensrentals.com

October, 2013

PS – The last two posts have exhausted my photogeekness for a while, so there won’t be any more testing posts for a few weeks. I’ll have to do a history article or something silly to recharge.

Addendum: I had a nice conversation with Henry and Norman Koren at Imatest, and they pointed out some new things they’ve developed that will definitely give the Imatest system some extra abilities. Their ultra-high-resolution chrome-on-glass charts can be used with a collimated light source to allow some infinity testing. For those of you interested, they have a nice comparison page up that shows the difference in quality between the new transmissive charts and the older paper charts. They’ve also written a nice response showing that there is good correlation between near and infinity focus.

So, like so many things, the technology is improving faster than I can write about it!

29 Comments

Ralf C. Kohlrausch ·

Hello Roger,

interesting read as usual, thank you. I’m trying to do some testing of my own to find out which lenses to use for what (and which to sell off). Every single time I get different results 😉 And even worse: I get wildly different results for every region of the picture, which is why I hardly ever sell a lens once I have it. I am a little like a child; I like to keep my toys.

Whenever I do decide to part with a lens, I need to illustrate its strengths for the advertisement. So I take pictures, like the pictures, and keep the lens.

And – what really confuses me: All poster-size pictures on our walls have been taken with lenses, that I have since sold for obvious lack of quality. Conclusion: I have to do much more testing… 😉

Greets

Ralf C.

ginsbu ·

Thanks for an interesting post, Roger.

One question: From your description it sounds as if automated testing on the optical bench (varying focus distance and taking the best) cannot measure resolution loss due to field curvature — is that correct? If so, that seems like an unfortunate limitation as that’s something folks intending to use a lens for landscapes would want to know, i.e. whether the lens can produce sharp images across the frame when focused at infinity.

Roger Cicala ·

The optical bench actually measures best point at all tested sites. Depending upon the software the bench has, you can look at it in any way you like (as long as that way is one of the software options). Our bench lets us pick any focusing distance (for example between -40 and +40 microns from our starting best center focus) or the reverse – what was the focusing distance that gave the highest MTF at each point. The latter lets us take out paper and pencil and make a map of field curvature. More powerful benches will actually plot out the field curvature map for you.

Curby ·

Hi Roger, thanks for another great article. Since you’re doing all these tests anyway, and since you’re in the unusual and envious position of seeing 10+ copies of each lens, is there any way you could publish your testing results?

I know that each lens you rent has a short blurb about general pros and cons. However, it’s clear from these articles that you have a ton of unpublished data as well. Linking off to a more detailed analysis, with some charts, notes on sample variation, most common (and least common) problems, etc. would be great.

Of course that would take a ton of time and effort, but I can’t think of a better source for comprehensive (in terms of both tests performed and copies tested) testing.

P.S. Something silly would be nice too. Could we get more pics of you wearing various photo equipment like hats? 🙂

Roger Cicala ·

Curby,

Well, you read between the lines well. I do have a lot of data I don’t publish, sometimes because I’m not certain of it and the rest of the time because I just don’t have time. We plan on tackling a lot of this stuff on the new Lensauthority.com site (where we sell gear now) because we’ll be adding optical printouts to the for-sale lenses and hopefully offering optical testing as a service through that site in a few months. The Lensrentals pages are so information-intensive I’m afraid of bogging down the server with more things. The Lensauthority site will have less intensive traffic. Or something like that (I don’t do IT – can you tell??)

KeithB ·

Roger:

What did you mean by 50% magnification? Assuming 100% is one image pixel per screen pixel, do you mean 4 screen pixels per image pixel or 1 screen pixel for 4 image pixels?

Roger Cicala ·

Keith, I was thinking screen pixels per image pixel (at 50% size in Photoshop).

ginsbu ·

Thanks for your clarification regarding field curvature, Roger.

SteveB ·

Have you considered flying over some of the giant test charts for wide-angle testing? They’re already there and if the air space is open, all you need is a stable aircraft. http://petapixel.com/2013/02/15/there-are-giant-camera-resolution-test-charts-scattered-across-the-us/

Ed ·

Once again, an impressive article written so that many photographers can comprehend what you are saying. That’s a rare talent!

I’m looking forward to the testing service. I’ve always felt that there was a need for one, and a market even among some of us who don’t need it. It’s too bad that testing very long lenses and very wide ones is so difficult.

As more and more photographers get into cinema, that should open up a big opportunity for testing. Colors also become a big deal; perhaps an article on testing for color variability from lens to lens would be interesting. I’m not sure if there is a commercial machine to do this, but there is probably some sort of spectrographic attachment for your bench.

Roger Cicala ·

Ed, the lighting thing is being done. It uses a machine called an integrating sphere: http://en.wikipedia.org/wiki/Integrating_sphere

But I don’t have any expertise in that area. Plus, there’s this other equipment I really want to buy instead of spending more money on stuff I don’t know about . . . .

Roger

Allan Sheppard ·

Hi Roger, another very informative article. Coming from a scientific background I appreciate the work that must go into an article like this.

Presumably you will have access to the new Zeiss Otus 55mm f/1.4 APO-Distagon at some time. It would be interesting to see your comments on this lens – perhaps compared to the Coastal Optic 60mm Macro which is at least comparable on price.

Frank Kolwicz ·

For wide-angle lenses couldn’t you use individual charts spread out on a suitably flat surface for the center and each corner and possibly some in-between locations? That would be nearer billboard size and still have high resolution test targets.

Chris Livsey ·

Billboard size? Not thinking large enough at all:

http://www.clui.org/newsletter/winter-2013/photo-calibration-targets

http://www.livescience.com/17052-mysterious-symbols-china-desert-spy-satellite-targets-expert.html

CarVac ·

For Imatest with ultrawides, how about using multiple (7 or so?) test charts laid out in a plane?

Nqina Dlamini ·

I will re-read this article again in a few weeks (I do that with all your articles). Today my needs (more like a geek fetish of some sort) for a well written, researched and unbiased article have been met. Thank You for your articles they are really informative, and the background work you put in them shows.

Frans van den Bergh ·

Hi Roger,

I like your comparison of the bench vs. target analysis methods. Good stuff!

I would like to mention another disadvantage of the target analysis method: your measurement error increases with increasing system sharpness, so it takes more work to obtain reliable results with very sharp lenses.

I posted some results to illustrate this here:

http://mtfmapper.blogspot.com/2013/10/how-sharpness-interacts-with-accuracy.html

Lui ·

Hi Roger,

we really liked your articles – especially the previous one on variations in lens quality. I think you might be interested in new software that can account for deviations in manufacturing. Basically, it auto-detects the optical aberrations in the picture (so you don’t need lens profiles or a lab):

http://www.piccure.de/piccure_lens_correction_EN.pdf

I don’t like posting links in public (since we are a company and I do not want to spam your blog, feel free to drop me a mail and delete the post, or just delete the post), but I couldn’t find your email… So apologies here.

Have a great week!

Lui

Ken Owen ·

I don’t see too many torture tests out there. As far as I can see, LensRentals’ repair stats (from many rented units) are the only guide to durability. Fine work.

LensTester ·

Hi Roger,

I really appreciate your blog posts. Since I am one of those who tests lenses on an optical bench, I can agree almost completely with you.

Luckily enough, our optical bench is able to test lenses up to 14mm at the wide end and up to 300mm f/2.8 or 400mm f/4 at the tele end, 35mm equivalent.

On the other hand, it’s quite old and the only motorized elements are the sensor and the rotary unit: the lens stands still and the collimator is moved by hand. Oh, and we make our own lens mounts… that’s a huge saving 😉

If you like to be in touch, just drop me a line: the mail I used to write this post is real.

Promit Roy ·

Is it possible or meaningful to put filters on this test equipment, probably mounted on a lens? (I assume it’s fine with Imatest, not sure about optical bench.) I think it’d be interesting to see if filters affect measured parameters and by how much. Never seen a test like that…

Alan Cox ·

I wouldn’t think too long about those huge test targets used by the military reconnaissance camera folks. Using them from moving platforms, through various moisture and temperature layer conditions, introduces more nasty variables than you can imagine. The slanted-edge test is becoming quite popular, possibly even with those military types. (The largest blocks can be shot at an angle.)

Jeff ·

Since computer design and analysis has been used for a long time in creating a lens, do the manufacturers use these test methods as the intended end result instead of actually looking at photos? A lens can score ok to good, but not have anything stand out. The 28-200 superzooms and kit lenses seem designed this way: they score ok overall but have lots of compromises, thus not doing anything well. Pop Photo had MTF data 20 years ago which rarely if ever gave less than a 90%+ score at most used apertures and print sizes. Pop Photo’s stance was “All slides were sharp and contrasty”, meaning “good enough”. Yet real-world photos (and consensus) showed the primes and short or fixed-aperture zooms were better than the 2-4% test differences of superzooms and kit lenses suggested. Thus the primes and decent-quality zooms kept a good resale value, while other lenses were worth 1/5 to 1/10 of their new price once you walked out of the store.

Richard ·

Don’t be sorry you have to test wide angle lenses up close. That is how they are supposed to be used: you have to get 2 or 3 feet from the subject of your photo so that you can juxtapose a very large foreground object with smaller middle ground objects and tiny background objects.

Robert ·

Have you done any AF Fine Tune testing using LensAlign, FoCal or any other hardware/software products and if so please share your findings…

Roger Cicala ·

Robert, we do a lot of AF fine tuning using all of the above methods and they all work well. But I’m not sure if I understand your question, since AF fine-tuning doesn’t affect lens testing – autofocus is never used.

Roger

Robert ·

The question was not well stated…

We had hoped to gain some insight into what methods (Inclined Rule, Software based or other) you have used to determine a ‘Best Focus’ Lens/Body Phase Detection Auto Focus AFMA that when used under actual field conditions produced neither Front nor Back Focused captures (assuming proper system management).

Also note that the question asked is confined to AFMA testing of Lens/Body types that are Prime Lens/Pro Body manufactured by Canon or Nikon and not cross mounted (Canon Lens on Nikon Body or…).

We have had reasonable results with some Lens/Body systems where ‘reasonable’ is defined as a final ‘Best Focus’ AFMA value determined by Field Testing to be within 4 AFMA units of what the Inclined Rule or Software product identified as a ‘Best Focus’ value.

We have also had less than acceptable results where ‘less than acceptable’ is defined as a lack of Field Test AFMA agreement within 10 AFMA units of what Inclined Rule or Software product identified as a ‘Best Focus’ AFMA value. Note that several system retests were conducted to confirm the results.

In all cases the same Test Device/Software, Test Procedures (altered as required for Lens Focal Length) and lighting conditions were used.

Note also that Focus Target distance/ Field Subject Test distance were maintained as a near constant for the subject Lens/Body system tested.

‘Reasonable’ agreement testing has been limited to:

1. Canon 500mm/1D MK IV

2. Nikon 500mm/D3s

3. Nikon 600mm/D3s

4. Nikon 600mm/D4 (not the same 600mm used in Item 3)

5. Nikon 600mm/D4 (not the same 600mm used in Item 4)

6. Nikon 600mm (all three)/D300s

‘Less than acceptable’ results has been experienced with:

1. Canon 500mm/1Ds MK III

2. Nikon 300mm f/2.8/D800

Any knowledge you can share that might help explain or overcome the dramatic ‘Less than acceptable’ results experienced with the Canon 500mm/1Ds MK III and Nikon 300mm f/2.8/D800 would be greatly appreciated.

Roger Cicala ·

Hi Robert,

I stopped doing AF testing some time ago when I saw that the amount of shot-to-shot variation, given properly adjusted MA, varied with both the camera body and the lens mounted. Realizing both body and lens were variables led me to conclude the formula (‘every body X every lens for that body’) X sufficient copies of each to eliminate sample variability would take me about 10 years to test, by which time all the tested equipment would be obsolete.

I would suggest as a starting place determining the number of AF steps for the lenses in question. It varies from 650 to 3500 on a given lens, and that will be the rate-limiting accuracy number for the lens if all else is perfect. But I don’t know if such a number is available for cameras. I wasn’t able to find it.

Best luck!

Roger

Mike ·

Very interesting article, thanks for taking the time to write it.