Why Aren’t the Damn Numbers the Same????

I get asked a simple question almost every day: Why do your results say this, Joe’s results say that, and Bill’s results say something different? The questions surprised me at first; I grew up in biological and medical research and took it for granted everyone expected different tests to have slightly different results.

But different numbers make things complicated. Everyone would like it if 5 reviewers all said the new Ultrazooma has an MTF50 of 816.5. That would be that. But obviously it’s not that simple. So why does Roger say 800, Joe say 1642, and Bill say 656? Plus, Roger says 800 is great, Joe says 1642 sucks, and Bill says 656 is average?

I’m going to talk about that, but fair warning: This is a geeky article. If you aren’t into this kind of thing you might want to save 15 minutes and go chat on a forum.

Image Analysis MTF Numbers

A lot of people evaluating lenses use Imatest, DxO Analyzer, or some similar computerized resolution tool. They all work roughly the same way: a controlled image of slanted lines or circles is taken and computer analyzed to determine the resolution of the camera and lens. The data can be presented in several ways. Imatest users commonly present MTF50 numbers but have several other options. DxO uses a ‘blur factor’ measurement that is proprietary.

Computerized Image Analysis is a big improvement on “It looks really sharp to me” versus “I didn’t think it was all that great.” But it also has several limitations:

- It tests a combination of lens and camera, not just lens.

- Testing is done at fairly short distances (10 – 50 feet) so it may differ significantly from values at infinite focusing distance.

- Each lab varies a bit. Lighting, testing distance, test charts used, etc. can make numbers slightly different between testers.

- The same value may be reported in different units (lines versus line pairs, per image height versus per mm).

So why does everyone use image analysis MTF? Because it’s accurate within its limitations and it’s reasonably priced. A good Imatest setup costs around $10,000 and gives reproducible numeric results that are more accurate than shooting a test chart. A good optical bench capable of measuring MTF across the entire lens costs 10 times as much.

An optical bench, though, has several advantages: it’s testing the lens at infinity, the variables of the camera are eliminated, and it provides greater accuracy. On the other hand, most can only measure the lens at infinity. Sometimes you want to know how a lens does at 20 feet rather than at 250 feet.

If you’re interested in the specifics of testing, I’ll go into more detail below. If not, those are the main points. Move on down to ‘The Single Copy Phenomenon’ below.

The Range Chosen for Numbers

Even when presenting MTF 50 results, different testers will present their numbers differently. For example, I use line pairs / image height. Others might use lines / image height (so their number would be exactly double mine). They might also present lines (or line pairs) per mm instead of per image height. This is pretty simple, though. You can get out your calculator and compare line pairs per image height to lines per mm.
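
If you’d rather not get out the calculator, here is a minimal Python sketch of those conversions. The function names are made up for illustration, and the 24 mm figure assumes a full-frame sensor shot in landscape orientation, so "image height" is the short side of the frame. Other sensor sizes need their own height.

```python
# Convert MTF50 figures between the units different testers report.
# Assumption: "image height" is the short side of the sensor in mm.

FULL_FRAME_HEIGHT_MM = 24.0  # 36 x 24 mm full-frame sensor


def lp_per_ih_to_lines_per_ih(lp_per_ih: float) -> float:
    """One line pair is two lines, so the number simply doubles."""
    return lp_per_ih * 2.0


def lp_per_ih_to_lp_per_mm(lp_per_ih: float, sensor_height_mm: float) -> float:
    """Spread the line pairs over the physical height of the sensor."""
    return lp_per_ih / sensor_height_mm


def lp_per_mm_to_lp_per_ih(lp_per_mm: float, sensor_height_mm: float) -> float:
    """Inverse of the conversion above."""
    return lp_per_mm * sensor_height_mm


my_number = 800.0  # line pairs / image height
print(lp_per_ih_to_lines_per_ih(my_number))
print(lp_per_ih_to_lp_per_mm(my_number, FULL_FRAME_HEIGHT_MM))
```

Converting units only removes one source of disagreement. Two testers can still differ after conversion for all the reasons that follow.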

Were the Test Images RAW or JPG?

The Imatest results from an in-camera JPG will be significantly higher than if the test is run on a RAW image. How much higher varies depending upon the camera, but 20 to 40% higher is common.

Some testers use in-camera JPGs because they believe the results are more likely to approximate what people will actually see. Others use raw images to eliminate, as much as possible, any in-camera sharpening and reduce the chance of spurious resolution. Even different raw converters may give different results. Luckily most Imatest testers use the included DCraw converter so their results should be similar.

What Targets Were Measured from What Distance?

This is one area where most testers let you down — myself included. I know that few people want to read this stuff in an article, but I should have put it up somewhere before now. Other testers should too. But this is the kind of thing that has a lot to do with why Joe’s numbers and Bill’s numbers are still different after you convert them.

We’re taking pictures of special test targets. They come in various sizes, but they’re expensive (the largest charts are over $1,000 each). I use 6 sizes, ranging from 2″ X 3″ backlit photographic film (for macro testing) up to 42″ X 72″ factory-made prints. Since the chart must always completely fill the image, you can only shoot at certain distances. For example, even with our largest chart, a 16mm lens on a full frame camera is shot at about 8 feet distance.

Even with all those sizes, there are only certain fixed distances you can shoot at, and for standard-range lenses those distances can differ significantly. If I shoot a 70mm lens on our second largest chart, I’m shooting at about 14 feet. On the largest I’m back to 19 feet. On a smaller chart, I may be at 7 feet. The same lens, on the same camera, will give slightly different results shot at different distances.

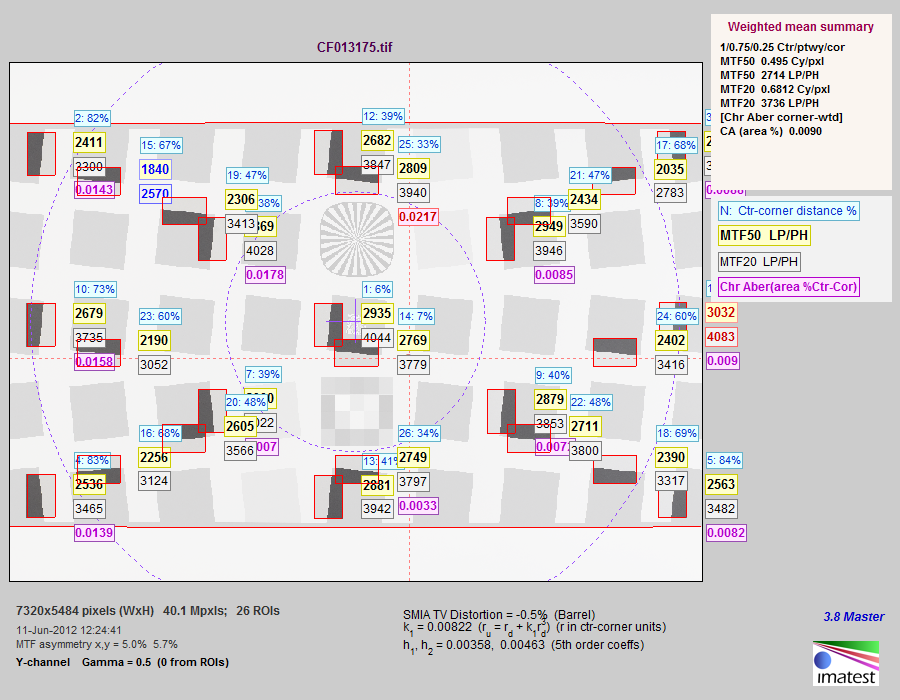

It also matters what part of the chart I take my measurements from. A typical Imatest image looks like the one below.

The edges of the light gray squares are what the computer actually measures. In this printout the computer has shown (by darker areas in red boxes) the parts of the chart used for calculations. This is the typical 13-point measurement I use when I give average results across the entire lens.

I could actually calculate over 30 points, but the calculations take a loooong time. But another reviewer might use 9 points or 24 or 32 to get his overall results. We should pretty well agree on the center value, but depending on the number of points used for calculations we might differ a bit on the average value.

One other thing can cause significant differences. In the example above I’ve measured both horizontal and vertical lines. Because of astigmatism they’re usually slightly different (look at the center yellow numbers: in the center this camera-lens measured 2935 on vertical lines and 2769 on horizontal). You can make an argument for reporting vertical or horizontal resolution only, just the higher of the two, or the average of the two. We use the average, but I’m not sure what everyone else does, so vertical versus horizontal resolution can be another source of variation.
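
To see how much the choice matters, here is a small Python sketch that applies each reporting convention to the two center readings quoted above. The function name and dictionary keys are invented for illustration; only the two readings come from the example chart.

```python
# Compare the ways a tester might report one measurement point
# that has separate vertical-line and horizontal-line readings.


def report_options(vertical: float, horizontal: float) -> dict:
    """Return the value each reporting convention would publish."""
    return {
        "vertical_only": vertical,
        "horizontal_only": horizontal,
        "higher_of_two": max(vertical, horizontal),
        "average": (vertical + horizontal) / 2.0,
    }


center = report_options(vertical=2935, horizontal=2769)
for convention, value in center.items():
    print(f"{convention:>16}: {value:.0f}")

# Gap between the highest and lowest reportable number,
# as a percentage of the average.
spread_pct = (max(center.values()) - min(center.values())) / center["average"] * 100
print(f"spread: {spread_pct:.1f}% of the average")
```

Same lens, same camera, same shot, and the published center number still depends on which convention the tester picked.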

The final argument concerns what counts as the corner value. Notice the corner horizontal and vertical resolution measurement areas are different in this example because this chart isn’t set up perfectly (it’s from a medium format camera with the wrong format size for this chart). My takeaway point is you can’t always measure the absolute furthest corner with this method. The measurement is some distance from the corner and that may vary between testers slightly, depending on which chart they use.

These Systems Test Camera-Lens Combinations

I think everyone knows this, but maybe not. You can’t compare a two-year-old test done with a Nikon D700 (or Canon 5D) with a newer test of the same lens done on a Nikon D800 (or Canon 5DIII). Change in camera resolution equals change in system resolution equals different numbers. Even if resolution is about the same, different microlenses on the sensor might make a difference in edge and corner readings.

Every Lab is a Bit Different

One day we started testing and 12 lenses in a row failed to meet expected resolution. We figured maybe something odd was going on and investigated. It turns out people at the other end of our building were tearing up concrete floors. We couldn’t hear them, but the vibrations were screwing up our tests.

We were doing quality control checks on lenses we already had numbers for, so we knew something was wrong. But if that was the first day we were testing that lens, or if I was a reviewer testing my only copy of the lens, I might have just decided the lens sucked.

We had a similar phenomenon occur when we changed lighting systems from fluorescent to tungsten. It changed our values slightly.

The takeaway message is everyone’s lab is slightly different. Even with the exact same camera and lens they will have slightly different results. But no two testers ever have the same copy of the camera and lens. Which brings up the second source of variation. (Nice segue, isn’t it?)

The Single Copy Phenomenon

If you’ve ever read anything I wrote, you probably already know this. If not, here’s an absolute reality you need to digest. Every copy of lens X is just a little bit different from every other copy. Every copy of camera Z is just a little bit different from every other copy. There are manufacturing tolerances for such complex devices that are unavoidable at any price.

If you can’t accept it, you definitely need to stop pixel peeping. You’ll go insane. If you want to say, “For $2,000 every lens should be perfect” then you are very naive. We have $50,000 cinema zooms and they are all slightly different, too. In fact, at the very highest levels, technicians will hand calibrate each $50,000 lens to the $100,000 camera because there is still variation.

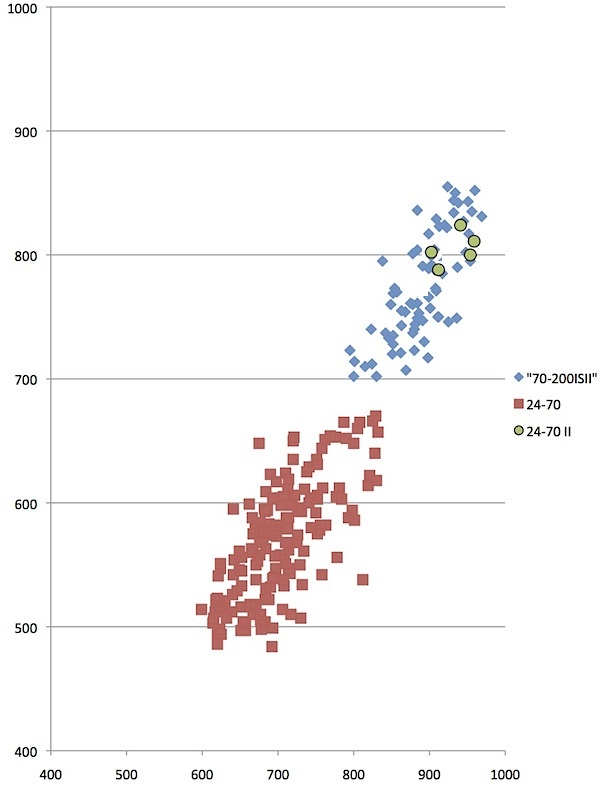

But let’s look at how much this variation affects numbers with some real-world samples. Here’s a chart I used in another article about the Canon 24-70 f/2.8 Mk II shot at 70mm and compared to the original version and the 70-200 f/2.8 IS II. The table presents the average (mean) Imatest results for peak value and overall value (average MTF50 at 13 points). It looks nice and simple, doesn’t it? That’s why I presented it that way, because that’s what people want.

| Lens | Ctr MTF50 | Avg MTF50 |
|---|---|---|
| 70-200 f/2.8 IS II | 885 | 765 |
| 24-70 f/2.8 | 705 | 570 |
| 24-70 f/2.8 Mk II | 950 | 809 |

Below is the actual data used to obtain those numbers (well most of it, this graph only has a subset of the 70-200 and 24-70 numbers). These are the individual Imatest measurements for around 75 copies of the Canon 70-200 f/2.8 IS II, around 120 copies of the Canon 24-70 f/2.8, and exactly 5 copies of the Canon 24-70 f/2.8 Mk II.

The horizontal axis is MTF50 at the center point, while the vertical axis shows average MTF50 measured at 13 points like the illustration above. It’s not nearly as simple, clean, or easy-to-read as the table of average (mean) numbers. What can I say with absolute certainty? At 70mm you’re never going to find a 24-70 Mk I that’s as good as either a 70-200 f/2.8 IS II or a 24-70 f/2.8 Mk II.

The number in my table says the 24-70 Mk II is ‘better’ than the 70-200 IS II, because the mean value is slightly higher. It should be pretty obvious, though, that if a reviewer tests one copy of each, there’s a good chance his 70-200 IS II may be better than the 24-70 Mk II he has. I’ve certainly got some 70-200 IS II lenses in stock that are a bit sharper (at least with numerical measurebating) than any of the 24-70 Mk IIs we have. Most of them aren’t, though.

It doesn’t take much imagination to understand that when we test another 50 copies of the 24-70 Mk II the average (mean value) might be different than the first 5 copies. Until I test a lot more 24-70 Mk II samples, though, I won’t have a really accurate idea of the range of that lens.
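
A rough Python sketch of both points, using the center means from the table (885 and 950). Everything else is an assumption for illustration: that copy-to-copy variation is roughly normal, and that the standard deviation is 4% of the mean. Neither is measured data, so treat the output as a thought experiment, not a result.

```python
# Sketch: how often would a single-copy review rank two lenses
# "backwards", and how much does a 5-copy mean wobble?
from math import sqrt
from statistics import NormalDist

ASSUMED_RELATIVE_SD = 0.04  # hypothetical copy-to-copy spread, not measured


def copy_distribution(mean_mtf50: float, rel_sd: float = ASSUMED_RELATIVE_SD) -> NormalDist:
    """Model the copies of one lens as a normal distribution (assumption)."""
    return NormalDist(mu=mean_mtf50, sigma=mean_mtf50 * rel_sd)


def prob_first_beats_second(first: NormalDist, second: NormalDist) -> float:
    """Chance a random copy of `first` outscores a random copy of `second`."""
    difference = first - second  # difference of two independent normals
    return 1.0 - difference.cdf(0.0)


def standard_error(dist: NormalDist, n_copies: int) -> float:
    """Uncertainty of the mean when only `n_copies` have been tested."""
    return dist.stdev / sqrt(n_copies)


lens_70_200 = copy_distribution(885)  # table mean, center MTF50
lens_24_70_ii = copy_distribution(950)  # table mean, center MTF50

p_reversed = prob_first_beats_second(lens_70_200, lens_24_70_ii)
print(f"chance a single 70-200 copy beats a single 24-70 Mk II copy: {p_reversed:.0%}")
print(f"standard error of the mean, 5 copies:  {standard_error(lens_24_70_ii, 5):.1f}")
print(f"standard error of the mean, 50 copies: {standard_error(lens_24_70_ii, 50):.1f}")
```

Change `ASSUMED_RELATIVE_SD` and the reversal probability moves a lot, which is exactly why a 5-copy average deserves some caution.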

Early reports from reviewers seem to indicate there might be a lot more variation in the 24-70 f/2.8 Mk II than I thought at first. Since all 5 of my samples were very close in serial number, it might have given me a false sense of security about low variation between copies.

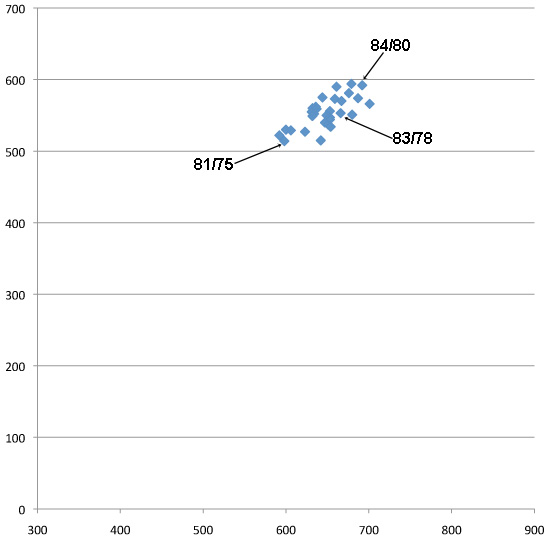

MeasureBating

The lenses in the graph below are all ‘within normal range’. (This is a prime lens, so the variation is smaller than with a zoom.) The numbers pointing to the best, worst, and one of the middle copies are SQF values. If the SQF value is within 5, you probably can’t tell the difference in a photograph. The MTF 50 for the best lens is 690 / 590. For the worst one it’s 585 / 510. That sounds hugely different.

If I used SQF numbers instead (84 / 80 compared to 81 / 75) it doesn’t sound nearly as impressive, although one lens still has better numbers than another. But if I took real-world photographs you probably couldn’t tell the difference.
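
Here is the same comparison as a few lines of Python, using only the four pairs of numbers quoted above. Treating "within 5" as "a gap of 5 or less" is my reading of the rule of thumb, and the variable names are invented for illustration.

```python
# Compare the best and worst copy two ways: as a percentage gap in
# MTF50 and as a point gap in SQF.

SQF_NOTICEABLE_GAP = 5  # rule of thumb: within 5, probably not visible


def percent_gap(best: float, worst: float) -> float:
    """How much higher the best value is, relative to the worst."""
    return (best - worst) / worst * 100.0


# (center, average) pairs quoted in the text
mtf50_best, mtf50_worst = (690, 590), (585, 510)
sqf_best, sqf_worst = (84, 80), (81, 75)

mtf50_gaps = tuple(percent_gap(b, w) for b, w in zip(mtf50_best, mtf50_worst))
sqf_gaps = tuple(b - w for b, w in zip(sqf_best, sqf_worst))

for label, mtf_gap, sqf_gap in zip(("center", "average"), mtf50_gaps, sqf_gaps):
    visible = "maybe visible" if sqf_gap > SQF_NOTICEABLE_GAP else "probably not visible"
    print(f"{label}: MTF50 gap {mtf_gap:.1f}%, SQF gap {sqf_gap} ({visible})")
```

The MTF50 gap lands in the double digits while the SQF gap stays at or under the threshold, which is the whole point: the same two lenses, described by two different scales.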

It doesn’t make the numbers less real. Geeks like me can dance celebrations to the triumph of the lens designer when he hits big numbers. But the reality is that the difference we’re measuring, while real, may be so small that it can’t make a noticeable difference in pictures.

In the real world the difference in how well the camera hit focus, how correct exposure was, if there was a tiny bit of movement creating blur, and a zillion other things make more difference than the difference between those two lenses.

So Is All this Measurement and Reviewing Entirely Worthless?

No, not at all. But any single reviewer’s or tester’s comments (including mine) must be taken with a grain of salt. When 4 or 5 reviews are all roughly the same, you probably are getting a reasonable overview about the lens.

When 6 reviews say one thing and two say something different, don’t do the internet thing and decide two of them are lying or incompetent. You might miss some important information that way. Look more carefully and you might find the 2 reviewers were looking at something different than the other 6.

The Zeiss 25mm f/2 lens provides a great example. Imatest people, like myself, tend to rave about how well the lens resolves. We are all testing it at 6 to 12 foot shooting distances. People who shoot landscapes talk about the lens having soft corners when focused at infinity. Guess what? Everybody is right. They’re just telling you two different things about the lens.

Of course even the most careful reviewer will make a mistake sometimes. (I’ve certainly made mistakes and I suspect everyone else in this field has, too). They might have gotten a bad copy — it happens and it seems to happen more during the first shipment of a lens or camera.

They might have had very high expectations and the lens didn’t meet them. Or they might have had very low expectations and the lens exceeded them. I did just that back when Nikon released the 24-120 f/4 and 28-300 VR lenses. There’s no question the 24-120 is the sharper lens and had the better MTF numbers when I tested them. But I waxed poetic about how great the 28-300 was because I just expected it to suck like every other superzoom. When I found it was pretty good, I lost my mind a bit.

For all those reasons, nobody should ever buy a lens based on a single review. But after looking at 5 or 6 you usually get a pretty good idea of how good or bad a lens is.

And let’s face it. We all know early adopters pay a price and are doing some beta testing anyway. If you’re waiting for the first review to come out so you can decide if you want to preorder that lens TODAY, just go ahead and order it. You know you’re going to.

For Those of You Certain THE MAN is Buying Great Reviews!

I love a good paranoid rant as much as the next guy. And I can’t say it never, ever happens because I don’t know everything. But I seriously doubt it happens.

I know a lot of reviewers pretty well. Every single one I know spends hours and hours worrying about the accuracy of their methods, the accuracy of their results, and making sure they don’t say more than they know. They got into this field because they’re geeks, mostly, and they love doing this stuff.

Review sites that give bad information don’t tend to grow very much or survive very long. Most of the successful ones have large websites that generate lots of traffic or subscriptions. It would take a very large payment for them to put their years of work establishing credibility at risk.

Even more than that, I think a camera company would be pretty insane to ever risk someone going public that they received kickbacks or payoffs. Think about it. A company pays Joe $10,000 to write a stellar review. If Joe was really smart and unethical he’d document it, call them back in 6 months and demand 10 times that much to keep him from going public with his documentation.

Are there more subtle influences? Sure. Getting a lens or camera to test before anyone else gives a reviewer a head start and some traffic. But you have eyes the same as I do. The largest sites get the prerelease cameras and lenses and not the smaller guys, usually. The camera makers are going to supply the people with the most readers whether they say nice things or not.

Roger Cicala

Lensrentals.com

September 2012

23 Comments

Justin ·

> Review sites that give bad information don’t tend to grow very much or survive very long.

Besides Ken Rockwell, unfortunately.

Roger Cicala ·

Justin,

Well, they say there’s an exception to every rule 🙂

Nate ·

Thank you Roger for consistently providing articles that educate and provide significant data to the photographic community. I enjoy reading them very much and always come away feeling better informed.

My inner science teacher geek has one bit of constructive criticism. It irks me when graph axes are not labeled. Because I don’t look at them every day, I always find I’ve forgotten what each axis means on the MTF50 graphs. You usually explain it in the article, but having it in plain view for each graph would be helpful.

Thanks again, and keep this geeky stuff coming.

Chas ·

My head just exploded…

Aaron ·

Always great posts filled with useful information, a bit of humor, and some humility sprinkled on top. Please, keep them coming!

Joachim ·

Roger, again the manufacturers should weave you a broad red carpet for your articles! Not only do you know your stuff well, but you also know how to transfer this knowledge into readable, fine articles and lead me to conclusions which are obvious once you think about them but not necessarily easy to recognise.

Big thank you again for great work!

Dieter ·

Thanks Roger for publishing this. Is the variation displayed in the graph typical for most lenses or is this a particularly bad (or good) example? The variation appears huge to me – are all those lenses indeed within factory specs (in other words, are factory specs really that loose)? Without having actual SQF numbers for the zooms I can’t be certain but it does appear that one should be able to easily tell the difference between a good and a bad copy of either the 70-200 or 24-70 in a print?

Roger Cicala ·

Dieter,

One thing to note is the axes on the zooms aren’t started at zero like the prime was: it makes the prime variation look smaller. (I was trying to emphasize points with the zooms, hence the expanded axes.) But zooms do have a larger variation and you often can, with pixel peeping, tell a difference between zooms.

It’s complicated because all zooms vary at different zoom ranges; most are better at one end than the other. Finally, remember the variations involve lens-camera combinations. If I shot every lens on a different camera the patterns would be very similar, but the dots would change position. The best lenses on one test camera aren’t the best on another.

Short answer, though, is yes, you can tell the difference between the worst and the best with very careful analysis.

Dieter ·

Thanks Roger – this sentence gives me pause however: “yes, you can tell the difference between the worst and the best with very careful analysis”.

Eyeballing the graphs I’d say there is a +/-10% deviation in the numbers for each given lens between best and worst (give or take a little) – but only careful analysis would reveal this? So a 900/900 isn’t quite obviously different from a 810/810 or a 990/990? I’m thinking of all those discussions I read and sometimes even participated in where a 1% difference (and often less) was treated as being the distinction between “stellar” and “dog”. I always asked myself what variation really was distinguishable and field-relevant – but I wouldn’t have stretched this to include a 20% span.

I was always taking single copy lens tests with the necessary amount of salt – and always kept wondering about the statistics. The data you present here seem to support that one really shouldn’t take those numbers too serious – quite obviously one can’t tell from comparing two different lenses on the same camera whether other copies of the same lenses wouldn’t have the results reversed.

Roger Cicala ·

Dieter,

We may be comparing apples and oranges. I was trying to compare “would you notice it in real world photographs” and I think perhaps you were talking “would I notice it on some at-home optical testing”. Correct me if I’m wrong, I don’t want to assume.

I think the +/- is about 7.5 – 8%.

Now a couple of factors:

1) If I set up a camera and lens and autofocus on the chart taking repeated shots, with nothing else different, the deviation will be about +/- 2%.

2) If I take the highest testing lens and put it on a different camera, it will generally drop down towards the middle quite a bit. If I take the worst and put it on a different camera it will be quite a bit better. This isn’t an AF variation, it’s with careful bracketed manual focus, taking single best shot. Usually a good 3-4% change for extreme cases like we’re discussing.

3) If I do give you the very best camera lens and very worst camera lens combination you would see the same difference I see – if you were shooting at that focal length and shooting distance. But if the results are for 70mm on a 70-200, there will be no correlation between doing well at 70 and doing well at 200. So the best lens at 70 will not be the best lens at 200.

4) A similar variation exists in focusing distance: less obvious but if I run this batch on a small chart at 9 feet and a big chart at 15 feet, the lenses won’t finish in exactly the same order.

As confirmation, on at least the lenses I know very well I can optically adjust them to be superb at one end of the zoom range, but this adjustment often weakens the other end. Not all adjustments do this (centering improves both, for example).

So I guess it’s a two part answer: If you shoot the same focal length and distance as my test, with the same lens and camera, you’ll notice the difference no question. If I sent you just the lenses on your camera the difference might be much smaller. If you change focal lengths or shooting distance, the difference may be gone, or maybe reversed. The sharper lens at 70mm and 15 feet may be the softer lens at 200mm and infinity.

Roger

Dieter ·

Again, a big thank you for answering my questions. I had aimed at “would you notice it in real world photographs” – and if I understand correctly then one could if one would look very very carefully. That’s what matters in the end result (the image). Thus owning any one of the 70-200 lenses used in the above test, for example, would not lead to noticeable differences in real world photographs unless I scrutinize the images very carefully (i.e. pixel peep).

Of course, the “would I notice it on some at-home optical testing” is also quite interesting – and you answered that question as well. Still surprised at the magnitude of the deviations but knowing those numbers now helps putting things in perspective.

I was aware of the variability of the measured value with distance and focal length and the also the dependency on the actual camera used. It seems to me that my approach of running every new lens through some quick “real life” tests to check for obvious flaws is quite sufficient. To exaggerate a little: if I can’t see it in an image, then I don’t really care if it could be detected on the optical bench.

Nicholas Condon ·

“I grew up in biological and medical research and took it for granted everyone expected different tests to have slightly different results.”

Heh. As a physical scientist, I’m always surprised to see replicates of bio-med experiments that are only slightly different…

Snark aside, this is a great, great article. Everyone on the internet on any comment thread about any lens should be forced to pass a test proving that they have read and understood this information before being allowed to post.

Jim Thomson ·

Nicholas

Why do you want to shut down all of the photography forums?

Many may read, but few will understand and be allowed to continue posting. 🙂

Randy ·

Roger – Very interesting and candid, as usual. Not to beat this to death, but having had some experience on the manufacturer/distributor side, things can be a bit more subtle. If you receive an item prior to its being available to the public, you can be sure it has been thoroughly tested to make sure the manufacturer is putting their best foot forward. “Pre Release” implies unfinished, but who really believes a manufacturer would subject their product to a test knowing it isn’t ready?

The other scenario is that a lens tests so poorly that the tester is uncomfortable publishing the results and requests another sample. If that sample doesn’t check out they decide not to publish the review. Of course you’re in a different position because LR can simply choose not to carry the lens.

Bob ·

Great article. What I take from this is you don’t know how a lens will perform for you until you mount it on the camera you will be using it on and take some images at your typical apertures and distances. The only challenge with this approach is that over the years you use different digital cameras and so it is hard to obtain a benchmark as a lens may appear softer than another, but it really isn’t, it just appears that way because your current camera creates softer images as processed than your previous one. Maybe the solution is to buy a high resolution camera and keep it as long as it works for comparative lens testing. This may seem extreme, but I have spent tons of time and mental distress trying to figure out why a new lens doesn’t look as sharp as I expect, and this approach would hopefully eliminate the camera body as a possible reason.

Bob

Nikola Runev ·

Great article, thanks for taking the time and trouble to write it.

The way I judge a lens is by trying it on my camera, I don’t do all that pixel peeping stuff. I go out with the lens and make a day out of it, later when I have a look at the RAWs I make my decision.

I’ve been lucky to have good copies of Sigma lenses ( 24-70/2.8 300/2.8 150/2.8 12-24 F/whatever 10-20mm ) but I always tested them with my eyes.

When I was in Japan buying the 24-70 I made the chaps at Yodobashi Cameras open every single camera they had 🙂 then argued for about 2 hours for the price 🙂 got a great deal 🙂

Always compared the shots with my mates. All that matters are prints!

Most of the lens issues can be eliminated in post. If you’re not a pro that prints larger than A0 , the difference is hard to tell from the viewing distance.

Gert Weber ·

Hi Roger,

This is a very interesting article!

For me it left only one question:

The original Imatest chart you are showing shows roughly double the resolution of a very good lens/camera combination, or four times as much as a normal zoom lens. (2700 lp/ph in the chart, about 1200 lp/ph for a good prime, 500 – 850 in the comparison here)

Why are the numbers so different?

With kind regards

Gert

Roger Cicala ·

Gert,

I’ve been wondering when someone would ask. These numbers are for a Phase 180 digital back medium format camera — 80 megapixel CCD sensor.

Roger

Max ·

Roger, great information as always. I can understand the variations you write about in Imatest testing and results, but can you please explain how the same lens and camera tested by Photography Life scores 3000+ in Imatest while you score it at 1000? If there are lenses 3x better out there, then I MUST have them. Max

xrqp ·

Regarding causes of variability in MTF50 test numbers:

What is the weighting factor you use for center, part way, and corner? Imatest defaults are 1.0, 0.75, and 0.50. (I like 1.0 for all, but that may be too unconventional for comparison purposes.)

Imatest Studio 4.0 (Nov. 2014) says: The boundary between center and part-way is 30% of the center-corner distance. The boundary between part-way and corner regions is 75%. Is that what you use?

When you framed the Imatest chart in your graphic above for the medium format camera, could you have got closer so as to crop the sides more? I think Imatest recommends only about a 1″ to 2″ border at top and bottom, and they say it is OK to crop the left and right sides.

That framing shows a good example of variance. Your horiz and vert regions (little red box) in the corners have the horiz in the “part way” area and the vert in the “corner” area.

I wondered how Imatest dealt with horiz and vert numbers. Based on what you wrote, I guess they just average the “pair” that are closest to each other.

Thanks for publishing your work.

xrqp ·

When I run Imatest Studio on 32 regions, it takes 7 seconds more than 13 regions. I only have an Intel i3 processor in my desktop w Windows 7.

xrqp ·

I see now, in your Imatest graphic, the weighting you use is 1.0, 0.75, and 0.25. So a low corner score will not affect the overall weighted average much.
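
A minimal Python sketch of the weighting point raised in this comment. The zone boundaries (30% and 75% of the center-to-corner distance) and both weight sets are the ones quoted in the comments above; the five region readings are made-up numbers for illustration, and this is not Imatest’s own code.

```python
# Weighted average of MTF50 readings, with each region weighted by
# how far it sits from the center of the frame.

DEFAULT_WEIGHTS = {"center": 1.0, "part_way": 0.75, "corner": 0.50}
ARTICLE_WEIGHTS = {"center": 1.0, "part_way": 0.75, "corner": 0.25}


def zone_for(distance_fraction: float) -> str:
    """Classify a region by its fraction of the center-to-corner distance."""
    if distance_fraction < 0.30:
        return "center"
    if distance_fraction < 0.75:
        return "part_way"
    return "corner"


def weighted_mtf50(regions: list, weights: dict) -> float:
    """`regions` is a list of (distance_fraction, mtf50) pairs."""
    total = sum(weights[zone_for(d)] * value for d, value in regions)
    weight_sum = sum(weights[zone_for(d)] for d, _ in regions)
    return total / weight_sum


# Hypothetical lens with a sharp center and weak corners.
readings = [(0.0, 900), (0.5, 800), (0.5, 780), (0.9, 600), (0.9, 580)]

plain_mean = sum(value for _, value in readings) / len(readings)
print(f"unweighted mean:         {plain_mean:.0f}")
print(f"corner weight 0.50:      {weighted_mtf50(readings, DEFAULT_WEIGHTS):.0f}")
print(f"corner weight 0.25:      {weighted_mtf50(readings, ARTICLE_WEIGHTS):.0f}")
```

With weak corners, lowering the corner weight pulls the overall number up, so two testers using different weights would report different averages from identical raw readings.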

Mark Blum ·

Roger, I need to pick the best matched pair out of a pool of lenses for stereo 3D work. I can’t afford the image testing software and optical bench. Are you aware of a list of testers who may perform the analysis on a contract basis?

Thank you,