Is AI Art Generation Going to Destroy Art as We Know It?

Nearly everyone is talking about artificial intelligence these days, and about the latest machine learning tools developed for the art space. Whereas 2021 was all about NFTs, this year is very much about AI and how it will change the art industry or, possibly, destroy it entirely. As someone who is fascinated by technology and how it works its way into the art world, I’ve been deeply intrigued by these latest pieces of software. So I decided to do a deep dive into what’s available and determine whether it will be a savior of the art community or a destroyer of worlds.

The Birth of AI

In 2022, the art community merged further with technology, and a couple of pieces of software were born, most notably Midjourney and DALL-E. Each lets the user type in a series of writing prompts, from which machine learning tech attempts to create entirely unique art that follows the guiding terms and phrases. If you’ve been on social media in the last six months, you’ve likely seen your feeds flooded with this new style of art. In fact, this new technology has become so popular that brands like TikTok, Meta, and even Adobe have dipped their toes into the industry, each releasing betas of their own software designed to generate art.

How it Works

Midjourney

Midjourney is an independent research lab experimenting with AI and art generation. It first made its debut when The Economist used its software to create the cover of its June 2022 issue. Using a Discord server as its main platform, Midjourney lets you type a series of prompts to a chatbot, which then generates four pieces of unique art based on those prompts, usually in just a few seconds. From there, you can upscale the art, ‘reimagine’ it, or tweak it as you see fit. Because it works from writing prompts, your imagination is your only limit. If you want to create art of a steampunk panda bear flying a spaceship through our solar system, all you need to do is type that to the bot and wait 10-15 seconds as it generates four different pieces of art bringing your weird ideas to life. Generally, the more descriptive your prompt, the more tailored the result will be to you.

DALL-E

DALL-E works in largely the same way as Midjourney but, to the surprise of many, actually predates it by a full year and a half. Introduced by OpenAI in January of 2021 (though the more common version is DALL-E 2, released in 2022), DALL-E gives you a web console where you can type in a series of phrases and artistic styles and watch as the AI system generates four examples of your imagination in a few seconds. Whereas Midjourney takes a more artistic and painterly approach, DALL-E has gotten a lot of attention for its more photorealistic approach to art.

Other Tools

Several other AI art technologies have also come to the attention of many in recent months and have encouraged other tech companies to invest in their own versions of similar technology. Most notable are Google Brain’s Imagen, Microsoft’s NUWA-Infinity, Stable Diffusion, and Craiyon. Adobe, TikTok, and Meta have each been developing their own AI art software as well. With the flood of investment into machine learning art creation, many are worried that we’ve opened Pandora’s box, and that human-created art and machine-generated art will soon be indistinguishable, if we haven’t passed that threshold already.

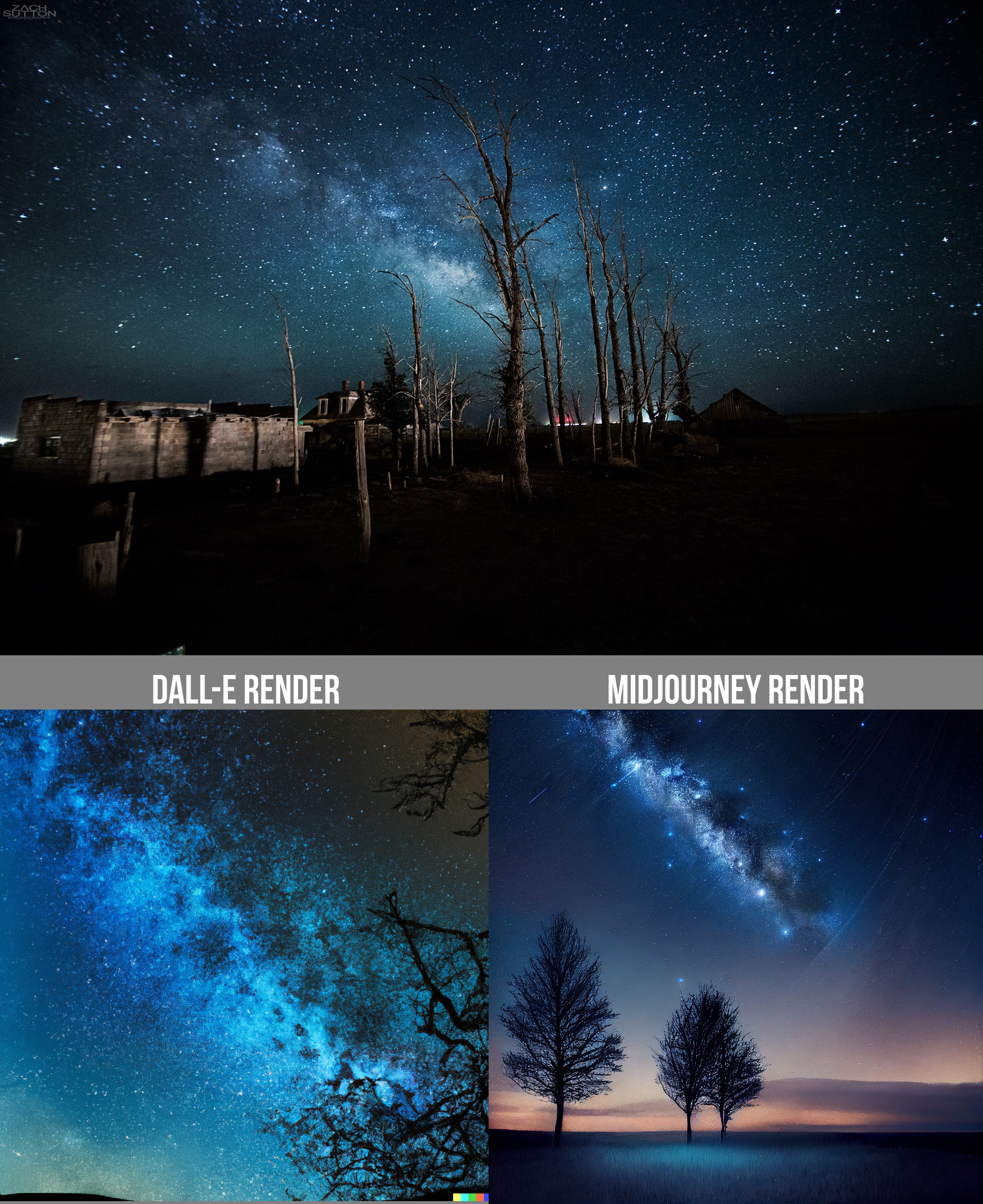

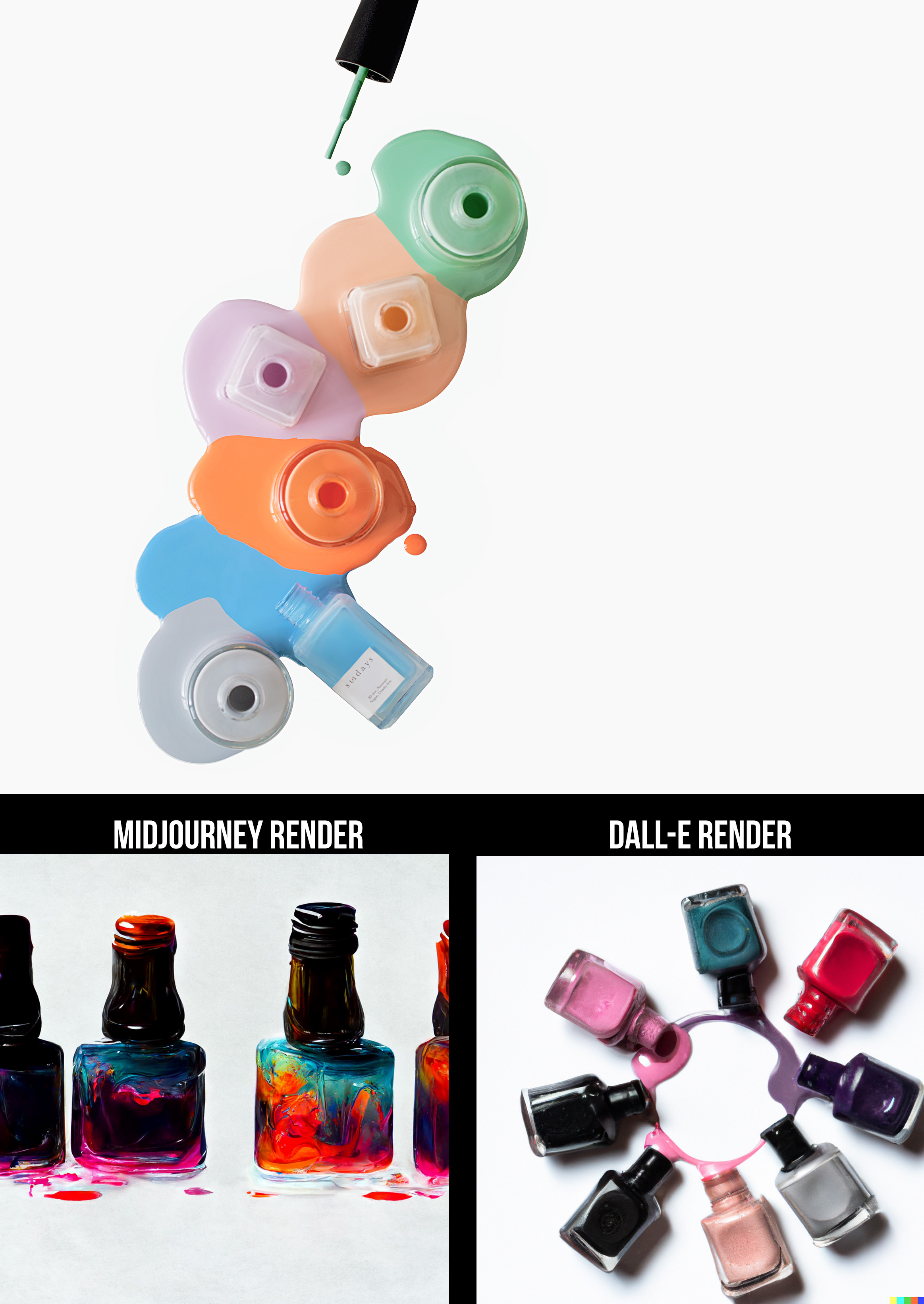

Examples of Renders

With Midjourney and DALL-E being by far the two most popular AI generation tools, I decided to test each of them using a random selection of images from my portfolio. Pulling six images from various bodies of my work over the years, I gave both DALL-E and Midjourney a series of phrases describing the work they were to mimic. From there, I selected the best of the four renders each tool generated. This should give you a basic idea of what their art looks like and what capabilities they offer. The results for those images and prompts are below.

While the art speaks for itself, I found myself really impressed with some of the renders, particularly the work created by DALL-E. Some of the work came out amazingly accurate from just a few phrases (particularly the DALL-E render of the bearded man above). Both tools do seem to struggle with eyes and lips, but creating art as quickly as they do is certainly impressive.

Photo Input

While both Midjourney and DALL-E work off of writing prompts to create their art, the latest version of each also lets you really test its capabilities by uploading images and letting it generate additional pieces of work based on the art you feed it. Some examples of that are below.

(Image gallery: DALL-E and Midjourney renderings generated from uploaded photos.)

Where is its Future?

I think the question on everyone’s mind is whether this technology will destroy the photography industry as we know it. So what do I think? Well, that’s a hard question to answer outright.

On one hand, some of the renders (particularly from DALL-E) were astoundingly accurate and photorealistic. By typing a handful of words and phrases, I watched as DALL-E and Midjourney created four different interpretations of my prompts in just a few seconds. Each tool had its own set of problems, and both struggled with specific features of the human face, like eyes and mouths. However, with enough patience and tweaking of my phrases, I became really impressed with the accuracy of their renditions.

To the well-trained eye, though, most of the work sat in the uncanny valley, and it was pretty easy to tell it was generated by AI. But therein lies the concern. Whereas a painter is limited by the time it takes to paint a painting, and a photographer by the time it takes to capture a photo, machine learning is not. Each of these tools produces thousands of renders daily, improving its techniques with each passing moment. The current shortfalls in their algorithms can be solved in hours, whereas it might take an artist years to develop these skills.

So is art doomed? Well, in my opinion, not exactly. I’m hesitant to jump to conspiratorial conclusions about what this will mean for art. After all, conspiracy theories exist almost entirely as a coping mechanism for uncertainty, and there is a lot of uncertainty with this tech. With AI-generated art recently winning art competitions and selling for over $400K at auction, a lot of people feel uneasy about what this futuristic technology might hold. So much so, in fact, that Getty Images recently banned AI-generated art from its platform. And with the copyright and usage rights around these programs being murky at best, there are a lot of questions about what the status quo will be in the next year.

There is one direction (out of several) in which I can see this going. AI can function as an incredible tool for photographers and creatives to use within their own art. Using Midjourney, DALL-E, and other tools to enhance your creative expression can bring your work to new heights. While paranoid painters in the late 1800s thought that the camera would destroy their work, others embraced it as a new way to express themselves. In the end, painting didn’t go away; it just found new problems to solve.

And the same holds true here. AI has become a powerful tool that may very well take jobs away from artists. Creating concept art, storyboarding an idea, or bringing concepts to life is now easier than ever, and those jobs will suffer dramatically from this, just as the painters hired to paint portraits did in the 1800s. So how can you get around this? By personalizing your work.

Offer your clients art that expresses an idea, feeling, and style that comes from you, something they can relate to on an artistic and expressive level. Samuel Jay Keyser once said that art interpretation is the ultimate Turing test; if you focus your attention on those you can create for, not those who might take from you, you’ll be far better off in the long run. These machines are producing art at an exceptional rate, hundreds of prompts every second. And they certainly are learning how to create what is fed to them, but they don’t know why they create what they’re creating.

8 Comments

Jalan ·

Thanks for your thoughts, Zach. I agree that savvy people will see these computer programs as tools and not threats. As you pointed out, NFTs were all the rage in 2021 and now the market has collapsed into worthlessness. “AI” is not intelligence or thinking or even creating; it is computer algorithms matching existing images with keywords and mashing them together. Humans think and are creative and no program can do that!

WLM ·

> Humans think

very few actually do … most are “matching existing images with keywords and mashing them together.”

Kenneth_Almquist ·

People will still want photographs of things that actually exist, so jobs like portrait photographer, wedding photographer, and product photographer will not be replaced by AI. But the artistic photographer has to compete with people who generate art via computer rather than a camera.

Tree Young ·

Design is for others.

Art is for ourselves.

By that definition, a lot of “art” is actually not art but design.

AI is nothing but an advanced tool like Photoshop.

Until someday we can prove that some machine has consciousness and creates some work totally driven by itself, it’s not art by definition.

Alec Kinnear ·

Zach, thank you so much for documenting the state of the art in autumn 2022. It’s autumn 2023 now and Midjourney is a completely different beast already. Whether AI images are a good thing or a bad thing is a different and longer conversation but what AI images can certainly be as of today are photo realistic.

The first two images below are mine (first day on Midjourney). The third one Mexican woman in hat in desert was prompted by milu6759.

https://uploads.disquscdn.com/images/1b4c302fde55b0010c77e78db022f09fa794a595b39b53b9520a4e0a1132c5ca.png

https://uploads.disquscdn.com/images/5ae11d904b02615764c34ded0a7173914c9449dd03b384e52408cf84dddba8d0.png

https://uploads.disquscdn.com/images/05ba23dde288a824b90f0bc2157c207c499d225d23799263574399eae3ffd7a2.png
