Notes on Lens and Camera Variation

A funny thing happened when I opened Lensrentals and started getting 6 or 10 copies of each lens: I found out they weren’t all the same. Not quite. And each of those copies behaved a bit differently on different cameras. I wrote a couple of articles about this. “This Lens is Soft and Other Myths” talked about the fact that autofocus microadjustment would eliminate a lot, but not all, of the camera-to-camera variation for a given lens. “This Lens is Soft and Other Facts” talked about the inevitable variation in mass-producing any product, including cameras and lenses: there must be some real difference between any two copies of the same lens or camera.

A lot of experienced photographers and reviewers noticed the same things, and while we all talked about it, it was difficult to demonstrate the issue with words and descriptions alone.

And Then Came Imatest

We’ve always had a staff of excellent technicians who optically test every camera and lens between rentals. But optical testing has limitations: it’s done by humans and involves judgement calls. So after we moved and had sufficient room, I spent a couple of months investigating, buying, and setting up a computerized system that would let us test more accurately. We decided the Imatest package best met our needs, and I’ve spent most of the last two months setting up and calibrating our system. (Thank you to the folks at Imatest and SLRGear.com for their invaluable help.)

It has already proven successful for us, as it is more sensitive and reproducible than human inspection. We now find some lenses that aren’t quite right, but that were perhaps close enough to slip past optical inspection. Plus the computer doesn’t get headaches and eyestrain from looking at images for 8 to 10 hours a day.

Computerized testing has also given me an opportunity to demonstrate the amount of variation between different copies of lenses and cameras. We have dozens (and in some cases dozens of dozens) of copies of each lens and camera. While we don’t perform the multiple, critically exact measurements that a lens reviewer does on a single copy, performing our basic tests on multiple copies demonstrates variation pretty well.

Lens-to-Lens Variation

We know from experience that if we mount multiple copies of a given lens on one camera, each one is a bit different. One lens may front focus a bit, another back focus. One may seem a bit sharper close up, another is a bit sharper at infinity. But most are perfectly acceptable (meaning the variation between different copies is a lot smaller than the variation you’re likely to detect in a print). I can tell you that, but showing you is more effective.

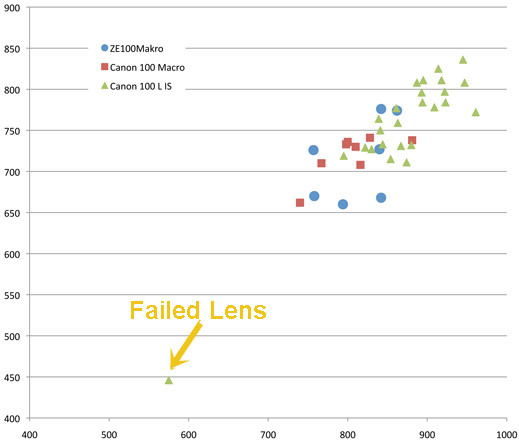

Here’s a good illustration, using copies of 3 different 100mm lenses, all of which are known to be quite sharp: the original Canon 100mm f/2.8 Macro, the newer Canon 100mm f/2.8 IS L Macro, and the Zeiss ZE 100mm Makro. The chart shows the highest resolution (at the center of the lens) on the horizontal axis and the weighted average resolution across the entire lens on the vertical axis, both measured in line pairs per image height. All were taken on the same camera body, and for each lens copy the best of several measurements is the one graphed.
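To make the chart construction concrete, here is a minimal Python sketch of the bookkeeping described above. It assumes each test shot has already been reduced to a list of (LP/PH, weight) readings across the frame; the weights are hypothetical, since the post doesn’t say how Imatest weights the different regions. The highest reading stands in for the center value, and for each copy the shot with the best weighted average is the one kept.

```python
def summarize_shot(readings):
    """Reduce one test shot to (peak, weighted_average), both in LP/PH.

    readings: list of (lp_per_ph, weight) pairs, one per chart region.
    The weights are hypothetical; the post doesn't give Imatest's weighting.
    The peak is the highest reading, which in these charts is at the center.
    """
    peak = max(lp for lp, _ in readings)
    total_weight = sum(w for _, w in readings)
    weighted_average = sum(lp * w for lp, w in readings) / total_weight
    return peak, weighted_average


def best_of_several(shots):
    """Keep the shot with the highest weighted average for one lens copy."""
    return max((summarize_shot(shot) for shot in shots), key=lambda pw: pw[1])
```

Each copy then contributes one (peak, weighted_average) point to the scatter plot.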

It’s pretty obvious from the image that there is variation among the different copies of each lens type. I chose this focal length because there was a bad lens in this group, so you can see how different a bad lens looks compared to the normal variation of good lenses. As an aside, the bad lens didn’t look nearly as bad as you might think: if I posted a small JPG taken with it, you couldn’t tell it apart from the others. Viewed at 50% in Photoshop, though, the difference was readily apparent.

My point, though, is that while the Canon 100mm f/2.8 IS L is a bit sharper than the other two on average, not every copy is. If someone were doing a careful comparative review, there’s a fair chance they could get a copy that wasn’t any sharper than the other two lenses. I think this explains why two careful reviewers may have slightly different opinions on a given lens. (Not, as I see all too often claimed on various forums, because one of them is being paid by one company or another. Every reviewer I know is meticulously honest.)

Autofocus Variation

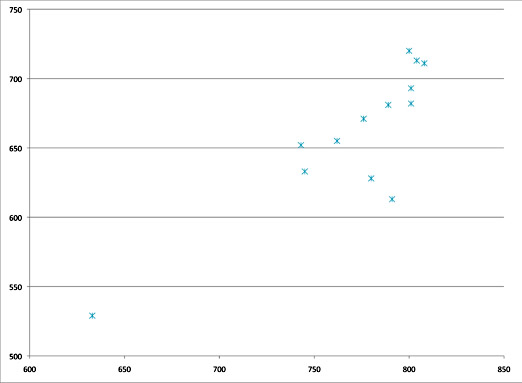

We all know camera autofocus isn’t quite as exact as we wish. (Personally, after investigating how autofocus works for this article, I’m amazed that it’s as good as it is, but I still complain about it as much as you do.) But when I started setting up our testing, I was hoping we could use autofocus to at least screen lenses initially. The results were rather interesting. Below is the same type of graph for a set of Canon 85mm f/1.8 lenses I tested using autofocus. Notice I again included a bad copy as a control.

(For those of you who are out there thinking “I want one of those top 3 copies, not one of the other ones”, and I know some of you are, keep reading.)

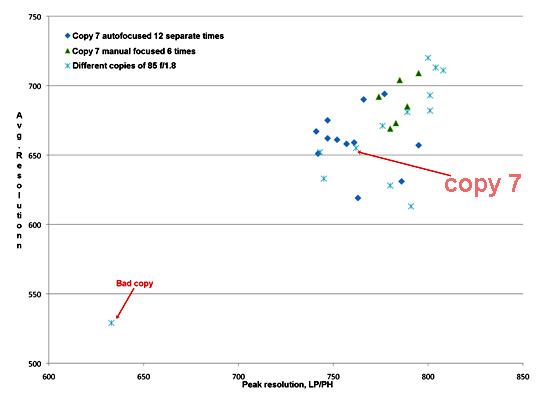

Then I selected one copy with average results (Copy 7), mounted it on the test camera, and took 12 consecutive autofocus shots with it. Between shots I either manually turned the focus ring to one extreme or the other or turned the camera off and on, but nothing else was moved. (By the way, for testing, the camera is rigidly mounted to a tripod head, mirror lockup is used, etc.)

In the graph below, overlaid on the original graph, the dark blue diamonds are the 12 autofocus results from one lens on one camera. Then I took 6 more shots using 10x live view manual focus instead of autofocus, again spinning the focus ring between shots. The MF shots are the green triangles. I should also mention that when I take multiple shots without refocusing, the results are nearly identical: that would be a dozen blue diamonds all touching each other. What you’re seeing is not variation in the testing setup; it’s variation in the focus.

It’s pretty obvious that the spread in sharpness of one lens focused many times is quite similar to the spread in sharpness of all the different copies tested once each. It’s also obvious that live view manual focus was more accurate and reproducible than autofocus. Of course, that’s with 10x live view, a still target, a nice star chart to focus on, and all the time in the world to focus correctly. No surprise there: we’ve always known live view focusing was more accurate than autofocus.
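One rough way to put a number on the claim that these two spreads are similar is to compare the standard deviation of one copy refocused many times with the standard deviation of many copies shot once each. The sketch below does that with Python’s standard `statistics` module; the function names and the ratio interpretation are an informal, illustrative rule of thumb, not something computed in the post.

```python
from statistics import mean, stdev


def spread(readings):
    """Return (mean, sample standard deviation, range) of LP/PH readings."""
    return mean(readings), stdev(readings), max(readings) - min(readings)


def focus_to_copy_ratio(one_copy_refocused, many_copies_once):
    """Standard deviation from refocusing one copy, relative to the
    standard deviation across copies shot once each.

    A ratio near 1 suggests that focus variation alone could account for
    much of the apparent copy-to-copy spread in single-shot comparisons.
    """
    return spread(one_copy_refocused)[1] / spread(many_copies_once)[1]
```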

One aside on the autofocus topic: because it would be much quicker for testing, I tried the manual versus autofocus comparison on a number of lenses. I won’t bore you with 10 more charts, but what I found was that older lens designs (like the 85mm f/1.8 above) and third-party lenses had more autofocus variation. Newer lens designs, like the 100mm IS L, had less autofocus variation (on 5DII bodies, at least; this might not apply to other bodies).

Oh, and back to the people who wanted one of the top 3 copies: when I tested two of those repeatedly, I never again got numbers quite as good as those first numbers shown on the graph. The repeated images (including manual focus) were more towards the center of the range, although they did stay in the top half of the range, at least on this camera, which provides me an exceptionally skillful segue into the next section. (My old English professor would be proud. Not of my writing skills, but simply that I used segue in a sentence.)

Camera to Camera Variation

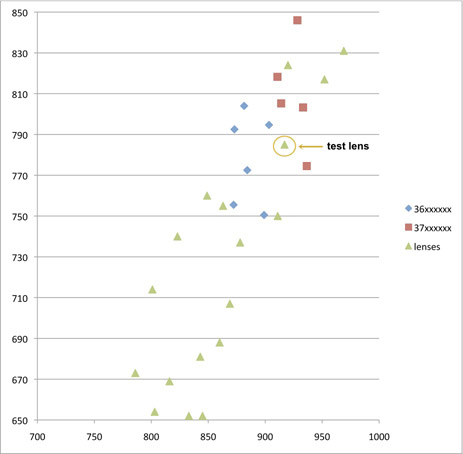

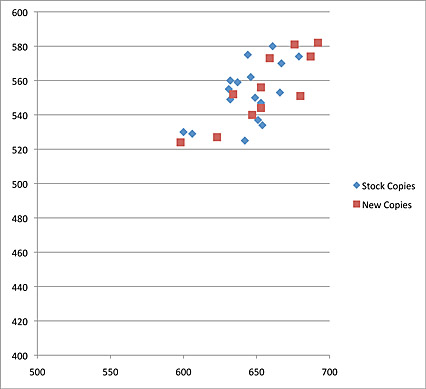

Well, we’ve looked at different lenses on one camera body, but what happens if we use one lens and change camera bodies? I had a great chance to test that when we got a shipment of a dozen new Canon 5D Mark II cameras. First, I tested a batch of Canon 70-200 f/2.8 IS II lenses on one camera, using 3 trials of live view focusing for each. The best result for each lens is shown as a green triangle.

Then I took one of those lenses (mounted to the testing bench by its tripod ring) and repeated the series on 11 of the new camera bodies. The blue diamonds and red boxes this time each represent a different camera on the same lens. (4 test shots were taken with each camera, and while the best is used, each camera’s four shots were almost identical.) Obviously the same lens on a different body behaves a little differently.

I separated the cameras into two sets because on this day we received cameras from two different serial number series. I don’t know that any conclusions are warranted from such a small sample, but I found the difference intriguing, and maybe worth some further investigation.
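With only a handful of bodies per serial-number series, one assumption-light way to ask whether the two groups really differ is a permutation test: shuffle the group labels many times and count how often a difference in means at least as large as the observed one appears by chance. This is a generic statistical technique, not an analysis from the post, and the function name and defaults are illustrative.

```python
import random
from statistics import mean


def permutation_p_value(group_a, group_b, n_resamples=10_000, seed=0):
    """Two-sided permutation test on the difference in group means.

    Returns an estimated p-value: the fraction of label shufflings whose
    mean difference is at least as large as the observed one.
    """
    rng = random.Random(seed)  # fixed seed so results are repeatable
    observed = abs(mean(group_a) - mean(group_b))
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    hits = 0
    for _ in range(n_resamples):
        rng.shuffle(pooled)
        diff = abs(mean(pooled[:n_a]) - mean(pooled[n_a:]))
        if diff >= observed:
            hits += 1
    # Add-one correction so the estimate is never exactly zero.
    return (hits + 1) / (n_resamples + 1)
```

A small p-value (say below 0.05) would hint that the series differ; with groups this small, a large one mostly says the data can’t tell.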

Summary

Notice I don’t say “Conclusion,” because this little post isn’t intended to conclude anything. It simply serves as a visual illustration of what we all (or at least most of us) already know:

- Put different copies of the same lens on a single camera and each will vary a bit in resolution.

- Put different copies of the same camera on a single lens and each will vary a bit in resolution.

- Truly bad lenses aren’t a little softer, they are way softer.

- Autofocus isn’t as accurate as live view focus, at least when the camera has not been autofocus microadjusted to the lens.

All of this needs to be put in perspective, however. If you go back to the first two charts, you’ll notice the bad copies are far removed from the shotgun pattern formed by all the good copies. And when we looked at those two bad copies, we had to look fairly carefully (viewing JPGs at 50% on the monitor) to see they were bad.

The variation among “good copies” could probably be detected with some pixel peeping. For example, if you examined the images shot with the best and worst Canon 100mm f/2.8 IS L lenses (the images I took on my test camera) side by side, you could probably see a bit of difference. But if I handed you those two lenses and you put them on your camera, they’d behave slightly differently than they did on mine, and your results would be different.

So for those of you who spend your time worried about getting “the sharpest possible lens”, unfortunately sharpness is rather a fuzzy concept.

Roger Cicala

Lensrentals.com

October, 2011

Addendum:

Matt’s comment made me realize I hadn’t addressed one obvious variable in this little post: how much of the variation is caused by the fact that these are rental lenses that have been used? The answer (at least for Canon prime lenses) is not much, if at all. For example, the graph below compares a set of brand-new Canon 35mm f/1.4 lenses tested the day we received them (red boxes) to a set taken off the rental shelves (blue diamonds).

Please note I make this statement only for Canon prime lenses. Zooms are more complex, and I see at least one zoom lens that doesn’t seem to be aging well, but until I get more complete numbers to confirm what I think I’m seeing, I won’t say more. I see no reason to expect other brands to be different, but at this point we’ve only been able to test Canon lenses (these tests are pretty time-consuming and we have a lot of lenses).

104 Comments

neuroanatomist ·

Thanks for this – like your other blog posts, it really adds to the knowledge base!

One comment about the AF vs. MF live view behavior, based on the testing procedure at SLR Gear. They point out that for careful testing, neither AF nor MF with Live View is accurate enough, and instead they take a series of focus-bracketed shots (done by moving the camera on a rail) and use the sharpest one for the test.

Roger Cicala ·

SLR Gear is totally correct: focus bracketing is the most accurate way to do it. It’s the only way to eliminate the camera-lens variability I’m writing about. In our situation, though, we need to examine and evaluate that same variability because it’s going to affect our customers’ use of the lens.

If a lens is optically superb but backfocuses horribly we need to know that so we can get that corrected, because our customer is almost certainly going to use the lens on autofocus mode. But for testing and review purposes, SLR Gear needs to determine the best possible performance of each lens, and focus bracketing is the only way to do that. I believe, in fact, that they developed that technique – they are meticulous and their reviews reflect that.

Roger
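Focus bracketing, as described in the exchange above, amounts to an exhaustive sweep: step the camera along a rail, score every frame, and keep the sharpest. A minimal sketch follows. `measure` is a hypothetical callback standing in for “move the rail, take the shot, run the analysis”; SLR Gear’s actual step sizes and scoring aren’t described here, so those are assumptions.

```python
def focus_bracket(measure, start_mm, stop_mm, step_mm):
    """Step the camera along a focusing rail and keep the sharpest shot.

    measure(position_mm) is a hypothetical callback that moves the rail,
    takes a shot, and returns a sharpness score (e.g., LP/PH).
    Returns (best_position_mm, best_score).
    """
    if step_mm <= 0:
        raise ValueError("step_mm must be positive")
    n_steps = int(round((stop_mm - start_mm) / step_mm))
    best = None
    for i in range(n_steps + 1):
        position = start_mm + i * step_mm  # avoids float drift from repeated +=
        score = measure(position)
        if best is None or score > best[1]:
            best = (position, score)
    return best
```

The step should be small compared with the depth of field at the test distance, or the sweep can step right over the sharpest position.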

Bob Williams ·

Roger, thanks for the time you spent on this testing, analysis, and, most importantly, the explanation. This type of info is extremely valuable to those of us new to the craft. BTW, I bought a used 100-400 from you after renting one from you 2 years ago. It’s still on my camera and is my most often used lens, and it’s also worth more now (used) than when I purchased it. Thanks, and I love your service.

Bob

Chester ·

Thanks for this post Roger. This makes me want to do the long overdue auto-focus calibration on my camera.

Tenisd ·

Pretty cool post. Thank You 🙂 Saw on twitter.

Samuel Hurtado ·

and that is why this blog is freaking awesome

Matt Norris ·

Great article.

I had heard a little about how different lenses produced different results, especially when used on different bodies. But I hadn’t heard much about autofocus variation.

For those interested, the Luminous Landscape website recently posted an article about back focus: http://www.luminous-landscape.com/essays/are_your_pictures_out_of_focus.shtml

Also, I’d love to see the test results of the same lenses over time. It would be an interesting test to find out which lenses are better made and retain sharpness after heavy use.

Roger Cicala ·

Hi Matt,

Great minds think alike: testing for changes over time is one of our major goals. The most accurate way will be to follow lenses over time in service. The less accurate way, which we’re already doing, is comparing older and new copies (of course, this will also show a difference if an unannounced improvement has been made in newer lenses).

Allan Sheppard ·

Hi Roger,

As a long time reader of your excellent articles thanks for another common sense note.

Canon has stated (I don’t have a link) that their specification for AF performance is that the AF is inside the depth of focus for that F stop/distance.

Could you run an experiment with one of your (say) 100L lenses, focusing at the edges of the calculated DOF range (distance plus 1/2 DOF, or distance minus 1/2 DOF), and see how the numbers change? I realise this brings in CoC, etc., but it would help match Canon’s specifications to performance.

Cheers,

Allan
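Allan’s suggestion needs the near and far limits of depth of field. The sketch below uses the standard thin-lens hyperfocal formulas, plus the common 2·N·c approximation for depth of focus at the sensor (the quantity Canon’s spec is said to refer to). The 0.03 mm circle of confusion is a conventional full-frame assumption, not a Canon figure. Note that the zone isn’t symmetric: it extends further behind the subject than in front, so the natural test distances are the near and far limits rather than distance ± half the total.

```python
import math


def dof_limits_mm(focal_mm, f_number, subject_mm, coc_mm=0.03):
    """Near and far limits of depth of field (thin-lens approximation).

    coc_mm=0.03 is a conventional full-frame circle of confusion (assumption).
    Returns (near_mm, far_mm); far_mm is math.inf at or beyond hyperfocal.
    """
    hyperfocal = focal_mm ** 2 / (f_number * coc_mm) + focal_mm
    near = subject_mm * (hyperfocal - focal_mm) / (hyperfocal + subject_mm - 2 * focal_mm)
    if subject_mm >= hyperfocal:
        far = math.inf
    else:
        far = subject_mm * (hyperfocal - focal_mm) / (hyperfocal - subject_mm)
    return near, far


def depth_of_focus_mm(f_number, coc_mm=0.03):
    """Approximate total depth of focus at the sensor, for distant subjects."""
    return 2 * f_number * coc_mm
```

For a 100mm lens at f/2.8 focused at 2 m, this gives a zone roughly 64 mm deep, a little more of it behind the subject than in front.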

Roger Cicala ·

Allan, that’s a great idea. I’ll give that a try.

Roger

Sylvain ·

Thanks Roger, very instructive article, as always.

Wish there was a European LensRentals 🙂

Chris ·

Thanks so much for these articles, Roger. Your tests are a huge asset to the community. The vast majority of reviews are of a single sample, and as you’ve proven with this and other articles that’s not enough data points to speak conclusively about a lens model.

In your article you mentioned how newer lens designs seem to be more accurate, “on 5DII bodies, at least”. Anecdotally, I had a teeth-gnashing experience related to this.

I had a 5D1 and a Tamron 28-75/2.8 that worked beautifully together. I shot portraits with it, and I’d get perfect AF on the eyes >90% of the time.

Later I got the wildlife bug and bought a 40D. Its AF was very good with the 100-400 and 300/2.8IS I used for wildlife, but with the Tamron 28-75 I had very frequent AF misses. Headshots at 75mm, f/2.8 often missed badly enough that the eyes were OOF, either front or back focused randomly. I’d put my keeper rate down around 50%. Not usable at all.

Later still I bought a 7D and experienced the same problem. Since I had sold my 5D1, this time I decided to use AF microadjust. I bought a LensAlign and set to calibrating all my lenses. The 300/2.8IS was off consistently by a setting of +1 or +2. The 100-400 was off by +2. The 100/2.8 USM was spot on. The Tamron was off randomly by so much that the range of the adjustment wouldn’t be enough to correct it, if the error was even consistent. Which it wasn’t… I tested by defocusing near and far then letting the camera AF. There was no rhyme nor reason to the results that I could gather.

My current body is a 5D2, and I was saddened to see that it wasn’t just my crop bodies that were problematic. The Tamron has the same problem on the 5D2 as well. A lens that was perfect almost every time on the 5D1 is inconsistent on any body newer than a 5D1. (For what it’s worth, my old 350D never had a problem, either.) I have since bought a 24-70/2.8 (a new one) and my focus inconsistencies are gone. Unfortunately that means I now need to carry around a heavier, more expensive, and less sharp lens.

My hypothesis from this is that sometime after the 5D Canon changed their AF algorithms enough that some older or third-party lenses don’t focus consistently. Long story short, I think there’s something to your thought that the age of the equipment might have something to do with accuracy. Not because the equipment is old, but because newer equipment has newer algorithms that work differently.

Have any 5D1s or older bodies lying around to test, Roger? 🙂
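Chris’s experience illustrates a useful distinction: microadjustment shifts where the camera focuses on average, so it can fix a consistent offset but not random scatter. The sketch below classifies a series of repeated tests in which each entry is the AFMA value that would have centered that particular shot (for example, as read off a LensAlign-style target). The ±20 range matches Canon’s in-camera microadjustment; the “scatter larger than the offset” threshold is a hypothetical rule of thumb.

```python
from statistics import mean, stdev

AFMA_RANGE = 20  # Canon's in-camera AF microadjustment runs from -20 to +20


def microadjust_verdict(best_settings, limit=AFMA_RANGE):
    """Classify repeated focus tests of one lens on one body.

    best_settings: for each test shot, the AFMA value that would have put
    that shot in perfect focus (needs at least two shots).
    """
    offset = mean(best_settings)    # what microadjustment can correct
    scatter = stdev(best_settings)  # what it can't
    if abs(offset) > limit:
        return "out of range"
    # Hypothetical threshold: scatter bigger than the offset (or bigger
    # than one step) is treated as inconsistent focusing.
    if scatter > max(abs(offset), 1.0):
        return "inconsistent"
    return f"set {round(offset):+d}"
```

A lens that is consistently off by +1 or +2, like Chris’s 300/2.8 IS, comes back with a setting; one that lands all over the place comes back “inconsistent,” and no microadjustment value will help it.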

Roger Cicala ·

Chris,

I still miss my 5D Classic. I loved that camera till I ran the shutter out. But I agree completely with your assessment: new algorithms probably change the way a third party lens behaves, but the manufacturer probably makes a point to include all of their own lenses in the new setup.

Roger

Gary ·

Perhaps one other line or arc on the graph–that point of resolution at which it’s not possible to detect a difference with the unaided eye. That is, are all the resolution differences on the above graphs occurring substantially above what the eye can see anyway? I’m reminded of extraordinarily good sound gear–should you pay for headphones that produce a sound range most humans can’t hear?

Roger Cicala ·

Gary,

I think there’s a lot of truth in that. One thing that impressed me was that the really bad lenses shown in the graphs could be detected by looking at the images on a monitor, but you had to look rather carefully. For the general group of lenses, I think it would take some serious pixel-peeping or lab-type measurement to see the difference.

Roger

Dan Tong ·

This is the most intelligent and valuable article about photographic equipment to be posted anywhere. It’s the kind of work dpreview should have done years ago. Congratulations for doing this.

Dan

John Geisendorfer ·

Excellent article and information. We see so much user information on the web that is not backed by good data, or that we fear is influenced by those selling something. So now I know there really are bad copies, but that they are very likely really bad. I also know that most are fine and that my trying to nitpick the best is questionable. Thanks, and I look forward to more.

Craig Luna ·

Roger,

Since you have a unique perspective given the quantity of returns and service you probably deal with, could you please answer a question.

When you send a lens in for service, especially to Nikon, what is the typical fix for out-of-spec focus? As far as you can tell, do they replace and tighten up the gears to reduce backlash, adjust the optics to zero, or reprogram the lens with a recalibration?

Thanks, and I hope you’ve been fortunate enough to learn which approach they take!

Roger Cicala ·

Craig,

Our experience is that usually if we just send a lens in saying it’s soft, all we get is an electrical adjustment and “lens in spec” reply. If we describe the problem carefully, like “lens is softer on the left side when focusing at 8-20 feet, less so at infinity” we tend to get a good repair.

Grant Zabro ·

As informative as these plots appear to be, in reality they’re mostly useless, as error bars are not shown on any of the data points, and we have no idea how significant the differences between the various measurements are. Can you really distinguish between a resolution of 750 and 751? 750 and 760? When you average across the lens, are you accounting for both systematic and statistical errors? How reproducible is the setup? I’m sure that there are real differences between lenses and camera bodies, but without a proper error metric, it’s impossible for us to tell how significant these differences really are.

Merrick ·

So can we conclude that because there’s variation from live view focusing that we could expect focus variation on cameras that use the image sensor for focusing, such as Sony NEX or micro 4/3 cameras?

Roger Cicala ·

Merrick,

I can’t say for certain, but my gut feeling is there would still be variation, although much less.

Roger

Kjetil Johannessen ·

Hi

Good work, and interesting. The only part I would like to have seen a little more of is how good the measurement itself is. I would propose using the same camera and lens, but setting up that combination for testing at least 8 times, as if it were a new combination each time. To be sure, disconnect the lens from the camera each time and, most importantly, realign the camera with the test target as you would for a new camera. This would give a baseline for the measurement uncertainty itself.
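Kjetil’s proposal is essentially a repeatability-and-reproducibility study. Given several complete setups of the same camera and lens, each with the same number of repeated shots, a one-way random-effects breakdown separates shot-to-shot noise from the extra variation added by remounting and realigning. A minimal sketch, assuming equal shots per session; it’s a standard textbook estimator, not the procedure Lensrentals actually used.

```python
from statistics import mean, variance


def repeatability_reproducibility(sessions):
    """sessions: list of lists; each inner list holds repeated shots
    (e.g., LP/PH) from one complete setup of the same camera and lens.

    Returns (within_sd, between_sd):
      within_sd  - shot-to-shot noise without touching the setup
      between_sd - extra variation from tearing down and realigning,
                   estimated as in a one-way random-effects ANOVA
    """
    n = len(sessions[0])
    if n < 2 or any(len(s) != n for s in sessions):
        raise ValueError("use the same number (>= 2) of shots in every session")
    within_var = mean(variance(s) for s in sessions)
    # Variance of the session means includes within-session noise / n;
    # subtract it to isolate the setup-to-setup component.
    between_var = variance([mean(s) for s in sessions]) - within_var / n
    return within_var ** 0.5, max(between_var, 0.0) ** 0.5
```

If `between_sd` comes out small next to `within_sd`, the setup is reproducible session to session, which is what Roger reports below.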

Roger Cicala ·

Kjetil,

I don’t have the figures at hand, but if you look at the graph in the addendum, the new lenses were tested on different days (with a fresh setup) than the older lenses. It took a month of developing our techniques to get reproducible results, but once we had the proper support and alignment equipment in place, the results have been very reproducible from session to session.

Roger

Darrill Stoddart ·

Roger,

Thanks for sharing your test results. Though I have no similar empirical evidence, I remain convinced that a given lens on a given body can perform differently on different days. I photograph fast-moving sports (rugby, athletics) using a Canon 70-200 f/2.8 IS II, and some days I find myself having to sharpen the JPEGs, while on other days the raw files come out razor sharp.

It might be the weather or how much coffee I had that morning, but the results on one day are consistent for that day and not necessarily consistent with the shots from another day. Or it could just be my imagination ;-)

The lens is micro adjusted monthly.

Roger Cicala ·

Darrill, I believe that to be true, at least in my hands. I know temperature and humidity can have a very real effect, but I think there are other variables, too.

Roger

Clay Taylor ·

Roger –

Informative and amusing, as always. Great job.

Given that I am a photodinosaur who normally manually “tweaks” (via the groundglass focusing screen) whatever AF setting my camera makes (I would have to resort to reading glasses to use the LCD screen’s 10x MF assist), I wonder about the accuracy of THAT system.

What would the graph spread be for a lens that was Manually Focused using only the groundglass screen, compared to the AF and 10x MF Assist clusters for that same lens?

Michael Kasper ·

Fantastic article. Thanks Roger.

Do you guys do auto-focus calibration for people’s personal gear? That is, if I send you my camera & lenses, will you calibrate them and let me know if anything is amiss? If not, this could be a nice bolt on service for Lensrentals!

BTW, the 8-15 Fisheye I rented from you guys a month ago was great. I would have bought it from you at the end of the rental period. Rent to own is another idea… Credit a portion of the rental costs? Perhaps only on certain lenses that aren’t hard to get.

Roger Cicala ·

Michael,

We don’t right now, we just don’t have enough staff during busy season, but we may offer it as a service during the winter.

Thanks,

Roger

Ruy Penalva ·

Roger,

I think these results are expected, chiefly in lenses but also in cameras. In industry, as in nature, nothing repeats identically, except maybe DNA. Quality control at camera and lens makers probably does not include photographic tests, only electronic ones. Congratulations on showing what I already felt.

Mel Gross ·

Roger, you mentioned microadjustment. I know from my own experience that it is time-consuming. But what might we see in sharpness values, as well as other values, once that is done? Is it possible that these numbers would change, with worse lenses becoming better than the better lenses were without it? I can’t get multiple lenses to try that.

Roger Cicala ·

Mel,

It was outside the main point of this article, but when we run a batch of lenses through on autofocus on a given camera, there are always a few that are consistently and badly back focused or front focused, and those would definitely be corrected by microadjustment.

Lonny Smart ·

Thanks, very interesting. Now I wonder what would happen if you threw microadjustment into the mix.

David Stock ·

Great article; thanks.

One thing I’ve been wondering lately is whether contrast-detect autofocus, once seen as a cheap but second-rate option, is actually the future.

As higher-end cameras start using it (either as their only autofocus system or for live view), and as advances are made in contrast-detect speed, it seems worth investigating how well this system compensates not only for lens sample-to-sample variation but also for camera calibration issues and focus shift.

Would there be as much difference among your samples if they were focussed using contrast-detect autofocus? Can contrast-detect autofocus compensate for the relatively loose tolerances of the current manufacturing processes? What are the prospects for really fast contrast detect systems in the future?

I’ve noticed that contrast-detect autofocus, when available, often makes better use of my lenses optically than the phase-detect version, even on the same camera. In fact, I sometimes use contrast detect autofocus to calibrate my lenses for phase-detect focussing.

Roger Cicala ·

David,

I totally think it is, or perhaps the idea of phase detection using the actual image sensor. But anything that eliminates focusing using one sensor and shooting the image with another has got to reduce variability.

Roger
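To illustrate why measuring focus on the imaging sensor removes one source of variability, here is a generic contrast-detect hill-climbing sketch. It is not any manufacturer’s algorithm: `contrast_at` is a hypothetical callback that moves the focus motor to an integer position and returns a contrast score computed from the image sensor. Because the score comes from the same sensor that records the picture, there is no offset between a separate AF sensor and the image plane to calibrate; what remains is noise in the score and the stopping rule.

```python
def contrast_detect_af(contrast_at, start, step, min_step=1):
    """Hill-climb on a contrast score measured by the imaging sensor itself.

    contrast_at(position) is a hypothetical callback returning a contrast
    score at an integer focus-motor position. The search keeps moving while
    contrast improves; each time it overshoots the peak, it reverses and
    halves the step (the "hunting" you can see in live view).
    """
    position = start
    best = contrast_at(position)
    direction = 1
    while step >= min_step:
        candidate = position + direction * step
        score = contrast_at(candidate)
        if score > best:
            position, best = candidate, score  # still climbing
        else:
            direction = -direction  # overshot: turn around...
            step //= 2              # ...and take smaller steps
    return position
```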

Mount Spokane Photography ·

Thanks for another interesting article. I guess I knew that lenses and bodies acted the way you describe; I’ve managed an aerospace laboratory, and results always vary.

Still, it’s very good to see some data that confirms my gut feeling about lenses and bodies. I have seen the variation with one particular wide-aperture Canon zoom: I went through five copies before giving up on finding one that I thought was to specification. I find that most of my lenses are very good once the AF is tweaked, but I also feel that focus accuracy sometimes varies with distance. That’s something else you might look at one day.

Dave Sucsy ·

Much thanks, Roger. Your blogs, posts, and thinking are great and very much appreciated. And I will continue to show my thanks by renting from you whenever I have the need.

Dale Blevins ·

Nice work. I know it’s got to be excruciatingly tiring, and for that, we thank you! I’m reminded of when I used to target shoot. Every gun (camera) has a preference for a style and weight of bullet (lens) and a particular powder/charge weight. The trick for a competition shooter (pro photog) is to fine-tune his tool of choice to perform at its optimum so that the shooter himself can perform at his. It truly is a matter of mass-produced products and variation, but with today’s cameras being capable of microadjustments, it pays to spend a little time fine-tuning your equipment, not only to make sure the pieces are working together but so that you know the limitations of your gear and are thereby able to get the most out of it.

That said, I personally do not like returning anything unless it is absolutely necessary. So I won’t be one of those in the perpetual search for the optimum lens for my camera body. This kind of report helps me to understand the necessity of dialing in the correct micro-adjustments in each body for each lens and then using what you have to create the best magic possible.

Thanks for a job well done. I sincerely hope this helps many more people understand their equipment and get busy shooting not swapping.

cj ·

It would be interesting to see how the EXIF focus distance compares to the real distance and sharpness in this study.

Bob ·

Without error bars, it is impossible to tell if any of the results are statistically significant.

Roger Cicala ·

Bob,

This is just a quick demonstration, not a scientific study. However, I’m not aware of a method of placing error bars on actual data points.

Roger

David ·

Love the articles. I don’t suppose you’ll take a stab at variation among zoom lenses? Could we assume that a given lens might be sharper at some, but not all focals, or might we expect a lesser copy to be uniformly worse?

Another of the more interesting attributes you can discover with Imatest is T-stop transmission. The variability here among ostensibly similar lenses would make for a nice graph.
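For reference, T-stops relate to f-numbers through the fraction of light the lens actually transmits: T = N / √t, where t is the transmittance. The sketch below assumes you already have a transmittance figure (how Imatest would derive one isn’t covered in this thread) and also expresses the loss in stops.

```python
import math


def t_stop(f_number, transmittance):
    """T-number of a lens at `f_number` that passes `transmittance` (0-1) of the light."""
    if not 0 < transmittance <= 1:
        raise ValueError("transmittance must be in (0, 1]")
    return f_number / math.sqrt(transmittance)


def light_loss_stops(transmittance):
    """How many stops darker than its f-number the lens actually meters."""
    return -math.log2(transmittance)
```

A lens passing 90% of the light loses about 0.15 stop, so copy-to-copy differences in transmission would likely be small next to the resolution spreads shown above.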

Bob ·

If by “not a scientific study” you mean the results are meaningless, I agree. But you seem well intentioned, and I hope you understand that I’m not nitpicking here.

You could create basic error bars by measuring the same copy of the same lens, same setup, multiple times and then calculating the standard deviation of your measurements.
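Bob’s recipe translates directly into code. The sketch below returns the mean, the sample standard deviation (the spread of individual shots, appropriate around a single-shot point), and the standard error of the mean (the uncertainty to use if you plot the average of the repeats instead). The function name is illustrative.

```python
import math
from statistics import mean, stdev


def error_bar(repeats):
    """Summarize repeated LP/PH measurements of one lens/body/setup.

    Returns (mean, sd, sem):
      sd  - spread of individual shots; +/- sd shows single-shot uncertainty
      sem - standard error of the mean; roughly +/- 2 * sem is a 95% band
            around the mean (a Student-t factor is more exact for small n)
    """
    if len(repeats) < 2:
        raise ValueError("need at least two repeated measurements")
    sd = stdev(repeats)
    return mean(repeats), sd, sd / math.sqrt(len(repeats))
```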

James ·

Excellent, excellent article. Your description of those who wanted the top 3 copies of a particular lens reminds me of Steinway’s headquarters in NYC, where there’s a special room in which the world’s great pianists get to hand-pick the top pianos (out of several $100,000+ pianos!) to get the one that sounds just ‘right’.

When I first read about Fujifilm’s choice of a non-interchangeable fixed lens in their X100, they justified it by stating that the camera’s sensor was customized for that lens so that light strikes the sensor at an angle as close to perpendicular as possible (more important for digital sensors than for film). Were Fujifilm’s engineers possibly also trying to avoid the performance variations that might exist with interchangeable lenses, as described in your article?

I’d love to learn more about this topic, especially one of the above comments’ thoughts on how contrast detection autofocus systems might minimize the issues described in your piece. Again, thank you for an excellent article.

Novak ·

It is interesting: when Sony bought Minolta’s camera division, one of the Sony directors said he was really surprised at how manual the whole manufacturing process is and at how many levels things can go wrong with current AF systems. Back then he claimed that Sony would tackle that. Now, with the SLT and NEX series, we do see the landscape changing after a long time, but the question is: is it really better? Can we count on more consistent results across the camera/lens system? Any experience with SLT camera/lens combos?

mantra ·

Hi

nice article

may i ask a question ?

Did the earthquake and tsunami affect the build quality and quality control of Canon lenses?

thanks

Roger Cicala ·

We haven’t seen any difference between recent and older lenses, but we don’t have any way to tell which lenses were made after the tsunami.

Roger

alek ·

WOW … yet another great article Roger – super job again. BTW, you say

“The variation among “good copies” could probably be detected by some pixel peeping. For example if you examined the images shot by the best and worst Canon 100 f2.8 IS L lenses you could probably see a bit of difference if you looked at the images side-by-side (the images I took on my test camera).”

You might consider putting those two images side-by-side … i.e. show us graphically how (little?) difference there is. Plus toss up the bad copy. Obviously full-res for all of ’em.

The suggestion to do error bars is a good one … although adds another dimension so lots more testing. The 3rd figure down (suggestion: number your figures/graphs) titled “Copy 7, repeatedly autofocused (blue diamonds) and manually focused (green triangles)” does exactly this. Encouraging that the spread is pretty tight to my untrained eyes.

It’s late at night, but I’m assuming that “autofocused” meant using phase AF and “manually focused” actually means using contrast AF (rather than the Mark I eyeball and manually rotating the focus ring). If I’m wrong, ignore the next paragraph.

The data shows that contrast AF squeezes the best performance out of the lens, which I don’t find surprising. Although I can’t help but wonder if a little microfocus adjustment might have helped here, i.e. how much would a ±1 have moved those phase-AF data points?

Voe ·

This is why Leica lenses are so expensive. Because there is no sample variation. Everything is built and tested to the highest standards.

Andre ·

Seriously, do you expect to get any accurate focus results using those bodies?

Why not try the same methods with a brand of camera that is reliable in the focus dept.

Oleg ·

Roger, thank you for the very informative article.

Do you know that Canon has very sophisticated calibration software, which can calibrate each and every autofocus sensor, and for zoom lenses can even specify different MFAs depending on the zoom setting?

Also, different autofocus sensors in one camera can be misaligned (which gives different focus results when the camera focuses with different focus points), and that Canon software can align them evenly. MFAs can be recorded for a specific camera/lens combo based on the lens’s internal ID rather than the lens model name, which is what the standard in-camera MFA uses.

I saw how service engineers calibrated a Canon 40D + Canon 50L combo and I wished I had that software; it is really not hard to use and very powerful. Unfortunately, it is not public: it is provided only to authorized Canon service centers. If Canon provided this software for public use (it even has good enough help documents), all those problems would be gone, I think. With that software, almost any photographer could test autofocus and find, for instance, that the center horizontal autofocus sensor should be adjusted and the other sensors should not, which was the case with that 40D+50L combo. The standard in-camera MFA does not allow calibrating one autofocus sensor only; it adjusts all focus points at once. If this is new info for you and you have any questions about how Canon service calibrates lenses, just send me a message.

Roger Cicala ·

Oleg,

I know of the factory calibration software, but this is a very pertinent point. It can do a much more thorough job than we can with microadjustment. My understanding is that it requires specific test targets and the setup is too expensive for smaller repair shops to afford, but I totally agree: it would be an awesome tool to have available.

Basco ·

Very good review, thank you for sharing it with the rest of us. Keep up the good work.

Stas ·

Thanks for the excellent article! I just miss one thing: a test run on different samples, but with manual focussing. I noted that the pictures taken with manual focus form a tighter group and stay well above the ones taken with autofocus on the same lens. If you take 4-5 shots with MF on each lens, choose the best one, and make a graph, that should be closer to an inspection of the lens-to-lens difference. Continuing your point about having too many variables, we should list not only the lens and AF but break them down further into body-to-lens protocol, autofocus optics, and the in-camera autofocus algorithm, meaning that even body-to-body with the perfect lens would produce a cloud of results.

Lynn Allan ·

Good article.

I’d be interested in charts/graphs where you took an average performing combination of lens+camera, and used micro-focus-adjustment to optimize. My impression is that the combination should improve to a greater or lesser degree, and that there would be more uniformity.

Or not?

Sandra ·

Hey Roger, help me… Problem in Canon lenses?… Not in Nikon?… Should I switch to Nikon?

Roger Cicala ·

Sandra – Canon lenses were used for the example, but it is pretty similar with all brands.

Roger

R ·

Merrick, Live View was manual focus – so he could magnify to get sharper manual focus than with an optical viewfinder. The article does not test the difference between phase detect autofocus and contrast detect autofocus. I think phase detect would be more accurate, but it could vary from model to model.

AJ Borromeo ·

I am impressed Roger!! This explains why I have always rented my lenses from you. Always reliable and always professional and friendly. Keep up the great work!!!

Bart ·

Hi Roger,

I really love your articles, and especially the position you take; no conclusions, no big statements, just proper testing and modest judgements. Anyhow, I have a question; Can you think of a reason why manufacturers won’t let the CDAF (Contrast Detect AutoFocus) be used to calibrate the PDAF (Phase Detect AutoFocus)? I could imagine mounting a new lens, focusing with CDAF on a test-chart at different distances, with which the PDAF is calibrated. This would combine the pro of CDAF, of focusing on the image-plane, with the speed of the PDAF.

Kind regards and thanks for your great articles!

Bart

Roger Cicala ·

Bart,

I can’t think of any good reason they don’t do that. It seems to me it would be a simple software / firmware add on. And would be amazingly useful. I’d also love to be able to flip a switch and get to choose phase detection AF when I needed speed and contrast detection for accuracy.

Roger

Oleg ·

Roger,

Regarding Canon calibration software:

Yes, the service center uses a special setup, but feature-wise it is basically vertical and horizontal focus targets with a ruler to measure focus error. First, they focus on the targets, then replace them with the ruler and take a shot. After that, the software analyzes the contrast to determine the area of best focus, and then adjustments are made.

So this special equipment can be replaced by a focus target (vertical or horizontal lines printed on paper) and a ruler. It will work more slowly than the special equipment, but it will work. The only problem is the software.

Canon is very serious about it. Installation is tied to a machine, it will not work on another machine and Canon manages keys for each installation. That is why that software is not well-known. It can run only on authorized machines.
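For readers curious what "analyzes the contrast to determine the area of best focus" might involve, here is a minimal Python sketch under stated assumptions: local contrast has already been measured at evenly spaced ruler marks, and the peak is refined with a three-point parabola. The function name `focus_error_mm` and the sample readings are hypothetical; Canon's actual service algorithm isn't public.

```python
# Hypothetical sketch of the ruler analysis described above; this is NOT Canon's
# service software. It assumes local contrast has already been measured at
# evenly spaced ruler marks.

def focus_error_mm(positions_mm, contrast):
    """Estimate where focus actually landed along the ruler.

    positions_mm: evenly spaced ruler positions in mm; 0 is where the focus
                  target stood, negative is nearer the camera, positive farther.
    contrast:     local contrast measured at each position.
    A positive result suggests back focus, a negative one front focus.
    """
    n = len(contrast)
    if n == 0 or n != len(positions_mm):
        raise ValueError("positions and contrast must be non-empty and the same length")
    # Sample with the highest contrast
    i = max(range(n), key=contrast.__getitem__)
    # Refine with a parabola through the peak and its neighbours, if both exist
    if 0 < i < n - 1:
        left, mid, right = contrast[i - 1], contrast[i], contrast[i + 1]
        denom = left - 2 * mid + right
        if denom != 0:
            step = positions_mm[i + 1] - positions_mm[i]
            offset = 0.5 * (left - right) / denom  # in units of one ruler step
            return positions_mm[i] + offset * step
    return positions_mm[i]


# Made-up readings: the sharpest zone sits a little behind the target
print(focus_error_mm([-10, -5, 0, 5, 10], [0.2, 0.5, 0.7, 0.9, 0.6]))
```

With these made-up readings the estimate lands about 4.5 mm behind the target, i.e. back focus; the parabola step just lets the answer fall between ruler marks.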

Guthrie ·

Another fantastic article! Thanks for keeping the information flowing, and public! You do a great service to the community.

Will this be making its way over to canonrumors?

I’m curious as to which zoom you need “more complete numbers” to talk about. Should be a good read, I’m sure!

Keep up the great work,

Cheers,

alek ·

Ditto what Bart and Roger said about using contrast-AF to auto-microadjust phase-AF … surprising/disappointing that the manufacturers haven’t provided this capability.

P.S. Any chance Roger of showing us some full-res images that better show the real-world impact of focusing/lens variability?

Udi ·

Roger,

Your tests are very interesting. You have tested a set of identical lenses against one body, and then a single lens against multiple identical bodies.

My question is: can you actually use this to select a “good” body and a “good” lens, or is this a question of the combination of a specific body and lens?

Asked otherwise: if you run the same group of lenses against a different camera body than the one you just used, will the top-performing lens remain the same one, or will a lens that wasn’t so good in the previous test become a much better lens because the specific body and lens cancel out each other’s errors?

Roger Cicala ·

Udi,

It’s easy to find a good camera-lens match, but the lens that’s sharpest on Camera A is not likely to be the sharpest on Camera B. If I run the same batch of lenses on another camera (which we’ve done), the overall data points will be similar, but the lenses will shift around within the grouping.

Roger

Les Burns ·

Great article.

I remember years ago, at a large camera store in Chicago, they offered special selections of new Goerz gold dot lenses (supposedly the best) for a premium; I always wondered if it was worth the money. At least I suppose it kept you from getting a dog.

When I ordered M300 Nikkors for my Gowlandflex (a 4×5 TLR), Olden got them to match the focal lengths tightly. Judging from what you said about Canon’s focus checking, it doesn’t seem as if focal length should be a factor in lens quality variation.

I also remember hearing Leitz had a series of cams (or maybe an adjustment) to match variation in focal length on their rangefinder lenses.

Chris ·

One of the most useful, informative articles I have ever read! Thank you sooo much for this. 🙂

John Dunne ·

A great read, thanks for being a beacon of sanity 🙂

eric ·

Geesh, more people stealing my idea to use contrast detection to calibrate phase detection! Just kidding, but I posted that idea many months ago. Canon may simply not have thought of doing so… they could even have a firmware routine that almost totally automates the process (the user would be prompted to change focal lengths).

John Hartigan ·

I love this article! Best I have read on any photography tech subject in years. Before CAD and CAM, when cameras and lenses were designed and manufactured by craftsmen and not computers, we said the difference between a 60 dollar lens and a 3000 dollar lens was how many ended up on the trash pile instead of on store shelves. A great lens was rigorously checked before release, which is probably still done in the cine world.

Thank you Roger

George ·

Thanks for this article… a great read. Maybe I missed this in the article, but were calibrations performed on the cameras before the tests? I guess my root question is: are these variations after calibration, or out-of-the-box variation?

Roger Cicala ·

George,

Those data points are random: lenses are not microfocus adjusted to the test camera. Our goal is to get sort of a worst case scenario since we’re trying to see how they’ll work for our customers.

Roger

tlinn ·

Hey Roger,

Just another thank you for a great article. You are really in a unique position to provide insights like this and I certainly appreciate your doing so.

Tim

J. Skinner ·

Great article. Written better than most professional engineers could do.

I really liked your filter tests too.

I understand your graphs, but it takes some mental extrapolation to realize how tightly grouped the data points are.

If one of your graphs started at zero on both axes, it would appear to the casual reader that there is much less variation between the best and worst lenses, and they would be less likely to send one back to the seller.

Roger Cicala ·

That’s a very good point on the graphs: I was zooming the axis a bit to emphasize the difference, but probably should have started with a zero axis graph to show how tight the group really is.

Thanks,

Roger

Marc Beckwith ·

An outstanding article. I especially appreciate the lack of sweeping generalizations, allowing your research to speak for itself. You’ve provided a great service to the pixel peepers amongst us!

John Jovic ·

Nice job Roger, keep it up. You’re in a special position to be able to do this kind of testing, and of course you don’t have to share your efforts with anyone, so it’s much appreciated that you do.

JJ

Steve ·

Great work on a subject that has been a thorn in my side for many years. I tend to shoot wide open with very fast lenses and have been tortured by AF misses. I think it’s a great argument for digital viewfinders, which I believe are the best way to achieve consistently correct focus.

Philip ·

Great article. Really good to see the variability of AF exposed like this. I had a 70-200 2.8L that I sold earlier in the year as I was never happy with its focussing. It went back to Canon CPS 3 times but they could never find anything wrong with it. Bought a MkII and it’s great. Go figure!

John Kennekam ·

Many cameras now have fine-tuning as an option, but the whole process is labourious. Why not simply automate the process for the customer? It could work as follows:

1. The camera and lens are mounted on a tripod.

2. A focus pattern sheet is downloaded from the vendor’s web site and printed out.

3. The camera is pointed at the pattern sheet.

4. Now the magic happens. The camera automatically takes a series of images at fine-tune settings ranging from -20 to +20.

5. The camera analyses which shot is the sharpest (using existing AF software) and automatically saves the setting for that lens.

The above process is not new; it is just that the user must do steps 4 and 5 manually, which takes forever if you have a lot of lenses.
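Steps 4 and 5 above amount to a sweep-and-pick-the-best loop. A minimal Python sketch, assuming a hypothetical `shoot_and_score` hook that sets the fine-tune value, autofocuses, shoots, and returns a sharpness score (no camera firmware we know of exposes such a function):

```python
# Hypothetical sketch of the automated fine-tune sweep. `shoot_and_score` stands
# in for "set AF fine-tune to this value, autofocus on the printed target,
# shoot, and return a sharpness score"; it is not a real camera API.
from typing import Callable, Dict


def best_fine_tune(shoot_and_score: Callable[[int], float],
                   lo: int = -20, hi: int = 20, shots_per_step: int = 3) -> int:
    """Return the fine-tune value whose shots score sharpest on average."""
    if shots_per_step < 1 or lo > hi:
        raise ValueError("need at least one shot and a non-empty range")
    scores: Dict[int, float] = {}
    for value in range(lo, hi + 1):
        # Several shots per setting, because AF varies shot to shot even on a tripod
        shots = [shoot_and_score(value) for _ in range(shots_per_step)]
        scores[value] = sum(shots) / len(shots)
    # On a tie, prefer the smallest adjustment (value closest to zero)
    return max(scores, key=lambda v: (scores[v], -abs(v)))


# Simulated lens/body pair whose sharpest setting happens to be +6
print(best_fine_tune(lambda v: 100 - (v - 6) ** 2))
```

Averaging a few shots per setting is a design choice added here, not part of the five steps; it follows from the article's point that AF varies even under ideal conditions, so a single lucky shot shouldn't decide the setting.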

jamesm007 ·

Oleg

That’s how the Pentax service center tunes the focus on bodies as well. They don’t use charts. They put the camera on a special machine and turn screws on the bottom until the PC’s monitor, which is loaded with a special program, tells the tech the AF is good.

Just a few months ago, when my K20D had almost 50,000 clicks on it, the rear e-dial started to act up and I sent it to Pentax Service in the USA. The tech checks all specs on his bench before the camera comes back to me. He found the AF out of whack and adjusted it.

Now you must understand I thought the AF on my body was perfect. I would nail BIF (birds in flight) like they were standing still. No problems AF fine-tuning my lenses, although some lenses, like the kit lens, needed +8 to be decent. So as far as I was concerned, my K20D’s AF was perfect.

When I got it back and took pictures with all my lenses, the first thing I noticed was that the kit lens was better. So I started my very tedious procedure of setting AF fine-tuning. It’s hard because, as the article stated, there is AF variance even under perfect conditions. It took almost a week for me to set the AF fine-tuning on my DA55-300mm (I do work).

With AF tuning done, the kit lens now needed 0 AF fine-tuning; before, it needed +8. The DA55-300mm needed +5; before, it needed 0. Those stand out to me. The others were about the same. The IQ of all the lenses stayed the same from what I can see, except the kit lens (DA18-55mm WR), which got better.

My conclusion is that first-time buyers are in for a long road of learning, that these articles should be a must-read for newbies to take to heart, and that the maintenance schedule in the owner’s manual should at least be looked at. Many don’t even know dSLRs are supposed to have maintenance.

I hope the author here can one day speak, from his unique standpoint, on maintaining dSLRs and on whether AF falls out of whack over time.

Ros ·

I get much more consistent AF and hits with my GH1’s CDAF than I did with my 40D. I don’t shoot action, so I’m never going back to PDAF, especially after all the trips to the lab for AF calibration (lenses and bodies).

Thanx for the article, keep em going!

DaveD ·

What is the rate of ‘bad copy’ lenses?

I’m not overly concerned about the minor variations between the good copy lenses. But as a lone consumer, how do we decide if we have purchased one of those ‘bad copies?’ A little worrisome that you have 2 of those in this relatively small sample.

In your experience, just how rare are the bad copies? Would a casual comparison with one’s other lenses detect one of these bad apples?

Roger Cicala ·

Dave,

Remember, with these samples I purposely included some that had failed our standard inspection process (optical test-charts, etc.) so we would get some perspective on the much bigger difference between a bad lens and just sample variation among normal lenses. Bad copies, out of the box, are rare, but they happen. Maybe 1%, but some variation depending on brand, if it’s a newly released type of lens, is it a zoom, does it have IS, etc. We’re going to have more bad copies than that because our lenses are used heavily: a good lens that gets dropped becomes a bad lens most of the time.

Roger

Jack C ·

Nice article… the charts summarize the information very well.

I would be very interested to see a similarly in-depth analysis of how Contrast Detect AF compares with Phase Detect AF in terms of accuracy and consistency of results.

-JC

Robert S ·

Roger,

Thanks for a helpful article. How often should one send lenses in for service? Recently, I noticed softness in some images and thought I needed to buy primes. I sent my 24-70mm zoom and 5D Mark II back to Canon and they came back noticeably improved. Canon’s note that they repaired the mechanical linkage reminded me, as several writers above did, to follow the camera’s maintenance schedule.

Roger Cicala ·

Robert,

I don’t have a blanket answer, but you are providing me a nice intro to an article I’m working on now: looking at how various lenses perform in relation to their age. It’s interesting because the one lens we see deteriorating over time is the 24-70 f2.8: the zoom linkage / seals tend to wear out. I assume (but don’t know for certain) it has to do with a heavy internal barrel that causes wear and tear with long-term use.

dan ·

I personally feel we have been duped by Canon L lenses. Almost every good nature photograph I have seen was taken using a tripod.

I question the need for IS on any lens over 300mm, and the only reason the 300mm 2.8 is so sought after is because Bob Atkins published his unscientific study for years. Canon should be paying Bob Atkins royalties.

I don’t blame Bob, but his gullible readers are another thing. Maybe it’s human nature. The 300mm 2.8 seemed like the perfect lens. It used to be reasonably priced; not anymore.

Consumers won’t buy the most expensive lens or the least expensive; it’s usually the one in the middle. The 300mm 2.8 was that lens, until the internet.

Oh yeah, people: B&H is not the only store in the world.

When I saw the price of the 300mm 2.8 zoom from $4,200 to close to 8 grand, I started to boycott the company. It’s pure greed.

dan ·

If you’re new to nature photography, remember this: there’s a reason they give you a nice box with big Canon lenses. That’s where they stay most of the time. Buy a long prime and a tripod, get outside, and take pictures.

Too many photographers scour the internet looking for a technical edge and really don’t take many pictures. I used to be one of those boobs.

Thomas Andre ·

This isn’t the same issue, but it is related. I have a Tamron 18-270 PZD and a Canon 55-250 lens (for smaller digital sensors). At 18mm the Tamron matches my 18-55mm Canon kit lens (for a 20D) in image size. At full extension, however, the Canon at 250mm produces a more magnified image than the Tamron at 270mm. Have you ever checked the magnification accuracy?

Roger Cicala ·

Thomas, we do check that and all of the zooms (and a few primes) vary a bit in the actual, versus stated, focal length. For example the Canon 24-70 zoom is actually 25mm to 67mm, the 70-200 is 74mm to 195mm, and the Sigma 50-500 actually is a 55mm to 470mm. I think the rule of thumb is they should be within plus or minus 5% but I’m not certain that’s always true.
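A quick worked check of the figures above against the ±5% rule of thumb, in Python. `deviation_pct` is just a helper for this illustration, and the ±5% threshold is Roger's tentative rule of thumb, not a published specification:

```python
# Worked arithmetic on the measured focal lengths quoted above.
# The ±5% tolerance is only the rule of thumb mentioned in the reply.

def deviation_pct(stated_mm: float, measured_mm: float) -> float:
    """Percentage by which the measured focal length differs from the stated one."""
    return 100.0 * (measured_mm - stated_mm) / stated_mm


examples = {
    "Canon 24-70": [(24, 25), (70, 67)],
    "Canon 70-200": [(70, 74), (200, 195)],
    "Sigma 50-500": [(50, 55), (500, 470)],
}

for lens, ends in examples.items():
    for stated, measured in ends:
        pct = deviation_pct(stated, measured)
        verdict = "within" if abs(pct) <= 5 else "outside"
        print(f"{lens} at {stated}mm: measured {measured}mm ({pct:+.1f}%, {verdict} ±5%)")
```

On these numbers the 24-70 stays inside ±5% at both ends, while the 70-200's short end (+5.7%) and both ends of the 50-500 (+10% and -6%) fall outside it, which fits the caution that the rule isn't always met.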

John Angulo ·

For these plots, how do you compute the average resolution of the entire lens? You call it a “weighted average”; how is it weighted? In a simple unweighted average of multiple measurements across the image, a fuzzy corner due to decentering might not have much influence on the result, even if some of the measurements capture it. But a fuzzy corner or side is just what makes the difference between good and bad copies for critical purposes. Would it be possible to plot the lowest measured resolution on the vertical axis, wherever on the image it occurred? It might be interesting to see the scatter among different copies in this case.

Roger Cicala ·

Hi John,

The weighted average uses center point x 1, 6 mid points (which include the top and bottom center edges) x 0.75, and corners and lateral edges x 0.5. One bad corner does drag the weighted average down significantly, but more importantly we hardly ever see one bad corner: usually it’s a bad side (or top/bottom), and occasionally it’s contralateral corners both bad (certain kinds of tilt can cause it), and in such cases the weighted average is awful. In our testing we flunk far more lenses on the weighted average than on center sharpness. Not surprising, really, when you think about how much more a tilt or decentering will affect the outer area of the lens.

Roger
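A minimal Python sketch of that weighting. Two details are assumptions not stated in the reply: the weighted sum is divided by the total weight (so the result stays in the same units as the readings), and there are six outer points (four corners plus the two lateral edges). The readings are made up.

```python
# Sketch of the center/mid/outer weighting described above. Normalizing by the
# total weight and using 6 outer points (4 corners + 2 lateral edges) are
# assumptions for illustration.
WEIGHTS = {"center": 1.0, "mid": 0.75, "outer": 0.5}


def weighted_sharpness(center: float, mids: list, outers: list) -> float:
    """center: 1 reading; mids: the 6 mid points; outers: corners and lateral edges."""
    if len(mids) != 6 or not outers:
        raise ValueError("expected 6 mid points and at least one outer point")
    total = (WEIGHTS["center"] * center
             + WEIGHTS["mid"] * sum(mids)
             + WEIGHTS["outer"] * sum(outers))
    weight_sum = (WEIGHTS["center"]
                  + WEIGHTS["mid"] * len(mids)
                  + WEIGHTS["outer"] * len(outers))
    return total / weight_sum


# Made-up readings: an evenly sharp lens vs. one with a soft left side
even = weighted_sharpness(800, [800] * 6, [800] * 6)
soft_side = weighted_sharpness(800, [800] * 6, [400, 400, 400, 800, 800, 800])
print(even, round(soft_side, 1))
```

With these made-up numbers the even lens scores 800.0 and the soft-sided one about 729.4, so one soft side costs roughly 9% of the score even though the center reading is untouched.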

Frans van den Bergh ·

If you want to evaluate the sharpness of your own lenses, or you wish to calibrate the AF fine tuning of your DSLR body, you should try MTF Mapper

http://sourceforge.net/projects/mtfmapper/

This utility is totally free, and source code is provided. Or you could buy Imatest for $300-$5000 🙂

Please read the user documentation thoroughly, though.

I would appreciate any feedback, and welcome all discussion!

Hendrik ·

I second Jack C on

“interested to see a similarly in-depth analysis of how Contrast Detect AF compares with Phase Detect AF in terms of accuracy and consistency of results”.

Please.

Scott ·

Great article, Roger.

Trying to get a sense of how much variability is represented by the cloud in your first graph, I have a question:

Suppose I’m walking around my city in daylight, taking actual pictures. I mostly have the lens stopped down a bit, and I pay attention to using appropriate shutter speeds. Is there enough difference between the best and worst results in the cloud (not including the failed lens) to be consistently visible in (say) an 11 x 14 print?

David Tombs ·

Great article; I am sure you can answer my question on lenses.

I am looking for a prime lens for my Canon, about 85mm.

I note from the regular data that lenses of the same focal length range from £650 to over £1200, the latter being the L series.

Q. Why the difference in price, and does it really make a difference that can be seen in the finished photo, or is it just about a stop of speed?

Thanks

Roger Cicala ·

David,

It depends what the finished photo is (the difference is readily apparent in a large print, hardly apparent in a web JPEG). But mostly it’s about the stop of light and the ability to blur the out-of-focus areas. Increasing aperture is increasingly expensive: an f/1.2 lens is a very special and expensive thing. But most photographers don’t need it.
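To put "a stop of speed" in numbers: light through the aperture scales with 1/N², so the gap in stops between f-numbers N₁ and N₂ is 2·log₂(N₁/N₂). A small Python sketch; comparing an f/1.8 lens with the f/1.2 is an illustrative assumption, since the question doesn't name specific lenses:

```python
import math

# Light gathered scales with 1/N**2, so the gap between two f-numbers in stops
# is 2 * log2(N_slow / N_fast). The f/1.8 comparison is an illustrative
# assumption, not a lens named in the thread.


def stops_between(n_slow: float, n_fast: float) -> float:
    """Stops of light gained by opening up from f/n_slow to f/n_fast."""
    if n_slow <= 0 or n_fast <= 0:
        raise ValueError("f-numbers must be positive")
    return 2 * math.log2(n_slow / n_fast)


print(round(stops_between(1.8, 1.2), 2))  # an f/1.8 prime vs an f/1.2 prime
print(round(stops_between(2.8, 1.4), 2))  # a classic two-stop gap
```

That works out to about 1.17 stops between f/1.8 and f/1.2, and 2 stops between f/2.8 and f/1.4.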

Rainer ·

Generally speaking, correct. When I spoke to the supplier who is responsible for warranty issues on a lens, he was only too eager to point me to read all this. He argued that it might be my camera instead of my lens causing a substantial back focus. I was not there to debate or argue a point. I merely wanted to drop off a bad copy to have a substantial back-focus issue fixed. The Sigma 150-500mm just was not cutting it. Heck! I know all this stuff about slight variations from camera to lens and vice versa. I have been around long enough, and I have 8 other lenses with only slight variations, as is to be expected.

It beats the crap out of me when someone attempts to override common sense with some tech jargon and tries to tell you that a bad lens is not the problem but the camera is. I had this lens on auto fine-tune at minus 20 and it was still slightly back focussing. That is clearly a bad lens and not the camera. There is still a clear distinction between small focus variation and a bad copy. I just got a bad copy and I am hoping it will come back fixed, as this is the second time. I knew I should not have purchased a Sigma lens!! I always felt they are too soft for me, but it is the only reasonably priced lens in the 500mm range. Needless to say, if all fails I will try to get my money back and buy something more decent, even if it costs me double….

Arnout ·

Hi Roger,

I know this is an older post already, but I stumbled upon one of your history posts and have been binge reading your blog since. I have a question about how you measure your lenses. You say: “All were taken on the same camera body and the best of several measurements for each lens copy is the one graphed.” I was wondering why you decided to use only the best measurement? From doing experiments at school, my first instinct would have been to perform about 10 measurements per lens, throw out any outliers, and take the average and standard deviation. That way I think you can be more sure that the variation you see between lenses is caused by differences between those lenses and not by measuring errors. Have you tried this? How does the standard deviation in the measurement of one lens compare to the variation between lenses?
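The procedure suggested above is easy to sketch with Python's standard `statistics` module. The 2-standard-deviation outlier cut and the function names are assumptions for illustration, not Lensrentals' method. One caveat: in a sample of n readings no point can sit more than (n−1)/√n sample standard deviations from the mean, so a 2-SD cut only starts to bite at six readings and works better with the ten or so suggested.

```python
import statistics

# Sketch of the suggested procedure: repeat each measurement, drop outliers,
# then compare the spread within one lens to the spread between lenses.
# The 2-SD outlier cut is an assumption; other rules would work too.


def summarize(readings, z=2.0):
    """Mean and sample SD of readings after dropping points more than z SDs from the mean."""
    if len(readings) < 2:
        raise ValueError("need at least two readings")
    mean = statistics.mean(readings)
    sd = statistics.stdev(readings)
    kept = [r for r in readings if sd == 0 or abs(r - mean) <= z * sd]
    kept_sd = statistics.stdev(kept) if len(kept) > 1 else 0.0
    return statistics.mean(kept), kept_sd


def within_vs_between(per_lens, z=2.0):
    """per_lens maps lens id -> repeat readings.

    Returns (average within-lens SD, SD of the per-lens means). If the first is
    much smaller than the second, the lens-to-lens differences are not just
    measurement noise.
    """
    if len(per_lens) < 2:
        raise ValueError("need at least two lenses")
    summaries = [summarize(r, z) for r in per_lens.values()]
    within = statistics.mean(sd for _, sd in summaries)
    between = statistics.stdev(m for m, _ in summaries)
    return within, between
```

Comparing the two numbers answers the last question directly: a within-lens SD that is small next to the between-lens SD means the cloud in the graphs reflects real copy-to-copy differences.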

Alec Kinnear ·

Thanks for this Roger. It really helps to set reasonable expectations for microfocus adjustment and lens calibration.