You CAN Correct It In Post, but . . .

… . there is no free lunch.

I hear this about 20 times a day and it’s true: “Yes, it has a lot of distortion, but that’s easy to correct in post.” It’s a totally true statement.

Unfortunately, way too often, the complete statement goes like this: “I got it because it has such high resolution. Yes, it has a lot of distortion, but that’s easy to correct in post.” That’s two totally true statements. But when combined, they become false.

Distortion correction is a wonderful tool. But every tool that modifies an image, whether in-camera or in your post-processing program, is a trade-off of sorts. There is no way you can shift that many pixels around and not decrease resolution. I’ve said it dozens of times. Yesterday, unfortunately, someone asked me to tell him exactly how much resolution you actually lose.

Don’t you just hate it when someone interrupts your Rant of Absolute Knowledge by asking for facts? What a buzz kill. Now, if I were hanging out on a forum under my anonymous handle of LensGuruGod1 I could have used proper Internet etiquette and replied, “If you weren’t an awful photographer you’d already know that. I won’t waste my time with you anymore.”

Being that I was out there using my real name and all, the comment left me no choice. I had to go do some testing and gather some actual facts. I hate when that happens, especially when I’ve already spent two days playing with the Canon 24-70 f/4 IS and taking it apart. That has me a bit behind on my real job, so please excuse that this isn’t an exhaustive test of 30 different lenses. I do think, though, it’s a good example.

The Test

I used the Canon 24-105 f/4 IS for this test. It has a large amount of barrel distortion at 24mm (over 4%). I chose it simply because we already had Imatest set up at 24mm and because I feel our Imatest setup is a bit less accurate wider than 16mm. I mention this only as a preemptive strike because I’m 100% certain some Fanboy is going to be saying, “Roger said the Canon 24-105 has the worst barrel distortion of any lens.” It doesn’t, not by a long shot, but it has plenty for this test.

We usually run Imatest only on RAW files, but that would make it impossible to correct the image. So I took the RAW file to Photoshop, turned off every single sharpening and modifying tool and converted it to TIFF. Then I loaded the correction profiles for the Canon 5D Mk II and 24-105 and did an auto distortion correction, saving that as a TIFF.

Finally, I compared the corrected and uncorrected versions in Imatest. I’m presenting data from one lens here, but I did another for completeness. It was identical.

Image Correction

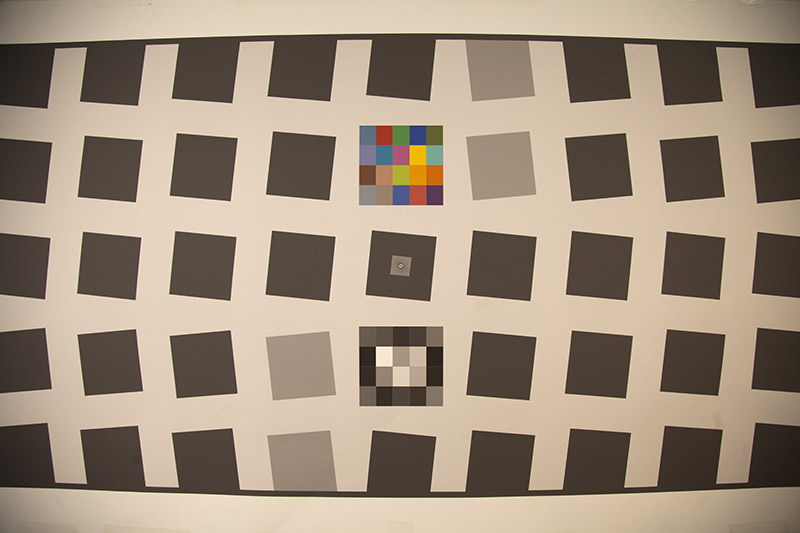

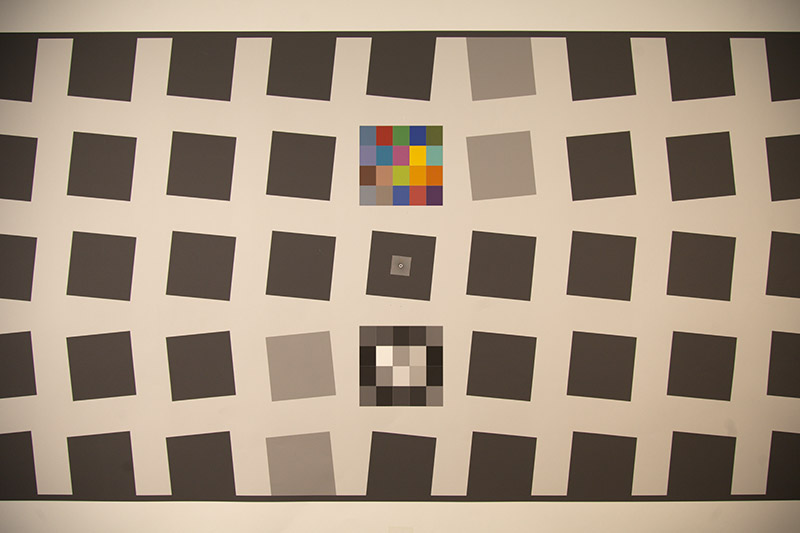

Above are the original and corrected shots. Photoshop does a really nice job of correcting the image. I measured the autocorrected version as carefully as I could and barrel distortion had been reduced from 4.2% to 0.5%.

The Imatest Results

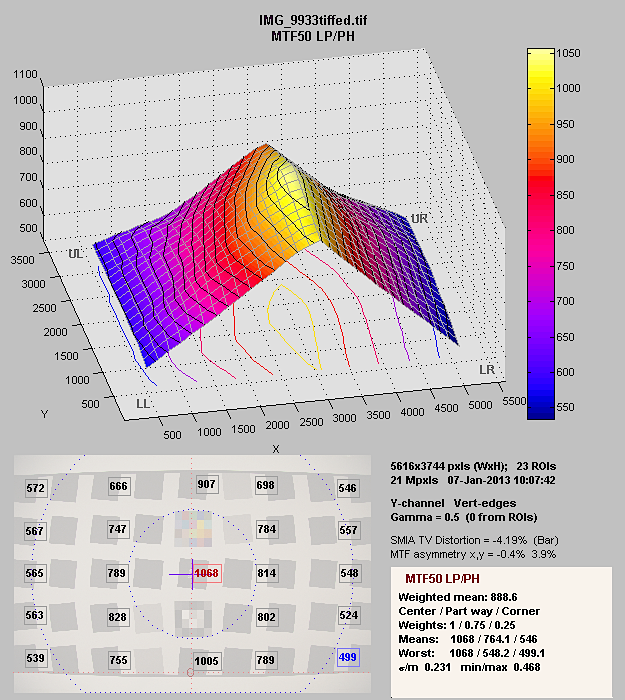

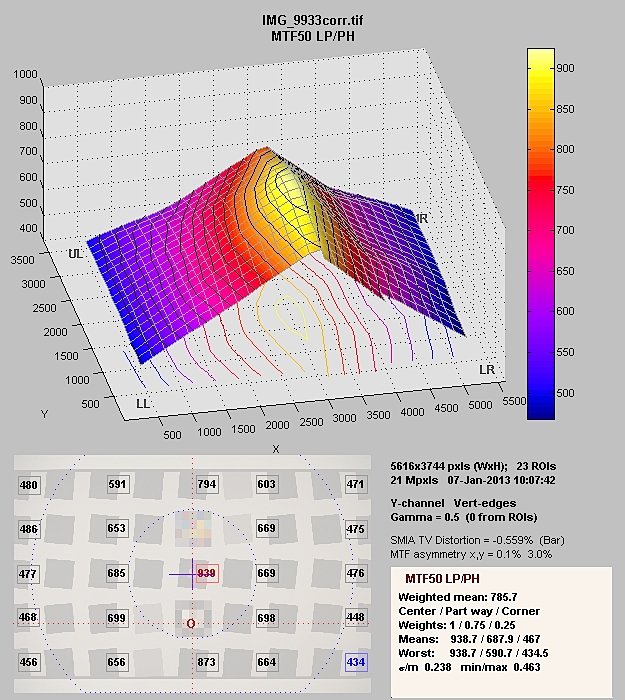

The first Imatest printout above is of the uncorrected image; the second is of the corrected image. The numbers in the boxes are the MTF50 measured in Line Pairs / Image Height at each location.

The numbers are a bit hard to read, so I’ll summarize. The center MTF50 dropped from 1068 LP/IH to 939 (88% as sharp) after distortion correction. The far sides from an average of 556 to 477 (86%), while the corners decreased from an average of 539 to 460 (85%). Actually I was a bit surprised, expecting more decrease in the corners and less in the center.

I reran the numbers for MTF20. It decreased in the center from 1552 to 1450 LP/IH (93.4%), on the edges from 1015 to 838 LP/IH (83%), and in the corners from 1005 to 837 LP/IH (83%), which is more like what I expected.
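For anyone who wants to check the percentages, here is a small sketch that recomputes them from the MTF figures quoted above. The function and variable names are only illustrative; the numbers are transcribed from the text, not re-measured.

```python
# MTF values transcribed from the article, in LP/IH, as (uncorrected, corrected).
mtf50 = {"center": (1068, 939), "sides": (556, 477), "corners": (539, 460)}
mtf20 = {"center": (1552, 1450), "sides": (1015, 838), "corners": (1005, 837)}


def retained(before, after):
    """Fraction of the original MTF value that survives distortion correction."""
    return after / before


for label, table in (("MTF50", mtf50), ("MTF20", mtf20)):
    for region, (before, after) in table.items():
        print(f"{label} {region:8s}: {retained(before, after):.1%} retained")
```

The same ratio can be read the other way around: a lens that needs this much correction has to start out roughly 1/0.85 to 1/0.88 times as sharp just to break even afterwards.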

Since MTF20 probably is a more important measurement of resolution for small prints and online jpgs, this would probably correlate more with what most people would see in an image. Landscape photographers making large prints, though, would be interested in MTF50.

So What Does it Mean?

Not a lot for most people. Distortion correction generally improves the look of a photograph and a small sacrifice in resolution isn’t too important with today’s cameras and lenses, even in the corners.

But when someone wants to argue that they buy a lens with high distortion because it has higher resolution and distortion is easy to fix in post … well, it had better be a lot higher, or it’s a fool’s argument.

I’ll add one other note. It’s well known among lens designers (I’m not one, but I read their textbooks and journals) that, when designing a lens, correcting distortion often reduces resolution. In ancient times (i.e. film days) distortion correction was a first priority. After all, it’s really hard to stretch film to correct distortion. In current times, lens designers seem to be more willing to leave the distortion to get higher resolution.

I agree with that – I’d rather have the option of correcting or not correcting myself. But I think it’s important for photographers who ARE very interested in the best resolution to realize they’ll be giving some of that back when they correct in post. If two lenses have identical resolution in testing but one has more distortion, that one will have less resolution after the distortion is corrected.

Roger Cicala

Lensrentals.com

January, 2013

42 Comments

mph ·

Thanks for this. Here’s a couple of things I’m pondering now:

1) How much does the loss in sharpness depend on the amount of distortion corrected? If you had a lens with 1% distortion, instead of 4%, would the loss in sharpness be much less, or similar? It’s possible that the “fact of” resampling matters more than the amount of correction. The sharpness loss in the center of the frame suggests this may be the case. If the sharpness loss is about the same no matter how strong the distortion, then allowing a great deal of distortion may still be a good design decision.

2) Similarly, what if we have multiple resampling operations? Suppose I straighten my photo by a degree or so of rotation, which I usually have to do. How much sharpness do I lose? What if I straighten, and also apply distortion correction? Do I bear the full sharpness loss from each operation? If each operation yields 0.85 on its own, is the combined result 0.85*0.85, or some more modest loss?

Roger Cicala ·

mph – you just wanted to give me more work to do, didn’t you? 🙂

I would have said more distortion correction means greater loss of resolution. BUT these results may make me rethink that. The center of the image is ‘moved’ a lot less than the corners, but loss of resolution occurs there too. So I’m just not certain.

Straightening a photo does a similar thing to distortion correction (I tested that way back in the day, but don’t know where the results are), but I have no idea if the results are additive or multiplicative.

I guess more testing is in order. Actually, this kind of thing probably has more practical importance than half the stuff I do.

mph ·

A blogger’s work is never done! If you were getting grants for this research, you’d love to have such clear follow-on projects. 🙂

My intuition says the “fact of” resampling matters more than the amount of correction, at least for these photographic applications. My reasoning is that for these resampling operations, the pixels are moved by much more than 1-2 pixels (think how much a percent or a degree is, in pixels). I think the sharpness loss depends mostly on the sub-pixel effects (or the sub-2×2-bayer-array effects). If you move a pixel by 23.42 pixels, I bet the .42 matters a lot more to the resolution than the 23 does.
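The intuition about the fractional part can be illustrated with a toy model. The sketch below assumes a one-dimensional shift done by plain linear interpolation, which is an assumption for illustration only and not what any particular raw converter is known to use. In that model a shift of n + d pixels blends two neighbouring samples with weights (1 - d) and d, so the contrast loss depends only on d.

```python
import cmath
import math


def linear_shift_gain(shift_px, freq_cycles_per_px):
    """Contrast gain (1.0 = no loss) of a shift done by linear interpolation.

    The whole-pixel part of the shift only adds phase (a pure move);
    the fractional part d sets how strongly neighbours are averaged.
    """
    whole = math.floor(shift_px)
    d = shift_px - whole
    w = 2 * math.pi * freq_cycles_per_px
    response = (1 - d) * cmath.exp(-1j * w * whole) + d * cmath.exp(-1j * w * (whole + 1))
    return abs(response)


# Detail at 0.25 cycles/pixel, i.e. half the Nyquist frequency.
for shift in (23.0, 0.42, 23.42):
    print(f"shift {shift:6.2f} px -> gain {linear_shift_gain(shift, 0.25):.3f}")
```

In this model the 23-pixel move is free and the 23.42-pixel move costs exactly what a 0.42-pixel move costs. Real distortion correction also changes local magnification, which this sketch ignores.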

Roger Cicala ·

That makes sense. I do know in practical terms you never, ever tilt 1 degree twice, you tilt two degrees once. Otherwise you get more loss of resolution. (I’m dating myself because that goes all the way back to working with Photoshop 2.0 and NIH Image when there weren’t built-in measurements and you had to eyeball that kind of thing.)

So one big move has less loss than two smaller moves. But I’m not sure if one smaller move has a lot less loss than one big move. Probably there’s a small difference between big and small movements, a huge difference between no movement and a small movement. I’ll have to check.
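A one-dimensional toy version of "one big move versus two small moves" can be worked out directly. It assumes linear interpolation and a pure sub-pixel shift rather than a rotation, so it is a sketch of the principle and not a model of Photoshop. When the same linear filter is applied in successive passes, the contrast gains multiply.

```python
import math


def gain(d, f):
    """Contrast gain of linear interpolation for a fractional shift d (0..1)
    at spatial frequency f in cycles/pixel."""
    return math.sqrt((1 - d) ** 2 + d ** 2 + 2 * d * (1 - d) * math.cos(2 * math.pi * f))


f = 0.25  # half the Nyquist frequency

one_pass = gain(0.5, f)           # shift by 0.5 px in a single resampling
two_passes = gain(0.25, f) ** 2   # shift by 0.25 px, then by 0.25 px again

print(f"one 0.5 px shift  : {one_pass:.3f}")
print(f"two 0.25 px shifts: {two_passes:.3f}")
```

In this toy case the two small moves lose more contrast than the single larger one, which agrees with the rule of thumb above. It also suggests that losses from separate passes combine multiplicatively, at least for simple shifts.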

mph ·

Since ACR and Lightroom wait until you’ve made all of your adjustments before they produce a final output image, my hope would be that all of the geometric transformations (lens correction, rescaling, straightening, perspective) are combined mathematically into a single overall transformation, in order to avoid multiple resamplings. That would let ACR/LR outperform Photoshop’s step-by-step process, which can erode sharpness as you note.
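Combining transformations before resampling is straightforward for the affine ones. The sketch below composes a rotation and a rescale into a single matrix so the pixels would only need to be interpolated once. Whether ACR or Lightroom actually work this way is not confirmed here, and all function names are illustrative.

```python
import math


def matmul(a, b):
    """Product of two 3x3 matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)] for i in range(3)]


def rotation(deg):
    c, s = math.cos(math.radians(deg)), math.sin(math.radians(deg))
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]


def scale(k):
    return [[k, 0.0, 0.0], [0.0, k, 0.0], [0.0, 0.0, 1.0]]


def apply(m, x, y):
    """Map the point (x, y) through the homogeneous matrix m."""
    return (m[0][0] * x + m[0][1] * y + m[0][2],
            m[1][0] * x + m[1][1] * y + m[1][2])


# Straighten by 1 degree, then enlarge by 2%: one matrix, one resampling.
combined = matmul(scale(1.02), rotation(1.0))

step_by_step = apply(scale(1.02), *apply(rotation(1.0), 100.0, 50.0))
single_step = apply(combined, 100.0, 50.0)
print(step_by_step, single_step)
```

Lens distortion itself is not an affine map, but the same idea carries over: the coordinate functions can be chained, and only the final source position for each output pixel needs to be interpolated.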

THG ·

Great work! As usual. I’m very excited to see results of a lens with less distortion. Phantastic. Thanks a lot!

CarVac ·

Distortion correction, once it exceeds one pixel in the corners, should not cause much loss in resolution unless it’s a truly astounding amount of distortion that requires significant local magnification. Otherwise, on the small scale it’s just sliding the image around.

If you upsample the image 4x in each direction with a bicubic filter first, I’m sure the resolution drop in the corners will be meaningless except for fisheyes. Each original pixel will be left basically intact, just moved. The only possible resolution loss would be from additional magnification.

…unfortunately, that’s not terribly kind on your computer regarding filesizes and processing power in these days of 20+ MP sensors.

Teedidy ·

Would it be possible for software that “fixes” distortion to re-size the image to maximize the resolution and distortion? Instead of “stretching” the image, to always “pull” (contract)?

Roger Cicala ·

Teedidy, I think it basically does that. In the pictures, for example, you can see the corrected image is no longer 24mm wide; it’s as though it was 26 or 27mm. I think the effect comes from just moving pixels. Move pixels and lose some resolution, it’s inevitable.

When I used to teach Photoshop, people were always shocked to find out a sharpened image had less detail than an unsharpened image. Greater acutance, of course, but less detail.

Teedidy ·

Aw yes, the photo is now 26 or 27mm, are there still the same number of pixels? I personally would love to see a 22MB photo with 5% distortion shrink to 18MB file with .5% distortion with similar resolution.

Jim Harris ·

Roger — Great work!

Taking matters a step further, and possibly touching the “why” of your expectations that the rez fall-off wasn’t even greater in the sides and corners: seldom do corrections end with correcting distortion. As you mentioned, there is the straightening that crops into the original image. But also very common is the lens profile auto-correcting vignetting. I find this also very destructive of rez, and the programs do little to extrapolate and fill the gaps left by such correction, resulting in gritty, grainy corners. In a worst case scenario, anyone who has ever de-fished a ff fisheye image knows how much space there is to fill in the vacuum left in the corners/edges. My point is I think you are very right in your expectation that the numbers in practical use are considerably lower than what you’ve come up with here. Sorry to give you any more work, but I’d be curious to see the figures if that area represented by vignetting were “neutralized”.

I’m getting pretty selective in my use of correction tools, and find myself often leaving distortion and lens profile unused so I can keep as much rez for straightening, cropping in, or using skew to correct perspective. Least destructive to me seems to be removing CA, but even here, edge rez goes down. What is a person to do? 🙂

Thanks so much for opening the can of worms and shining a Maglite into it. 🙂

TPLAV ·

Could math rounding errors play a part in loss of resolution?

Would the smallest move be one pixel when fractions of a pixel may be required? Especially for a diagonal move.

Roger Love your blog…Thanks

Samuel H ·

I always suspected this, but was too lazy to test myself. Thanks for doing this for me. You rock.

And also: I’m all in for the tide of not completely correcting distortion optically, as long as the side effects are not clearly smaller than those from correcting it in post. I have no idea if that’s the case, but as long as there’s a tradeoff I’d go for the sharper lens, since “distortion” is not an absolute issue: it depends on what you are shooting. Some of us shoot more people and fewer buildings, and for this, a lens with more barrel distortion will produce less distorted shapes.

http://www.dvxuser.com/V6/showthread.php?281994-I-need-a-cheap-wide-angle-lens-Recommendations&p=1986142267&viewfull=1#post1986142267

wickerprints ·

I would hypothesize that the reason why corner MTF50 doesn’t decrease as much as one would intuitively expect is because the correction for barrel distortion maps points with a smaller image height to a point with a larger image height. Thus, when comparing MTFs in the periphery, you aren’t actually drawing a comparison from the same part of the image. If you were to do this, you would need to compare a point in the extreme corner of the corrected image to a point that is somewhat nearer to the center in the original uncorrected image.

Additionally, what your data suggests is that there is some intrinsic algorithmic loss of resolution regardless of image height due to any spatial transformation of quantized data. To me, your numbers imply that the losses in the corners are actually very small; one could roughly quantify it as the percentage difference of center versus corner losses.

A third observation is that we would expect the distortion correction to affect sagittal and meridional MTFs differently, with slightly different consequences for lenses that exhibit peripheral aberrations which are direction-dependent.
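The direction dependence can be made concrete with a simple radial model. The sketch below assumes a one-term polynomial, r_d = r_u * (1 + k * r_u^2), with radius normalised to 1 at the corner and k = -0.042 chosen so that the corner shows about 4.2% barrel distortion. Both the model and the value of k are assumptions for illustration, not the profile Photoshop applies, and the article's 4.2% may have been measured under a different convention.

```python
def stretch_factors(r_u, k=-0.042):
    """Local stretch applied by the correction at normalised radius r_u.

    Returns (radial, tangential): how many output pixels each source pixel
    is spread over along the radius and perpendicular to it.
    """
    radial_src = 1 + 3 * k * r_u ** 2    # d(r_d)/d(r_u): source span per output pixel
    tangential_src = 1 + k * r_u ** 2    # r_d / r_u
    return 1 / radial_src, 1 / tangential_src


for r in (0.0, 0.5, 1.0):
    radial, tangential = stretch_factors(r)
    print(f"r = {r:.1f}: radial x{radial:.3f}, tangential x{tangential:.3f}")
```

Under these assumptions the corner is stretched noticeably more along the radius than across it, so MTF measured in the two directions would not be expected to fall by the same amount. The model also leaves the very center untouched, so it does not by itself explain the center loss measured above.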

David Stock ·

Another great post, Roger. Thank you.

I use macro lenses a lot for cityscape photographs. Some macros are super sharp across the image field, even at infinity, and also have very low distortion. That’s my experience, at least. The limitation for these macro lenses is usually maximum aperture, often no wider than f2.8. So it’s a 3-way trade-off….

Michael ·

I think the reason why the loss of resolution after distortion correction is modest in this example is due to the fact that the 24-105 isn’t very sharp in the corners in the first place. This leads to some oversampling w.r.t the lens resolution and thus to lower loss in post processing because there is a lot of data compared to actual information. I would think that if one takes a lens which is very sharp in the corners while at the same time having significant distortion the effect of distortion correction (interpolation) would become more obvious.

Great blog, Roger. Thank you.

Jeff Cowan ·

Not so fast, Roger.

Just what are these lens design books and journals you enjoy reading? C’mon. Some of us are curious about the subject as well 😉

Roger Cicala ·

Jeff,

I didn’t say I enjoyed reading them 🙂

Being mathematically challenged I struggle through them, often with a trig or calculus reference book open at the same time. But, lord, I’ve got several shelves of them, too many to list here. A few I use a lot:

Goldberg: Camera Technology: the Dark Side of the Lens

Kingslake: Lens Design Fundamentals

Ray: Applied Optics

Ray: The Photographic Lens

Laikin: Lens Design, 4th Ed.

Smith: Modern Lens Design

My absolute favorite, though, is Kingslake: History of the Photographic Lens

Roger

peevee ·

Mathematically, distortion correction should be performed before or at the time of demosaicing, not after. Ideal algorithm would suffer as much resolution loss at worst as the amount of distortion corrected.

By converting to TIFF first and doing the correction later, you operate on junk interpolated data that was not there to begin with. Junk in – junk out.

Robert Bowmaker ·

Thanks for the interesting post Roger, this is going to motivate me to look into this more deeply. However, straight off the bat, I think it’s important to point out the interaction between lens resolution, camera sensor resolution, and resolution loss due to distortion.

It’s well known that the camera matters when measuring lens resolution; if the camera has too few megapixels, it can’t capture the fine details that the lens is resolving.

Since the resolution loss you’re seeing here is due to resampling the image with non-integer pixel shifts, the penalty applies to the camera, not the lens. To clarify:

– A low-resolution lens that is significantly outresolved by a very high megapixel camera will only pay a negligible resolution penalty when applying this sort of distortion correction,

– A high-quality, high-resolution lens that is paired with a low-resolution camera (such that the sharpness of the images is camera-limited) will lose the most resolution due to distortion correction.

So if you have a D800, I expect you’re going to notice this a lot less than if you have a D90. Furthermore, if you take an image from a D90 and double its size (both width and height), before performing distortion correction, the resolution isn’t going to be affected as badly.

Which might not be an unreasonable thing to do if you’re printing 8 foot by 6 foot gallery prints.
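A rough way to see why oversampling helps: in a toy model where resampling is done by linear interpolation (an assumption, real converters use other kernels), the worst case is a half-pixel shift, which averages two neighbouring pixels. The contrast that survives depends on how close the detail is to the pixel grid's Nyquist limit.

```python
import math


def half_pixel_gain(fraction_of_nyquist):
    """Contrast gain of a half-pixel shift by linear interpolation.

    Averaging two neighbours has gain |cos(pi * f)| with f in cycles/pixel;
    the Nyquist limit is f = 0.5.
    """
    f = 0.5 * fraction_of_nyquist
    return abs(math.cos(math.pi * f))


# The same detail seen at 80%, 40% and 20% of Nyquist, i.e. with each
# step doubling the number of pixels per line pair.
for detail in (0.8, 0.4, 0.2):
    print(f"detail at {detail:.0%} of Nyquist -> gain {half_pixel_gain(detail):.3f}")
```

Doubling the image size before correcting moves the same detail from, say, 80% to 40% of Nyquist, where this kind of interpolation costs much less. The sketch ignores any loss in the upsampling and final downsampling steps.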

Joe ·

So at what point do you compose a ‘never for film’ list? I’ve always been tempted to snag a 1V to try, but this information would be killer to have. You have to wonder what the old lens guys are saying about distortion – if they had the glass and precision tuning currently available we’d all have medium format level resolution!!

Hans van Driest ·

What is probably most interesting is how much of the loss can be recovered. One could argue that re-sampling mostly reduces the contrast at higher spatial frequencies. If so, and when the SNR is sufficient, the loss can be mostly recovered. The same goes for stopping down a lens. We hear how stopping down a lens to f11 (on, say, a 24MP ff camera) loses a lot of resolution. It does indeed cost some, but most is recoverable with extra sharpening, so much so that in, say, a 20×30 print the loss is almost invisible. This is even true for f16 (with some lenses, not all). Aggressive sharpening can regain most of the loss, up to a point where the difference is, again, hard to see in a 20×30 print.

I have a Samyang 14mm, a distortion champion, but also a lens with remarkable resolution, out into the most extreme corners. Correcting the distortion does sacrifice some of that resolution, but again, extra sharpening can (visually) recover most of the loss. I have never measured any of this. But we are talking photography, so I would expect that what you see is what counts most.

Another general remark on resolution: some lenses take sharpening much better than others. I do not claim to have investigated the cause, but can imagine that it has to do with the relation between, say, MTF50 and MTF10. The latter can become very important when you sharpen the image. A lot of the resolution we get from high resolution digital is in the area where lenses give considerable attenuation of higher spatial frequencies, and an AA filter adds to this.

We often see, in MTF plots, figures for 10 and 30 lp/mm. A 24MP ff sensor has a Nyquist limit of 83 lp/mm, which is considerably more. A 24MP APS-C, like the NEX-7, has a Nyquist limit in the order of 125 lp/mm. A lot of sharpening is needed to see this resolution back in the result.
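The Nyquist figures quoted here can be reproduced from pixel count and sensor size. The sketch below assumes square pixels and nominal sensor dimensions of 36 × 24 mm for full frame and 23.5 × 15.6 mm for APS-C. Those dimensions are assumptions, not taken from the comment.

```python
import math


def nyquist_lp_per_mm(megapixels, sensor_w_mm, sensor_h_mm):
    """Nyquist limit in line pairs per mm, assuming square pixels.

    One line pair needs two pixels, so the limit is half the pixel density.
    """
    pixels_wide = math.sqrt(megapixels * 1e6 * sensor_w_mm / sensor_h_mm)
    return pixels_wide / sensor_w_mm / 2


full_frame = nyquist_lp_per_mm(24, 36.0, 24.0)
aps_c = nyquist_lp_per_mm(24, 23.5, 15.6)

print(f"24MP full frame: {full_frame:.1f} lp/mm")
print(f"24MP APS-C     : {aps_c:.1f} lp/mm")
```

Both results land close to the figures given above, and well beyond the 10 and 30 lp/mm usually shown in manufacturers' MTF plots.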

mvmv ·

Distortion correction also crops the photo, so 98% viewfinder might be more accurate than 100% viewfinder? After correction you can still crop but adding is difficult.

Jeff Cowan ·

Thanks Roger!

Volker ·

Hi Roger!

Thanks for your interesting experiment. Long ago, I investigated problems resulting from reduced resolution – that time, however, affecting novel video compression codecs that allowed sub-pixel movements of macroblocks. Indeed, all non-integer displacements gave the biggest trouble.

But watch out: This kind of motion compensation left the pixel sizes/geometry intact. So as long as you didn’t have to interpolate the background (=the non-moving parts of the image), everything was fine.

But with correcting lens distortion, things are a bit different.

Not only do you move pixels around, you also change their shape, figuratively speaking. If you want to correct barrel distortion as in your example, you’re pushing a cross shaped section of the image inwards and the rest outwards, finally resulting in pixels larger (or smaller) than 1×1 and not even square ones.

Note, that I define “Pixels” in a more abstract meaning as “image sections having the same information source”.

And it’s perfectly clear that if you blow up local pixel blobs from 1×1 to 1×2 or 2×2 while the complete image itself keeps its size, you’re going to lose information. This, BTW, is interchangeable with a local scaling of the image.

Since “information loss” is equivalent to “resolution loss”, I would beg to differ that only the sub-pixel portions of an image transformation will affect sharpness – it depends on the nature of the transformation.

Shifts along the x- and y-axis are pretty harmless (you can even do some kind of antialiasing/error-minimizing to reduce the effects of sub-pixel translations), rotations too (if the algorithm correctly handles the larger increments of points further away from the centre) but non-linear and inhomogeneous transformations are tricky.

You have to deal with pixels overwriting each other and sections where information is interpolated.

Just my 2cts,

Volker

Randy ·

Thanks, Roger. The amount of barrel/pincushion in current lenses leads me to believe manufacturers have concluded customers don’t notice or don’t care very much. Correcting distortion after the fact seems “sloppy” to me, but since none of my wide angles is distortion-free I take this as a given. Now, I even use the 16-35VR for interiors sometimes. Why not–I’m going to have to “fix” it later, anyway.

simon ·

hmm very interesting thank you very much, but also annoying to know how much it actually is (even in the center, oh nooo) 🙂

no seriously the (many great) comments got me thinking whether some of that loss could be recovered with a smart algorithm. maybe some cameras that automatically do that already are better than others? maybe someone with a lot of different cameras could compare them all 🙂

and I also would wish that many mft lenses weren’t corrected automatically, since when you later stitch the images to a larger image you distort the Image again anyway.

and I also wonder why do you reduce 4% to 0.5% and not to 0?

David ·

If you try this again, it might be interesting to see if DXO or PTLens (particularly PTLens) could give better results. As with the comments above, the answer may have less to do with the inherent distortion of the lens than with the resampling technique.

Daniel ·

I support David’s idea.

Is there a chance for you to make comparison of the uncorrected image and 3 corrected images (lightroom/ps, dxo optics pro, pt lens)?

At least DxO claims to be the number one in the field.

Also, it would be interesting to see the change in resolution for barrel as well as pincushion distorted images (will it have some effect on the center sharpness change?).

Roger Cicala ·

Daniel and others,

I’ve had a lot of interesting input from people who know post processing programs from the inside (several have left comments here, others have emailed). The bottom line is while it needs to be studied further, it’s too complex for me to do – there just isn’t time. Some of the pertinent points:

1) These algorithms change constantly. Not only do they differ between programs, they differ between versions of programs. The laws of mathematics say there will always be image degradation, but how much could vary a lot, or very little.

2) The differences aren’t just ‘better or worse’. The type of distortion, resolution of the image, distance of the shot, depth of field, aperture of exposure, contrast, etc., all could have an effect.

3) Resolution changes could also differ in very localized areas, particularly if a lens had aberrations like coma, spherical aberration, etc. in addition to distortion.

4) DxOAnalytic software probably would be better for this job than Imatest, simply because it analyzes smaller circular regions rather than larger linear ones. But I don’t have that equipment.

5) Multiple software packages and raw converters need to be tested, using multiple images of different distortions and resolutions.

The work needs to be done. It would be interesting. But it would take weeks and I simply don’t have that kind of time to dedicate to it.

Esa Tuunanen ·

Like Volker said barrel distortion makes center of image “bulge” out needing some mutilation of also those pixels to sweep distortion under the rug. So resolution loss also in center should be expected.

And just like he said, software distortion correction is literally like pixels in some areas of the image being compressed smaller and stretched in other places to reshape areas of the image.

So why should anyone think that process is lossless.

You don’t think same way from drawing some figure/pattern onto air balloon and then inflating balloon?

Also multiple separate corrections should obviously cause more information loss because of multiple times that results of calculations are rounded up.

Photozone did year ago MTF50 tests for few Samsung NX lenses both with and without distortion correction and also confirmed resolution loss.

Simon, Raw Therapee doesn’t do automatic distortion correction if you leave that option disabled.

Daniel ·

Roger: understood and definitely thank you for your efforts. Carry on the good work!

John ·

Very interesting and informative post Roger, thank you. It makes me wonder (don’t go testing this, hehe), if the sharper 24-70mm f/2.8 mark II, after correction, will be roughly the same resolution as the uncorrected 24-70mm f/4L. 😉

WTV3D ·

So true,

great article.

Thanks

Eric ·

Fantastic article – thanks for creating such a great blog.

abib ·

That’s why I sold my 24-105L and my 17-40mmL for a 14mmL mk 2. and sticking to the much better EFS 17-55mm f2.8 and 70-200mm L f4 i.s or f2.8L mk2.

Gee Free ·

Dear Roger, whenever I read your stuff I am always reminded of the late Geoffrey Crawley who wrote reviews for the British Journal of Photography back in the day. Your no-nonsense, cut-to-the-chase, fanboy-averse approach is so refreshing, welcome and much needed. What a different technical world photography is in this digital realm. There is so much potential misinformation and confusion regarding real-world IQ and how to achieve it and how to maintain it.

I got rid of a beloved, late, mint Nikkor 28/3.5 PC because the CA was just so outrageous that even after correction attempts in numerous software packages there was still what I can only describe as a white shadow where the red/purple fringe used to be. Your little article begins to explain why and points to a reality that what we use when we photograph is not just a sensor but a complete system (lens-sensor-electronics, etc.) that oftentimes may deploy software manipulation (that is essentially what “firmware” is, isn’t it?) even at the AD stage. Notwithstanding that, if we have a “perfect” sensor that requires no AD tricks to be “perfect” and we use a lens that captures an image full of distortion that must be corrected via software and will thus detract from the “perfectness” of the sensor, then what is the point of lauding the camera manufacturer for having a sensor whose full potential cannot be realized due to deficiencies in the camera manufacturer’s lens line-up?

Where to find information on real, genuine and practical image IQ that takes such into consideration? For example, I have yet to read anything on DxO’s site that factors in the destructive effects of correcting for distortions like CA, nor have they mentioned Nikon’s manipulation of the analogue signal before it is converted to digital. Whether this is still an issue or not I do not know. But I remember reading that astronomical photographers would not use certain Nikon cameras because the noise reduction process used effectively cancelled out some of the stars before they were even recorded!

Lens distortion really matters even in the digital age – and thank you for pointing that out. Where can I find an honest rating system of lenses and systems that will honestly rate based upon real world stuff like you have done in basically telling us to be wary of “fools arguments”? Thank you for your blog and your hard work.

Manuela ·

Roger, thank you very much for this test. I would have asked exact the same question as mph if I were the first here to comment. I am looking for further testing because in many fora people use the argument that distortion can be corrected and the loss of resolution is negligible. As I suspected there is in fact no free lunch.

Manuela ·

I meant “I am looking forward to further testing”, sry for that, I am not English native.

Lisandra ·

aaaaaat any rate…you constantly answer things that keep me up at night. Resolution is the most important thing to me as of today (despite my favorite format being m4/3s) but I think distortion is more distracting overall than a bit of resolution loss.

Matt ·

Imatest gives two measurements – MTF and “MTF after ideal USM sharpening”. I’d be curious to see if the latter changes as much as the former. You’d expect any pixel moving operation e.g., horizon straightening, to reduce MTF, but in a detail critical image, you’d also sharpen after manipulation.

Gert van der Plas ·

Resampling the original image to the original resolution after correction would certainly drop the MTF, merely by resampling slightly shifted camera pixels. However, with a proper algorithm and sufficient oversampling the MTF should drop less. Were I to write such code, I’d not stretch the image sides above and below the image center; I’d compress the center and stretch the corners. That minimises the pixel shifts for distortion correction and divides the MTF reduction over most of the image. A drop of the MTF in the center therefore seems logical to me.

I’d expect that, with proper resampling, the MTF would drop in proportion to a pixel’s enlargement, and the reverse for shrinkage.