How to Use AF Microadjustment on Your Camera

The most common questions and complaints I hear almost always have to do with AF accuracy in one way or another, even if they don’t seem like it at first: “I’m trying to focus on this person’s eyes, but the ears are in focus instead,” or, “This lens is front focusing like crazy,” or, “This fast-aperture prime was soft the whole time I used it.” Roger has written some rather lengthy, detailed posts about how phase-detection AF works (phase detection being the kind of AF a dSLR uses in normal, everyday shooting), so I won’t go into a full explanation here. But the gist of his articles is this: phase-detection AF isn’t always that accurate. Here’s some light reading, if you feel so inclined:

Why You Can’t Optically Test Your Lens with Autofocus

Autofocus Reality, part 1: Center Point Single Shot Accuracy

That last link is the first in a four-part series, and that first part contains a really good example of what AF microadjustment can do. Read it if you like technical stuff, but I’m about to summarize it in the next paragraph.

So what is it and why is it necessary?

Lenses and cameras are made to certain specifications, and those specs fall within a narrow range of tolerances. Phase-detection AF relies on physical calibration for its accuracy, so if the lens and camera tolerances stack up in one direction or the other, even by minute amounts, you might see consistent front or back focusing with that particular lens and camera combination. It’s important to understand that this front or back focusing is a combination-specific issue. If you test 20 copies of the same lens on one camera body, you might find that most of them are pretty close to dead on, one or two front focus by varying amounts, and one or two more back focus by varying amounts. Test those same 20 lenses on a different camera body and you could get completely different results. This doesn’t mean the lenses or the cameras are broken. They just need adjustment, and this is something you can probably do yourself in camera.
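To make the combination-specific point concrete, here is a minimal Python sketch. It assumes the simplest possible model, in which a lens’s error and a body’s error just add together; the lens and body names, the offset values (in arbitrary units), and the tolerance band are all invented for illustration, not measured from real gear.

```python
# Hypothetical per-copy errors in arbitrary units.
# Negative = focus lands in front of the subject (front focus),
# positive = behind it (back focus). All values are invented.
LENS_OFFSETS = {"lens A": -12.0, "lens B": 3.0, "lens C": 15.0}
BODY_OFFSETS = {"body 1": 5.0, "body 2": -14.0}

# Assumed band within which a combination counts as "close to dead on".
TOLERANCE = 8.0


def combined_error(lens: str, body: str) -> float:
    """Simplest stacking model: the lens and body errors add."""
    return LENS_OFFSETS[lens] + BODY_OFFSETS[body]


def classify(error: float, tolerance: float = TOLERANCE) -> str:
    """Label a combined error as front focus, back focus, or close enough."""
    if error < -tolerance:
        return "front focus"
    if error > tolerance:
        return "back focus"
    return "close to dead on"


if __name__ == "__main__":
    for body in BODY_OFFSETS:
        for lens in LENS_OFFSETS:
            err = combined_error(lens, body)
            print(f"{lens} on {body}: {err:+.1f} -> {classify(err)}")
```

With these made-up numbers, lens A is close to dead on with body 1 but front focuses on body 2, while lens C back focuses on body 1 but is fine on body 2. Neither the lenses nor the bodies are “broken”; only the combinations are off.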

How do I do it?

The procedure for making the necessary adjustments is pretty simple, and it’s basically the same across camera brands. Here’s a list of things you’ll need:

- Camera

- Lens or lenses to be adjusted

- Tripod

- Focusing target

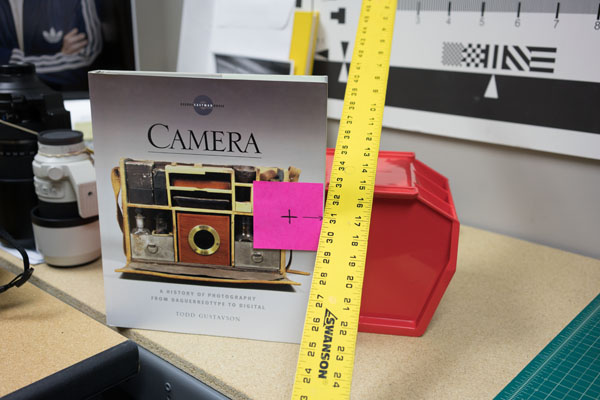

The target can be something as fancy as a LensAlign, or as simple as a book and a yardstick. All you really need is something with a flat, high-contrast face that you can stand parallel to the sensor plane, and something next to it with a scale you can use to gauge where the plane of focus falls.

Once you have your target, get your camera set up on a tripod and have it level with your target. If you’re using a LensAlign, there are holes in the front face of the target that line up with red circles on the panel behind. If you’re using the book method, try to get the book and sensor plane as parallel as you can.

Then line up your camera, initiate AF, and take a shot. Make sure you’re using the center focus point for this; using outer points can create all sorts of problems if the lens you’re calibrating has any field curvature. I like to focus, take a shot, turn the focus ring to throw focus off, refocus, and take another shot, repeating this a few times to gauge consistency.
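If you read the focus scale on each test shot, a quick way to gauge consistency is to look at the average offset and the spread. Here’s a Python sketch, assuming you record where the sharpest mark landed in millimetres relative to the target (negative = in front of it). The 5 mm spread threshold is a hypothetical placeholder, since what counts as consistent depends on distance, aperture, and focal length. The reasoning: a single microadjustment value can only shift a consistent offset; it can’t tighten scattered results.

```python
from statistics import mean, stdev


def summarize_focus_shots(readings_mm: list[float]) -> tuple[float, float]:
    """Return (mean offset, sample standard deviation) of repeated AF shots.

    Each reading is where the sharpest mark on the scale fell, in mm from
    the target plane: negative = front focus, positive = back focus.
    """
    if len(readings_mm) < 2:
        raise ValueError("take at least two shots to judge consistency")
    return mean(readings_mm), stdev(readings_mm)


def is_consistent(readings_mm: list[float], max_spread_mm: float = 5.0) -> bool:
    """Hypothetical rule of thumb: spread small enough to trust the mean."""
    _, spread = summarize_focus_shots(readings_mm)
    return spread <= max_spread_mm


if __name__ == "__main__":
    shots = [-14.0, -11.0, -13.0, -12.0]  # invented readings
    offset, spread = summarize_focus_shots(shots)
    print(f"mean offset {offset:+.1f} mm, spread {spread:.1f} mm")
```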

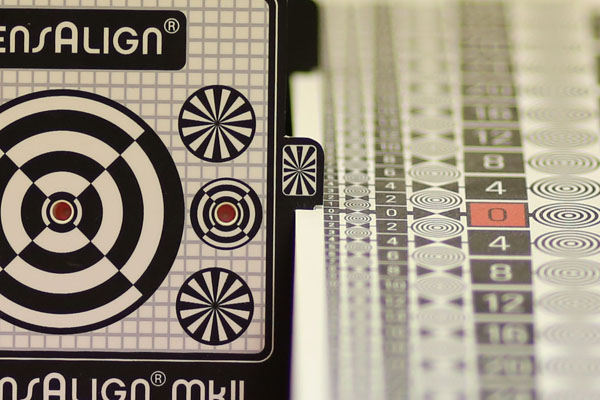

Zooming in on the image in camera lets us see how accurate this lens/camera combination is.

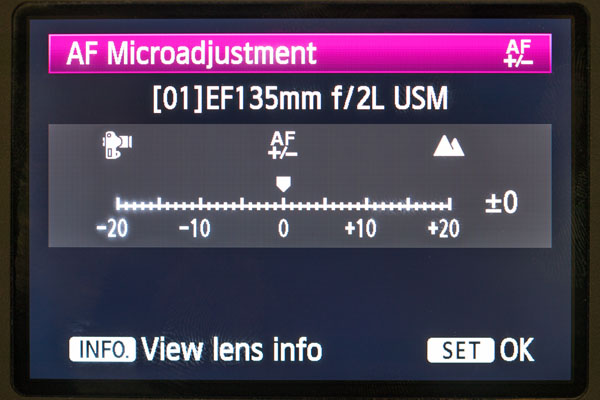

Look at that! This Canon 135mm f/2L is spot on! But they aren’t always like that. Let’s try another copy.

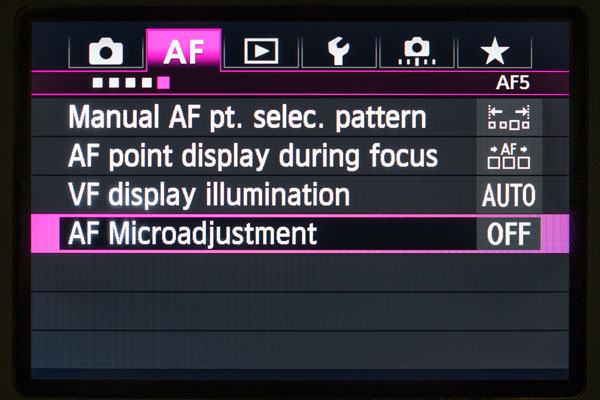

This copy is front focusing on this camera body; look at the smaller numbers to see the difference. It should be pretty easy to adjust. First, go into the adjustment menu. On Canon it’s called AF Microadjustment (AFMA). On Nikon, it’s AF Fine Tune. On most other camera systems it’s called microadjustment as well. Nikon just likes to be fancy, I suppose.

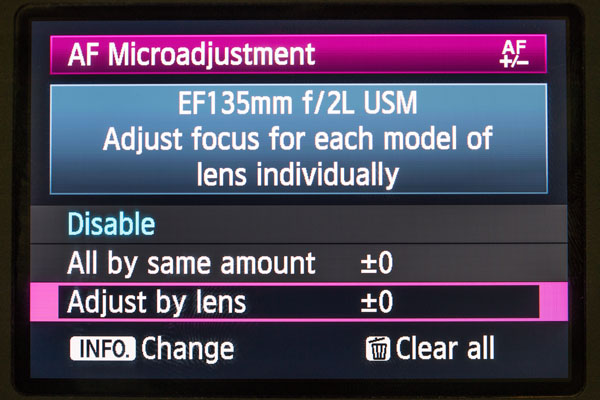

You’ll almost always see two options, one for a default setting that affects all lenses universally, and one that’s lens specific. I don’t think I’ve ever used the default setting because every lens will be different.

Setting the actual adjustment will look like this:

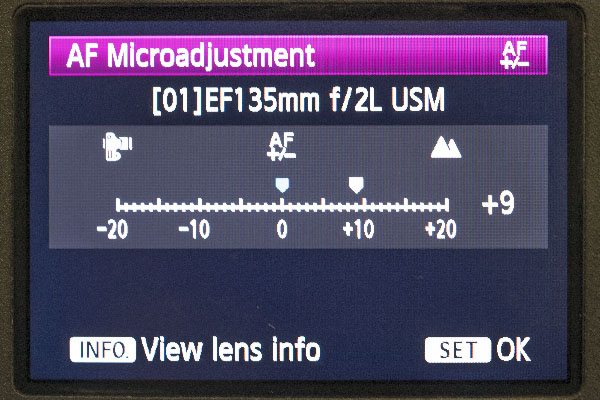

If the lens is front focusing, you move the adjustment to the right (toward +), which moves the point of focus away from the camera. For back focusing, you go the other way. To get this lens dialed in, I found I needed an adjustment of +9:
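The direction rule, and the trial and error of landing on a value like +9, can be sketched in Python as below. This follows the convention described here for Canon (front focus means a positive adjustment); other systems may label or sign their scales differently, so check your manual. The sweep data are invented: each entry pairs a trial adjustment with the mean offset you measured at that setting.

```python
def adjustment_direction(mean_offset_mm: float) -> str:
    """Which way to move the adjustment, per the convention above.

    Negative offset = front focus -> move toward + (focus moves away
    from the camera). Positive offset = back focus -> move toward -.
    """
    if mean_offset_mm < 0:
        return "+"
    if mean_offset_mm > 0:
        return "-"
    return "no change"


def best_setting(sweep: dict[int, float]) -> int:
    """Return the trial adjustment whose measured offset is closest to zero."""
    if not sweep:
        raise ValueError("need at least one trial setting")
    return min(sweep, key=lambda setting: abs(sweep[setting]))


if __name__ == "__main__":
    # Invented results: trial adjustment -> mean offset in mm.
    sweep = {0: -12.5, 3: -8.0, 6: -3.5, 9: 0.5, 12: 4.5}
    print(adjustment_direction(sweep[0]), best_setting(sweep))
```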

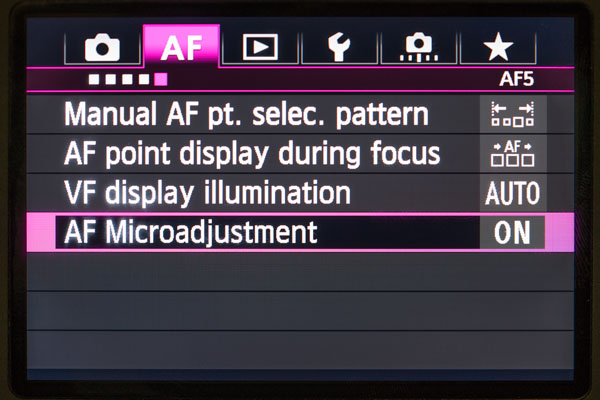

Once you’ve set it, make sure the camera shows the adjustment is turned on:

And here’s what it looks like after adjustment:

Now it matches the first lens! And that’s it. Once you’ve set the adjustment, you shouldn’t have to think about it again.

Is there anything else I should be aware of?

Prime lenses are generally the easiest to deal with. When it comes to zooms, you may be a little more limited. Most current Canon cameras that have AFMA can now adjust each end of the zoom separately, with corresponding W (wide) and T (tele) settings. If your camera can’t adjust each end separately, you can either adjust for the end you use most, or find a compromise you can live with. I’ve seen zooms where one end was fine and the other was off, where both ends were off by different amounts in the same direction, and where both ends were off in opposite directions. Unfortunately, there’s often no universal solution.
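For cameras that store only one value per zoom, one way to find a compromise you can live with is to weight the wide and tele values by how much you shoot at each end. This is just one possible rule, sketched in Python; the weighting scheme and the example values are assumptions, not a manufacturer recommendation.

```python
def compromise_value(wide: int, tele: int, tele_share: float) -> int:
    """Usage-weighted single adjustment for a zoom.

    wide, tele: the values each end needs when tested on its own.
    tele_share: fraction of your shooting done at the long end (0 to 1).
    Note: Python's round() rounds exact halves to the nearest even integer.
    """
    if not 0.0 <= tele_share <= 1.0:
        raise ValueError("tele_share must be between 0 and 1")
    return round(wide * (1 - tele_share) + tele * tele_share)


if __name__ == "__main__":
    # Hypothetical zoom: wide end needs +6, tele end needs +2,
    # and you shoot at the tele end about 75% of the time.
    print(compromise_value(6, 2, 0.75))
```

When the two ends are off in opposite directions (say -4 and +4, used equally), this rule lands at 0, which leaves both ends partly off. That’s the “no universal solution” problem in numeric form.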

With 35mm and 50mm primes, you’ll often find that an adjustment made at shorter focus distances causes longer distances to be off. Other lenses can behave the same way, but for some reason it seems more noticeable at these focal lengths. Unfortunately, there isn’t a good in-camera solution to this, so you’ll want to tune your adjustment for the distances you actually shoot at, which may be difficult with some lenses. But if you have Sigma Art series lenses and the Sigma USB Dock, you can adjust for both closer and longer distances. The dock and its software allow four adjustments at four different distances with those Art (A1) series lenses. On Sigma A1 zooms, you can make those four distance adjustments at four different focal lengths, for a total of 16 possible adjustments! It’s time-consuming, but if you want everything as spot on as possible, this is really the way to go.
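To picture the 16-value table for a Sigma A1 zoom, here is a Python sketch of a 4 x 4 grid, focal length by focus distance. The focal lengths, distance points, and values are invented, and the rule for choosing a cell (use the last calibrated point at or below the current setting) is an assumption for illustration only; how the lens actually handles settings between calibration points isn’t described here.

```python
from bisect import bisect_right

# Hypothetical calibration points and values (not Sigma's actual zones).
FOCAL_POINTS_MM = [24, 35, 50, 70]
DISTANCE_POINTS_M = [0.5, 1.0, 3.0, 10.0]
# Rows follow FOCAL_POINTS_MM, columns follow DISTANCE_POINTS_M.
TABLE = [
    [2, 1, 0, -1],
    [3, 2, 1, 0],
    [4, 3, 1, 0],
    [5, 4, 2, 1],
]


def zone_index(value: float, points: list[float]) -> int:
    """Index of the last calibration point <= value, clamped to the table."""
    return max(0, min(len(points) - 1, bisect_right(points, value) - 1))


def lookup(focal_mm: float, distance_m: float) -> int:
    """Adjustment for a focal length and distance under the assumed rule."""
    row = zone_index(focal_mm, FOCAL_POINTS_MM)
    col = zone_index(distance_m, DISTANCE_POINTS_M)
    return TABLE[row][col]


if __name__ == "__main__":
    print(sum(len(row) for row in TABLE), "adjustments")
    print(lookup(50, 2.0))
```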

The example above needed a moderate adjustment, +9. Larger adjustments aren’t necessarily a sign that something is faulty, though. On very rare occasions, you may find a lens that’s beyond adjustment. I once owned a Nikon 20mm f/2.8D that was like that. I ended up selling it to someone who had zero issues with it on their camera. If you own a lens that’s beyond adjustment, you can either do what I did, or you may be able to send your lens and camera to the manufacturer to have them calibrated together, as long as both lens and camera are from the same manufacturer. Just know that this service won’t be free.

Is that it?

Yep, that’s pretty much it. If you’ve never experienced front or back focus issues, you’re either extremely lucky or you just haven’t noticed them. They’re incredibly common, so it’s good to be aware of them. I’ve had countless discussions that started off with, “Well, none of my lenses have any problems on my camera, so your lens must be broken.” And 95% of the time, the lens just needed a little AFMA to get it right in line. Whether you’ve been shooting for one year or 50 years, you will have to deal with this at some point. If you’re ever in doubt about whether you’re having focus problems or resolution problems, switch to live view and try manual focus or AF. (In live view, your camera most likely reverts to contrast-detection AF, which is much more accurate but slower.) If your images get sharper, the lens is most likely front or back focusing on your camera. And now you know how to fix that.
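The live-view check can be made a bit more objective by scoring sharpness on the same crop of each image. Below is a Python sketch using a simple gradient-energy score (the sum of squared differences between neighbouring pixels). Contrast-detection AF works by finding the lens position that maximizes a contrast score of this general kind, but this is not what any particular camera uses. The sketch assumes both crops cover the same area at the same exposure, given as grayscale values in nested lists, and the 1.2 margin is an arbitrary placeholder.

```python
def gradient_energy(img: list[list[float]]) -> float:
    """Sum of squared horizontal and vertical neighbour differences.

    Higher values mean more fine detail, i.e. a sharper crop.
    """
    total = 0.0
    rows, cols = len(img), len(img[0])
    for y in range(rows):
        for x in range(cols):
            if x + 1 < cols:
                total += (img[y][x + 1] - img[y][x]) ** 2
            if y + 1 < rows:
                total += (img[y + 1][x] - img[y][x]) ** 2
    return total


def suggests_af_offset(pdaf_crop: list[list[float]],
                       live_view_crop: list[list[float]],
                       margin: float = 1.2) -> bool:
    """True if the live-view crop is clearly sharper (hypothetical margin)."""
    return gradient_energy(live_view_crop) > margin * gradient_energy(pdaf_crop)
```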

116 Comments

J.L. Williams ·

Great explanation, but I can’t help wondering when lens calibration became the consumer’s job rather than the manufacturer’s. “Back in the day,” if your 50mm f/1.1 Nikkor wasn’t focusing your model’s heavily mascaraed eyelashes accurately on your Nikon SP, you sent them (the camera and lens, not the eyelashes) back to EPOI and they’d fix it.

Joey Miller ·

Well, on a rangefinder the adjustment is mechanical. I’ve done rangefinder adjustment on my old Mamiya Universal, and it’s not fun, nor would I expect the average user to be able to do it. And you don’t have to calibrate your own gear if you don’t want to. You can always send your camera and lenses back to the manufacturer and have them adjust everything for you, for a price. Because of how phase detection works, there’s just no way for these manufacturers to calibrate every copy of a lens to every copy of a camera in their inventory. It’s a combo specific issue. As far as I know, this has always been the case. We just haven’t had a way to adjust it ourselves until recent years. I guess it’s just in how you view it. It’s either the manufacturers being lazy and making you do it yourself, or it’s them allowing you to make minor adjustments when necessary and skip the expense of having it done professionally. I prefer to think of it as the latter.

J.L. Williams ·

Yeah, I’ve adjusted more than a few rangefinders myself (Nikons are easier than most; the original Mamiya 6 is one of the easiest) but what surprises me is that people now no longer expect new cameras to arrive pre-calibrated, but accept it as their responsibility and applaud manufacturers for giving us a menu to do it: “It’s not a bug, it’s a feature!”

Joey Miller ·

The more difficult things to adjust on my old Mamiya are the lens cams. Having to adjust all 6 lenses I have for that Universal body in conjunction with the rangefinder is the most tedious thing I’ve ever had to do. God forbid the body or any of the lenses take a big bump and I have to do it all over again. One of my 100mm f/2.8s is so off now that I only use it with the ground glass.

But I think the prominence of the need to correct focus has more to do with how exacting digital is compared to the old film days. Most of us back then didn’t bother zooming in at 200% and pixel peeping our images to death. Now that we can do that, the flaws in phase-detect AF are more apparent, and AFMA is really the only way to deal with that on a large scale. Unless you think manufacturers should start selling cameras and lenses with a coupon for free calibration. I don’t think that’ll fly, though, without big price hikes to cover the extra work. Any piece of sophisticated machinery is going to need adjustment for perfect use. I personally would rather be able to do it myself than have to pay someone else to do it.

And people do expect cameras to arrive pre-calibrated and work perfectly right out of the box. I field questions about that very thing all the time. “All my lenses work just fine on my camera, but yours doesn’t, so it’s broken.” And the vast majority of the time it just needed AFMA. The idea that things work correctly as-is without adjustment is the common misconception.

Donnie Robertson ·

If all the lens options came with the camera (a gazillion dollars), that would work. Yet so many people have a drawer full of lenses they love that the manufacturers would be hard pressed to make every lens ever made focus perfectly. Ya think?

J.L. Williams ·

Sure, that’s great logic. Other manufacturers would love to do the same: “Sorry your new car runs crappy, but it will be better once you calibrate the fuel injection system. You can’t expect US to do that for every grade of gas and every locale in the country, can you? Geez, what do you expect for $48,000?”

Chris Jankowski ·

Joey,

Your statement:

>>>>Because of how phase detection works, there’s just no way for these manufacturers to calibrate….

should be qualified:

>>>> Because of how phase detection works in DSLRs and film SLRs, there’s just no way for these manufacturers to calibrate…

There is absolutely no problem with phase detection autofocus as a method. The problem is that the phase detection sensors in DSLRs are located normally at the bottom of the camera and the light to them has to take a contorted path. The system must perfectly align this path with the path to the sensor, which is difficult as the path includes a movable mirror.

This whole silly business of AFMA becomes totally unnecessary the moment you place the AF sensors directly on the main image sensor the way e.g. Sony does it in A7RII. They have nearly 400 PDAF sensors on the main image sensor. This solves several other problems as well e.g. the restriction to the size of the image covered by AF. They cover 80% of the image sensor with PDAF sensors. It is also much simpler and cheaper to manufacture.

Joey Miller ·

You’re right about the dSLR qualification. I guess I figured that was a given after I linked the articles at the beginning, but I should’ve made it more explicit.

And I’m excited about this trend of phase detection on the image sensor itself. The a7R II is one of my favorite cameras right now because it’s doing it right.

Thom Hogan ·

No, he’s not right about DSLRs versus mirrorless. Phase detect done at its fastest means that the lens motor has to end exactly where the camera told it to go. It’s not just about mirror alignment and mount alignment.

Now some mirrorless cameras perform a contrast detect fine tune after the phase detect to deal with the lens motor tolerance issue. The problem with that is that this slows focus from what it could be using phase detect alone, and can lead to “focus chasing” on moving subjects. Having taken plenty of DSLR and mirrorless images of very fast and complicated movements, I can tell you that the mirrorless systems still have a way to go to equal the best DSLR focus performance. What I see on the Sonys, in particular, is that they appear to “cheat” a little. In a continuous shot sequence I don’t get every shot perfectly in focus as I expect with my Nikons (or Canon when I shoot it). Instead, I get a lot of images that are “tolerably in focus,” partly due to DOF, but not nailed.

As I noted above, Olympus has AF Fine Tune in their cameras, partly because there are lenses that you can mount on those cameras that will only do phase detect (e.g. no followup contrast detect).

Joey Miller ·

Ahh, thanks for the clarification. And thanks for all the other helpful insight in these comments!

Greg ·

If the AF is still off, shouldn’t the on-sensor image still be out of phase after focusing if the motor doesn’t go to the correct spot? In that case, there should still be focus chasing, but it would be purely due to the requested adjustment not taking the lens all the way. No contrast detection necessary.

In this kind of situation, I could see AF Fine Tune making PDAF faster, but I don’t understand why it would make it more accurate.

Then again, maybe the trick is that Olympus isn’t doing a post-focus check, and is assuming that it went to the right spot. However, in that case wouldn’t the amount of adjustment needed be dependent on how out-of-focus the image was to start?

Thom Hogan ·

See HF’s comment about PD information. Once focus is within the CoC, there’s no discrimination available. Note that the Japanese tend to use CoCs of 0.033 for full frame, which most of us would tend to regard as way too lax.

AF Fine Tuning doesn’t make focusing faster. It makes focusing more accurate by compensating for the cumulative tolerances in the system. In a DSLR, those tolerances include lens mount position, mirror positions, AF focus sensor position, and lens motor focusing accuracy, as well as a few others. In a mirrorless system, the first three go away but the last one doesn’t. If the lens didn’t go exactly to where the camera commanded it to (and in the expected time ;~), you don’t have focus, you have something close to focus, which is what AF Fine Tuning is designed to adjust for.

With Olympus, when you use m4/3 lenses on the camera, the camera does mostly contrast detect focusing with PD assist. However, if you put a 4/3 lens on the camera, it does PD focusing and NO contrast detect assist. The reason for this has to do with the design of the lens motors. You can design a lens motor that’s optimal for PD (very fast jumps to specific spots) or CD (fast continuous movement through the focus range). You generally prioritize one or the other. The older 4/3 cameras and lenses were for DSLRs that only had PD, so those lenses were optimized for PD.

Greg ·

After doing a fair amount of reading over the weekend, I think I have a better understanding of what’s going on here.

On-sensor PDAF (OSPDAF) seems to work more like traditional manual focus assists than modern traditional PDAF (TPDAF) systems with field lenses. The following paper was especially helpful in describing the older manual-focus assists:

http://dougkerr.net/Pumpkin/articles/Split_Prism.pdf

The reason OSPDAF would still need AF fine tune seems to be a combination of margin of error in the AF system and the communication protocols used for the older lenses. From what I can tell, TPDAF systems used protocols that looked like “move the lens to here,” did a cursory check of whether the focus was within the margin of error, and then took the shot. In this case, AF fine tune would still be needed if the lens’s concept of focus distance/location was slightly off relative to the actual focus distance, so that all distances in the lens communication needed to be treated as some small percentage off.

If the focus wasn’t within the margin of error after the requested focusing, we would consider the focus system to be broken – this is presumably outside the scope of AF fine tune.

Something I’m not clear on is if PDAF systems in general are able to determine the correct focus point more precisely when significantly out of focus than when almost in focus. Is this true?

Thom Hogan ·

That document is a bit old, and predates on-sensor PD.

I’m going to quote the most intelligent thing I’ve heard about tech design, spoken to me in the back seat of a cab in Las Vegas in 1981 by the remarkable Lee Felsenstein: “if you look at a close enough level, all digital systems eventually become analog.”

What he meant by that is that while, yes, we deal with 1s and 0s in the digital world, how long does it take to go from a 1 to a 0, and what is the state in between? ;~)

Thing is, everyone’s assuming in their comments that alignment and performance is “perfect.” It is not. Yes, the lens may have been instructed to go to X, but when you look closely enough, you discover X has tolerance to it. It could end up at X-Y, X, or X+Y. Making a lens motor stop at a specific place is an analog game, not a digital one. It isn’t “always right” or “always wrong.” In a well-designed system, the performance will tend to fall in a bell curve, which is one reason why AF Fine Tuning works better than no AF Fine Tuning for DSLRs: you align to the center of the bell curve with an AF Fine Tune value.
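The bell-curve point can be illustrated with a small Python simulation. It assumes, purely for illustration, that each focus attempt lands at a fixed systematic offset plus normally distributed scatter (both values invented). The fine-tune value that re-centres the curve is simply the negative of the average landing; note that it shifts the whole curve but does nothing to narrow it.

```python
import random
from statistics import mean, stdev


def simulate_landings(n: int, bias: float, spread: float, seed: int = 1) -> list[float]:
    """Hypothetical focus landings: fixed bias plus Gaussian scatter."""
    rng = random.Random(seed)
    return [rng.gauss(bias, spread) for _ in range(n)]


def fine_tune_value(landings: list[float]) -> float:
    """Correction that moves the centre of the distribution to zero."""
    return -mean(landings)


if __name__ == "__main__":
    landings = simulate_landings(2000, bias=6.0, spread=2.0)
    correction = fine_tune_value(landings)
    corrected = [x + correction for x in landings]
    print(f"correction {correction:+.2f}")
    print(f"mean {mean(corrected):+.2f}, spread {stdev(corrected):.2f}")
```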

“Margin of error” is a dangerous concept here. No doubt engineers are designing to that, but we don’t know what their margin of error is ;~). For a long time, Canon was rounding the Zeiss CoC value to 0.035 for some reason. Others round it to 0.03. And “round” is a significant term in this respect. Would an implied value of 0.034 be within the “margin of error” if you round to 0.03? And remember, the Zeiss value (actually 0.025) was originally derived for “normal vision at normal viewing distance of a modest sized print.”
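To see why the chosen CoC matters, here is a Python sketch of the standard thin-lens depth-of-field formulas, comparing 0.025 mm (the Zeiss value) with 0.033 mm. The 135mm f/2 at 3 m is just an example setup borrowed from the test lens in the article. A larger CoC makes the zone of “acceptable” focus wider, and so loosens any tolerance that is defined against it.

```python
import math


def depth_of_field(focal_mm: float, f_number: float, coc_mm: float,
                   subject_mm: float) -> tuple[float, float]:
    """Near and far limits of acceptable focus (mm), thin-lens approximation."""
    hyperfocal = focal_mm ** 2 / (f_number * coc_mm) + focal_mm
    near = subject_mm * (hyperfocal - focal_mm) / (hyperfocal + subject_mm - 2 * focal_mm)
    if subject_mm >= hyperfocal:
        far = math.inf  # everything beyond the near limit is acceptably sharp
    else:
        far = subject_mm * (hyperfocal - focal_mm) / (hyperfocal - subject_mm)
    return near, far


if __name__ == "__main__":
    for coc in (0.025, 0.033):
        near, far = depth_of_field(135, 2.0, coc, 3000)
        print(f"CoC {coc} mm: {near:.0f} to {far:.0f} mm (total {far - near:.0f} mm)")
```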

Greg ·

More generally, I’m trying to understand two things:

1.) Why does modern OSPDAF still need AF Fine Tune?

2.) Will all future OSPDAF still need this due to inherent limitations in the performance of the system, or is this just an artifact of current designs?

The reason for focusing on that document [excuse the pun] is that it describes a focusing method without a lens between the primary lens and the separator lenses, which is optically similar to the mechanism described in the Fujifilm OSPDAF description (http://www.dpreview.com/news/2010/8/5/fujifilmpd) and the Sony patent. The document uses large prisms that the user observes, whereas the Fujifilm method uses masked pixels with individual microlenses that the camera observes. The overall effect of using phase detection at an existing plane of focus without an intervening lens is the same.

My point was, optically, OSPDAF is more like the old dual prism method than it is like the modern 3-lens method.

Your discussion of tolerances is exactly the point I was trying to make. If the design is “Go to X” with the intention being that it means going anywhere between X-Y and X+Y, it’s a problem if instead it goes to X-Y+Z to X+Y+Z. AF Fine Tune tells the camera to subtract Z before sending the request. (This is all described as constant offsets; I suspect the actual adjustment is more complicated than that.)

With a design (like most CDAF) where the communication looks more like “move a lot” “Not there yet” “went too far” there is more correcting done when we’re close to in focus. I think this is the point you were trying to make about motor optimization, although your point was more on the physical motor design side required to support a protocol like that. This explains why modern OSPDAF systems still need AF Fine Tune today. Could a system implementing OSPDAF use this same lens communication method of fine-tuning the result that CDAF uses, or is there some inherent meaningful limitation on the precision that OSPDAF can get? It seems to me like the limitation is the sensor pixel size, but that wouldn’t be a limitation at all if the image pixels are the same size as the in-plane focus pixels, would it?

(I’m going to devote a separate response to mosswings on baselines and OSPDAF. It may be that the baseline is the key limitation on phase detection focus precision.)

Thom Hogan ·

1. One of the tolerances you’re trying to remove on all PD systems is where the lens lands upon instruction to go to X. It doesn’t always go to X. In many of the on-sensor PD systems they follow the PD move with contrast detect moves. However, now you have a system that can end up slower than a DSLR PD system that doesn’t have to move twice (assuming it’s been AF Fine Tuned). Moreover, you can’t keep doing CD until you have absolute focus, otherwise you’re in what I call “chase mode” on moving subjects. There’s a tolerance built into the CD “focus” determination, too.

2. Thing is, we keep getting more sampling (resolution). This additional sampling is giving us better recording of what actually happened in terms of focus. There are tolerances in every physical system. That’s why I mentioned the “at some level all digital systems are analog” thing. ALL. Not some. Not many. All. The real question here is do we eventually hit a level where the vast majority say “that’s good enough”? I suspect that if we keep pushing sensor resolutions up, the answer is no.

What will happen is that we’ll run CD motors faster, I think. We’ll also use defocus maps like Panasonic to get some PD-like information.

mosswings ·

I don’t think that this is true – it depends on the system. Remember that off-sensor PDAF systems work by comparing the displacement of image pairs along a line. The zero-point of the line is some distance out from the nominal center of the focus zone, so that small deviations from perfect focus are easily seen by the system and the images don’t overlap. The effective aperture of the off-sensor PDAF system is also quite high – f/28 or so, which means that the images don’t lose definition with defocused images owing to the high DOF of the AF optics.
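The image-pair comparison described here can be sketched as a search for the shift that best lines up two 1-D signals. This Python sketch uses a mean-absolute-difference match over integer shifts; the signals are invented, and real AF modules work from calibrated sensor geometry and sub-pixel estimates. The size of the shift indicates how far out of focus the subject is and its sign indicates the direction, although, as the comment notes, off-sensor systems measure that shift against a nonzero in-focus reference separation rather than against zero.

```python
def best_shift(left: list[float], right: list[float], max_shift: int) -> int:
    """Integer shift s minimizing mean |left[i] - right[i + s]| over the overlap."""
    best, best_cost = 0, float("inf")
    for s in range(-max_shift, max_shift + 1):
        diffs = [abs(left[i] - right[i + s])
                 for i in range(len(left)) if 0 <= i + s < len(right)]
        if not diffs:
            continue  # no overlap at this shift
        cost = sum(diffs) / len(diffs)
        if cost < best_cost:
            best, best_cost = s, cost
    return best


if __name__ == "__main__":
    left = [0, 0, 1, 5, 9, 5, 1, 0, 0, 0, 0]
    right = [0, 0, 0, 0, 1, 5, 9, 5, 1, 0, 0]  # same pattern, displaced by 2
    print(best_shift(left, right, 4))
```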

OSPDAF systems, to my understanding, don’t have this image separation to aid them, so they struggle in two ways: overlap of the image pairs, and lack of field stops or other discriminating devices in the optical path. I’m hedging my bets about the effective aperture of the OSPDAF system, because it can be high.

But it seems like you’re asking a more nuanced question than your phrasing implies.

Greg ·

From what I can tell OSPDAF just works differently than off-sensor PDAF. Image separation on PDAF is really about image separation on the primary lens, not the distance between the sensors themselves. I see no reason we couldn’t have a large baseline with sensors that are next to each other, so long as they are masked (or lensed) differently. This is exactly what the Fujifilm description does. (http://www.dpreview.com/news/2010/8/5/fujifilmpd)

(Dealing with the center focus point here, not addressing potential issues with off-axis focus points.)

With the mask implemented the way it is on the Fujifilm description, the baseline would be lens-limited (extends out to the edge of the lens) but would have a relatively low aperture of f/8 to f/2 (roughly 1.4x the lens aperture, since they’re masking off half of the focus pixels.) If, rather than masking half, the mask started at the f/8 circle and masked down to a quarter of the arc (it probably really does) then that would be more like an effective aperture of f/16 to f/3.3. If we’re willing to give up the lens-limited baseline, we could restrict the outer edge in order to further increase the effective aperture.
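The “roughly 1.4x” figure follows from light gathering: if the unmasked part of a focus pixel’s view covers area A′ of the full pupil area A, the equivalent f-number scales with the square root of the area ratio. This treats only light gathering, not baseline geometry, and the f/5.6 and f/1.4 lens apertures in the example are assumptions that happen to reproduce the f/8 to f/2 range.

$$
N_{\text{eff}} \approx N\sqrt{\frac{A}{A'}}, \qquad A' = \frac{A}{2} \;\Rightarrow\; N_{\text{eff}} \approx \sqrt{2}\,N \approx 1.41\,N
$$

For example, f/5.6 becomes about f/7.9 and f/1.4 becomes about f/2.0.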

Based on this, I see no reason why OSPDAF couldn’t have similar precision to CDAF, but it will be a trade-off with out-of-focus accuracy due to having low apertures. Am I missing something? Certainly if the outer edge of the lens isn’t masked then theoretical focus precision should be pretty close to CDAF since we’re using the same pixel size in the same plane with the same primary lens aperture, shouldn’t it?

mosswings ·

These are good questions, Greg, and I don’t have a definitive answer for them, but I do have some general thoughts.

It is true that PDAF isn’t a “one-and-done” sort of technique, but is constantly sampling and correcting; it just has more information to work with than straight CDAF, and doesn’t fiddle around as much. Many times, not much at all. There is nothing preventing any PDAF implementation from being as precise as CDAF, and if you play a bit with your camera you will observe that PDAF isn’t just giving up and going home after one focusing cycle. The issue revolves around where the algorithm gives up. Here, we see that perhaps the PDAF vendors are opting for more speed vs. more repeatability (the subject of another posting on this website), but Canon seems to have made significant strides in making PDAF as repeatable and precise as CDAF. So I see your last conjecture as perhaps overthinking the problem.

Where OSPDAF has some pretty fundamental limitations WRT PDAF is in its light sensitivity (which is pretty poor) and its limited ability to discriminate particular areas in the frame, which leads to poor complex-field tracking (a consequence of that very simple left-right masking).

I will admit to being a bit boggled by the tremendous number of big-data OSPDAF patents taken out over the recent past, but one thing seems clear: manufacturers are relying more and more on areal signal processing tricks to augment the rather weak signal strengths and fuzzy phase information available from OSPDAF sensors. PDAF is remarkably effective with an extremely sparse data stream: one line in the X direction, one in the Y, great optics.

I’d suggest that you take a look at that Nikon programmable-AF patent that I posted in an earlier comment on this thread. It suggests much about what is possible with a high-resolution areal traditional PDAF sensor, and also what may be unavoidable if the same pixels are being used for both imaging and AF.

Thom Hogan ·

This is an excellent question, but I think you’re not going to like the answer ;~).

“Back in the day” we weren’t sampling the data as reliably or at as high a level as we are today. Film wasn’t necessarily flat at the film plane, and unless you had a great loupe and good eyes, you wouldn’t see the offsets that we can easily see today. Worse still, the manufacturers didn’t control for the tight tolerances that are needed today. This is particularly true of Nikon: older, pre-digital Nikkor designs seem to not even fall into a bell curve in terms of how they are off in both mount position and lens motor focus placement.

So, at a minimum, we have legacy issues that can only be dealt with via some post-mortem adjustment. I suppose we could ask the camera makers to do that for us by sending lenses in and having them CHARGE us for that, but I think the way they’ve done this is fine.

As for EPOI, they almost certainly had higher margins than the NikonUSA subsidiary does today, and fewer dealers and customers to deal with.

J.L. Williams ·

Great explanation, but I can't help wondering when lens calibration became the consumer's job rather than the manufacturer's. "Back in the day," if your 50mm f/1.1 Nikkor wasn't focusing your model's heavily-mascared eyelashes accurately on your Nikon SP, you sent them (the camera and lens, not the eyelashes) back to EPOI and they'd fix it.

Joey Miller ·

Well, on a rangefinder the adjustment is mechanical. I've done rangefinder adjustment on my old Mamiya Universal, and it's not fun, nor would I expect the average user to be able to do it. And you don't have to calibrate your own gear if you don't want to. You can always send your camera and lenses back to the manufacturer and have them adjust everything for you, for a price. Because of how phase detection works, there's just no way for these manufacturers to calibrate every copy of a lens to every copy of a camera in their inventory. It's a combo specific issue. As far as I know, this has always been the case. We just haven't had a way to adjust it ourselves until recent years. I guess it's just in how you view it. It's either the manufacturers being lazy and making you do it yourself, or it's them allowing you to make minor adjustments when necessary and skip the expense of having it done professionally. I prefer to think of it as the latter.

J.L. Williams ·

Yeah, I've adjusted more than a few rangefinders myself (Nikons are easier than most; the original Mamiya 6 is one of the easiest) but what surprises me is that people now no longer expect new cameras to arrive pre-calibrated, but accept it as their responsibility and applaud manufacturers for giving us a menu to do it: "It's not a bug, it's a feature!"

Joey Miller ·

The more difficult things to adjust on my old Mamiya are the lens cams. Having to adjust all 6 lenses I have for that Universal body in conjunction with the rangefinder is the most tedious thing I've ever had to do. God forbid the body or any of the lenses take a big bump and I have to do it all over again. One of my 100mm f/2.8s is so off now that I only use it with the ground glass.

But I think the prominence of the need to correct focus has more to do with how exacting digital is compared to the old film days. Most of us back then didn't bother zooming in at 200% and pixel peeping our images to death. Now that we can do that, the flaws in phase-detect AF are more apparent, and AFMA is really the only way to deal with that on a large scale. Unless you think manufacturers should start selling cameras and lenses with a coupon for free calibration. I don't think that'll fly, though, without big price hikes to cover the extra work. Any piece of sophisticated machinery is going to need adjustment for perfect use. I personally would rather be able to do it myself than have to pay someone else to do it.

And people do expect cameras to arrive pre-calibrated and work perfectly right out of the box. I field questions about that very thing all the time. "All my lenses work just fine on my camera, but yours doesn't, so it's broken." And the vast majority of the time it just needed AFMA. The idea that things work correctly as-is without adjustment is the common misconception.

Chris Jankowski ·

Joey,

Your statement:

>>>>Because of how phase detection works, there's just no way for these manufacturers to calibrate....

should be qualified:

>>>> Because of how phase detection works in DSLRs and film SLRs, there's just no way for these manufacturers to calibrate...

There is absolutely no problem with phase detection autofocus as a method. The problem is that the phase detection sensors in DSLRs are normally located at the bottom of the camera, and the light reaching them has to take a contorted path. The system must perfectly align this path with the path to the image sensor, which is difficult because the path includes a movable mirror.

This whole silly business of AFMA becomes totally unnecessary the moment you place the AF sensors directly on the main image sensor the way e.g. Sony does it in A7RII. They have nearly 400 PDAF sensors on the main image sensor. This solves several other problems as well e.g. the restriction to the size of the image covered by AF. They cover 80% of the image sensor with PDAF sensors. It is also much simpler and cheaper to manufacture.

Joey Miller ·

You're right about the dSLR qualification. I guess I figured that was a given after I linked the articles at the beginning, but I should've made it more explicit.

And I'm excited about this trend of phase detection on the image sensor itself. The a7R II is one of my favorite cameras right now because it's doing it right.

Thom Hogan ·

No, he's not right about DSLRs versus mirrorless. Phase detect done at its fastest means that the lens motor has to end exactly where the camera told it to go. It's not just about mirror alignment and mount alignment.

Now some mirrorless cameras perform a contrast detect fine tune after the phase detect to deal with the lens motor tolerance issue. The problem with that is that this slows focus from what it could be using phase detect alone, and can lead to "focus chasing" on moving subjects. Having taken plenty of DSLR and mirrorless images of very fast and complicated movements, I can tell you that the mirrorless systems still have a way to go to equal the best DSLR focus performance. What I see on the Sonys, in particular, is that they appear to "cheat" a little. In a continuous shot sequence I don't get every shot perfectly in focus as I expect with my Nikons (or Canon when I shoot it). Instead, I get a lot of images that are "tolerably in focus," partly due to DOF, but not nailed.

As I noted above, Olympus has AF Fine Tune in their cameras, partly because there are lenses you can mount on those cameras that will only do phase detect (i.e., with no follow-up contrast detect).

Greg ·

If the AF is still off, shouldn't the on-sensor image still be out of phase after focusing if the motor doesn't go to the correct spot? In that case, there should still be focus chasing, but it would be purely due to the requested adjustment not taking the lens all the way. No contrast detection necessary.

In this kind of situation, I could see AF Fine Tune making PDAF faster, but I don't understand why it would make it more accurate.

Then again, maybe the trick is that Olympus isn't doing a post-focus check, and is assuming that it went to the right spot. However, in that case wouldn't the amount of adjustment needed be dependent on how out-of-focus the image was to start?

Thom Hogan ·

See HF's comment about PD information. Once focus gets to within the CoC, there's no discrimination available. Note that the Japanese tend to use CoCs of 0.033 mm for full frame, which most of us would regard as way too lax.

AF Fine Tuning doesn't make focusing faster. It makes it more accurate by compensating for the cumulative tolerances in the system. In a DSLR, those tolerances include lens mount position, mirror positions, AF focus sensor position, and lens motor focusing accuracy, as well as a few others. In a mirrorless system, the first three go away but the last one doesn't. If the lens didn't go exactly to where the camera commanded it to (and in the expected time ;~), you don't have focus, you have something close to focus, which is what AF Fine Tuning is designed to adjust for.

With Olympus, when you use m4/3 lenses on the camera, the camera does mostly contrast detect focusing with PD assist. However, if you put a 4/3 lens on the camera, it does PD focusing and NO contrast detect assist. The reason for this has to do with the design of the lens motors. You can design a lens motor that's optimal for PD (very fast jumps to specific spots) or CD (fast continuous movement through the focus range). You generally prioritize one or the other. The older 4/3 cameras and lenses were for DSLRs that only had PD, so those lenses were optimized for PD.
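As a rough illustration of Thom's point that the focus error is the sum of several tolerances, here is a toy model in which each tolerance is a signed focus offset in arbitrary units. The component values are hypothetical, and real errors need not add linearly; the sketch only shows why the same lens can land differently on two bodies, and why removing the body-side terms (as Thom describes for mirrorless) still leaves the lens motor term.

```python
from dataclasses import dataclass


@dataclass
class Tolerances:
    """Hypothetical signed focus offsets for one body/lens pairing (arbitrary units)."""
    mount_position: float
    mirror_position: float
    af_sensor_position: float
    lens_motor: float


def total_offset(t: Tolerances, mirrorless: bool = False) -> float:
    """Sum the offsets. Following the comment above, a mirrorless body drops
    the mount, mirror, and AF sensor terms, but the lens motor term remains."""
    if mirrorless:
        return t.lens_motor
    return t.mount_position + t.mirror_position + t.af_sensor_position + t.lens_motor


# The same lens (identical lens_motor error) on two hypothetical DSLR bodies:
# the body-side errors differ, so the combined offset is combination-specific.
body_a = Tolerances(mount_position=1.5, mirror_position=-0.5, af_sensor_position=2.0, lens_motor=-1.0)
body_b = Tolerances(mount_position=-2.0, mirror_position=0.5, af_sensor_position=-1.0, lens_motor=-1.0)

print(total_offset(body_a))                   # 2.0
print(total_offset(body_b))                   # -3.5
print(total_offset(body_a, mirrorless=True))  # -1.0
```

One body would need a correction in one direction and the other body in the opposite direction, even though the lens is the same copy.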

Greg ·

After doing a fair amount of reading over the weekend, I think I have a better understanding of what's going on here.

OSPDAF seems to work more like traditional manual focus assists than modern Traditional PDAF (TPDAF) systems with field lenses. The following paper was especially helpful in describing the older manual-focus assists:

http://dougkerr.net/Pumpkin...

The reason OSPDAF would still need AF fine tune seems to be a combination of the margin of error in the AF system and the communication protocols used for the older lenses. From what I can tell, TPDAF systems used protocols that looked like "move the lens to here," did a cursory check if the focus was within the margin of error, and then took the shot. In this case, AF fine tune would still be needed if the lens's concept of focus distance/location was slightly off relative to the actual focus distance, and so all distances in the lens communication needed to be treated as some small percentage off.

If the focus wasn't within the margin of error after the requested focusing, we would consider the focus system to be broken - this is presumably outside the scope of AF fine tune.

Something I'm not clear on is if PDAF systems in general are able to determine the correct focus point more precisely when significantly out of focus than when almost in focus. Is this true?

Thom Hogan ·

That document is a bit old, and predates on-sensor PD.

I'm going to quote the most intelligent thing I've heard about tech design, spoken to me in the back seat of a cab in Las Vegas in 1981 by the remarkable Lee Felsenstein: "if you look at a close enough level, all digital systems eventually become analog."

What he meant by that is that while, yes, we deal with 1s and 0s in the digital world, how long does it take to go from a 1 to a 0, and what is the state in between? ;~)

Thing is, everyone's assuming in their comments that alignment and performance is "perfect." It is not. Yes, the lens may have been instructed to go to X, but when you look closely enough, you discover X has tolerance to it. It could end up at X-Y, X, or X+Y. Making a lens motor stop at a specific place is an analog game, not a digital one. It isn't "always right" or "always wrong." In a well-designed system, the performance will tend to fall in a bell curve, which is one reason why AF Fine Tuning works better than no AF Fine Tuning for DSLRs: you align to the center of the bell curve with an AF Fine Tune value.

"Margin of error" is a dangerous concept here. No doubt engineers are designing to that, but we don't know what their margin of error is ;~). For a long time, Canon was rounding the Zeiss CoC value to 0.035 for some reason. Others round it to 0.03. And "round" is a significant term in this respect. Would an implied value of 0.034 be within the "margin of error" if you round to 0.03? And remember, the Zeiss value (actually 0.025) was originally derived for "normal vision at normal viewing distance of a modest-sized print."
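Thom's bell-curve argument suggests how a fine-tune value gets chosen: shoot a batch of test frames, measure where focus actually landed relative to the target, and shift by the negative of the average error. Below is a minimal sketch. It assumes the landing errors have already been measured in one consistent unit. The sample numbers, the unit, the step size, and the sign convention (positive means back focus) are all hypothetical. Real cameras use their own undocumented step scale and sign conventions.

```python
import math
from statistics import mean, pstdev


def fine_tune_steps(landing_errors, step_size=1.0):
    """Suggest how many adjustment steps would re-center the error distribution.

    landing_errors: measured signed focus errors from repeated test shots
        (hypothetical units; positive = landed behind the target).
    step_size: focus shift produced by one adjustment step, in the same units
        (an assumption; this sketch does not model any real camera's scale).
    """
    return -round(mean(landing_errors) / step_size)


# Ten hypothetical test shots that consistently land slightly behind the target.
errors = [2.1, 1.8, 2.4, 1.9, 2.0, 2.2, 1.7, 2.3, 2.0, 1.6]
steps = fine_tune_steps(errors)
corrected = [e + steps * 1.0 for e in errors]

print(steps)                                            # -2
print(abs(mean(corrected)) < 1e-9)                      # True: re-centered
print(math.isclose(pstdev(corrected), pstdev(errors)))  # True: spread unchanged
```

The last line illustrates the limit of the technique. A fine-tune value moves the center of the bell curve but leaves its width unchanged, so shot-to-shot inconsistency cannot be adjusted out this way.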

Greg ·

More generally, I'm trying to understand two things:

1.) Why does modern OSPDAF still need AF Fine Tune?

2.) Will all future OSPDAF still need this due to inherent limitations in the performance of the system, or is this just an artifact of current designs?

The reason for focusing on that document [excuse the pun] is that it describes a focusing method without a lens between the primary lens and the separator lenses, which is optically similar to the mechanism described in the Fujifilm OSPDAF description (http://www.dpreview.com/new...) and the Sony patent. The document uses large prisms that the user observes, whereas the Fujifilm method uses masked pixels with individual microlenses that the camera observes. The overall effect of using phase detection at an existing plane of focus without an intervening lens is the same.

My point was, optically, OSPDAF is more like the old dual prism method than it is like the modern 3-lens method.

Your discussion of tolerances is exactly the point I was trying to make. If the design is "Go to X," with the intention that the lens lands anywhere between X-Y and X+Y, it's a problem if it instead lands anywhere between X-Y+Z and X+Y+Z. AF Fine Tune tells the camera to subtract Z before sending the request. (This is all described as constant offsets; I suspect the actual adjustment is more complicated than that.)

With a design (like most CDAF) where the communication looks more like "move a lot" "Not there yet" "went too far" there is more correcting done when we're close to in focus. I think this is the point you were trying to make about motor optimization, although your point was more on the physical motor design side required to support a protocol like that. This explains why modern OSPDAF systems still need AF Fine Tune today. Could a system implementing OSPDAF use this same lens communication method of fine-tuning the result that CDAF uses, or is there some inherent meaningful limitation on the precision that OSPDAF can get? It seems to me like the limitation is the sensor pixel size, but that wouldn't be a limitation at all if the image pixels are the same size as the in-plane focus pixels, would it?

(I'm going to devote a separate response to mosswings on baselines and OSPDAF. It may be that the baseline is the key limitation on phase detection focus precision.)
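Greg's description of contrast-detect communication ("move a lot," "not there yet," "went too far") is essentially a hill climb on image contrast. Below is a minimal sketch. The `contrast()` function is hypothetical and peaks at an assumed true focus position. The step sizes and stopping rule are illustrative. Real implementations also handle noise, motor limits, and moving subjects, none of which are modeled here.

```python
def contrast(position, true_focus=37.0):
    """Hypothetical contrast curve: peaks at true_focus and falls off on either side."""
    return 1.0 / (1.0 + (position - true_focus) ** 2)


def cdaf_hill_climb(start, step=16.0, min_step=0.25, max_moves=100):
    """Contrast-detect search.

    Keep moving while contrast rises ("not there yet"). When it falls
    ("went too far"), reverse direction and halve the step. Stop once the
    step is smaller than min_step or after max_moves moves.
    Returns (final_position, number_of_moves).
    """
    pos = start
    best = contrast(pos)
    direction = 1.0
    moves = 0
    while step >= min_step and moves < max_moves:
        candidate = pos + direction * step
        moves += 1
        c = contrast(candidate)
        if c > best:
            pos, best = candidate, c   # improvement: accept and keep going
        else:
            direction = -direction     # overshoot: turn around...
            step /= 2.0                # ...and take finer steps
    return pos, moves


pos, moves = cdaf_hill_climb(start=0.0)
print(pos, moves)
```

The shrinking steps near the peak are where the extra motor moves come from. This is why a contrast-detect finish is more precise than a single phase-detect jump but also slower.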

Thom Hogan ·

1. One of the tolerances you're trying to remove on all PD systems is where the lens lands upon instruction to go to X. It doesn't always go to X. In many of the on-sensor PD systems they follow the PD move with contrast detect moves. However, now you have a system that can end up slower than a DSLR PD system that doesn't have to move twice (assumes it's been AF Fine Tuned). Moreover, you can't keep doing CD until you have absolute focus, otherwise you're in what I call "chase mode" on moving subjects. There's a tolerance built into the CD "focus" determination, too.

2. Thing is, we keep getting more sampling (resolution). This additional sampling is giving us better recording of what actually happened in terms of focus. There are tolerances in every physical system. That's why I mentioned the "at some level all digital systems are analog" thing. ALL. Not some. Not many. All. The real question here is do we eventually hit a level where the vast majority say "that's good enough"? I suspect that if we keep pushing sensor resolutions up, the answer is no.

What will happen is that we'll run CD motors faster, I think. We'll also use defocus maps, as Panasonic does, to get some PD-like information.

mosswings ·

I don't think that this is true - it depends on the system. Remember that off-sensor PDAF systems work by comparing the displacement of image pairs along a line. The zero-point of the line is some distance out from the nominal center of the focus zone, so that small deviations from perfect focus are easily seen by the system and the images don't overlap. The effective aperture of the off-sensor PDAF system is also quite high - f/28 or so, which means that the images don't lose definition with defocused images owing to the high DOF of the AF optics.

OSPDAF systems, to my understanding, don't have this image separation to aid them, so they struggle in two ways: overlap of the image pairs, and lack of field stops or other discriminating devices in the optical path. I'm hedging my bets about the effective aperture of the OSPDAF system, because it can be high.

But it seems like you're asking a more nuanced question than your phrasing implies.
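mosswings describes off-sensor PDAF as comparing the displacement of image pairs along a line. Computationally, that means finding the shift that best aligns two 1-D intensity profiles. The sketch below does this with a brute-force sum-of-absolute-differences search. The signals, the search range, and the integer-sample resolution are hypothetical. Mapping the shift to a defocus direction and distance depends on sensor geometry and is not modeled. This is not any manufacturer's actual algorithm.

```python
def best_shift(left, right, max_shift):
    """Return the integer shift s that minimizes the mean absolute difference
    between left[i] and right[i + s] over the overlapping samples."""
    n = len(left)
    best_s, best_cost = 0, float("inf")
    for s in range(-max_shift, max_shift + 1):
        pairs = [(left[i], right[i + s]) for i in range(n) if 0 <= i + s < n]
        cost = sum(abs(a - b) for a, b in pairs) / len(pairs)
        if cost < best_cost:
            best_s, best_cost = s, cost
    return best_s


# A hypothetical edge profile seen by one AF line sensor...
left = [0, 0, 0, 1, 3, 7, 9, 10, 10, 10, 10, 10]
# ...and the same profile displaced by 3 samples on the partner sensor.
right = [0, 0, 0, 0, 0, 0, 1, 3, 7, 9, 10, 10]

print(best_shift(left, right, max_shift=5))  # 3
print(best_shift(left, left, max_shift=5))   # 0 (images coincide)
```

The magnitude of the shift indicates how far the lens is from focus, and its sign indicates which way to move. This is why a phase-detect system can command a single jump instead of searching the way contrast detect does.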

Greg ·

From what I can tell, OSPDAF just works differently than off-sensor PDAF. Image separation on PDAF is really about image separation on the primary lens, not the distance between the sensors themselves. I see no reason we couldn't have a large baseline with sensors that are next to each other, so long as they are masked (or lensed) differently. This is exactly what the Fujifilm description does. (http://www.dpreview.com/new...)

(Dealing with the center focus point here, not addressing potential issues with off-axis focus points.)

With the mask implemented the way it is on the Fujifilm description, the baseline would be lens-limited (extends out to the edge of the lens) but would have a relatively low aperture of f/8 to f/2 (roughly 1.4x the lens aperture, since they're masking off half of the focus pixels.) If, rather than masking half, the mask started at the f/8 circle and masked down to a quarter of the arc (it probably really does) then that would be more like an effective aperture of f/16 to f/3.3. If we're willing to give up the lens-limited baseline, we could restrict the outer edge in order to further increase the effective aperture.

Based on this, I see no reason why OSPDAF couldn't have similar precision to CDAF, but it will be a trade-off with out-of-focus accuracy due to having low apertures. Am I missing something? Certainly if the outer edge of the lens isn't masked then theoretical focus precision should be pretty close to CDAF since we're using the same pixel size in the same plane with the same primary lens aperture, shouldn't it?

mosswings ·

These are good questions, Greg, and I don't have a definitive answer for them, but I do have some general thoughts.

It is true that PDAF isn't a "one-and-done" sort of technique, but is constantly sampling and correcting; it just has more information with which to work than straight CDAF, and doesn't fiddle around as much. Many times, not much at all. There is nothing preventing any PDAF implementation from being as precise as CDAF, and if you play a bit with your camera you will observe that PDAF isn't just giving up and going home after one focusing cycle. The issue revolves around where the algorithm gives up. Here, we see that perhaps the PDAF vendors are opting for more speed vs. more repeatability (the subject of another posting on this website), but Canon seems to have made significant strides in making PDAF as repeatable and precise as CDAF. So I see your last conjecture as perhaps overthinking the problem.

Where OSPDAF has some pretty fundamental limitations WRT PDAF is in its light sensitivity (which is pretty poor) and in its limited ability to discriminate particular areas in the frame, which leads to poor complex-field tracking (a consequence of that very simple left-right masking).

I will admit to being a bit boggled by the tremendous number of big-data OSPDAF patents taken out over the recent past, but one thing seems clear: manufacturers are relying more and more on areal signal processing tricks to augment the rather weak signal strengths and fuzzy phase information available from OSPDAF sensors. PDAF is remarkably effective with an extremely sparse data stream: one line in the X direction, one in the Y, great optics.

I'd suggest that you take a look at that Nikon programmable-AF patent that I posted in an earlier comment on this thread. It suggests much about what is possible with a high-resolution areal traditional PDAF sensor, and also what may be unavoidable if the same pixels are being used for both imaging and AF.

Thom Hogan ·

This is an excellent question, but I think you're not going to like the answer ;~).

"Back in the day" we weren't sampling the data as reliably or at as high a level as we are today. Film wasn't necessarily flat at the film plane, and unless you had a great loupe and good eyes, you wouldn't see the offsets that we can easily see today. Worse still, the manufacturers didn't control for the tight tolerances that are needed today. This is particularly true of Nikon: older, pre-digital Nikkor designs seem to not even fall into a bell curve in terms of how they are off in both mount position and lens motor focus placement.

So, at a minimum, we have legacy issues that can only be dealt with via some post-mortem adjustment. I suppose we could ask the camera makers to do that for us by sending lenses in and having them CHARGE us for that, but I think the way they've done this is fine.

As for EPOI, they almost certainly had higher margins than the NikonUSA subsidiary does today, and fewer dealers and customers to deal with.

Clint Cheng ·

It should be noted that depth of field distribution is not always 50/50; it varies with the focal length of the lens being tested.

For example:

Focal length : Rear : Front
10mm : 70.2% : 29.8%
20mm : 60.1% : 39.9%
50mm : 54.0% : 46.0%
100mm : 52.0% : 48.0%
200mm : 51.0% : 49.0%
400mm : 50.5% : 49.5%

Joey Miller ·

Good point! Thanks for the extra info.

Thom Hogan ·

Yes, and DOF distribution is something I had to work through with the LensAlign folks, because it also varies with test distance. When you’re assessing the front/back pairs of numbers, you absolutely need to take this into account. FWIW, I find judging the longitudinal chromatic aberration to be more accurate. The focus plane should have none; planes in front of and behind it will show some, especially on primes.

Chris Jankowski ·

Hear, hear.

This whole silly business of AFMA becomes totally unnecessary the moment you place the AF sensors directly on the main image sensor, the way e.g. Sony does it in the A7RII. They have nearly 400 AF sensors on the main image sensor. This solves several other problems as well, e.g. the restriction on the size of the image area covered by AF. They cover 80%.

I too am hoping that in a few years all cameras will have PDAF and CDAF directly on the sensor.

Thom Hogan ·

This isn’t actually true. What you’re adjusting for when you do AF Fine Tune is a bunch of tolerances, not just one. In a PD-only system, one of those tolerances is the focus motor in the lens. Olympus, for example, has PD-on-sensor AND also provides AF Fine Tune for this reason: the lens might not have gone exactly where the camera told it to.

Okay, so you COULD do a contrast detect after the phase detect sequence, but that slows down focus systems, and it introduces the potential for “chase” situations (some might call this hunting).

Chris Jankowski ·

Yes, PDAF to get there fast + CDAF to finish and fine tune (both on the main image sensor) is what the Sony A7RII does. In fact, the final CDAF fine tuning may be conceptually thought of as an always-on, per-shot micro adjustment. There is no real danger of hunting, as this is dealt with algorithmically, by limiting the range. Also, the process can be made faster and more predictable by putting a slight bias in the PDAF algorithm so that CDAF starts in the right direction.

Thom Hogan ·

Well, even with a reduced range, you can still be hunting within that range on a moving subject. I believe Sony goes further and punts on CD adjustment in some way (either by limiting the number of CD moves or by stopping if it thinks it’s within some CoC). That would explain why on truly moving subjects–especially ones that aren’t in a fixed, linear motion–I see lots of near misses on focus.

Personally, near misses on focus that are inconsistent in nature, which is what I see in many Sony sequences, are worse than being consistently just off focus in a DSLR that allows fine tuning. With the latter, I can adjust out the problem and get a series of spot-on images; with the former, I can’t.
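Chris's hybrid scheme and Thom's objection can both be seen in a toy model. A phase-detect jump lands with some error, and a contrast-detect refinement follows that is confined to a small window around the phase-detect estimate and capped at a few moves. Everything here is a hypothetical assumption chosen only to illustrate the trade-off, and this is not Sony's actual algorithm. That includes the contrast curve, the landing errors, the window width, and the move cap.

```python
def contrast(position, true_focus):
    """Hypothetical contrast curve peaking at true_focus."""
    return 1.0 / (1.0 + (position - true_focus) ** 2)


def hybrid_af(pd_estimate, true_focus, window=2.0, step=1.0, max_cd_moves=4):
    """Jump to the phase-detect estimate, then refine with contrast detect.

    The refinement is clamped to [pd_estimate - window, pd_estimate + window]
    and stops after max_cd_moves moves, trading final accuracy for speed and
    for protection against open-ended hunting.
    """
    pos = pd_estimate
    best = contrast(pos, true_focus)
    direction = 1.0
    lo, hi = pd_estimate - window, pd_estimate + window
    for _ in range(max_cd_moves):
        candidate = min(max(pos + direction * step, lo), hi)
        c = contrast(candidate, true_focus)
        if c > best:
            pos, best = candidate, c
        else:
            direction = -direction
            step /= 2.0
    return pos


# PD lands 1 unit short: the bounded CD pass recovers exact focus.
print(hybrid_af(pd_estimate=49.0, true_focus=50.0))
# PD lands 3 units short (outside the window): CD stops at the window edge,
# leaving a near miss.
print(hybrid_af(pd_estimate=47.0, true_focus=50.0))
```

Whether a given shot is nailed or a near miss depends on how far the phase-detect step missed on that shot. This is why the misses can be inconsistent from frame to frame, which a single fixed fine-tune value cannot remove.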

Donnie Robertson ·

“This whole silly business of AFMA becomes totally unnecessary the moment you place…”. The moment THAT doesn’t work as planned. Sure, it IS 95% reliable, but that 5% is a kick in the spheres. Especially for “old geezers” who are half blind. Ahhhhhrrrrrrrggggggggggg!

Ilya Zakharevich ·

What you wrote makes absolutely no sense. There is no direct dependence of Front/Back ratio on the focal length. In fact, for ANY lens, Front/Back ratio is 1 if the depth of field is shallow enough.

What there IS is a dependence of F/B ratio on the DF/L ratio (at least if diffraction is small enough). Here DF is the depth of field, and L is the distance to the subject. The focal length DOES NOT ENTER into this dependence.

Michael Clark ·

It also varies with the focus distance for each focal length. Regardless of focal length, by the time hyperfocal distance is reached the ratio of front to back is going to be approaching 1:∞ (or, as you state it, 99.9% : 0.1%).
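The points raised by Ilya and Michael can be checked with the standard thin-lens depth-of-field approximations. The sketch below computes the near and far limits from focal length f, f-number N, circle of confusion c, and focus distance s, all in millimetres. It then reports the share of the total depth that lies in front of and behind the focus point. Several simplifications are assumptions rather than values from the thread: the 0.03 mm CoC is a common full-frame figure, diffraction is ignored, and s is measured from the lens rather than the sensor.

```python
import math


def dof_split(f, N, s, c=0.03):
    """Return (front_fraction, rear_fraction) of the depth of field.

    f: focal length (mm), N: f-number, s: focus distance (mm),
    c: circle of confusion (mm). Standard thin-lens approximations.
    """
    H = f * f / (N * c) + f                        # hyperfocal distance
    near = s * (H - f) / (H + s - 2 * f)           # near limit of acceptable focus
    far = s * (H - f) / (H - s) if s < H else math.inf
    front, rear = s - near, far - s
    if math.isinf(rear):
        return 0.0, 1.0                            # at or beyond hyperfocal
    total = front + rear
    return front / total, rear / total


for s in (500, 2000, 10000, 50000):
    front, rear = dof_split(50, 2.0, s)
    print(f"50mm f/2 at {s / 1000:g} m: front {front:.0%}, rear {rear:.0%}")
```

With the lens and aperture fixed, the split goes from nearly 50/50 at 0.5 m to roughly 48/52 at 2 m and roughly 38/62 at 10 m. Past the hyperfocal distance the rear depth becomes infinite. The split is closest to even when the depth of field is small relative to the focus distance, which is consistent with Ilya's point. It also shows why the test distance matters when reading front/back pairs, as Thom notes.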

Fazal Majid ·

I think this will be one of the big factors that settles the DSLR vs. mirrorless debate once hybrid on-sensor PDAF+CDAF technology has had a couple more product generations to mature. With rising resolutions, the AF inaccuracies of off-sensor DSLR PDAF are getting more and more visible.

Thom Hogan ·

I don’t think that at all. The geometries of off sensor PD and on sensor PD are quite different. Moreover, it seems that everyone is assuming that the off sensor folk don’t have anything up their sleeve. They do, and as we’ve just seen, the D5 and D500 have automatic AF Fine Tuning. That’s not the only thing they can do, FWIW.

I suspect that long term the performance cameras will have both on and off sensor PD. You can get better discrimination, especially with long lenses at longer distances, with off sensor PD. With on sensor PD you can get additional information about what’s happening during the shot (and during the blackout of the other system).

HF ·

Indeed. As far as I read, OSPDAF has no PD information as soon as the lens is in focus. As the relevant rays from different parts of the lens are within the circle of confusion, the camera can’t immediately react to sudden changes in distance and direction of the subject. The information gathered during previous measurements for acceleration (hard) and velocity is not changed until the subject gets slightly out of focus. Then the OSPDAF is able to get a new estimate. All papers I read on the subject try to deal with information theory (filtering and downsizing to get better data) but not different ways of gathering phase information.

mosswings ·

Uhmmm. NO feedback control loop has error information if the error signal has gone to zero. It sounds like you’re talking about the short baseline issues of the OSPDAF system, and perhaps the issues that OSPDAF has with the effective aperture of the AF system. Off-sensor PDAF has both a large baseline and a high effective aperture that keeps the images being correlated relatively sharp and therefore easy to resolve. OSPDAF doesn’t have the first and may or may not have the second, depending on how it’s constructed. Nikon patented a rather fascinating programmable-aperture-and-orientation off-sensor PDAF system that looks for all the world like an OSPDAF sensor with microlenses over large groups of pixels, which speaks to Thom’s point about there being more arrows in the DSLR quiver. This technique appears to address many of the complaints leveled at both OSPDAF and off-sensor PDAF, but clearly it’s optimized for one thing, AF, and isn’t compatible with an all-in-one approach.

HF ·

The sensitivity extends beyond the limits of the AF-sensors in DSLRs, explained and demonstrated here:

http://www.clarkvision.com/articles/understanding.autofocus/

This is different from OSPDAF.

mosswings ·

Not sure what you’re referring to RE: sensitivity. Correction: sensitivity in Clark’s parlance. When I think of AF sensitivity, I think of the minimum light level at which it can operate, which, for off-sensor PDAF, is extremely dim light. Clark is sort of saying that, but mixing in aspects of discrimination.

Yes, I see the line in Clark’s treatise about the image pairs overlaying each other with OSPDAF systems. That’s what I was referring to as short baseline problems. The physical distance between the images in an off-sensor PDAF system keeps this image overlap from happening, which makes it more responsive to small focus displacements. In OSPDAF systems the problem is also exacerbated by the lack of a discriminating mask or lens, which is also displayed in Clark’s treatise. You may have seen this post on DPR

http://www.dpreview.com/forums/post/54211961

describing the construction and theory behind the D300 AF module, which goes into a lot more detail. The Nikon patent I referred to

http://www.google.com/patents/US8526807

is equally fascinating in another way. This doesn’t have a discriminating mask per se, but it does have optics that have the same effect, and the very high effective apertures that keep the image pairs relatively sharp across the focusing range (high DOF, basically). Note that the DOF, and therefore the sharpness of the image, in OSPDAF systems, may not be as well controlled.

OSPDAF systems basically look slightly in one direction and slightly in another direction and try to derive a phase signal from this, employing as you point out heavy math and large data arrays to improve the performance.

Thom Hogan ·

I don't think that at all. The geometries of off sensor PD and on sensor PD are quite different. Moreover, it seems that everyone is assuming that the off sensor folk don't have anything up their sleeve. They do, and as we've just seen, the D5 and D500 have automatic AF Fine Tuning. That's not the only thing they can do, FWIW.

I suspect that long term the performance cameras will have both on and off sensor PD. You can get better discrimination, especially with long lenses at longer distances, with off sensor PD. With on sensor PD you can get additional information about what's happening during the shot (and during the blackout of the other system).

HF ·

Indeed. As far as I read, OSPDAF has no PD information as soon as the lens is in focus. As the relevant rays from different parts of the lens are within the circle of confusion, the camera can't immediately react to sudden changes in distance and direction of the subject. The information gathered during previous measurements for acceleration (hard) and velocity is not changed until the subject gets slightly out of focus. Then the OSPDAF is able to get a new estimate. All papers I read on the subject try to deal with information theory (filtering and downsizing to get better data) but not different ways of gathering phase information.

mosswings ·

Uhmmm. NO feedback control loop has error information if the error signal has gone to zero. It sounds like you're talking about the short baseline issues of the OSPDAF system, and perhaps the issues that OSPDAF has with the effective aperture of the AF system. Off-sensor PDAF has both a large baseline and a high effective aperture that keeps the images being correlated relatively sharp and therefore easy to resolve. OSPDAF doesn't have the first and may or may not have the second depending on how it's constructed. Nikon patented a rather fascinating programmable-aperture-and-orientation off-sensor PDAF system that looks for all the world like an OSPDAF sensor with microlenses over large groups of pixels that speaks to Thom's point about there being more arrows in the DSLR quiver. This technique appears to address many of the complaints leveled at both OSPDAF and off-sensor PDAF, but clearly it's optimized for one thing - AF - and isn't compatible with an all-in-one approach.

HF ·

The sensitivity extends beyond the limits of the AF sensors in DSLRs, as explained and demonstrated here:

http://www.clarkvision.com/...

This is different from OSPDAF.

mosswings ·

Not sure what you're referring to regarding sensitivity. Correction: sensitivity in Clark's parlance. When I think of AF sensitivity, I mean the minimum light level at which the system can operate, which for off-sensor PDAF is extremely dim light. Clark is sort of saying that, but mixing in aspects of discrimination.

Yes, I see the line in Clark's treatise about the image pairs overlaying each other in OSPDAF systems. That's what I was referring to as short-baseline problems. The physical distance between the images in an off-sensor PDAF system prevents this overlap, which makes it more responsive to small focus displacements. In OSPDAF systems the problem is also exacerbated by the lack of a discriminating mask or lens, which Clark's treatise also illustrates. You may have seen this on DPR:

http://www.dpreview.com/for...

It describes the construction and theory behind the D300 AF module in much more detail. The Nikon patent I referred to

http://www.google.com/paten...

is equally fascinating in another way. It doesn't have a discriminating mask per se, but it does have optics with the same effect, plus very high effective apertures that keep the image pairs relatively sharp across the focusing range (high DOF, basically). Note that in OSPDAF systems the DOF, and therefore the sharpness of the image pairs, may not be as well controlled.

OSPDAF systems basically look slightly in one direction and slightly in another direction and try to derive a phase signal from this, employing as you point out heavy math and large data arrays to improve the performance.
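Deriving a phase signal from two views comes down to finding how far one view is displaced relative to the other. A minimal sketch, assuming two equal-length 1-D brightness profiles `left` and `right` (hypothetical inputs) and a plain mean-absolute-difference search over integer shifts; real AF systems use their own, more elaborate correlation and filtering.

```python
from typing import Sequence


def best_shift(left: Sequence[float], right: Sequence[float],
               max_shift: int) -> int:
    """Integer shift s minimising the mean |left[i] - right[i + s]|
    over the samples where both profiles overlap."""
    n = len(left)
    if len(right) != n:
        raise ValueError("profiles must have equal length")
    best_s, best_cost = 0, float("inf")
    for s in range(-max_shift, max_shift + 1):
        pairs = [(left[i], right[i + s]) for i in range(n) if 0 <= i + s < n]
        if not pairs:
            continue
        cost = sum(abs(a - b) for a, b in pairs) / len(pairs)
        if cost < best_cost:  # keep the best-matching displacement
            best_s, best_cost = s, cost
    return best_s


left = [0, 0, 1, 5, 9, 5, 1, 0, 0, 0, 0]
right = [0, 0, 0, 0, 1, 5, 9, 5, 1, 0, 0]  # same edge, displaced by 2 samples
print(best_shift(left, right, 3))  # 2
```

The sign of the result indicates front versus back focus and its size how far off; converting that into a lens movement requires calibration data this sketch does not model.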

kimH ·

Is there a rule of thumb regarding distance to the target?

Joey Miller ·

Some people suggest 25x the focal length of the lens, but shorter works fine as well. In my 135mm setup, I was about 5ft away.
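The 25x rule is simple arithmetic. A minimal sketch; the multiplier is just the rule of thumb quoted in this thread, and the function name is hypothetical.

```python
FT_PER_M = 1 / 0.3048  # 1 ft is defined as exactly 0.3048 m


def target_distance_m(focal_length_mm: float, multiplier: float = 25.0) -> float:
    """Rule-of-thumb calibration distance: multiplier x focal length, in metres."""
    return focal_length_mm * multiplier / 1000.0


for fl in (50, 135, 300):
    d = target_distance_m(fl)
    print(f"{fl} mm lens: {d:.2f} m ({d * FT_PER_M:.1f} ft)")
```

For a 135mm lens the rule gives 3.375 m, roughly 11 ft, so a 5 ft setup is considerably closer than the rule suggests; as the next reply notes, fine-tuning values converge as distance increases, which is why shorter distances can still work.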

mosswings ·

Fine Tuning values change with distance to subject, converging on a fairly constant value as distance increases. 25x is recommended by Nikon, but good luck finding a consumer-level publication by them stating this.

Reikan published this on the subject:

http://s449182328.websitehome.co.uk/focal/dl//Docs/FoCal%20Test%20Distance_1.1.pdf

There are a couple of bottom lines here: first, choose the fine tuning value that is appropriate for your intended use. If you will only be using the lens at very short subject distances, calibrate closer to that distance (Example: macros). Second, strive for a good balance between optimal subject distance and Fine Tuning value. As the Fine Tuning magnitude gets larger, the ability of the lens to focus at the other extreme may degrade. If you’re pushing 15s and 20s, check the other end of the focus to see if it still is OK.
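The second bottom line can be turned into a quick check. A minimal sketch, assuming the ±20 adjustment range used by Nikon's AF Fine-Tune and Canon's AF Microadjustment, and treating 15 as the "pushing 15s and 20s" threshold; both are parameters, and the function name and return labels are hypothetical.

```python
def review_fine_tune(value: int, limit: int = 20, warn_at: int = 15) -> str:
    """Classify a fine-tune value: 'ok', 'check-other-end', or 'out-of-range'."""
    if abs(value) > limit:
        return "out-of-range"
    if abs(value) >= warn_at:
        # Large corrections may cost focus at the opposite distance extreme
        # (e.g. infinity), so verify that end before trusting the value.
        return "check-other-end"
    return "ok"


for v in (-9, 17, -21):
    print(v, review_fine_tune(v))
```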

Thom Hogan ·

I second that, Mosswings: fine tune to your intended use. Likewise, you need to be aware that you can lose infinity focus with some AF Fine Tunings (technically you can lose close focus, too, but if you fine tuned to intended use you’re not worried about this or losing infinity focus).

Piotr Krochmal ·

Hi, I've tried many home calibration systems; some use a monitor as the target, others a DIY calibration setup similar to the LensAlign system.

What worked for me was a target made from a plain printed sheet of text, lit from the side for better contrast. I check for best sharpness in LV: since most lenses show longitudinal chromatic aberration, I just look for the moment between green and purple fringing. This is my reference point for calibration. Then I look at the distance scale, turn LV off and AF on, see what happens, change the micro-calibration setting, and so on. It is much faster than taking photos, downloading them, and applying an emboss filter (which is best for showing OOF areas).

I worked out this process after buying a Sigma 18-35 with the Sigma dock (Pentax version).

So the main problem was how to check quickly and reliably when sharp is sharp.

And as the calibration process required about 3 iterations of full calibration, I had to have a setup that wouldn't take me the whole day.

I hope that info helps someone.

Best!

P.S.

Why an A4 sheet of text? The actual size of the AF point and the AF point mark in the viewfinder are two different things.

With a target that size, I have one less variable.
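Deciding "when sharp is sharp" can also be done numerically. A minimal sketch, assuming a grayscale crop of the target as a 2-D list of pixel values: it computes gradient energy (the mean squared difference between neighbouring pixels), a common contrast-based focus measure swapped in here for illustration, not the LV-fringing method described above. Scores are only comparable between shots of the same target under the same light.

```python
from typing import Sequence


def gradient_energy(img: Sequence[Sequence[float]]) -> float:
    """Mean squared difference between horizontally and vertically
    adjacent pixels; a higher score means crisper edges."""
    if not img or not img[0]:
        raise ValueError("empty image")
    h, w = len(img), len(img[0])
    total, count = 0.0, 0
    for y in range(h):
        for x in range(w):
            if x + 1 < w:  # horizontal neighbour
                total += (img[y][x + 1] - img[y][x]) ** 2
                count += 1
            if y + 1 < h:  # vertical neighbour
                total += (img[y + 1][x] - img[y][x]) ** 2
                count += 1
    if count == 0:
        raise ValueError("need at least two pixels")
    return total / count


sharp = [[0, 0, 1, 1]] * 4          # hard edge
soft = [[0, 0.33, 0.67, 1]] * 4     # same edge, blurred
print(gradient_energy(sharp) > gradient_energy(soft))  # True
```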

MichaelK ·

Of course, the future will be different if everyone builds their cameras the way Nikon has with the D500/D5, with an automated system for applying AF fine tune.

Matthew Miller ·

Or you can do the same thing that system does manually — switch between live view/contrast-detect focus and phase-detect, and adjust the fine tuning until they’re the same. Both simpler and more accurate than the slanted-ruler method.
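The procedure above is a one-dimensional search. A minimal sketch, assuming a hypothetical `offset(setting)` measurement that returns the signed difference between where phase-detect and contrast-detect AF put focus, and that increases steadily with the fine-tune setting over the ±20 range. This is not a description of how Nikon's automatic routine works. Real readings are noisy, so in practice each `offset` value should itself be an average of several attempts.

```python
from typing import Callable


def find_fine_tune(offset: Callable[[int], float],
                   lo: int = -20, hi: int = 20) -> int:
    """Integer setting in [lo, hi] whose PDAF-minus-CDAF offset is closest
    to zero, assuming offset() increases monotonically with the setting."""
    while hi - lo > 1:
        mid = (lo + hi) // 2
        if offset(mid) < 0:
            lo = mid  # PDAF still short of CDAF: the zero lies above mid
        else:
            hi = mid  # at or past CDAF: the zero lies at or below mid
    return lo if abs(offset(lo)) <= abs(offset(hi)) else hi


# Simulated camera/lens pair whose offsets cross zero at -9.
print(find_fine_tune(lambda s: 0.5 * (s + 9)))  # -9
```

If the offsets never cross zero within the range, the search returns the end of the range closest to agreement, which is a hint that the lens may need service rather than fine-tuning.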

l_d_allan ·

MagicLantern provides “Auto-Dot-Tune” micro-focus-adjustment. Quick and easy. I’m unclear if Nikon is using a similar approach.
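The Dot Tune method, as it is commonly described, works like this: focus precisely in live view, switch the lens to manual focus, step through the fine-tune values while watching the viewfinder's in-focus indicator, and pick the middle of the range where it stays lit. The final step is simple bookkeeping. A minimal sketch, assuming you have recorded for each setting whether the indicator lit; the function name and the example dictionary are made up for illustration, not real measurements.

```python
from typing import Dict


def dot_tune_center(confirmed: Dict[int, bool]) -> float:
    """Midpoint of the longest run of consecutive settings where the
    in-focus indicator stayed lit."""
    lit = sorted(s for s, ok in confirmed.items() if ok)
    if not lit:
        raise ValueError("focus confirmation never lit")
    runs = [[lit[0], lit[0]]]
    for s in lit[1:]:
        if s == runs[-1][1] + 1:
            runs[-1][1] = s         # extend the current run
        else:
            runs.append([s, s])     # gap: start a new run
    start, end = max(runs, key=lambda r: r[1] - r[0])
    return (start + end) / 2


# Example: the indicator lit for every setting from -14 to -4.
confirmed = {s: -14 <= s <= -4 for s in range(-20, 21)}
print(dot_tune_center(confirmed))  # -9.0
```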

Manfred Winter ·

Now, I have a Sigma 50mm f/1.4 Art lens that produces some weird focus results depending on distance. Trying the target method at the shorter distances is easy, but how does one do this for the infinity distance option? At distances of 10 meters or more, the target is too small to produce usable results. What's the best approach here? And what distance is realistically close enough to infinity for this purpose?

Angel Llacuna ·

In your example, how did you know to enter an adjustment value of 9?

Frans van den Bergh ·

Or you could try MTF Mapper:

https://sourceforge.net/projects/mtfmapper/

Using the MTF Mapper 45-degree test chart, you can obtain quantitative values to show you the location of the surface of best focus.

My user documentation is a bit crusty, so take a look at Ed Dozier’s recipe for AF tuning using MTF Mapper:

http://www.photoartfromscience.com/#!MTF-Mapper-Cliffs-Notes/qrlrc/55eb21620cf23d0feffc23fc
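Turning chart measurements into a single "where is best focus" number usually means interpolating the sharpness peak. A minimal sketch, assuming equally spaced positions along the chart and one sharpness value (for example MTF50) per position; this is a generic three-point parabolic fit, not MTF Mapper's own code or output format, and the function name is hypothetical. Comparing the peak position with where the AF target sits on the chart shows whether, and by how much, the lens front- or back-focuses.

```python
from typing import Sequence


def peak_position(positions: Sequence[float], sharpness: Sequence[float]) -> float:
    """Estimate where sharpness peaks by fitting a parabola through the
    highest sample and its two neighbours (equal spacing assumed)."""
    i = max(range(len(sharpness)), key=sharpness.__getitem__)
    if i == 0 or i == len(sharpness) - 1:
        return positions[i]  # peak at the edge of the chart: cannot interpolate
    y0, y1, y2 = sharpness[i - 1], sharpness[i], sharpness[i + 1]
    denom = y0 - 2 * y1 + y2
    if denom == 0:
        return positions[i]  # flat top: no curvature to fit
    step = positions[i + 1] - positions[i]
    # Vertex of the parabola through (-1, y0), (0, y1), (1, y2), scaled by step.
    return positions[i] + 0.5 * step * (y0 - y2) / denom


pos = [0.0, 1.0, 2.0, 3.0, 4.0]
mtf = [10 - (x - 2.25) ** 2 for x in pos]  # synthetic curve peaking at 2.25
print(peak_position(pos, mtf))  # 2.25
```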

Greg Thurtle ·

The shot-to-shot variability is why I recommend people use FoCal over a standard test chart and eyeballing: it's the only solution I'm aware of that takes the guesswork out by analysing many shots over a range to remove the random variation that occurs in any PDAF system (lens motor, other factors, etc.).

I remember using the slanted ruler method originally (which is basically what LensAlign does for $$$ more), and I'd always fluctuate around certain values, as the shot-to-shot variability makes you second-guess yourself.

I also love the analysis tools in FoCal, which show me how my lens is performing and its typical AF variability, giving me more confidence in my results and making them repeatable.
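Shot-to-shot variation suggests taking several shots at each setting and summarising them robustly. A minimal sketch, assuming a hypothetical dictionary of sharpness scores per fine-tune value (the numbers are invented for illustration). Using the median, so that a single missed AF attempt does not drag a setting down, is a design choice made here, not a description of how FoCal analyses its data.

```python
from statistics import median, pstdev
from typing import Dict, List, Tuple


def summarise(shots: Dict[int, List[float]]) -> Dict[int, Tuple[float, float]]:
    """Per setting: (median sharpness, population standard deviation)."""
    return {s: (median(v), pstdev(v)) for s, v in shots.items()}


def best_setting(shots: Dict[int, List[float]]) -> int:
    """Setting with the highest median sharpness across repeated shots."""
    return max(shots, key=lambda s: median(shots[s]))


# Invented example data: five shots per setting, defocusing before each one.
shots = {
    -10: [0.31, 0.30, 0.05, 0.32, 0.29],  # includes one missed shot (0.05)
    -9: [0.35, 0.34, 0.36, 0.33, 0.35],
    -8: [0.33, 0.32, 0.34, 0.20, 0.33],
}
print(best_setting(shots))  # -9
```

The spread reported by `summarise` shows how consistent AF is at each setting, which is what makes a result trustworthy and repeatable rather than a single lucky frame.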

Albert Dutra ·

Happy FoCal user as well!

Adam Fo ·

What about lenses like a Planar f/1.4, which shift focus when stopped down?

Albert ·

Many thanks for the article. I received a Tamron 35 a few days ago, and by following your instructions (I did it on the cheap) I was able to determine that it was consistently back-focusing slightly on my D750. I calibrated it to -9 and got reproducible results (5 shots tested, defocusing before each shot) at 1.5 m and 1 m. I then did some handheld testing, still shooting the ruler from a variety of distances, and the calibration held up.

brenton giesey ·

How does micro-adjustment apply to mirrorless cameras like the a7R II, which don’t have standard focus points like a DSLR and have different accuracy?

AaronClosz ·

The interplay between the camera and lens is essentially the same. The camera’s software has a particular point on the sensor that it wants to be in focus and talks to the lens until a specific amount of contrast is detected. The micro-adjustment redefines where that process begins, as the size of the movements are usually limited. So, maybe the lens needs to begin moving at point B rather than point A.

dtolios ·

Micro-adjustment is only needed in D-SLRs or similar assemblies where the AF sensor is not on the sensor plane. D-SLRs use a dedicated AF sensor whose optical path differs from the one to the image sensor. Even small differences between the optical path length to the AF sensor and that to the image sensor, due to manufacturing tolerances, might throw AF off.

Mirrorless designs, much like the latest DSLRs in live view, use photodiodes on the sensor itself for focusing, so the process is inherently more accurate, although still a tad slower.
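A contrast-detect hill climb shows why focusing on the image sensor itself needs no calibration offset: the loop stops where the image sensor sees maximum contrast, so there is no separate AF-sensor path whose length could be off. A minimal sketch, assuming a hypothetical `contrast(position)` reading over integer focus-motor positions in `[lo, hi]`; real implementations are considerably more sophisticated, and many mirrorless bodies also use on-sensor phase detection.

```python
from typing import Callable


def contrast_detect_af(contrast: Callable[[int], float], start: int,
                       lo: int, hi: int, step: int = 8) -> int:
    """Hill-climb the focus position until contrast stops improving,
    halving the step each time neither direction helps."""
    if not lo <= start <= hi:
        raise ValueError("start must lie within [lo, hi]")
    pos = start
    while step >= 1:
        here = contrast(pos)
        up, down = pos + step, pos - step
        if up <= hi and contrast(up) > here:
            pos = up            # contrast rose: keep going this way
        elif down >= lo and contrast(down) > here:
            pos = down          # contrast rose the other way
        else:
            step //= 2          # peak bracketed: refine with smaller steps
    return pos


# Simulated lens whose contrast peaks at motor position 37.
print(contrast_detect_af(lambda p: -(p - 37) ** 2, start=0, lo=0, hi=100))  # 37
```

Each move strictly increases contrast within a bounded range, so the loop always terminates; the cost is the back-and-forth probing, which is one reason contrast detection tends to be slower.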

Ryan ·

I use the Dot Tune method whenever renting a lens.

Néstor Ojeda ·

I need someone to show me how to adjust my Nikkor 24-70mm f/2.8 VR zoom. Thank you very much.

Winston Ramroop ·

How do you fine-tune a lens like the Nikon 16-35mm f/4? Which end of the lens do you calibrate: 16mm, 35mm, or somewhere in the middle? I am confused about fine-tuning a zoom lens!

Roger Cicala ·