More Ultra High-Resolution MTF Experiments

GEEK ALERT!!

Let’s be absolutely clear; this is not a practical or useful article. It won’t help your photography or cinematography become better. It won’t help you choose equipment any time in the next couple of years. It won’t provide any fodder for your next Forum War. It’s just a geek article that may interest some people. It may give a little peek into what may come in the future, and some insight into the kind of work we’re actually doing behind the scenes at Olaf. So if you’re interested in that kind of stuff, read along.

A couple of years ago, a testing customer asked us to find which lenses could get maximum resolution from a 150-megapixel sensor. Many people assumed that the highest resolving lenses at standard resolutions would be the highest resolving lenses at higher resolutions. Assumptions are the dark matter of the internet; we can’t see them, but we know they account for most of the mass.

We try hard not to assume, so we tested a bunch of lenses at high frequencies on the MTF bench (high-frequency MTF is basically high resolution on the camera). This requires testing MTF at ultra-high resolutions, way higher than any camera of today. The manufacturer wanted 240 lp/mm (compared to the 50 lp/mm we use currently). I wasn’t sure that was necessary, and actually wasn’t sure we could do it, so we settled on 200 lp/mm. If you want some understanding of what all that means, you can read the above post or Brandon Dube’s excellent post. Or you can just accept it and move on.

There was no photo or video lens that could resolve 200 lp/mm wide open. (Our standard for ‘resolve’ was an MTF of 0.3; an MTF of 0.2 was borderline. There’s some evidence to support those cut-offs, but someone could argue them. Wait, this is the internet. Someone WILL argue them; it’s what someone lives for.)
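If it helps to see those cut-offs spelled out, here’s a minimal Python sketch that labels a single MTF reading the way we did. Only the 0.3 and 0.2 thresholds come from our criteria; the function name and the sample readings in the loop are made up for illustration.

```python
# Cut-offs from our criteria: MTF >= 0.3 counts as "resolved",
# 0.2 <= MTF < 0.3 is "borderline", anything lower is not resolved.
RESOLVED = 0.3
BORDERLINE = 0.2


def classify_mtf(mtf: float) -> str:
    """Label a single MTF reading (0 to 1) using the cut-offs above."""
    if not 0.0 <= mtf <= 1.0:
        raise ValueError("MTF must lie between 0 and 1")
    if mtf >= RESOLVED:
        return "resolved"
    if mtf >= BORDERLINE:
        return "borderline"
    return "not resolved"


# Hypothetical readings, not measured data.
for reading in (0.4, 0.3, 0.25, 0.15):
    print(reading, classify_mtf(reading))
```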

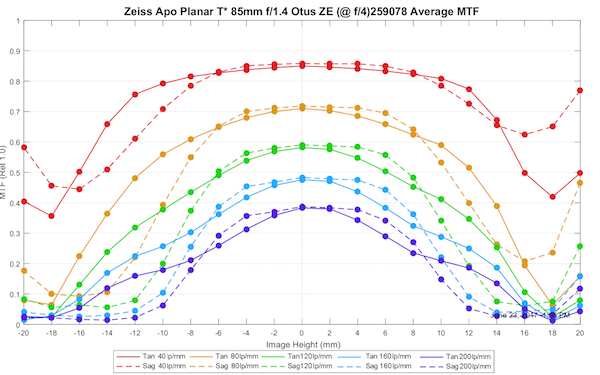

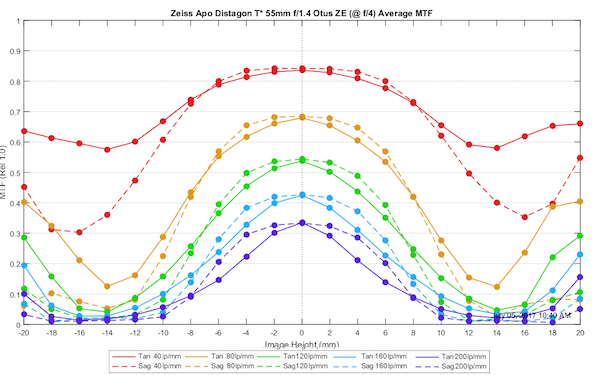

We did find several prime lenses that could meet those criteria stopped down to f/4 in the center of the image, but none could near the edges. The best results were for the Zeiss Otus 85mm f/1.4 lens at f/4. A few other lenses (Zeiss 135mm f/2 APO-Sonnar; Sigma 135mm f/1.8 DG HSM Art; Zeiss 55mm f/1.4 Otus) were acceptable at f/4 in the middle portion of the image. Nothing wider than 50mm was really acceptable, although the Canon 35mm f/1.4 Mk II and Sigma 35mm f/1.4 Art were close.

Back to the Future

Two years later, that customer asked us if we knew of any other lenses that they should consider. There’ve been a lot of lenses released since we did these tests, and some of those lenses fit the criteria for possible ‘ultra-high’ resolution: primes with focal lengths of 85mm or more. The manufacturers are obviously making these lenses with at least moderately higher resolution cameras in mind. So perhaps some of the newer lenses would resolve ‘ultra-high’ frequencies better than some of the older lenses we had tested.

So we checked some new lenses all the way up to 240 lp/mm, enough to make a 200-megapixel FF camera worthwhile. To be clear, this is NOT coming to a camera near you anytime soon; it’s a research project. But if the researchers are making such a sensor, it makes sense that they want to know which lenses would get the best results from it. It is not a test of whether the lens or the sensor out-resolves the other, because that doesn’t happen. (For those of you who believe in perceptual megapixels or that the earth is flat, I’ve included an appendix to clarify this a bit, with no math more complicated than multiplication.)
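Where does ‘240 lp/mm for a 200-megapixel camera’ come from? Here’s a rough Python sketch, assuming a 36 x 24 mm full-frame sensor with square pixels and taking the sensor’s Nyquist frequency (one line pair per two pixels) as the highest frequency it can record. It ignores Bayer filters, anti-aliasing filters, and everything else that makes real sensors messier, and the function names are just illustrative.

```python
import math

# Assumed full-frame sensor dimensions in millimetres.
FF_WIDTH_MM = 36.0
FF_HEIGHT_MM = 24.0


def pixel_pitch_mm(megapixels: float,
                   width_mm: float = FF_WIDTH_MM,
                   height_mm: float = FF_HEIGHT_MM) -> float:
    """Pitch of square pixels that exactly tile the sensor area."""
    area_mm2 = width_mm * height_mm
    return math.sqrt(area_mm2 / (megapixels * 1e6))


def nyquist_lp_per_mm(megapixels: float,
                      width_mm: float = FF_WIDTH_MM,
                      height_mm: float = FF_HEIGHT_MM) -> float:
    """Nyquist frequency in lp/mm: one line pair needs at least two pixels."""
    return 1.0 / (2.0 * pixel_pitch_mm(megapixels, width_mm, height_mm))


for mp in (150, 200):
    pitch_um = pixel_pitch_mm(mp) * 1000.0
    print(f"{mp} MP full frame: pitch {pitch_um:.2f} um, "
          f"Nyquist {nyquist_lp_per_mm(mp):.0f} lp/mm")
```

Under those assumptions, 200 megapixels works out to a pitch of about 2.08 µm and a Nyquist frequency of about 240 lp/mm; 150 megapixels gives a 2.4 µm pitch and about 208 lp/mm, close to the 200 lp/mm peak of the first round.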

The Best We Found Last Time

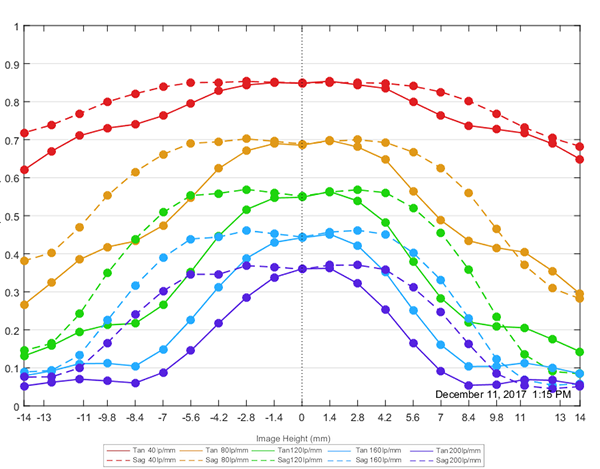

Last time we found some tendencies: longer focal lengths perform better, and no lens could resolve such high frequencies at any aperture wider than f/4. To give you a reference, here are several lenses that met our criteria last time (notice that on these we set the highest frequency at 200 lp/mm).

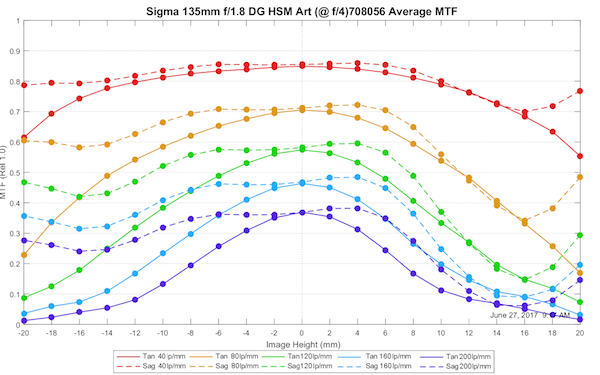

Sigma 135mm f/1.8 DG HSM Art at f/4

Lensrentals.com, 2017

Zeiss 85mm APO-Planar Otus at f/4

Lensrentals.com, 2017

Zeiss 55mm Otus Distagon at f/4

Lensrentals.com, 2017

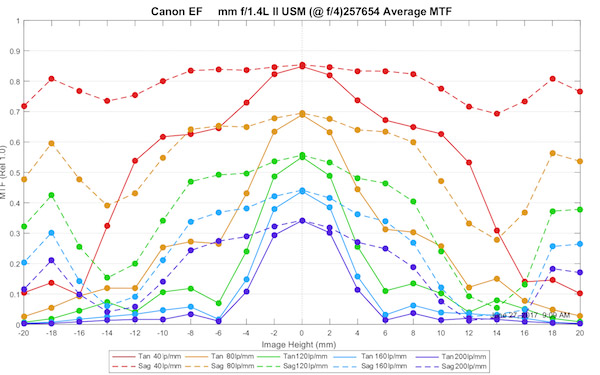

Lensrentals.com, 2017

The 35mm Lens Whose Name Cannot Be Spoken

You’ve seen the best results we had from lenses you could actually buy and use. We did see one lens that you can’t purchase, a prototype lens that will probably never be made, that was truly amazing, particularly for a 35mm focal length.

Olaf Optical Testing, 2017

So here is a 35mm lens that is as good as the 55mm Otus; what’s remarkable is that it’s this good at f/2.8 and doesn’t improve much at f/4. I put this up just to show that current lenses are not designed to do their best at ultra-high resolution, but they could be.

We Wanna Take it Higher

Last time around, we set our peak at 200 lp/mm, even though the client really wanted 240 lp/mm. We didn’t think the higher number was really necessary, and honestly, we weren’t sure that any of our lenses would adequately resolve even 200 lp/mm. This time, though, we felt comfortable testing at 240 lp/mm, and doing so also let us test at 192 lp/mm, which is pretty close to the original 200 lp/mm peak.
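Why 192 lp/mm? Here’s a small Python sketch, under the assumption (inferred from our standard 10 to 50 lp/mm charts and from the 96 and 192 lp/mm lines mentioned in this post) that each chart plots five evenly spaced frequencies topping out at the peak. The function name is hypothetical.

```python
def chart_frequencies(peak_lp_mm: float, lines: int = 5) -> list[float]:
    """Evenly spaced frequencies from peak/lines up to the peak (assumed chart layout)."""
    return [peak_lp_mm * k / lines for k in range(1, lines + 1)]


for peak in (50, 200, 240):
    print(peak, chart_frequencies(peak))
```

Under that assumption, raising the peak from 200 to 240 lp/mm moves the next-highest line from 160 to 192 lp/mm, which is why the 192 lp/mm line is the natural one to compare against the old 200 lp/mm peak.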

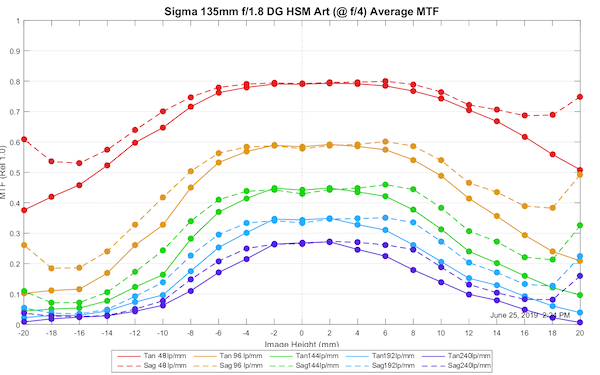

For starters, we repeated the test using a Sigma 135mm f/1.8 Art. You can compare it to the one done at 200 lp/mm above. Remember, these are single-copy tests, so there is a little sample variation, but you can see the light blue line of this run (192 lp/mm) is comparable to the purple line of the previous test (200 lp/mm). Even at 240 lp/mm, the MTF still exceeds our ‘borderline’ MTF cut-off of 0.2, at least in the center, but it doesn’t quite reach an MTF of 0.3.

Lensrentals.com, 2019

We’ll consider this our standard for the new tests, then, and see if any of the other lenses do as well.

Results for New Lenses

Sony 135mm f/1.8 GM

We’ll start with the usual “Roger had expectations and was disappointed” part, because, after all, disappointment is the sole purpose of expectations. One of the reasons I was excited about testing new lenses was that the Sony 135mm f/1.8 GM had the best normal-range MTF scores we’d ever seen, besting the Sigma 135mm f/1.8 Art. It seemed logical that it might also best the Sigma in ultra-high resolution tests. Reality 1, Logic 0. We tried the lens at f/4, but it was actually a bit better at f/5, which we show below. At high frequency, it’s not as good as the Sigma.

Lensrentals.com, 2019

Let me repeat, for those of you who want to believe this test has something to do with, say, the 60-megapixel full-frame camera you’re shooting: it doesn’t. Somewhere around 80 lp/mm would be more than sufficient for that. If you compare the orange lines of the Sony and Sigma 135mm graphs, you’ll see that at 96 lp/mm the Sony is actually a bit better than the Sigma. At ridiculously high frequencies, the Sigma is better. The takeaway message is important: better MTF at one frequency doesn’t mean better MTF at all frequencies.

So let’s look at a couple of other candidates I thought might do really well.

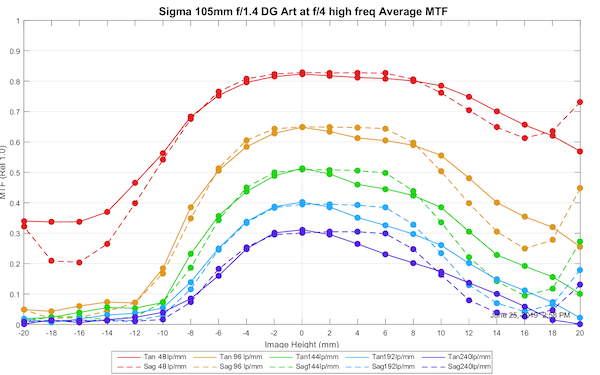

Sigma 105mm f/1.4 Art at f/4

In the center, at least, this is the first lens to make, albeit barely make, our ‘acceptable’ cut-off of MTF 0.3 at 240 lp/mm. At 192 lp/mm, it actually touches an MTF of 0.4. So we have a new high-resolution champion, and that’s despite, as you can see below, this copy of the lens having a slight tilt.

Lensrentals.com, 2019

Zeiss APO Sonnar 100mm f/1.4 Otus at f/4

Another good contender; not quite as good as the Sigma 105mm, but very similar to the Sigma 135mm.

Lensrentals.com, 2019

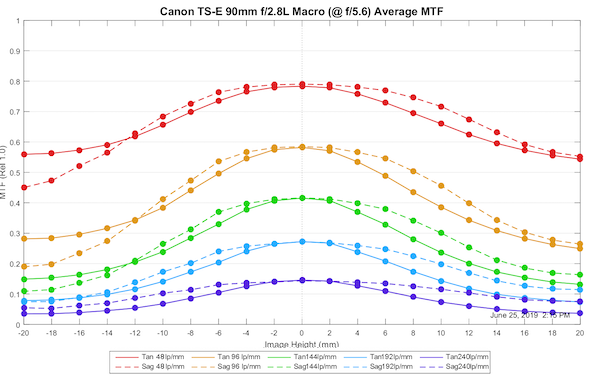

Canon 90mm f/2.8L TS-E Macro

This one I had low expectations for, but we had one handy, so we thought we’d give it a try. Set your expectations low, and they will be met. Again, don’t get me wrong, this is a really, really good lens, it’s just not as good at ultra-high resolutions.

Lensrentals.com, 2019

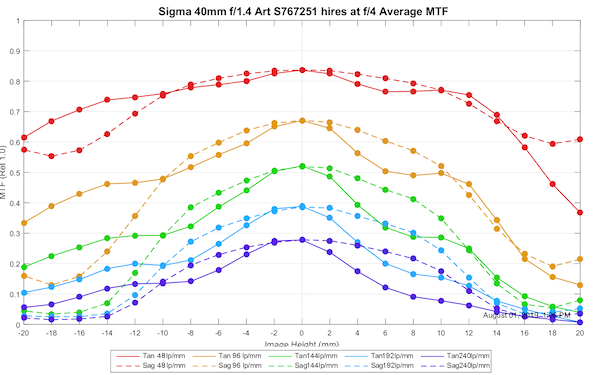

Sigma 40mm f/1.4 Art

We learned from earlier testing that wider-angle lenses don’t do as well at these frequencies. But the Sigma 40mm Art tested so spectacularly at normal MTF that we thought it was worth a shot, at least.

Lensrentals.com, 2019

Here’s a case where good at normal resolution translates to good at ridiculously high resolution. This is close to the Otus 55mm, although not quite as high in the center. It stays acceptable further from the center than the Otus 55mm does, however.

Summary:

I say ‘summary’ rather than ‘conclusions’ because there are no practical or useful conclusions to be drawn. The only thing of interest, probably, is that only really good lenses can resolve the ultra-high resolutions you’ll never need. However, even among these really good lenses, you can’t predict how a lens will perform at ultra-high resolutions from its results at normal resolutions. You can also see that ultra-high-resolution performance is a bit easier to obtain at short telephoto focal lengths than at standard or wide-angle ones.

Oh, yeah, and you can also wonder why someone, somewhere, is wondering what lenses will perform well at resolutions more than twice as high as what you might need today.

Roger Cicala, Aaron Closz, and Brandon Dube

Lensrentals.com

October, 2019

Appendix: Why Perceptual Megapixels are Stupid

I get asked several times a week if this lens or that is ‘capable of resolving’ this number of megapixels. Some people seem to think a lens should be ‘certified’ for a certain number of pixels or something. That’s not how it works. That’s not how any of it works.

How it does work is this. Any image you capture is not as sharp as reality. Take a picture of a bush and enlarge it to 100%. You probably can’t see if there are ants on the leaves. But in reality, you could walk over to the bush (enlarge it if you will) and see if there are ants by looking at a couple of leaves.

What if I got a better camera and a better lens? Well, theoretically, things would be so good I could see the ants if I enlarged the image enough. MTF is, roughly, a measurement of how sharp that image would be and how much detail it contains. (The detail part would be the higher-frequency MTF.) That would, of course, be the MTF of the entire system: camera and lens.
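To make ‘how sharp’ a bit more concrete: the conventional definition of MTF at a single spatial frequency is the ratio of the image’s modulation (contrast) to the target’s modulation, where modulation is (max - min) / (max + min) intensity. Here’s a minimal Python sketch with a hypothetical target and image; the function names are illustrative and not taken from any testing software.

```python
def modulation(i_max: float, i_min: float) -> float:
    """Michelson modulation of a periodic pattern: (max - min) / (max + min)."""
    if i_min < 0 or i_max < i_min or i_max + i_min == 0:
        raise ValueError("need 0 <= i_min <= i_max and a nonzero total")
    return (i_max - i_min) / (i_max + i_min)


def mtf_at_frequency(target_max: float, target_min: float,
                     image_max: float, image_min: float) -> float:
    """Fraction of the target's modulation that survives into the image."""
    target_mod = modulation(target_max, target_min)
    if target_mod == 0:
        raise ValueError("target has no modulation to transfer")
    return modulation(image_max, image_min) / target_mod


# Hypothetical: black/white stripes (modulation 1.0) come out as grey stripes
# ranging from 0.25 to 0.75 in relative intensity, so half the contrast survives.
print(mtf_at_frequency(1.0, 0.0, 0.75, 0.25))
```

Finer stripes (higher frequencies) get blurred together more, so the image modulation and the MTF drop; that is why the high-frequency lines on the charts sit lower.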

Lots of people think the system resolution will be ‘whichever is lower, the camera or the lens.’ For example: my camera can resolve 61 megapixels, but my lens can only resolve 30 megapixels, so all I can see is 30 megapixels.

That’s not how it works. How it does work is very simple math: System MTF = Camera MTF x Lens MTF. MTF maxes at 1.0 because 1.0 is perfect. So let’s say my camera MTF is 0.7, and my lens MTF is 0.7, then my system MTF is 0.49 (Lens MTF x Camera MTF). This is actually a pretty reasonable system.
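Here’s the multiplication above as a tiny Python sketch. It assumes the MTFs are read at the same spatial frequency (the product only makes sense frequency by frequency) and treats the camera and lens as the only two components; the function name is just illustrative.

```python
from math import prod


def system_mtf(*component_mtfs: float) -> float:
    """System MTF at one spatial frequency: the product of the components' MTFs."""
    for m in component_mtfs:
        if not 0.0 <= m <= 1.0:
            raise ValueError("each MTF must lie between 0 and 1")
    return prod(component_mtfs)


print(round(system_mtf(0.7, 0.7), 2))  # camera 0.7 x lens 0.7 -> 0.49
print(round(system_mtf(0.9, 0.7), 2))  # better camera, same lens -> 0.63
```

Because every component can only multiply by something at or below 1.0, the system MTF is always lower than either part, and improving either part raises it.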

Now, let’s say I get a much better camera with much higher resolution; the camera MTF is 0.9. The system MTF with the same lens also increases: 0.7 X 0.9 = 0.63. On the other hand, I could do the same thing if I bought a much better lens and kept it on the same camera. The camera basically never ‘out resolves the lens.’

You could kind of get that ‘perceptual megapixel’ thing if either the lens (or the camera) really sucks. Let’s say we were using a crappy kit zoom lens with an MTF of 0.3. With the old camera: 0.3 X 0.7 = 0.21. Let’s spend a fortune on the newer, better camera, and we get 0.3 X 0.9 = 0.27. So our overall system MTF only went up a bit (0.06) because the lens really sucked. But if it had been just an average lens or a better lens (let’s say the MTF was 0.6 or 0.8), we’d have gotten a much bigger improvement: 0.42 to 0.54, or 0.56 to 0.72.
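Here’s the same camera-upgrade arithmetic for three lenses (MTF 0.3, 0.6, and 0.8; camera going from 0.7 to 0.9) as a short Python sketch. The helper name is hypothetical, and like the rest of this appendix it assumes system MTF is simply lens MTF x camera MTF at one frequency.

```python
def upgrade_gain(lens_mtf: float, old_camera_mtf: float, new_camera_mtf: float) -> float:
    """Change in system MTF (lens x camera) when only the camera is upgraded."""
    return lens_mtf * new_camera_mtf - lens_mtf * old_camera_mtf


OLD_CAMERA, NEW_CAMERA = 0.7, 0.9

for lens in (0.3, 0.6, 0.8):
    before = lens * OLD_CAMERA
    after = lens * NEW_CAMERA
    gain = upgrade_gain(lens, OLD_CAMERA, NEW_CAMERA)
    print(f"lens {lens}: system {before:.2f} -> {after:.2f}, gain {gain:.2f}")
```

Since the gain is just lens MTF x (new camera MTF - old camera MTF), it scales with the lens: the same camera upgrade is worth 0.06 with the 0.3 lens and 0.16 with the 0.8 lens. That’s the arithmetic behind improving the weak link first.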

If you have a reasonably good lens and/or a reasonably good camera, upgrading either one upgrades your images. If you ask something like ‘is my camera going to out resolve this lens’ you sound silly.

Roger’s rule: If you have either a crappy lens or crappy camera, improve the crappy part first; you get more bang for your $. I just saw a thread for someone wanting to upgrade to the newest 60-megapixel camera, and all of his lenses were average zooms. I got nauseous.

369 Comments

Dave Hachey ·

“Roger’s rule: If you have either a crappy lens or crappy camera, improve the crappy part first; you get more bang for your $. I just saw a thread for someone wanting to upgrade to the newest 60-megapixel camera, and all of his lenses were average zooms…”

It would be nice to know which lenses perform well on the latest 60 MP uber sensor. Sony sort of implies it’s their GM series of lenses, but I suspect they are trying to sell us more expensive glass. When you get up into the 50+ MP territory, technique matters as much as MTF. How about a new acronym? TTF, Technique Transfer Function. I suspect we are reaching the point of diminishing returns.

Roger Cicala ·

Dave, I love that! Let’s make it Opto-Technique Transfer Function. But I agree, with today’s good gear, technique is probably more of a differentiator than a few more pixels or a slightly better lens. Oh, and I also agree about the Sony thing. There are lots of great lenses (and lots of not-great lenses) in FE mount. Some of these are GM.

Since I’m planning on buying into a new system myself, I have a list of what lenses I’d get for each of those mounts, including FE. I might share that one of these weeks.

Dave Hachey ·

I sense a topic for a future blog post. “OTTF – Everything you need to know.” I recently bought a Sony A7R IV, but so far I can’t say that it makes vastly better images than the earlier models. I’m still trying to get my head wrapped around the requirements to take 60 megapixel images. I have good glass, so I suspect it’s technique (or my advanced age). Sony keeps getting better and better; sadly, I do not.

Brandon Dube ·

Maybe I’ll write a blog post on that next weekend or the weekend after

Dave Hachey ·

Good idea. I just reread your article on PSFs, aberrations, and MTF. Nice article.

Roger Cicala ·

Please!!! I haven’t bothered you cause I figured settling in at JPL was taking some time. BTW – Aaron’s sick that his trip got canceled.

Brandon Dube ·

Not to rub it in (but totally to rub it in) – I went to Tartine over the weekend and have half of a country loaf on my counter 🙂

Things are pretty settled these days. I still have to go to the DMV and re-register my car and transfer my license – that’s the last major thing. Of course, “next weekend or the weekend after” is busy parlance for “may or may not happen” …

Franz Graphstill ·

Having only used my A7R IV for a few weeks, mostly with the 135 GM (which is a good match for it), for me the best feature of that combination is the marvellous eye AF system. I am getting extremely good AF results, even at 100%.

Dave Hachey ·

Yep, the AF system is a marvel to behold. This really is a wonderful camera, but I suspect not for everyone.

Nick Podrebarac ·

Not just cameras, but every piece of equipment is a compromise. If camera systems have taught me anything, the trick is to find the equipment whose compromises least affect your use case…then find anyone who comes to a different conclusion on the internet and shout them down in a puerile manner.

Jan Steinman ·

How about “Witless Transfer Function,” or WTF? 🙂

SpecialMan ·

Given your shocking confession that technique is so important wouldn’t a better investment be a lighter, faster-to-set-up, more rigid yet more vibration absorbing, taller-yet-more compact tripod? And a rigorous program of shutter-finger exercises?

Samuel Edge ·

Please do share….

User Colin ·

Remember that today’s 50+MP full frame sensors have similar pixel pitch (4µm) to a bog standard 24MP APS-C camera that many people have been using for years. And while you might say that 24MP APS-C only uses the middle sharp centre crop, to render the same print size it needs magnified more than 50MP FF.

Very satisfying results can be achieved with a cheap APS-C 50mm prime lens on a 24MP APS-C camera. So, rather than reaching towards some limit, FF has merely achieved parity with what entry-level photographers have used for years. But apparently FF needs a lens costing £1000+ rather than £100+.

The latest lenses are I’m sure technological marvels. But they are darned expensive.

Dave Hachey ·

Considering what you get, modern lenses aren’t that outrageously priced, but that’s a subjective matter. The only time I ever winced at purchasing a lens was when I bought a big white Canon telephoto. It cost several times what I paid for my first car (a ’67 Mustang).

bokesan ·

For 35mm you have many options. When the Otus line was released (roughly $3000-4000) who would have thought you’d get roughly the same quality for a quarter of the price one or two years later from Sigma? And the 50mm Z Nikkor costs 380€ currently. Quite affordable I’d say. And even some of the cheap Chinese brands are showing quite good optical quality. My feeling is that excellent quality is getting quite affordable these days, and it’s a trend that will continue. (That might not apply in the same amount to zooms and wide-angles).

Idahodoc ·

Absolutely! I have the Sony a7R4 and it is a devil of a camera to get really sharp pictures with! Now what do I mean? If I only had 24MP to play with, they would all look good. But I was cussing my 24-70 f/2.8 for being unsharp at pixel peeping ranges until I realized it was ME! Throw away those rules for sharp shooting (inverse focal length = shutter speed for hand holding). It seems I can easily blur images at beyond 1/500 on that rig. Now, that only appears at the “bonus” pixel level; it prints OK at lower res. But extracting that last ounce of sharpness needs really excellent technique. And shooting bursts. On the plus side, all my GM lenses appear to produce more detail, sometimes much more, on the 60 MP sensor. Can’t wait to try my adapted Sigma 105 f/1.4!

Dave Hachey ·

This beast will take some effort to tame. My main reason for the purchase was for portrait and landscape work, which will require a good tripod. I have an A73 and an A9 for wildlife and general photography.

Raimo Korhonen ·

What about (the legendary) Leica, then?

Roger Cicala ·

I don’t know, the M-mount wasn’t up for consideration. I learned enough doing the other tests to not speculate 🙂

Norbert Windecker ·

I would like to see the new Leica APO Summicron Series in the test.

Andreas Werle ·

Thanks for sharing your data with us, Roger.

The 105 mm Sigma obviously is not a TS lens, so I wonder why tilting doesn’t affect its center resolution. I mean, this is a defect; the other lenses are not (so much) tilted. BTW, what happens when you look at a tilted 90mm Canon? 🙂

Are 240lp/mm OLAF’s limit or why did you hesitate to do measurements at this high frequency?

Greetings Andy

Roger Cicala ·

A slight field tilt doesn’t affect center resolution as long as there’s no decentering. This one might have affected it a tiny bit, but probably not.

When we first started this, my thinking was that by 50 lp/mm we were seeing a lot more variation in lenses than we do at 10 or 20, so at really high frequencies the variation might be too huge to test. That turned out not to be the case, especially as we stopped down to f/4 or f/5.6 for the majority of these tests. The machine will actually test to 300 lp/mm.

Brandon Dube ·

We measured up to 900 lp/mm for my thesis. The limit is about 5,000 lp/mm, but we could push that to 20,000 lp/mm with a different microscope.

Ilya Zakharevich ·

Are you expecting to resolve 20,000 lp/mm in visible light?! Good luck with having sin φ>3…

Brandon Dube ·

Are you expecting to make friends?

Ilya Zakharevich ·

Frankly speaking, I never expected to make friends with anybody who can resolve 20,000 lp/mm in visible light in air. But if you are interested, I’m always open for suggestions!

DP ·

> I just saw a thread for someone wanting to upgrade to the newest 60-megapixel camera, and all of his lenses were average zooms. I got nauseous.

So you’re saying that a crappy zoom (before diffraction hits) will not yield more resolution (not even a little more) with 60MP behind it than with, say, 24MP 🙂?

Chris Perry ·

Of course it would. But you can buy a fine prime lens for far less than $3500. The reality is, few types of photography benefit from ultra high resolution – certainly not photos of people.

Nick Podrebarac ·

Unless you want to accurately map the pores on a person’s cheek, then by all means go for the 60mp.

Michael Clark ·

Who said anything about people? We're only obsessed with reproducing flat test charts!

Michael Clark ·

Yes, you can buy one fine prime lens for less than $3,500. But how many fine primes must you buy to cover the focal length range of even a couple of good zooms?

I often shoot two bodies with a 24-70/2.8 and 70-200/2.8 IS which are "higher end" zooms.

At other times I use two of the following: 24/2.8, 35/2 IS, 50/1.4, 85/1.8, 135/2, 200/2.8, which are, at best, mid level primes (which can be as sharp as the zooms at common apertures, with smoother bokeh, but they're not as sharp as many high end primes).

Whether I use zooms or primes is based on several variables, including freedom of movement in relation to the subject (which can be quite limited shooting sports and theatrical/concert performances, two things I shoot often), available light (again, night/indoor sports and theatrical settings), stability of the platform from which I'm shooting (Only one of my primes has IS, some of my zooms do. It comes in handy when standing in the wings or on the back of a portable outdoor stage vibrating with loud music. In such a case I'll sometimes use a 24-105/4 IS instead of the "sharper" 24-70/2.8.)

Roger Cicala ·

It would. But resolution would improve much more if you improved the weak link.

JordanViray ·

In defense of the Perceptual Megapixels idea, it isn’t the number which is stupid but rather the poor interpretation of it. From what I understand, Perceptual Megapixels is a function of System MTF50 which is a perfectly fine measurement, i.e., I can expect to resolve 16MP at 50% contrast given a high contrast target on a given (my) camera with X lens. If I put Y or Z lens on it, the tested results are useful in showing what sort of improvements I can expect.

Ideally we would have optical MTF and sensor MTF to calculate System MTF at any arbitrary contrast requirement; but while places like LensRentals provide a great deal of information about the former, the latter information is scarce.

Brandon Dube ·

MTF-50 is not correlated to our perception of the quality of an image (that is what SQF is for). If I put a line up in front of you of objects at various contrasts, you would not find 50% contrast to be at all special, and would probably be surprised that with no noise you can perceive targets with 1, even 1/2% contrast.

JordanViray ·

MTF50 is what it is, a contrast level that some people (Imatest mainly it seems) have decided to pick as a baseline for good contrast and the baseline for their lens resolution scores. It may be somewhat arbitrary but it is useful on account of the sheer number of measurements testers have provided with it, i.e., I can reliably compare MTF50 resolution among tested lenses from sites using Imatest and similar cameras. Although I do not know the mathematics behind SQF, I am very sure that higher System MTF curves will translate to higher SQF scores – perhaps not for viewing Instagram on a smartphone but certainly for viewing on future large 8K displays or large format prints where people can walk up to the image. SQF might be great if output viewing parameters are fixed and known but I’ll take MTF any day.

We’ve had this discussion before where yes, we can perceive high contrast targets at very low contrast levels e.g. MTF1 which would be more akin to a resolution “limit” but that is beside the point. In a typical photographic scenario where we do have noise and where detail is often not very high contrast, there’s a case to be made for specifying a higher contrast threshold (e.g. Roger’s own threshold of MTF30 or MTF20) for a more realistic “limit”. My own limit is lower as I do enjoy pixel peeping test charts but in any case, a lens that provides 16MP at System MTF50 is photographically FAR more useful than a lens that resolves 16MP at System MTF1 all else being equal. Or in more general terms, the higher the MTF curves, the better.

Brandon Dube ·

But that’s just it. If we’re defining the “resolution” of something, then that figure of merit should correspond to the finest thing that can be resolved. MTF-50 corresponds to something so easily detected that no one in their right mind would associate it with the finest thing that can be resolved.

MTF-20 is age-old guidance from Brian Thompson that is well based in logic. MTF-30 for a lens, accounting for 64% contrast from the sensor at Nyquist, is a good proxy for MTF-20 at the system level, which is what you really want.

Ignoring that lenses do not provide “MP,” you cannot have 16 << units >> in two cases, one with MTF-50 and the other with MTF-1. They are so far apart that such a system is unphysical and cannot be realized.
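A quick check of the 64% figure, as a minimal sketch: an ideal square pixel aperture has MTF |sin(πf)/(πf)|, which at Nyquist (f = 0.5 cycles/pixel) equals 2/π ≈ 0.64, and component MTFs multiply at a given frequency. This assumes a 100% fill-factor square pixel and no AA filter, which may not be exactly the model Brandon had in mind; the function names are hypothetical.

```python
import math

def pixel_aperture_mtf(freq_cyc_per_px: float) -> float:
    """MTF of an ideal square pixel with 100% fill factor: |sinc(f)|."""
    if freq_cyc_per_px == 0:
        return 1.0
    x = math.pi * freq_cyc_per_px
    return abs(math.sin(x) / x)

def system_mtf(lens_mtf: float, sensor_mtf: float) -> float:
    """Cascade rule: component MTFs multiply at the same spatial frequency."""
    return lens_mtf * sensor_mtf

NYQUIST = 0.5  # cycles/pixel
sensor_at_nyquist = pixel_aperture_mtf(NYQUIST)  # 2/pi, about 0.64
lens_threshold = 0.30                            # "MTF-30" for the lens alone
print(f"sensor MTF at Nyquist: {sensor_at_nyquist:.3f}")
print(f"system MTF: {system_mtf(lens_threshold, sensor_at_nyquist):.3f}")
```

Under these assumptions 0.30 × 0.64 ≈ 0.19, which is why a lens at MTF-30 lands close to MTF-20 for the whole system.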

JordanViray ·

If we consider resolution to be the finest thing that can be resolved, then there is only one measure that matters, extinction resolution. We might choose a longer wavelength in the visible spectrum to be conservative for typical photographic applications but that tells us the finest thing that can be resolved. Not MTF20 or MTF30. With all respect to Brian Thompson, both of us do notice and care about lower contrast levels whether at optical or system level.

Note I was careful not to say lenses are the determinant of MP but given that they can resolve a certain number of black and white line pairs at a given contrast level, we can calculate MP from that. Here is my thinking:

We have an f/2 and an f/4.82 diffraction-limited lens (at 550nm) on a so-called 1/2″ sensor (6.4×4.8mm). 16 million square pixels on such a sensor corresponds to a linear (Nyquist) frequency of 361 lp/mm. The f/2 lens provides MTF50 and the f/4.82 lens provides MTF1 at that frequency. Unless I’ve missed something (some condition of PSF or miscalculation on my part?), that system should be realizable. Granted, the MTF for both will improve at lower frequencies, so the f/4.82 lens won’t look so bad in practice, but it will at that frequency.

As always, I appreciate you taking the time to share your expertise.
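JordanViray's numbers can be checked with the standard aberration-free MTF of a circular aperture, MTF(s) = (2/π)[arccos s − s√(1 − s²)], where s = ν/ν_c and the cutoff is ν_c = 1/(λN). A minimal sketch, assuming square pixels tiling the full 6.4×4.8 mm area and monochromatic 550 nm light (function names are hypothetical):

```python
import math

def nyquist_for_megapixels(mp: float, width_mm: float, height_mm: float) -> float:
    """Nyquist frequency (lp/mm) for square pixels tiling the sensor."""
    pixels = mp * 1e6
    pitch_mm = math.sqrt(width_mm * height_mm / pixels)  # area per pixel -> pitch
    return 1.0 / (2.0 * pitch_mm)

def diffraction_mtf(freq_lp_mm: float, f_number: float, wavelength_nm: float = 550.0) -> float:
    """Aberration-free MTF of a circular pupil at a single wavelength."""
    cutoff = 1.0 / (wavelength_nm * 1e-6 * f_number)  # lp/mm
    s = freq_lp_mm / cutoff
    if s >= 1.0:
        return 0.0
    return (2.0 / math.pi) * (math.acos(s) - s * math.sqrt(1.0 - s * s))

nyq = nyquist_for_megapixels(16, 6.4, 4.8)
print(f"Nyquist: {nyq:.1f} lp/mm")
for n in (2.0, 4.82):
    print(f"f/{n}: MTF = {diffraction_mtf(nyq, n):.3f}")
```

Under these assumptions the Nyquist frequency comes out near 361 lp/mm, with MTF of roughly 0.51 at f/2 and roughly 0.01 at f/4.82, matching the MTF50 and MTF1 figures above.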

Brandon Dube ·

I assumed fixed pixel size and F/#

Someone ·

Is the mysterious lens by any chance the cancelled Fuji 35/1.0?

Athanasius Kirchner ·

Nope, they’d have no way to test it, as they don’t own a mount for it.

But it makes for a great conspiracy theory 😂

boeck hannes ·

I’d bet Otus 35. That’s conspicuously missing in the lineup.

Roger Cicala ·

Just for the record, I can’t say what it’s not. But as the article stated, it’s not a lens that you can buy, or will be able to buy.

SpecialMan ·

Exhibit A: The $2 million 1600mm f/5.6 that Leica built for Sheikh Saud Bin Mohammed Al-Thani of Qatar.

In the words of the greatest philosopher of the 20th century: "Money changes everything."

Phil Service ·

Roger, many thanks for these “geek” articles. One issue that you did not discuss is the argument that the sensor resolution (camera MTF) is limited by pixel size (or photosite spacing). For the Sony A7Rm4 (which seems to be the camera of the moment), sensor pixel size is about 3.76 microns. Given that it takes two rows of pixels to record a line pair, that translates to a maximum resolution of about 133 lp/mm (what I believe is the Nyquist frequency for this pixel size). And a lens image of 133 lp/mm sampled by that sensor would probably have a poor MTF, even if the image made by the lens had an MTF of 1.0. At frequencies greater than 133 lp/mm, the camera (and system) MTF would essentially be zero, regardless of how good the lens was, I think. So, it seems to me that one might be able to make a good argument that “excellent” lenses can ‘out resolve (most) cameras’, at least at high frequencies. Or perhaps a better way to put it is: at high frequencies, and with high-performance lenses, the camera is most likely to be the limiting factor on SYSTEM MTF.
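Phil's arithmetic follows from the sampling theorem: one line pair needs at least two pixels, so Nyquist (lp/mm) = 1 / (2 × pitch in mm). A minimal sketch; the same relation run backwards gives the pixel pitch whose Nyquist frequency would equal the 200 lp/mm bench frequency from the article (function names are hypothetical):

```python
def nyquist_lp_per_mm(pitch_um: float) -> float:
    """Nyquist frequency in line pairs per mm for a pixel pitch in microns."""
    pitch_mm = pitch_um / 1000.0
    return 1.0 / (2.0 * pitch_mm)

def pitch_for_nyquist_um(freq_lp_mm: float) -> float:
    """Pixel pitch (microns) whose Nyquist frequency equals freq_lp_mm."""
    return 1000.0 / (2.0 * freq_lp_mm)

print(f"3.76 um pitch -> {nyquist_lp_per_mm(3.76):.0f} lp/mm")
print(f"200 lp/mm needs a {pitch_for_nyquist_um(200):.2f} um pitch")
```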

Dragon ·

Due to aliasing, the output above Nyquist is not zero in your example, but it is junk.
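Dragon's point can be made concrete: a sampled sensor cannot tell a frequency above Nyquist from its mirror image below Nyquist, so detail the lens delivers there comes back as a false, lower frequency. A minimal sketch of that frequency folding for a single sinusoid, assuming ideal point sampling at Phil's 3.76 µm pitch; the input frequencies are arbitrary illustrations:

```python
def aliased_frequency(freq_lp_mm: float, pitch_um: float) -> float:
    """Frequency (lp/mm) at which a point-sampled sinusoid appears after sampling."""
    fs = 1000.0 / pitch_um   # samples per mm
    f = freq_lp_mm % fs      # sampling cannot distinguish f from f + k*fs
    return min(f, fs - f)    # fold into the 0..Nyquist band

for f_in in (100.0, 160.0, 250.0):
    print(f"{f_in:5.0f} lp/mm recorded as {aliased_frequency(f_in, 3.76):6.1f} lp/mm")
```

Here 100 lp/mm (below Nyquist) is recorded faithfully, 160 lp/mm shows up as about 106 lp/mm, and 250 lp/mm shows up as a coarse pattern of about 16 lp/mm: output that is not zero, but is junk.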

Federico Ferreres ·

Also, if the term is so ridiculous, I find it ironic he is hired to find if any lens “outresolves” a 240MP sensor, defined as having 30%+ contrast at the highest frequency. Basically, readers asking if anything outresolves something else are called dumb, while the article itself SOLELY and openly explores only this question.

Roger Cicala ·

Not at all. I wasn’t hired to find if anything outresolves anything, because it doesn’t. I was hired to find which lenses would give the best performance at those resolutions.

It is possible, at great extremes, for one to outresolve another. For example, if I took a really bad 18-55 kit lens, some high-resolution sensor increase might no longer improve the overall image quality. Conversely, if I have a 6 megapixel camera, an Otus might not look any better than a Canon 35mm f/1.4. But if you take normal lenses on normal cameras, then no, there is no ‘outresolves’.

Federico Ferreres ·

I think you make a great point to note it’s not a binary “outresolving” of any kind. I think most would benefit from knowing “how much would I benefit” from a new lens or sensor. Anything that helps shed light on it is fantastic, as this article does. Thanks for taking the time to explain a bit further.

CryptoLover ·

It would be very helpful if you just had a page with a list of all the lenses you’ve tested, with a link to each article where they were tested. Hope you could consider it.

Nicholas Bedworth ·

Great geeking!!! And keep in mind that the MTF is also a function of wavelength.

It’s influenced by the combined performance, as Roger points out, of the optics, color filtration technology on the sensor, pixel physics, readout noise, quantization error and on and on.

Brandon Dube ·

Strictly speaking, MTF is not a function of wavelength. It’s a function of the spectral content of the object.

Nicholas Bedworth ·

Right, the spectral distribution… Wavelength is perhaps an imprecise manner of expressing the idea, as compared with spatial frequency distribution. Something like that… 🙂
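Brandon's distinction can be illustrated for an aberration-free lens, where only diffraction depends on wavelength: the polychromatic MTF is the average of the monochromatic MTFs weighted by the spectral content reaching the sensor, so the same lens at the same frequency scores differently for a bluish and a reddish subject. A sketch under those assumptions; the three-wavelength "spectra" are hypothetical weights, not measured data:

```python
import math

def diffraction_mtf(freq_lp_mm: float, f_number: float, wavelength_nm: float) -> float:
    """Aberration-free MTF of a circular pupil at a single wavelength."""
    s = freq_lp_mm * wavelength_nm * 1e-6 * f_number  # frequency / cutoff
    if s >= 1.0:
        return 0.0
    return (2.0 / math.pi) * (math.acos(s) - s * math.sqrt(1.0 - s * s))

def polychromatic_mtf(freq_lp_mm: float, f_number: float, spectrum: dict) -> float:
    """Weight monochromatic MTFs by relative spectral content {wavelength_nm: weight}."""
    total = sum(spectrum.values())
    return sum(w * diffraction_mtf(freq_lp_mm, f_number, lam)
               for lam, w in spectrum.items()) / total

bluish = {450: 0.6, 550: 0.3, 650: 0.1}   # hypothetical weights
reddish = {450: 0.1, 550: 0.3, 650: 0.6}  # hypothetical weights
for name, spec in (("bluish", bluish), ("reddish", reddish)):
    print(f"{name}: MTF at 200 lp/mm, f/4 = {polychromatic_mtf(200, 4, spec):.3f}")
```

With these made-up weights the bluish subject comes out near 0.51 and the reddish one near 0.41, because shorter wavelengths push the diffraction cutoff higher.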

J.L. Williams ·

Fascinating article. I was especially taken by the observation that Lens A can outperform Lens B at merely high frequencies, but underperform at ultra-high ones. I’d love to know why that is, but maybe Feynman was right about sticking with the math and not trying to ask why…

I also appreciated the takedown of “perceptual megapixels” and wondered if another frequently-flung-around concept, “per-pixel sharpness,” is another example of the same type of thing… I figure a pixel has only one value, so how can one be sharper than another?

Roger Cicala ·

This will need to be answered by Brandon Dube. Because he understands ‘why’. I’m a ‘what’ guy.

Brandon Dube ·

You know how you can find pictures of Siemens stars with a null on them of zero contrast (aka contrast inversion)? That means the MTF hits zero at some frequency and then isn’t zero anymore at higher frequencies. You can have the same thing without hitting zero (i.e., reaching some local minimum).

The “why” for /why/ that can happen is more complicated and involves Fourier transforms of complex-valued functions that only a computer can solve, but humans can intuit about.
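A defocused, aberration-free lens in the geometric limit is a textbook case of what Brandon describes: the blur spot is a uniform disc of diameter d, whose transfer function is 2·J1(πdν)/(πdν). It falls to zero near ν ≈ 1.22/d, goes negative (contrast inversion, the "spurious resolution" seen on Siemens stars), and then climbs back before the next null. A minimal sketch, assuming a hypothetical 10 µm blur disc; J1 is computed from Bessel's integral so only the standard library is needed:

```python
import math

def bessel_j1(x: float, steps: int = 400) -> float:
    """J1(x) via Bessel's integral (1/pi) * int_0^pi cos(t - x sin t) dt, Simpson's rule."""
    h = math.pi / steps
    total = 0.0
    for i in range(steps + 1):
        t = i * h
        weight = 1 if i in (0, steps) else (4 if i % 2 else 2)
        total += weight * math.cos(t - x * math.sin(t))
    return total * h / 3.0 / math.pi

def disc_blur_otf(freq_lp_mm: float, blur_diameter_mm: float) -> float:
    """Signed OTF of a uniform blur disc; negative values mean contrast inversion."""
    x = math.pi * blur_diameter_mm * freq_lp_mm
    if x == 0:
        return 1.0
    return 2.0 * bessel_j1(x) / x

d = 0.010  # hypothetical 10 um blur disc
for f in (40, 80, 122, 160, 200, 260):
    print(f"{f:4d} lp/mm: OTF = {disc_blur_otf(f, d):+.3f}")
```

The MTF is the magnitude of this value, so it drops to zero around 122 lp/mm and then "isn't zero anymore" at 160 and 260 lp/mm, with the contrast inverted in between.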

bokesan ·

Isn’t this already the case in the normal (10-50 lp/mm) MTF graphs? E.g. lens A might be higher than lens B at 20, but lower at 50? I thought that would be one of the things that experienced MTF graph readers (not me) can tell at a glance.

Larry Templeton ·

I don’t know how helpful a “takedown of perceptual megapixels” really is. Reading that section, it felt like there was an assumption that anyone working with the “silly” (read: utterly moronic) idea that a lens can out-resolve a sensor (or vv.) is blatantly trying to disprove sacred optical principles, or make a claim as outlandish as declaring the Earth to be flat.

Using DxOMark and their perceptual megapixel ratings requires matching up a camera and a lens. So if someone wants to know if X lens will net him more resolution on A camera, the perceptual megapixels rating he’s working off of seems similar to the (camera x lens) equation referenced above. Is “perceptual megapixels” an imbecilic, uneducated and foolish term? It sure sounds like it. But despite the dim-witted grandstanding that’s implied by using such a silly term, it seems like we’re still *somehow* working out the ‘better camera’ and ‘better lens’ equation in a way that works for our pedestrian needs.

Roger Cicala ·

Larry, my major problem is it gives a number that takes into account: 1- lens performance at all apertures, 2- lens performance all across the field, 3- for a zoom, performance at all focal lengths, 4- a formula of calculation that is not shared (but apparently is normalized down to a lowest common denominator, then multiplied up to the sensor in question, which is pretty insane), 5- a method of measurement that isn’t shared and cannot be confirmed or denied by anyone else, and 6- for lenses that work on multiple cameras and mounts, the calculations are done on one camera and mount and therefore aren’t valid.

So we have this number that equates to something but we aren’t sure what, based largely on measurements that we definitely don’t know what, and people are making purchasing decisions based on that.

More to the point, people are misinterpreting it to say things like ‘this lens is rated at 16 megapixels so if I buy a 42 megapixel camera my images won’t improve’ when, in fact, they will.

Carleton Foxx ·

I’ve always thought of them like the old EPA gas mileage numbers, no external validity but nevertheless useful for rough comparison of one combo to another, no?

Roger Cicala ·

Well, if you assume internal consistency, that would be true. But really, it would be more like “we combine EPA gas mileage, 0-60 times, curb weight, luggage space, cornering ability, subjective attractiveness, and seat comfort as measured by our ideal ass size” and rate this car a 92, so it’s the car you should buy. Even if it’s valid, are many people really interested in that ideal combination of cornering ability and luggage space?

Federico Ferreres ·

The reason is that there is more than one way to get to a certain contrast level at some frequency, and some paths (designs and approaches) crash at lower levels. But it's easier to see by just proposing the opposite of what you say:

"Lens A can outperform Lens B at merely high frequencies, so it MUST outperform at ultra-high ones"

It's a modified statement that says the opposite of the observation that got your attention. If that were true, then you'd only need to test any lens at 10 lp/mm, and the moment you found one lens outperforms another, it'd be best at ANY frequency. The same would go for measuring at 5 lp/mm, or even 1 lp/mm. Actually, an intuitive conclusion is that you cannot take performance at ANY frequency as indicative of how a lens will perform at a higher frequency. It may be a rule of thumb, and nothing else.
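Federico's point, that a ranking at one frequency does not carry over to another, is easy to see with two hypothetical MTF models whose curves cross: lens A has a Gaussian MTF (a Gaussian blur spot) and lens B an exponential MTF (a long-tailed, Lorentzian-like line spread). The parameters are invented for illustration, not fitted to any real lens:

```python
import math

NU_A = 180.0  # hypothetical Gaussian scale for lens A, lp/mm
NU_B = 250.0  # hypothetical exponential scale for lens B, lp/mm

def mtf_lens_a(freq_lp_mm: float) -> float:
    """Gaussian MTF: high contrast at moderate frequencies, steep fall-off."""
    return math.exp(-(freq_lp_mm / NU_A) ** 2)

def mtf_lens_b(freq_lp_mm: float) -> float:
    """Exponential MTF: lower contrast early, but a long high-frequency tail."""
    return math.exp(-freq_lp_mm / NU_B)

# The curves cross where (f / NU_A)**2 == f / NU_B, i.e. f = NU_A**2 / NU_B.
crossover = NU_A ** 2 / NU_B
for f in (50, 100, 150, 200):
    a, b = mtf_lens_a(f), mtf_lens_b(f)
    winner = "A" if a > b else "B"
    print(f"{f:3d} lp/mm: A={a:.2f} B={b:.2f} -> {winner} ahead")
print(f"curves cross at {crossover:.1f} lp/mm")
```

Using the article's MTF 0.3 "resolve" threshold, lens A would fail at 200 lp/mm (about 0.29) while lens B would pass (about 0.45), even though A is clearly ahead at 50 lp/mm.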

Look at figure 6, near the end, in this Zeiss Brochure from 1976:

Zeiss - Resolving Power and Contrast

Dragon ·

Those charts do reinforce the need for an AAF even on high resolution cameras if you are shooting anything with repeating patterns and using good lenses.

DrJon ·

Not really, as AA filters all roll off quite smoothly in real life, starting well before Nyquist, so:

(i) You lose detail as it’s smoothing that too.

(ii) You still get aliasing as it doesn’t cut off sharply.

If I put links to stuff this will get nuked, alas…
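DrJon's two points fit one common model of a birefringent anti-aliasing filter. A plate that splits each point into two equal spots a distance d apart has MTF |cos(πdν)|; with d equal to the pixel pitch, the null lands exactly at Nyquist, contrast is already reduced well below Nyquist, and the response comes back above it. A minimal sketch in cycles/pixel, assuming a single-axis two-spot filter and ignoring the pixel aperture:

```python
import math

def two_spot_olpf_mtf(freq_cyc_per_px: float, separation_px: float = 1.0) -> float:
    """MTF of a filter that splits a point into two equal spots separation_px apart."""
    return abs(math.cos(math.pi * separation_px * freq_cyc_per_px))

for f in (0.125, 0.25, 0.375, 0.5, 0.625, 0.75):
    print(f"{f:.3f} cyc/px: {two_spot_olpf_mtf(f):.2f}")
```

At half of Nyquist (0.25 cycles/pixel) this filter alone already passes only about 71% contrast, and at 0.75 cycles/pixel it passes the same 71% again, which is detail lost below Nyquist and aliasing not removed above it.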

Dragon ·

Yes, the AAF roll-off leaves a lot to be desired. It is basically sin(x)/x (which means it comes back after the null) because of the lack of negative coefficients in the filter (we just haven’t figured out how to get those pesky darkons to do our bidding). Still, a well-placed AAF is often useful. As to different raw converters helping, I haven’t seen much difference with respect to pixel-level aliasing, but some do a much better job of guessing color out of the Bayer grid. Given the limitations of the Bayer grid, a 200 MP camera actually makes a lot of sense, since that is really only 50 MP in Bayer pixel terms. There may be little or no detail at the individual pixel level, but that is actually good from an aliasing perspective. A logical design would be for the camera to unwind the Bayer grid and then produce a 50 MP RAW file with all the RGB values (only 25% smaller than the 200 MP original, but very nice to work with and essentially alias-free).
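Dragon's proposed design, collapsing each 2×2 RGGB quad into one full-colour pixel, can be sketched as below, and the 25% figure checks out: 200 million single-channel photosites hold 200 million values, while a 50 MP RGB file holds 150 million. This is a hypothetical illustration that simply averages the two greens; a real camera or raw converter would also handle black level, white balance and colour correction:

```python
from typing import List, Tuple

def bin_rggb(mosaic: List[List[float]]) -> List[List[Tuple[float, float, float]]]:
    """Collapse each 2x2 RGGB quad into one (R, G, B) pixel, averaging the two greens."""
    rows, cols = len(mosaic), len(mosaic[0])
    if rows % 2 or cols % 2:
        raise ValueError("mosaic dimensions must be even")
    out = []
    for y in range(0, rows, 2):
        row = []
        for x in range(0, cols, 2):
            r = mosaic[y][x]
            g = (mosaic[y][x + 1] + mosaic[y + 1][x]) / 2.0
            b = mosaic[y + 1][x + 1]
            row.append((r, g, b))
        out.append(row)
    return out

def stored_values(megapixels: float, channels: int) -> float:
    """Number of stored sample values, in millions."""
    return megapixels * channels

raw_values = stored_values(200, 1)    # one value per Bayer photosite
binned_values = stored_values(50, 3)  # 50 MP with R, G and B each
print(f"binned file is {1 - binned_values / raw_values:.0%} smaller")

tiny = [[10, 20, 11, 21],
        [22, 30, 23, 31],
        [12, 24, 13, 25],
        [26, 32, 27, 33]]
print(bin_rggb(tiny))
```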

DrJon ·

I see my post full of links failed to appear, so I’m guessing I shouldn’t post any links in this one. However while it’s a little old now if you search for dr_jon and “5dsr moire reduction from raw (automatic and manual)” you might see some of the results I got…

You can certainly get more resolution from Bayer than a pixel per quad though, so you’re throwing resolution away doing that unless you’re way into diffraction softening.

DrJon ·

While I’m being a little naughty, googling “A SIMPLE MODEL FOR SHARPNESS IN DIGITAL CAMERAS AA”, then avoiding the “- Defocus” result and going to Figure 7 in the “- AA” result, could be interesting… noting Nyquist is at 0.5 cycles/pixel.

Dragon ·

If, in that hypothetical 200 MP camera I suggested above, the null is moved to 0.5 cycles/pixel or a tiny bit below, then there will be no aliasing at the pixel level and the potential for color aliasing at the quad level will be substantially reduced (i.e. the raw converter will have an easier time guessing). I completely agree that you can get more resolution out of a Bayer grid than the quad, but that improvement comes with a guarantee of uncertainty that is not always desirable. BTW, I have a 5DSR and just added a 90D. The 90D has the lowest visible aliasing of any Bayer camera I have seen so far and it still has very good resolution. It will be interesting to see what Canon’s next high res FF looks like.

DrJon ·

I did like Darkons… 🙂

Oh and the 5Dsr is a great camera, there’s still nothing I’d change mine for (currently). I also love using two buttons for BBF (with completely different AF recipes, inc tracking options – e.g. “My current AF Settings (subject to change)…” is a slightly older version, search with quotes) and am not sure I’d want to give that up, which would rule out a number of future possibilities.

My Bee shot on dpreview should be found from “Bee… 100-400 (on 5Dsr)” which gets you to the thread and shows what we can do with all those pixels (the most indented post, in threaded view, has the Bee closeup).

Dragon ·

Those settings sound quite useful for fast-moving stuff (like swallows, hummingbirds, and bees).

It will be interesting to see what the next high res body looks like. By most accounts, it will be an R, but I am a bit torn as I am not so sure but what I would rather see a 5DSR mark II. When the target is moving fast, EVF lag (even when it is short) can make tracking a challenge. OTOH the focus tracking algorithm seems to be leaning toward dual pixel winning out over an OVF sensor. At least that is the case with the 90D and I suspect that may be instructive re the future. Also, an R body would be more likely to have IBIS, which could be very helpful with that much resolution. Either way, if Canon makes an 83MP FF sensor that has the pixel performance of the 90D, I will preorder the camera.

DrJon ·

I currently have the Star button set to pinpoint AF with a general-purpose tracking algorithm, partly for Owls in trees or anywhere you want accurate AF on a single thing. The AF-On button has tracking optimised for BIF and all the AF points. It’s very handy to just be able to move your thumb sideways and completely change the AF setup.

I’ll certainly consider a high-MP RF body, although it depends on other things. What I’d really like is a combination of my 5Dsr with a deeper buffer and an extra fps or few and my GH5, so IBIS, 10-bit video, etc. However currently people don’t seem to be able to read high-MP sensors fast enough to do really good video, and people have serious heating issues with some more ordinary sensors (fan in the latest Panasonic, large heatsink in the Sigma).

DrJon ·

P.S. However there are some instances where you'll see some moire without an AA filter and won't with (or it's down to a level you don't care about), so for a number of use cases (where much post-processing time isn't available or is undesirable) the AA filter can be a good idea (also some Raw tools are miles better than others at removing it automatically).

DrJon ·

Links:

Extra real resolution without an AA filter:

https://www.lensrentals.com...

Moire both with and without AA filters:

https://www.dpreview.com/re...

Good by KenW on dpreview:

http://www.dpreview.com/for...

See Figure 7 for differences between 5Dsr and 5Ds blur (difference is AA filter), Nyquist is at 0.5:

https://www.strollswithmydo...

Note I find for small areas of high-res detail actually having a little aliased fuzz often looks better than having it smoothed out to nothing.

Dragon ·

Yes, the AAF roll-off leaves a lot be desired. It is basically sin/x (which means it comes back after the null) because of the lack of negative coefficients in the filter (we just haven't figured out how to get those pesky darkons to do our bidding). Still, a well placed AAF is often useful. As to different raw converters helping, I haven't seen much difference with respect to pixel level aliasing, but some do a much better job of guessing color out of the Bayer grid. Given the limitations of the Bayer grid, a 200 MP camera actually makes a lot of sense since that is really only 50 MP in Bayer pixel terms. There may be little or no detail at the individual pixel level, but that is actually good from an aliasing perspective. A logical design would be for the camera to unwind the Bayer grid and then produce a 50 MP RAW file with all the RGB values (only 25% smaller than the 200MP original, but very nice to work with and essentially alias free.

DrJon ·

I see my post full of links failed to appear, so I'm guessing I shouldn't post any links in this one. However, while it's a little old now, if you search for dr_jon and "5dsr moire reduction from raw (automatic and manual)" you might see some of the results I got...

You can certainly get more resolution from Bayer than one pixel per quad, though, so you're throwing resolution away doing that unless you're well into diffraction softening.

DrJon ·

While I'm being a little naughty: googling "A SIMPLE MODEL FOR SHARPNESS IN DIGITAL CAMERAS AA", then skipping the "- Defocus" result and going to Figure 7 in the "- AA" result, could be interesting (note that Nyquist is at 0.5).

Dragon ·

If, in that hypothetical 200 MP camera I suggested above, the null is moved to 0.5 or a tiny bit below, then there will be no aliasing at the pixel level and the potential for color aliasing at the quad level will be substantially reduced (i.e. the raw converter will have an easier time guessing). I completely agree that you can get more resolution out of a Bayer grid than the quad, but that improvement comes with a guarantee of uncertainty that is not always desirable. BTW, I have a 5DSR and just added a 90D. The 90D has the lowest visible aliasing of any Bayer camera I have seen so far, and it still has very good resolution. It will be interesting to see what Canon's next high-res FF looks like. BTW, I am disappointed that you didn't even snicker at my reference to darkons :-).
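
To see what "comes back after the null" means, here is a minimal sketch that models the blur as a simple 1-D box (uniform) filter, whose MTF is |sin(πfw)/(πfw)|. That model is an assumption for illustration: real birefringent AA filters split light into discrete spots and have a |cos|-shaped response instead, which also returns after its null. With a box width w of 2 pixels, the first null lands at 0.5 cycles/pixel (Nyquist), but the response rises again above it.

```python
import math


def box_mtf(freq_cycles_per_px: float, width_px: float) -> float:
    """MTF of a 1-D uniform (box) blur of the given width, in pixel units.

    This is |sinc(f * w)| with sinc(x) = sin(pi x) / (pi x); the first null
    is at f = 1 / w cycles per pixel.
    """
    x = math.pi * freq_cycles_per_px * width_px
    if x == 0.0:
        return 1.0
    return abs(math.sin(x) / x)


width = 2.0  # box width chosen so the first null sits at Nyquist (0.5 cy/px)
for f in (0.1, 0.25, 0.4, 0.5, 0.6, 0.715, 0.9, 1.0):
    print(f"{f:5.3f} cy/px  MTF = {box_mtf(f, width):.3f}")
```

In this model the first side lobe peaks at roughly 0.22 near 0.715 cycles/pixel, so detail above Nyquist is attenuated but not removed.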

DrJon ·

I did like Darkons... :-)

Oh, and the 5Dsr is a great camera; there's still nothing I'd change mine for (currently). I also love using two buttons for BBF (with completely different AF recipes, including tracking options; e.g. "My current AF Settings (subject to change)..." is a slightly older version, search with quotes) and am not sure I'd want to give that up, which would rule out a number of future possibilities.

You should be able to find my bee shot on dpreview by searching for "Bee... 100-400 (on 5Dsr)", which gets you to the thread and shows what we can do with all those pixels (the most indented post, in threaded view, has the bee close-up).

Dragon ·

Those settings sound quite useful for fast-moving stuff (like swallows, hummingbirds, and bees).

It will be interesting to see what the next high-res body looks like. By most accounts, it will be an R, but I am a bit torn, as I am not so sure I wouldn't rather see a 5DSR Mark II. When the target is moving fast, EVF lag (even when it is short) can make tracking a challenge. OTOH, the focus tracking algorithm seems to be leaning toward dual pixel winning out over an OVF sensor. At least that is the case with the 90D, and I suspect that may be instructive regarding the future. Also, an R body would be more likely to have IBIS, which could be very helpful with that much resolution. Either way, if Canon makes an 83MP FF sensor that has the pixel performance of the 90D, I will preorder the camera.

DrJon ·

I currently have the Star button set to pinpoint AF with a general-purpose tracking algorithm, partly for Owls in trees or anywhere you want accurate AF on a single thing. The AF-On button has tracking optimised for BIF and all the AF points. It's very handy to just be able to move your thumb sideways and completely change the AF setup.

I'll certainly consider a high-MP RF body, although it depends on other things. What I'd really like is a combination of my 5Dsr with a deeper buffer and an extra fps or few and my GH5, so IBIS, 10-bit video, etc. However currently people don't seem to be able to read high-MP sensors fast enough to do really good video, and people have serious heating issues with some more ordinary sensors (fan in the latest Panasonic, large heatsink in the Sigma).

BoredErica ·

Can you explain why people think moire is the result of ‘lens outresolving the sensor’ and why that is wrong?

DrJon ·

Moire is perhaps more lens+atmosphere+AA-filter out-resolving the Bayer array, and the de-bayering algorithm not handling it well…

Also, pixels are distinct and lens resolution is smooth-ish as it tails off, so aliasing in general can occur. Sometimes this looks better than not having it. Sensor MTF is odd at the limits due to the discrete nature of pixels.
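
A minimal sketch of the aliasing point, assuming ideal point sampling (one sample per pixel centre, no pixel aperture or AA filter): a cosine pattern at 0.7 cycles/pixel, above the 0.5 cycles/pixel Nyquist limit, produces exactly the same samples as a 0.3 cycles/pixel pattern. The frequencies are arbitrary illustrative choices.

```python
import math


def sample_cosine(freq_cycles_per_px: float, n_pixels: int) -> list[float]:
    """Point-sample cos(2*pi*f*x) at integer pixel positions x = 0..n-1."""
    return [math.cos(2 * math.pi * freq_cycles_per_px * x) for x in range(n_pixels)]


def alias_frequency(freq_cycles_per_px: float) -> float:
    """Fold a frequency into the 0..0.5 cycles/pixel band seen after sampling."""
    f = freq_cycles_per_px % 1.0
    return 1.0 - f if f > 0.5 else f


fine_detail = sample_cosine(0.7, 16)    # above Nyquist
coarse_detail = sample_cosine(0.3, 16)  # its alias below Nyquist
max_diff = max(abs(a - b) for a, b in zip(fine_detail, coarse_detail))
print(alias_frequency(0.7), max_diff)
```

Once sampled, the two patterns are indistinguishable, which is why detail the lens delivers above Nyquist can reappear as false, coarser structure.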

bokesan ·

Given how pronounced the center peak of many lenses gets at higher frequencies, I wonder about field curvature. Are we back to “subject in the center” shots with more MP, or is it actually not this bad?

DrJon ·

Interesting point. Would real-world photos where the subject has more variable depth look a lot better than the results suggest?

Roger Cicala ·

There’s an even worse thing: at ultra-high resolution, focusing becomes ultra-critical. On the bench, we can screw things up by letting it find best focus at 30 lp/mm (the default), because best focus at 150 or 200 lp/mm may be significantly different. It’s basically like focus shift with aperture.

I didn’t look at field curvature on these, but my guess is that, given how fast these fall off (by 4-6mm away from center), that happens before you really start to see much field curvature. Still, a good point and very possible.

P. Jasinski ·

Wait… What?

Does this frequency-related focus shift have any practical implications for photography at the standard set of frequencies, like 20 vs 50 lp/mm?

I’m thinking of product/fashion photos: would it be visible in fabric structure versus, say, the perceived sharpness of the models’ facial features? Would “subject” distance be a significant factor here?

This is truly fascinating.

Brandon Dube ·

If you google “on the use of classical mtf measurements to perform wavefront sensing” you will find my thesis. In it, you will find color surfaces of the MTF vs focus and frequency for some aberrations (see, e.g., fig 24). Curvature in the MTF vs Focus vs Frequency is a signature of spherical aberration. There is an intuitive physical optics explanation for it – email me brandon at retrorefractions dot com if you want to hear it.

P. Jasinski ·

Thanks! Read through the background so far – very interesting.

Roger Cicala ·

There’s also the part about if you’re using autofocus, what exactly is it autofocusing? For many camera manufacturers AF is set using a bar chart that probably is the equivalent of 10 or 20 lp/mm. I don’t pretend to know this stuff, it’s proprietary and for phase-detection, at least, I don’t understand it well enough to say. But it’s an interesting question.

P. Jasinski ·

In terms of AF, I can think of the Canon 50/1.2, which is notoriously difficult to focus accurately with AF, but in my experience it performs considerably better with on-sensor PDAF, especially for off-centre focus points (focus shift and curved plane of focus aside, of course).

It seems I need to think harder about formulating accurate questions regarding these effects and how they translate to real-world use of camera-lens systems.

Brandon Dube ·

Depth of field reduces (loosely speaking) with the square of the frequency. 200 lp/mm has 1/16 the depth of field of 50 lp/mm (or so).
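
Taking the stated rule at face value (depth of field scaling, loosely, as 1/frequency²; this is a commenter's approximation, not a law, and it is challenged later in this thread), the 1/16 figure is just the squared frequency ratio. A minimal sketch with a hypothetical helper name:

```python
def relative_dof(freq_lp_mm: float, reference_lp_mm: float) -> float:
    """Depth of field at freq relative to reference, ASSUMING DoF ∝ 1 / f**2."""
    return (reference_lp_mm / freq_lp_mm) ** 2


print(relative_dof(200, 50))  # → 0.0625, i.e. 1/16
```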

bokesan ·

Thanks, that’s a relief. I’d love to see an MTF vs Field vs Focus graph for one of these. I know it takes a lot of time; one sample (say the 55 Otus) would suffice to see that things are not that bad off-center.

Brandon Dube ·

Our standard MTFvFvF measurements record up to 250 lp or so. Been a few years since I looked. Roger just has to change a setting on the GUI to a much larger number 🙂

Roger Cicala ·

Brandon has spoken, so it shall be done! This is the sagittal image plane for the Sigma 35mm at f5.6. Top to bottom 20, 50, 100, and 150 lp/mm. At 200 lp/mm we didn’t have MTF of 0.2 so the graph really is just a little purple fuzz. You can see how the peak MTF lowers from yellow (>0.9) through orange (>0.7) to purple (>0.4) as frequency increases, and the depth of field narrows.

https://uploads.disquscdn.com/images/80c77b17c241f191a176c133da0a9c0d11011c5396e3934f95d70ae0a326ca3b.jpg

P. Jasinski ·

Is there any chance we could see a similar graph for a lens showing significant spherical aberration?

Roger Cicala ·

Uhm, that was a Zeiss Milvus 35mm f1.4. It has pretty significant spherical aberration, I believe.

Brandon Dube ·

There is insignificant spherical aberration at greatly reduced apertures like f/5.6. It is also field constant, so the result is quite boring in these graphs.

Ilya Zakharevich ·

> “Depth of field reduces (loosely speaking) with the square of the frequency.”

I must say that this makes no sense without context. Could you explain what exactly you mean here?

Brandon Dube ·

I suspect your idea of depth of field is predicated on the bogus idea of CoC and other geometric models. Those models are, bluntly, horse shit and are off not by 1 or 10%, but several-fold when you consider diffraction or even basic higher-resolution systems.

The “Aberration Transfer Function” for defocus is a parabola centered at 1/2 the cutoff frequency. Thus, for the bandpass we are probably interested in, depth of field is, loosely speaking, reduced with the square of the frequency.

Ilya Zakharevich ·

I repeat: what you say makes absolutely no sense. If you want it to make any sense, you need, %#$@&, to specify WHAT IS that “bandpass we probably are interested in”! Otherwise, e.g., Fig. 34 from your PhD thesis (or any bit of common sense) clearly contradicts your claim, both at low and at high spatial frequencies.

Finally, saying “horse shit” does not a good argument make! Unless you can specify EXACTLY which particular metric of depth of field you have in mind, your statements would remain the same the-agricultural-term as before.

Brandon Dube ·

Generally speaking, cutoff is far higher in frequency than the Nyquist limit of the detector (and even then, you do not need much of anything in the way of MTF at Nyquist to get a nice image).

For example, at F/8 the cutoff frequency is 250 lp/mm; current cameras have Nyquist limits near 125 lp/mm. QED, the bandpass we care about is within that monotonically decreasing quadratic regime.
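
The two numbers in that example follow from the standard formulas: the incoherent diffraction cutoff is 1/(λN), and the sensor Nyquist frequency is 1/(2p) for pixel pitch p. The 0.5 µm wavelength and the 4 µm pitch below are assumptions, chosen because they reproduce the quoted 250 and 125 lp/mm; the comment does not state them.

```python
def diffraction_cutoff_lp_mm(f_number: float, wavelength_um: float = 0.5) -> float:
    """Incoherent diffraction cutoff 1 / (lambda * N), in line pairs per mm."""
    wavelength_mm = wavelength_um / 1000.0
    return 1.0 / (wavelength_mm * f_number)


def nyquist_lp_mm(pixel_pitch_um: float) -> float:
    """Sensor Nyquist limit 1 / (2 * pitch), in line pairs per mm."""
    pitch_mm = pixel_pitch_um / 1000.0
    return 1.0 / (2.0 * pitch_mm)


cutoff = diffraction_cutoff_lp_mm(8)  # assumed 0.5 um light at f/8
nyquist = nyquist_lp_mm(4.0)          # assumed 4 um pixel pitch
print(round(cutoff), round(nyquist), round(nyquist / cutoff, 2))
```

Under these assumptions, Nyquist sits at half the diffraction cutoff.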

ATFs are not a very good idea, but for defocus it isn’t that bad of one since the range of OPDs associated with defocus that are under consideration (several waves) usually dwarfs the range of OPDs associated with the other aberrations (~0.1 waves).

Suppose my definition of depth of field is the through-focus range over which MTF stays at or above 0.2 times the peak MTF, at whatever frequency.

The geometric model predicts infinite resolution when in focus and predicts a linear decline in resolution with defocus. Both of these are easily demonstrated as false.
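
A minimal sketch of the contrast being drawn: in the simple geometric (thin-lens) model, the blur-circle diameter for an image-side defocus δ at f-number N is about δ/N, which is zero at best focus (hence "infinite resolution") and grows linearly with defocus. Diffraction alone already gives an Airy disk whose diameter to the first dark ring is 2.44λN. The 0.5 µm wavelength and the defocus values are assumptions for illustration.

```python
def geometric_blur_um(defocus_um: float, f_number: float) -> float:
    """Geometric blur-circle diameter for image-side defocus (thin-lens model)."""
    return abs(defocus_um) / f_number


def airy_diameter_um(f_number: float, wavelength_um: float = 0.5) -> float:
    """Diameter of the Airy disk to its first dark ring: 2.44 * lambda * N."""
    return 2.44 * wavelength_um * f_number


n = 8.0
for dz in (0.0, 10.0, 20.0, 40.0, 80.0):
    print(f"defocus {dz:5.1f} um: geometric {geometric_blur_um(dz, n):5.2f} um, "
          f"diffraction floor {airy_diameter_um(n):5.2f} um")
```

At f/8 the geometric blur only reaches the size of the Airy disk after roughly 78 µm of defocus, so near focus the geometric model ignores the dominant term.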

Or you could take a camera and take an image focused such that the CoC-based DoF equation says something is in focus, only to realize it is quite clearly unacceptably blurry in the picture.

My thesis was not a PhD, but I’ll take the compliment.

Ilya Zakharevich ·

To me, your ideas about “what makes a model geometric” look like high-school simplifications. I would prefer to say that “geometric” means something similar to what Andre Yew said here earlier: treating all “defects” (including diffraction) as if they were “independent Gaussians”, and so combining them as √(a²+b²) (instead of doing honest Fourier optics).
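
For readers unfamiliar with it, the "independent Gaussians" shortcut described in that comment (and disputed in the reply) simply adds blur widths in quadrature. A minimal sketch, with made-up example widths and a hypothetical helper name:

```python
import math


def quadrature_blur(*widths: float) -> float:
    """Combine blur widths as if they were independent Gaussians: sqrt(a² + b² + ...)."""
    return math.sqrt(sum(w * w for w in widths))


# Hypothetical example: a 3 um and a 4 um blur combine to 5 um under this model.
print(quadrature_blur(3.0, 4.0))  # → 5.0
```

In Python 3.8 and later, `math.hypot(*widths)` computes the same thing for any number of arguments. Note that this shortcut presumes each blur has a well-defined width (standard deviation), which is exactly the assumption challenged in the reply.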

About the rest: I still cannot collect the shards of my head together. What *I* get *now* is that using your criterion (MTF20-width), the DoF goes as 1/F for small F, and switches to GROWING as 1/√(F₀−F) for F close to the cut-off frequency F₀. (But since I do calculations in my head, I could easily have missed some sign, which would make my calculations completely bogus. The high-frequency answer above does not [immediately?] match the graphs in your thesis! Need to sleep my flu off… And: sorry for not paying attention to the “grade” of your thesis; I usually review at the PhD level, so I made a wrong assumption…)

Anyway, note that you did not provide any shard of evidence for your

“QED, … monotonically decreasing quadratic regime.”

Brandon Dube ·

What makes it geometrical is that it uses geometrical optics. If you want to treat them as Gaussians, you first have to define their standard deviations, which is (to me) undoable. The second moment of an Airy disk is undefined.

Ilya Zakharevich ·

(Looks like I need to redo without links)