The Limits of Variation

A few people were more than a little amused that I, the ultimate pixel-peeper, wrote an article demonstrating that all lenses and all cameras vary a bit; that you can’t find the ultimately sharpest lens. Each individual copy of a given lens is a little different from the other copies. A single copy will behave a little differently on different cameras. Even on the same camera, autofocus the same shot a dozen times and the results will be slightly different. (Don’t believe that? Put your camera on a tripod, single focus point, aim at a target. Look at the distance scale. Autofocus again. And again. You’ll see the camera is focusing a bit differently each time.) So people started asking me “If there’s variation, then what’s the sense in taking all those measurements?”

There’s plenty of reason for all those measurements. They can be really useful once you stop looking for the exact place a given lens or camera should be and accept that there is a range of acceptability. I’m not saying the manufacturer’s repair center stating “lens is within specifications” means it’s acceptable. (It may or may not be on your camera.) I’m saying it can be tested and evaluated to see if it really is acceptable.

In other words, we know that computerized analysis using Imatest or a similar program can show us a measurable difference exists between several lenses. But what does that mean? Can we tell when a difference is just the inevitable minor variation and when it’s a meaningful problem? The answer is yes. The vast majority of the time, anyway.

Determining What is Acceptable

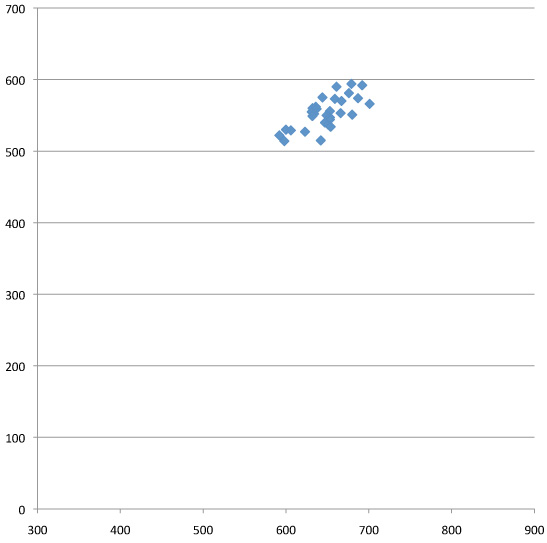

In the last article I used a lot of graphs showing the Imatest results for multiple copies of the same lens, demonstrating how much variation there was between supposedly acceptable copies. I chose to use mostly prime lenses as examples because the patterns were nicely grouped and the outliers were obvious at a glance. Here’s a group of Canon 35mm f/1.4 lenses, for example, and there don’t appear to be any bad copies. It’s a nice, reasonably tight group of results. And knowing how other lenses look when they’re bad, I’d expect a bad lens to be hanging out down around the 500/300 area, well away from this group.

So, the obvious assumption is these are all good lenses. But let’s pretend I’m an OCD pixel peeper who’s paranoid that those two lenses at the lower left, the lowest scores of the group, might not be OK. Just because Roger says the group looks good doesn’t mean it is.

Luckily there is a way to help confirm that our grouping is acceptable. Subjective Quality Factor is a measurement developed by Ed Granger and K.N. Cupery in the 1970s for Kodak and used by Popular Photography for their lens reviews. Basically, SQF uses a mathematical formula, taking the MTF data from the lens (which we get from these tests) to predict with good accuracy how sharp a print would be perceived at various sizes and distances.

I’m not going into detail about SQF (for a more thorough discussion, see Bob Atkins’ excellent article or the references below). The important part is that several experts have shown an SQF difference of less than 5 for a reasonably sized print is basically not detectable by human vision.
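For readers who want a rough feel for what the calculation does, here is a minimal Python sketch of the simplest form of SQF as it is usually summarized: the average of the MTF over the logarithm of spatial frequency between 3 and 12 cycles per degree at the viewer's eye, scaled to 100. The band limits, the function name `sqf_simplified`, and the toy MTF curve are all assumptions for illustration. Imatest's version also weights by the eye's contrast sensitivity and converts lens MTF to a print size and viewing distance, none of which is attempted here.

```python
import math

def sqf_simplified(mtf, f_lo=3.0, f_hi=12.0, steps=200):
    """Average MTF over ln(frequency) between f_lo and f_hi, scaled to 0-100.

    mtf: function taking spatial frequency in cycles/degree (at the viewer's
    eye) and returning contrast between 0 and 1. The 3-12 cycles/degree band
    is an assumption taken from the commonly quoted simplified definition.
    """
    ln_lo, ln_hi = math.log(f_lo), math.log(f_hi)
    step = (ln_hi - ln_lo) / steps
    # Trapezoidal integration on points evenly spaced in ln(frequency).
    samples = [mtf(math.exp(ln_lo + i * step)) for i in range(steps + 1)]
    area = step * (sum(samples) - 0.5 * (samples[0] + samples[-1]))
    return 100.0 * area / (ln_hi - ln_lo)

def toy_mtf(f):
    """Hypothetical MTF curve that falls off smoothly with frequency."""
    return math.exp(-f / 20.0)

perfect = sqf_simplified(lambda f: 1.0)  # a lens with no contrast loss at all
typical = sqf_simplified(toy_mtf)        # lower, because contrast is lost
```

A lens that kept full contrast across the whole band would score 100 in this sketch, and any real MTF curve scores lower, which is why small differences between copies show up as a point or two of SQF.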

In the graph below I’ve added the SQF numbers for several of the lenses (we arbitrarily use SQF for 30cm height images) for both best and average resolution of the lenses. We could use a different image size and the absolute numbers would change, but not the difference between them (for reasonable image sizes).

As you can see, the highest SQF (84/80) and the lowest (81/75) remain within 5 points of each other. Trying to pick the best lens out of this group (not even considering each will be slightly different on a different camera, slightly different every time it focuses on the same scene, etc.) is basically foolish: we can measure this small difference with the computer, but we couldn’t see it in the picture. However, any lens that tested much lower or to the left of our scattered pattern of good lenses would indeed be softer and you could tell it in a print.

And What Isn’t Acceptable

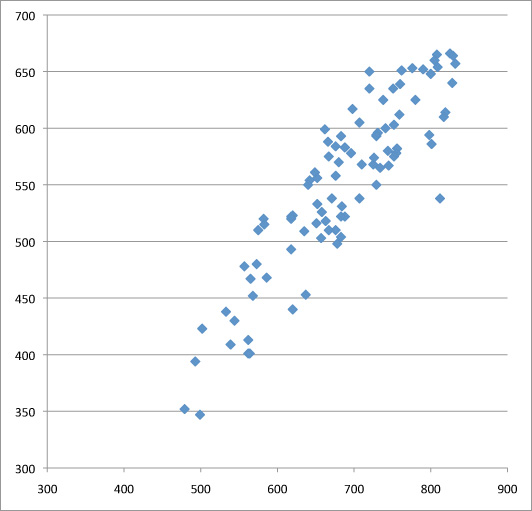

Unfortunately (for my sanity), all lenses aren’t primes with nice tight test patterns that make great examples. Below is a run of almost 100 copies of the Canon 24-70 f2.8 zoom lens tested at 70mm. Why so many? Because when I first tested two dozen copies they were a random smattering of results scattered around the chart. Data doesn’t make any sense? Get more data. Luckily we have a lot of lenses.

But more data didn’t solve the problem either, it just gave me a big smattering of lens results. Zoom lenses do tend to have a bigger copy-to-copy variation spread than primes do, but this was ridiculous. Obviously the two lenses at the bottom left are bad and simple optical testing confirmed that. But what about the group of a half dozen just above the two bad ones? And that other group of a half dozen above those but still under 500 LP/PH? Optical testing wasn’t very helpful, they seemed OK shooting test charts. On the other hand, they didn’t look really sharp either.

SQF was really quite helpful in this situation. Let’s look at the same graph with some SQF numbers added. The very best result we got was an SQF of 85/84. If an SQF difference greater than 5 points would be visibly different, then we can draw a line between the lowest acceptable results and the highest unacceptable results, which I’ve done on the graph.
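The arithmetic behind that line is simple enough to sketch in a few lines of Python. This is only an illustration: the copy names and their SQF values are invented, and the rule of flagging a copy when either of its two SQF numbers falls more than 5 points below the best copy's is an assumption about how the cut-off might be applied, not a description of the actual procedure.

```python
SQF_VISIBLE_DIFFERENCE = 5  # points; the visibility threshold quoted in the article

def needs_service(copy_sqf, best_sqf, threshold=SQF_VISIBLE_DIFFERENCE):
    """True if either SQF number is more than `threshold` below the best copy's."""
    return any(best - value > threshold for value, best in zip(copy_sqf, best_sqf))

best = (85, 84)  # the two SQF numbers of the best copy in the 24-70 run

# Hypothetical copies, invented for illustration only.
copies = {
    "copy A": (83, 81),
    "copy B": (80, 79),  # exactly 5 below the best: still acceptable
    "copy C": (78, 74),
}

flagged = [name for name, sqf in copies.items() if needs_service(sqf, best)]
print(flagged)
```

With these made-up numbers only the third copy falls below the line, since a copy sitting exactly 5 points under the best is treated as indistinguishable in a print.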

The truth is, in looking at the graph, that’s where I suspected the line would be — results above that line are fairly tightly grouped, those below it more scattered about. But I’ll admit I was hoping I was wrong, because being right meant we were going to send a whole lot of lenses in for factory service. I believed the SQF data, though, so the whole bunch went off to Canon.

But the proof is in the pudding. Nine of those lenses have come back from Canon. Below are the original test results in blue and the post-repair results in red for those nine copies. I’ve also left in the line from the previous graph that SQF said would be the acceptable cut-off for this lens at 70mm. Not all of them are back yet, but I think these 9 lenses make the point pretty clearly.

As you can see, all of the lenses that were unacceptable by the SQF definition came back from repair in the acceptable range. So it does appear that a bit of pixel-peeping measurement, along with some mathematical calculations, really can give us a reasonable indication of what is just normal variation and what is a bad lens. (BTW, out of all the different Canon lenses, this is the only one for which the bad lenses were not completely obvious at a glance. And even the bad lenses from this set all were perfectly fine at less than 55mm; they only had trouble at the long end.)

Of course, now you should be thinking “Roger, why would they just be having trouble at the long end?” and “Why were there so many bad copies of this one lens?” Well, you know me, I have major OCD and I just had to try to figure that out.

The 24-70 mystery

This part has little to do with optical testing; it’s about the Canon 24-70 lens specifically. And before I go further, please raise your right hand and repeat after me: “I do solemnly swear not to be an obnoxious fanboy and quote this article out of context for Canon-bashing purposes.” Because trust me on this: Canon fared very, very well in our testing, with only this one lens being an outlier. Other brands definitely are not better. This one got to be the example simply because we started testing Canon lenses first, and because we have more copies of them.

I mentioned in my last post that we found no lenses that got worse with age except “one zoom that doesn’t seem to be aging well” and this is the one I was talking about. Years ago when we started Lensrentals, I arbitrarily decided to sell every lens at two years old. I didn’t have a particular reason for 2 years rather than 1 or 3, it just seemed about right. But since I’d just made that call on my gut, one of the things we have looked at with every lens test was the age of copies tested versus their test results, to see if my assumption was correct.

For lens after lens there was no difference with age, so I was shocked when I looked at the results of the 24-70s. The vast majority of the bad lenses (and all of the really bad lenses) had over 20 rental weeks of use.
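The comparison itself is nothing fancy. As a rough sketch of the kind of breakdown involved, the Python below splits copies at 20 rental weeks and compares the fraction that failed in each group. The records are invented for illustration and are not our test data; the function name and the pass/fail flag are likewise assumptions.

```python
from collections import defaultdict

# Hypothetical records: (rental weeks of use, passed the SQF cut-off?)
records = [
    (4, True), (9, True), (12, True), (15, True), (18, False),
    (22, True), (25, False), (31, False), (36, True), (40, False),
]

def failure_rate_by_age(records, split_weeks=20):
    """Return the fraction of failing copies at or under, and over, split_weeks."""
    counts = defaultdict(lambda: [0, 0])  # group -> [failures, total]
    for weeks, passed in records:
        group = "over" if weeks > split_weeks else "under"
        counts[group][0] += 0 if passed else 1
        counts[group][1] += 1
    return {group: failures / total for group, (failures, total) in counts.items()}

rates = failure_rate_by_age(records)
print(rates)
```

For most lenses the two fractions would come out about the same; a clearly higher failure rate in the older group is the pattern that stood out for the 24-70.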

All of our lenses rent with the same frequency. All of them get the same care. So what was different about 24-70s that made them have problems with aging when the other lenses didn’t? There is one thing that is somewhat different about 24-70 f2.8 zooms: they are both barrel extending (rather than internal) zooms and are rather heavy. Most barrel extending zooms are lighter. Maybe thousands of zoom twists were wearing something out.

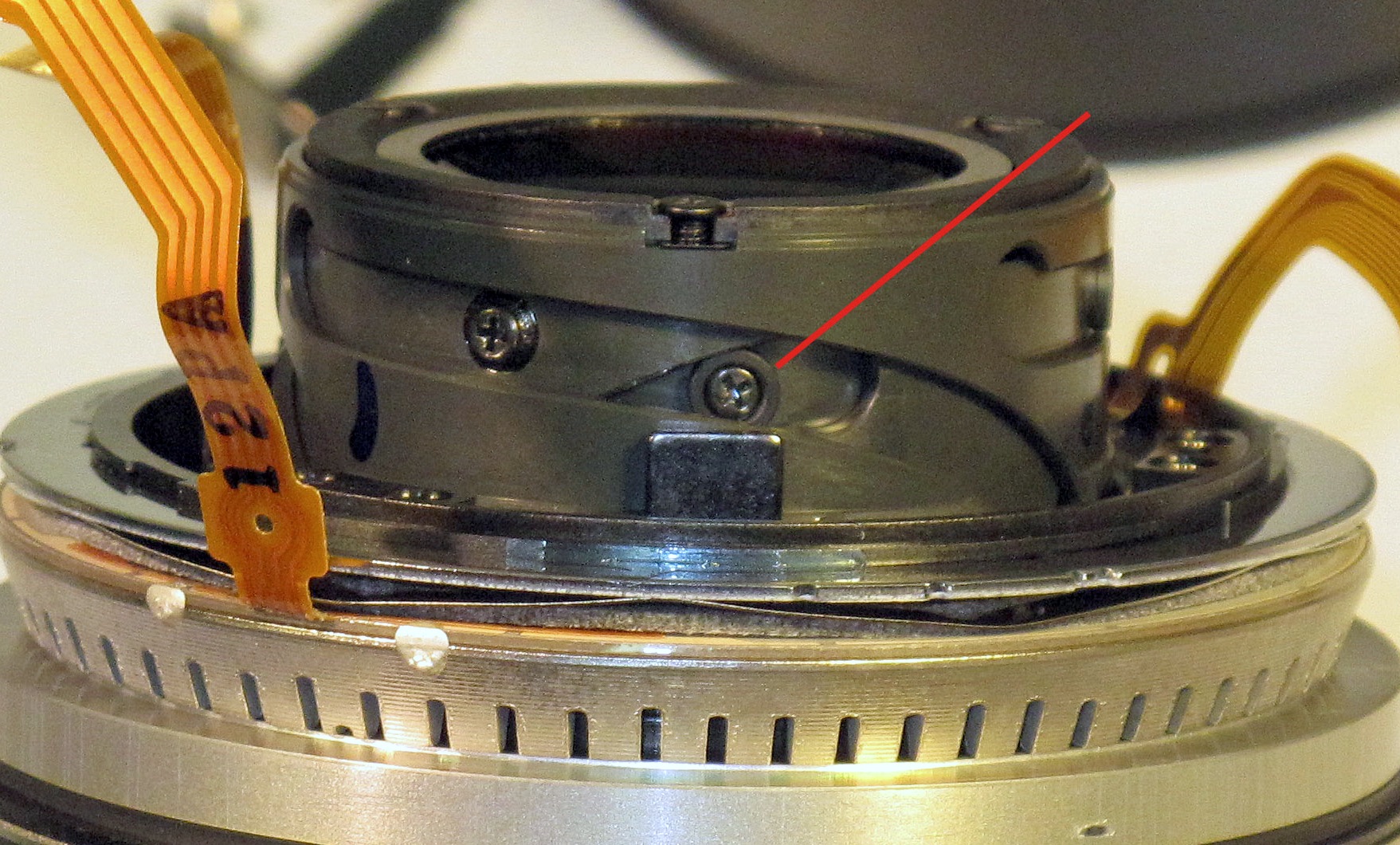

Well, we might not be capable of repairing most lenses without the factory repair center to help, but we certainly are capable of taking them apart (especially when we know they have to go in to be fixed anyway, we’re real brave then) and looking around. What we found was rather interesting. In the zoom helicoid are several screws with plastic collars around them that keep the barrel tracking properly within its grooves:

There are a number of such screw/collar assemblies in the lens. When we looked at some of the bad lenses, we saw several screws that seemed to be missing their collars. In several of the lenses, like the one shown below, we found the collar broken off and loose inside the lens.

When you compare a fresh new collar with one we fished out of an older lens, it becomes pretty apparent they’ve worn down and eventually broken off from around the screw. We didn’t find missing collars in every bad copy we opened, but we did in most of them.

A missing collar or two could (I assume – I don’t know for certain) allow the internal barrel to not stay properly aligned as it tracks in and out with zooming. If it wasn’t properly aligned, it wouldn’t focus properly. Perhaps that explains why these lenses usually have trouble at 70mm and not throughout the entire range. Why 70mm and not 24mm? I have no idea. We did see a couple of lenses with problems at the wide end (and they were both sharp at 70mm), but not nearly as many and they were very clearly outliers.

Before you freak out about your personal 24-70, though, remember these are rental lenses. Probably every one gets more use in a year than yours does in a lifetime.

The Bigger Picture

For those of you into pixel peeping and “I demand a perfect copy” kind of stuff, I think this is the takeaway message:

Copy-to-copy variation is real, although barely detectable in actual photography. If you pixel peep you can find a difference that’s real, but not significant. Meaning you can see a small difference in test results, but couldn’t tell the difference in a print.

Bad lenses are usually massive outliers, easily detected at a glance (see the previous article for examples) or with the most rudimentary testing (like just taking some pictures) in the majority of cases.

There are some situations, like our Canon 24-70s, where a measured difference isn’t huge (at least compared to really bad lenses) but probably is significant enough to affect the sharpness of a print. While SQF isn’t the be-all, end-all measurement and has very real limitations, it can be a useful tool helping us to decide what is, and is not, significant.

Finally, for those of you (and there are a couple of million of you) who own a Canon 24-70, please don’t go off the deep end because of this demonstration. Remember, these are rental lenses. They get used heavily an average of 90 days a year (and probably not as gently as you would use your own equipment). Plus they are shipped all over the country, an average of 20 round-trips a year. As I’ve always said, Lensrentals.com should be considered battle testing for photo equipment. Whatever can fail, will fail here. It doesn’t mean your copy is going to do this.

But if you have an older copy, and you feel it’s softer at 70mm than in the middle and short ranges, it probably is worth a trip to Canon for a checkup. It should be obvious from the graph above that it can be fixed quite effectively.

References:

Biedermann and Feng: “Lens performance assessment by image quality criteria” in Image Quality: An Overview, Proc. SPIE Vol. 549, pp. 36-43 (1985)

Granger and Cupery: “An optical merit function (SQF) which correlates with subjective image judgments.” Photographic Science and Engineering, Vol. 16, no. 3, May-June 1973, pp. 221-230.

Hultgren, “Subjective quality factor revisited: a unification of objective sharpness measures”, Proc. SPIE 1249, 12 (1990)

An Addendum for Lensrentals Customers:

About two dozen people have bought Canon 24-70 lenses from our used lens sales page over the last year. Obviously the inspection we carried out when you bought the lens did not include the Imatest information we now obtain on the lens. If any of you have doubts about the sharpness of your lens at 70mm, we would be happy to test it for you. Please send us an email before you send us the lens, though, so we won’t wonder why a lens arrived in the day’s shipments that doesn’t register in our inventory.

Roger Cicala

Lensrentals.com

October, 2011

38 Comments

alek ·

Gawd Roger, you just can’t stop with incredibly fascinating analysis – good job yet again!

As an engineer, I love the data-driven approach … and pretty wild that this one lens “jumped out” at ‘ya … and then upon further inspection, you were able to determine the probable cause. I would be curious what Canon would say – have they made any comments to you?

Roger Cicala ·

Canon usually doesn’t say anything, but from past experience I know they will correct me in about 22 seconds if they think I have anything wrong 🙂

If they do, I’ll pass it along: they certainly know more about their lenses than I do.

Joe Towner ·

Next thing we know you’re tracking a few copies over their rental life, and Canon may or may not love you as much. Maybe this will be addressed in a II lens.

Roger, your drive for perfect lenses is appreciated by all of us who rent.

Tim Swensen ·

Thanks for the analysis, Roger. This is a good lens, but apparently suffers from a reliability weakness over time. I am glad my copy works well, and it’s good to know that Canon can readily improve it, should its performance degrade over time.

I wonder if you have ever analyzed Canon (or other makers’) wide angle zooms, such as the 17-40L. I found significant asymmetrical sharpness issues, with the right side of the image being notably sharper than the left on my copy. This issue is readily visible and I wonder how Imatest would score such a lens.

Regards,

Tim

Roger Cicala ·

Hi Tim,

We have done the 17-40 (all the Canon lenses are done now) and find on average they’re a bit sharper at the wide end, but not much. What you’re describing though sounds like a decentered or tilted element and it would end up with a very low weighted average on Imatest: in other words probably a bad lens. Canon can fix that, but when you send it in include a photo showing the one-sided softness.

Roger

John Amato ·

Interesting information and thank you. Just today I contacted Canon to send mine back for a second time. It was sharp at 70 but not at the wider end and this was noticeable to me in normal shooting as a lack of snap to the image. I shoot professionally and the lens has gotten heavy use, more than what I suspect your rental lenses do after reading your article. But the lens started off new as not too sharp wide and sharp at 70. The repair got it sharp wide but then the long end was off. So there it is. I hope this trip fixes it.

Sam ·

Were you able to throw in any pre-production copies of the 24-70 2.8L II into the mix by chance?

In all seriousness, this article is additional proof that I should continue to hold out for an update to this lens. We know one is in the works. It’s too old and too important for Canon not to make updating this lens a high priority. That said, I do hope they take their time and re-consider the design from the inside, out. Who knows, they might be able to shave some weight off in the process.

Until that magical press release is published, announcing the Canon EF 24-70 2.8L II USM (or IS version), I will simply continue to rent it from you all…or perhaps re-consider the Tamron alternative. Kudos for such an amazing site and service. Truly 5-star/first-class. Studies like these are icing on the cake that will keep me coming back…and back again to rent from you all.

Ralph Conway ·

Thank you very much for that article, Roger.

If I am in the United States and need to rent a lens, I will be honored to contact your company and use your service.

Ralph

Rob ·

Another superb article, and you’ll be gaining a lot of great data here.

Any chance of looking at the 100-400L? always a lot of talk about wear with that lens.

Roger Cicala ·

Hi Rob,

I’ve started on it, but the long zooms take a long time to test, the setup is difficult. With that lens, though, we think we’re pretty accurate in judging them by feel: when the barrel starts to feel gritty as you zoom, it’s usually also getting soft at 400. But then again, if I was so smart, I wouldn’t have had to discover all this problem with the 24-70s, so check back in a month or two when I have real data.

Peter ·

Do you think the 24-105mm f4L lens would also be affected since it has a similar barrel zoom design?

Roger Cicala ·

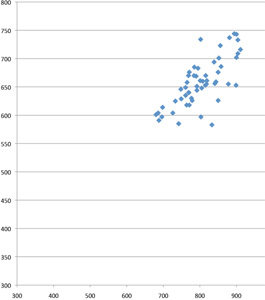

Peter,

It’s not at all: we’ve tested about 80 of the24-105s now and they are one of the most accurate zooms at both ends, no change with age, etc. Perhaps because it’s a much lighter weight, or perhaps because it’s a newer design, it has none of the symptoms of the 24-70. The chart for it is below and you can see at a glance it’s a much tighter pattern with none of the “tail” the 24-70s had.

Davis Bourque ·

And this is the very reason I only rent from you. Your ongoing approach to deliver the best product continues to amaze me.

Kudos to you and your staff.

Davis

Steve Singer ·

Roger, this was another fine example of your dedication to the world of photography. Thank you for taking the time and effort to explore this issue. Very few individuals or organizations would go to this extreme.

I was wondering if you plan on testing the Zeiss line of lenses. I am looking more closely at Zeiss and will be renting the 35mm f/1.4 from LensRentals next month. Does an “old-world” (all metal, manual focus) manufacturer of lenses perform better out-of-the-box than Nikon or Canon? (I realize that Cosina actually manufactures the lens for Zeiss).

Any thoughts or comments would be appreciated. Again, thank you for your commitment to your industry.

P.S. Have you given any thought to offering testing as you did for this article, for any lens that is PURCHASED from LensRentals either for a fee or as a value-added proposition?

Roger Cicala ·

Hi Steve,

We’re testing every lens eventually, including Zeiss ZE and ZF mounts. But I don’t have enough data yet to answer the question. It takes a while to go through our rental copies: most of them are out at any given time and it’s usually the third or fourth run of testing a given lens before I have enough data points to start analyzing. And ideally I like to test a group of new-out-of-the-box copies for each lens, too, just to make sure there’s not a difference between what we see with new copies and our rental fleet. But I hope one day to make all of the data available.

We are doing analysis on Canon 24-70s before listing them for sale now, for obvious reasons 🙂 We’ll eventually be doing it with every lens, but it will take a few more months before I’ve gotten all our rental lenses done, which is first priority.

Walter Freeman ·

Fascinating article as always, Roger.

I have another lens that I think suffers from some of the same problems: the Olympus 50-200 f/2.8-3.5 non-SWD version. It’s got all the risk factors:

–mine is old: I shoot the hell out of it and got it from someone who did too

–it’s got a pretty heavy barrel that extends, with a quite heavy front element

I’ve noticed that mine is not quite as sharp as it was when I bought it — but only really noticeable at 200/3.5, and even then you have to peep. But since Olympus doesn’t make a mid-range bird lens (like a 300/4 or a 400/5.6), people teleconvert the 50-200… and mine is noticeably worse with a TC than many samples I see, and worse than (I think; these things are subjective without test numbers) it was when I bought it.

A ·

Another excellent and informative post Roger!

I suspect you could make a mint offering a lens testing service…

Before people start getting it into their heads to send everything to you or Canon though, it’s worth reminding people that the postal/courier services can and will occasionally destroy things. I recently received a lovely 5000 piece Portmeirion jigsaw puzzle, or teapot as it was previously known 😉

Thankfully the only thing I’ve rented from you (so far) arrived in perfect shape!

Anders ·

Roger! A very big THANK YOU for your amazing work and the results that You share with us. I live in Sweden and have been trying to get similar deep professional information over here, no luck whatsoever. Keep up the spirit 🙂 B.R // Anders

Josh ·

Roger,

It’s worth noting that Photozone required four copies of the 24-70 before it could obtain a non-defective sample.

Gonza ·

Hello,

I am an engineer and hobby photographer in Argentina. I did not even know of your existence half an hour ago. I just read the article in dpreview, and then this one, which appears to be a follow-up.

Excellent!! Of course I knew that the perfect lens does not exist, and that you have to calibrate a given lens to a given body. But to see the real measured data is just awesome.

I learned a lot today. Very very interesting, congratulations!

Gonza

Tom ·

This article in concert with the lens copy variation article is the biggest reason why my first and certainly not last lens rental will be through lensrentals.com.

Thanks for the info.

Flo ·

Great article! Do you test lenses on demand :D?

Thanks!

Roger Cicala ·

Flo,

We can’t at this point: it’s a full-time job just testing our stock lenses to make sure they’re all up to spec.

TS ·

Thank you, this was another very interesting and informative article. I do have one question about the broken screw collars, I’m sorry if this was mentioned in the article but I read it twice and could not find it: did you check any good 24-70 lenses for broken screw collars? I’m wondering if the good lenses had intact collars which would more strongly implicate broken collars as a source of the problem, or if the good lenses might have had broken collars as well which would indicate that the broken collars are a common yet harmless occurrence. Thanks.

Roger Cicala ·

We have opened up a number of 24-70s for other reasons (sand or grit in the zoom, etc.) and have only found the broken collars in our older lenses that had resolution problems, so far. But it’s certainly possible some break with no effect on resolution.

Roger

Kai ·

Hi Roger,

Fascinating data and work (Engineers’ hats off to you for that), but also somewhat scary to me since I have a 24-70mm that was used professionally by a relative for the first 4-5 years, before I got it.

The data in your post got me thinking, since my relative was unhappy about the sharpness of the copy and had switched to a 50/1.4 for portrait work (that’s how I got it). So armed with a print-out of the post, I handed in my 24-70mm to Canon Tech service. Guess what: they replaced 3 collars and the lens feels a lot better now (haven’t had time to shoot yet). I had always noticed how the barrel would feel loose and could be rocked from side-to-side when zoomed to 24mm, but this is all but gone now.

A big thanks to your dedicated work!

Kai

Jani ·

plastic collars around them that keep the barrel tracking properly within it’s grooves

My OCD can’t handle that apostrophe.

(Yes, I’m just compensating for my feelings of inadequacy before such a mighty piece of research.)

jseliger ·

Heads up: You have a typo here:

BTW, out of all the different Canon lenses, this the only one for which the bad lenses were not completely obvious at a glance

The sentence should read “this is the only one. . .”

Charles ·

This is a fantastic analysis. Have you ever measured lens variation as a function of year and place of manufacture? I picked up a 50L yesterday, having sort of chosen between two copies my local store had. They were both acceptable, although the UA stamp copy (2012) seemed a smidge sharper than the UZ stamp copy (2011). Any data on this? I’m not so curious about this particular lens, just variation as it relates to manufacturing date in general. Of course, the two copies I looked at is way too small a sample size to make any generalizations. And my test of sharpness was completely unscientific (standing facing a poster on a wall and shooting at 1/160 s at f/1.2 then looking at the letters). Thanks for all of your excellent work!

Roger Cicala ·

Charles,

We do see some sort-of patterns. For example, one period had lots of Canon 300 f4 IS lenses with electrical problems. Canon 17-55 f2.8 IS, Nikon 24-70 f/2.8 and many other lenses had problems in early years that disappeared in later years.

But we don’t have enough copies to determine certain problems in certain serial number runs.

Fabio ·

Hi,

I am about to buy a used MKI 24-70; the copy is UX0713 (Utsunomiya, 2009).

When I saw this article I was “afraid” of buying.

Can you tell me how to quickly screen whether it is a good copy or not?

Right now I have a 5D MkIII with a 50mm f1.8.

Best Regards,

Roger Cicala ·

Fabio,

Here are a couple of things I look for on 24-70 Canons. First, if there are dents in the filter ring, run away. That lens does NOT survive drops at all.

Second, is it about as sharp at 24mm as at 70mm? The great majority of the problems we see affect either 24 or 70, but not both.

Finally, is there any ‘catch’ in zooming or focusing? Broken collars in the helicoids are common and show up as a roughness when zooming and focusing. If there’s a broken collar, the lens will be decentered.

Fabio ·

Hi Roger,

What test do I have to do to see if the lens is sharp or not?

Do you have any suggestions?

When I get my order, can I send you some images to evaluate?

The lens will be sold by a shop that also repairs lenses …

Thanks for your help

Regards

Debashis ·

I had the same problem with my 5 month old, very sparingly used 24-70mm f/2.8 Mark I. Did a quick focus test using a chart and found lens back focused at 24mm (barrel extended position) by about 10mm-14mm. This I tested at two apertures that I normally use – f/2.8 and f/5.6. There was no perceptible focusing issue at 70mm (barrel retracted position). I also found a bit of lateral movement around the barrel which I suspected played a part in the problem at the 24mm end.

Sent it to Canon repair and it came back today with 3 collars replaced. Haven’t tested the lens yet but the lateral play around the barrel is no longer there. I just hope the problem has been fixed for good.

Regards

Hasan ·

Hi. I really enjoyed reading this and the information was very useful. Perhaps you can enlighten me on a problem I’ve been having. I have tested 3 copies of a Tamron 90mm macro lens on 3 600D cameras and 1 7D camera (as well as various 5D bodies). On close macro shots, all the lenses worked perfectly on all the bodies. On the 5D bodies and the 7D, the focus was spot on at long distances (10 feet to infinity). On all 3 600D bodies, the focus was way off. Could this be a lens issue or a 600D issue? Surely I can’t have 3 bad copies.

Bartholomew ·

It certainly isn’t the sharpest lens, and I have had mine repaired because of these broken collars after 3 years of use. But when it works, it is excellent for journalistic and documentary use, where IQ isn’t the primary element of a lens. It takes a beating much better than many other lenses.

Michael Clark ·

“I do solemnly swear not to be an obnoxious fanboy and quote this article out of context for Canon-bashing purposes.”

Cristian Fernando Benaprés Mar ·

very helpful information! I have the same issue with my 24-70 f2.8