Sigma 135mm f/1.8 Art MTF Charts (and a Look Behind the Curtain)

I know there are a lot of people who want to see what the MTF charts on the new Sigma 135mm DG HSM f/1.8 Art lens look like. We just did our initial screening test on ten copies, so I’ll show you what we found, and give you some comparisons to some other 135mm lenses. This also presented a good chance to show you something I’ve talked about but haven’t really shown you: the process we use in-house to set standards for new lenses.

Now, as always, when I show you some stuff from behind the curtain, you have to swear the Solemn Lensrentals Anti-Fanboy Oath, because with great power comes great responsibility. So you agree you won’t make stupid claims that you’re worried about copy-to-copy variation of this particular lens because these results are GOOD. If I showed you what we go through with bad ones you’d lose your mind; you aren’t ready for bad yet. So everyone say thank you to Sigma for making a lens with good enough quality control that I’m willing to show you the process. In this case we ended up testing 11 lenses to use 10 for our database. This isn’t unusual. There are many brand-name lenses where we test 16 or 17 copies before we’re comfortable and a couple of cases where I didn’t accept standards until we’d tested 35 or 40 copies. We hate those lenses very, very much.

So if you’re interested in the process we go through, I’ll use this post to demonstrate it a bit. If you’re not, you can skip the ‘how and why I do this’ section.

Let’s Start with How and Why I Do These Tests

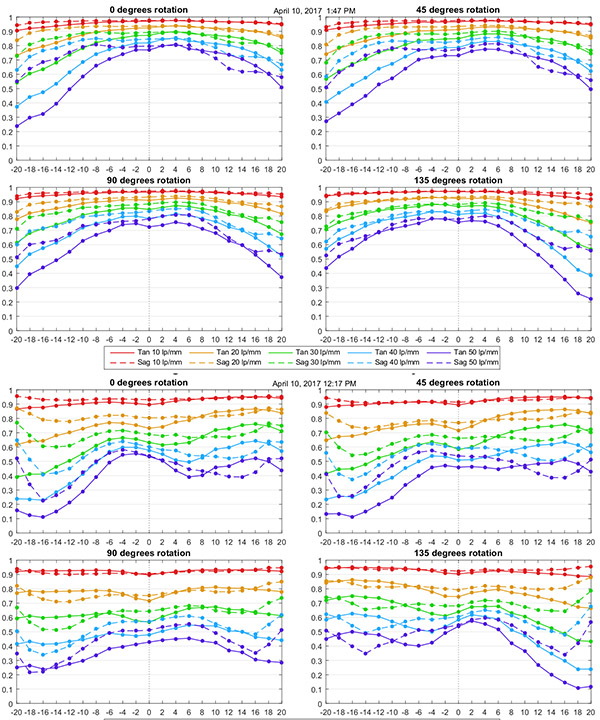

We start standards testing by getting ten brand new copies of the lens. Then I set up the optical bench for the lens and on each copy run a distortion test and run the MTF at four different rotations, so we get a side-to-side, top-to-bottom, and two corner-to-corner tests for each lens.

Here are the results of the first two lenses. You don’t have to be an MTF wizard to figure out the four graphs on top, from the first lens, are different than the four on the bottom, from the second.

Most of you probably think the second one is obviously a bad copy, because jumping to conclusions on inadequate data is fun and easy. But in the big picture of all lenses, the second one isn’t obviously horrid even though it is obviously not as sharp. It’s fairly even with a weak corner, but the weakness only shows at higher frequencies, which means it’s going to be subtle. The red (10 line pairs) lines aren’t dramatically worse even in the bad corner. There’s no apparent decentering. If you bought this lens and tested it you might, or might not, think it wasn’t as sharp as it should be, and if you were really, really careful in your testing you might detect that bad corner. If you had both lenses to test side-by-side you’d prefer the first one for certain.

But at this point, I don’t know if this lens just varies a bit, and we’re seeing the range of variation here. Or is the second one an outlier? That’s why we test multiple copies and that’s also why we don’t jump to conclusions; much. So I proceeded to test the next 8 copies and other than glancing at the MTF charts to make sure they were valid, I tried not to pay much attention to the data at this point. Once they were done, I ran our 30 lp/mm full frame displays for all 10 lenses and compared them visually.

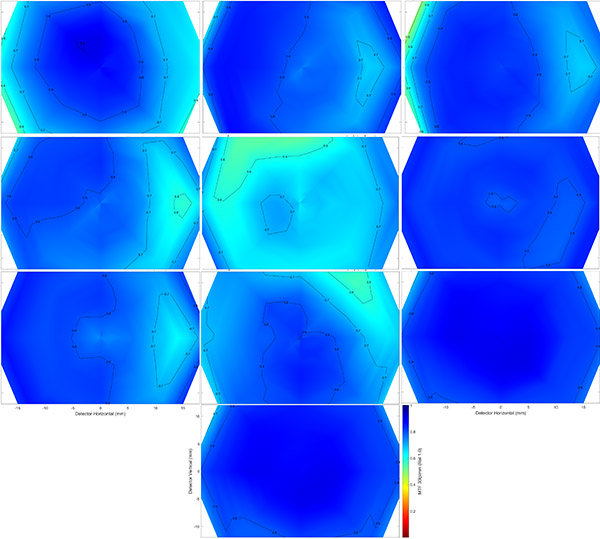

If you haven’t read our blog lately, there are in-depth discussions of what these graphs show in several recent posts, but just consider it a thumbnail of overall sharpness across the lens field with dark blue being the sharpest. That second lens I tested is the one in the center and clearly not as good as the others, at least by this visual inspection. At this point, I begin to think it’s falling into the ‘bad copy’ range, rather than an acceptable variation of the lens range.

But to be more certain, we run each of the lenses compared to the variation of all the other lenses. When I compare this questionable lens (the lines in the graph below) to the range of the other lenses, it becomes clear it falls outside the range. So it was eliminated from the testing group and replaced with another lens, and the results of that group are what I will publish and what becomes our in-house standard for this lens.
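To make the idea concrete, here is a minimal sketch of that kind of comparison. It is not the actual Lensrentals software: the leave-one-out rule, the 2-standard-deviation threshold, the function name `flag_outliers`, and the sample MTF values are all illustrative assumptions.

```python
from statistics import mean, stdev

def flag_outliers(scores, k=2.0):
    """Return the indices of copies that sit more than k standard
    deviations from the mean of all the OTHER copies (leave-one-out)."""
    flagged = []
    for i, value in enumerate(scores):
        others = scores[:i] + scores[i + 1:]
        mu = mean(others)
        sigma = stdev(others)
        if sigma > 0 and abs(value - mu) > k * sigma:
            flagged.append(i)
    return flagged

# Made-up 30 lp/mm MTF values (0 to 1) for ten hypothetical copies.
# Index 1 plays the role of the slightly soft copy.
copies = [0.71, 0.64, 0.72, 0.70, 0.72, 0.71, 0.72, 0.70, 0.71, 0.71]
print(flag_outliers(copies))
```

With these invented numbers only the soft copy is flagged, because the other nine are tightly grouped. If the group had a wider spread, the same copy could fall inside the range and count as ordinary variation.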

You may be interested to know that I gave all 10 of these lenses to one of our more experienced techs and asked him to test them on a high resolution test chart. He said they all passed. When I told him one was bad and to check again, he did identify this one, but described it as ‘just a bit softer, but still fine’. I mention this because part of what you’re seeing here is that this lens is really, really good. Even the worst copy looked OK optically to a very experienced tech. It’s just not quite as good as the others. This is a bit unusual, since a true bad copy usually has some signs of side-to-side variation or decentering, and this lens really didn’t. You could make an argument that this lens is actually OK and I’m just overly picky, and you might be right.

OK, this concludes the ‘why and how we do this’ portion of today’s program. Now let’s look at what I found for our set of 10 copies of the Sigma 135mm f/1.8 Art.

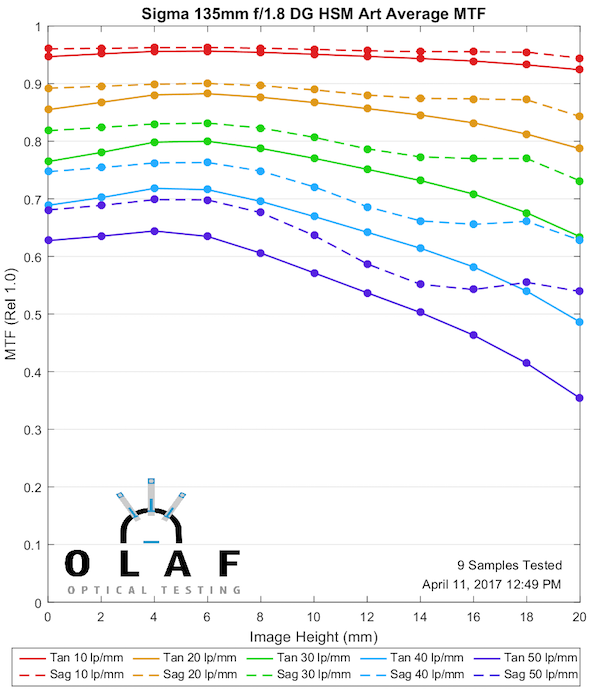

Sigma 135mm f/1.8 Art MTF Results

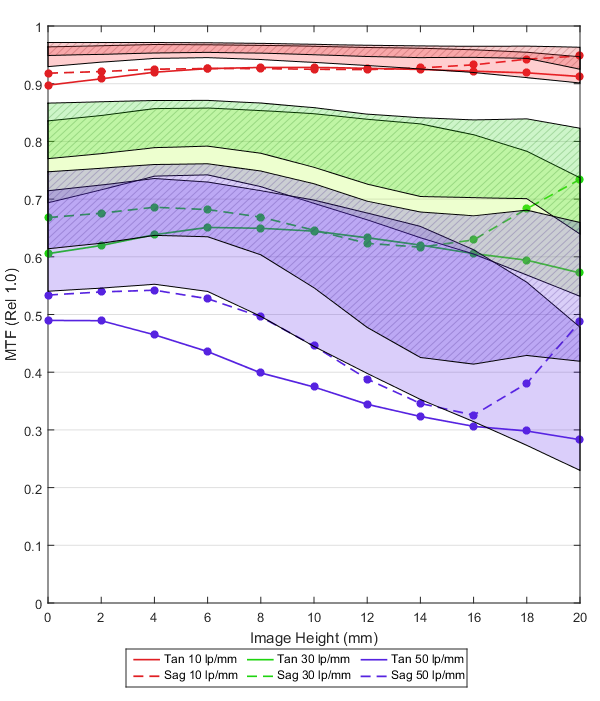

The more MTF savvy among you will notice the center astigmatism that I discussed in our previous blog post on the Tamron 70-200mm zoom. This is partly because with longer lenses the optical center may be a bit off axis from the geometrical center and rotation causes this effect. That is not a problem with the lenses, more of a testing artifact. There also were a couple of copies where there was a bit of central astigmatism that resolved off axis.

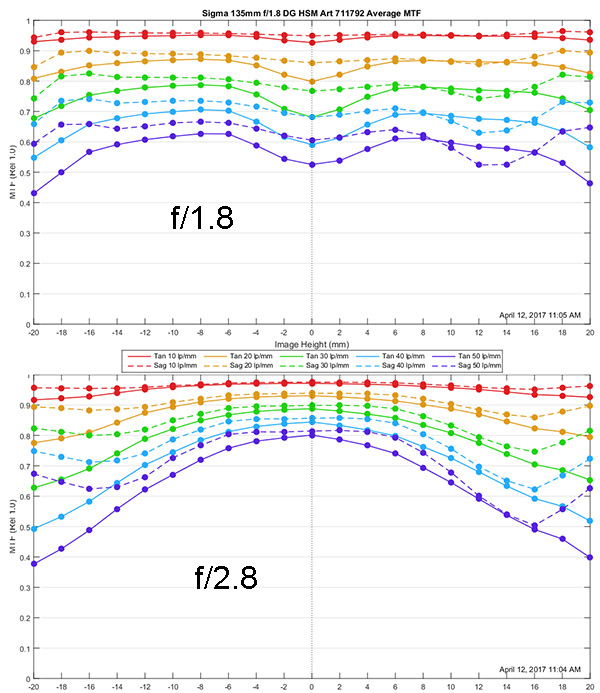

The MTF results are excellent. There is superb resolution both at lower frequencies (contrast) and higher frequencies (fine detail). There is a small astigmatism-like separation between sagittal and tangential lines which may be true astigmatism or lateral color, but it’s minor. The MTF is maintained impressively well all the way to the corners.

Everyone always wants to know about stop-down performance and I always explain I don’t have time to redo the test at every aperture, but I’ll try a bit of a compromise here. I’ve taken one of the ‘about average’ copies and run it at f/2.8. Here you can see that copy’s MTF both wide open and stopped down to f/2.8. It’s really a lot sharper at f/2.8. Who would have thought.

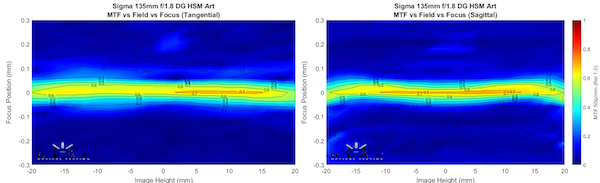

Finally, here are the field of focus plots for both sagittal and tangential MTFs. Like a 135mm lens should be, these are nearly perfectly flat.

And Finally Some Comparison Plots

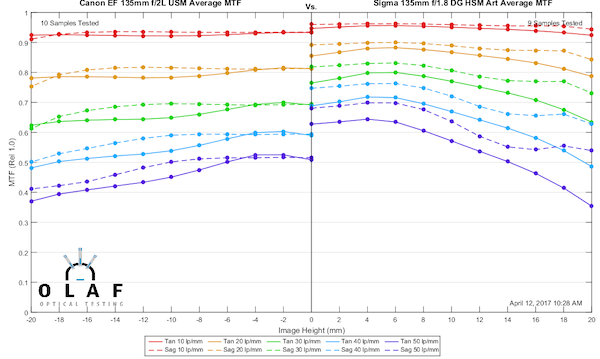

First we’ll compare the Sigma 135mm f/1.8 Art to the venerable Canon 135mm f/2 L, a lens that I unabashedly have claimed as my favorite lens for over a decade. The Sigma’s MTF is clearly better, even though it’s at a slightly wider aperture. Does that mean I’m giving up my tried and true 135mm? Well, I don’t know, but it means I’m at least taking the Sigma for a tryout. That’s really a big difference. But I do love my Canon and there’s more to images than MTF, and the Sigma is kind of chunky and actually a bit more expensive and … damn, that Sigma MTF looks good.

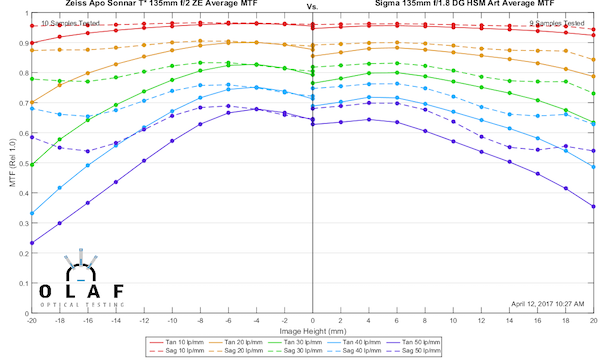

The next obvious comparison is the Sigma 135mm f/1.8 Art with the Zeiss 135mm f/2 Apo Sonnar. Things are a bit more even here, although again keep in mind the Sigma is being tested at a slightly wider aperture. The difference between the two is pretty minor, although the Sigma may be a little better at the edges. Price is about the same here, so the difference is going to be more about how they render, and if you want autofocus with your 135mm.

One last reasonable comparison is to the Rokinon 135mm f/2 — actually, in this case, the Rokinon 135mm T2.2 Cine DS Lens because that’s what I have data on, but it’s the same optics. This one is a close call. Of course, the Rokinon is a lot cheaper. On the other hand, the Sigma autofocuses much better and has better build quality. Still, I want to give Rokinon proper praise for making a superb lens at a superb price.

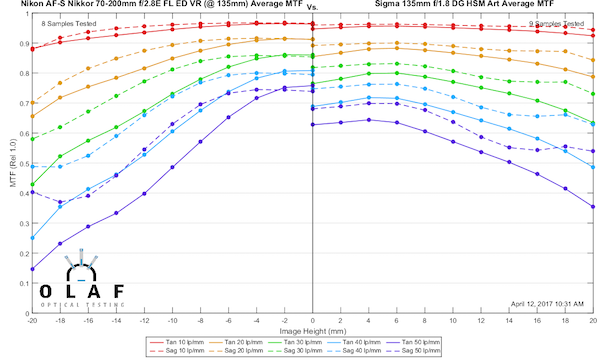

I’ll give you one more direct comparison; one that is meaningless except that I have been harping on ‘zooms aren’t primes’ lately. This is the Sigma 135mm Art at f/1.8 against the best zoom that exists at 135mm, the Nikon 70-200 f/2.8E FL ED. Even at a dramatically wider aperture, the Sigma is better away from center. If you scroll back up to the f/2.8 MTF chart I posted above, at f/2.8 the Sigma is just completely better. Zooms are convenient, very good, and very useful lenses. But they aren’t primes, and they never will be.

So What Did We Learn Today?

Well, testing for sample variation has a bit of judgment and a lot of math in it, and there’s a reason why I (with few exceptions) insist on testing ten copies if I’m going to test.

We learned the Sigma 135mm f/1.8 has a really nice MTF curve, better than the Canon 135mm f/2 L. It’s as good at f/1.8 as the Zeiss or Rokinon are at f/2, and it autofocuses, which they don’t. Whether you want it or not is going to depend on a lot of other factors, but the MTF curves are promising. And we learned, to my sadness, that father time catches up with everything, including my favorite Canon 135mm f/2.0, which is showing its age a bit when compared to these newcomers.

And we learned that Roger is never going to shut up about ‘zooms aren’t primes’.

Roger Cicala and Aaron Closz

Lensrentals.com

April, 2017

41 Comments

Zwielicht ·

Why do you only use the “good” copies to come up with final results? I thought the aim was to tell a potential buyer who buys ONE copy that with a certain likelihood the MTF of his copy will lie within a certain interval.

If you end up testing 40 copies, how many (and which ones) are used for the final results?

Re-reading https://wordpress.lensrentals.com/blog/2015/06/measuring-lens-variance/ it sounds like you keep testing more copies until the variation number doesn’t change too much anymore – that seems to contradict what you say above..? (FWIW, in case you’re worried about outliers, there’s also interquartile range.)

Roger Cicala ·

I guess that’s the point I was trying to make. It’s easy when the techs screen out new lenses, reject the 3 or 5% bad copies and we just test the rest. When they’re really bad copies that’s easy. In this case, it wasn’t a really bad copy, so it was more of a judgement call.

We of course continue to test more copies. When I have 50 tested copies it will be more clear. But I don’t have 50 copies yet, we got 14. One that’s just a little softer with no decentering or tilt, like this one, isn’t an obvious bad copy.

For a lot of lenses (any zoom and a number of primes) we would have seen a gradation from best to worst and not thought of this as an outlier (and as I tried to say – when I first saw it I thought it would probably be within variation). But in this case, the other 10 lenses were very tightly grouped – variation is pretty small. So when I first saw this lens I expected to call it variation, but since the others are so good, I called it an outlier.

It’s not so much about the absolute number of this particular copy, it’s about the tight grouping of all the other tested copies compared to this one. If we’d seen a smooth range from best to this, or even past this, then we’d conclude that was just the amount of variation this lens has copy-to-copy.

I could be wrong, and after 20 more copies tested decide that no, the variation is just greater than I thought and this one is acceptable. But as I mentioned in the article, 10 copies is usually plenty to make the call and be comfortable; but there are occasional exceptions. I doubt this is going to be one.

Interquartile range really isn’t the best way to do it, Monte Carlo analysis is, at least every expert tells me that. We also do that in-house, but it’s complex and less intuitive and I haven’t rolled that out to be our go-to yet. Although Brandon keeps pushing me in that direction.

On the other hand, your friendly neighborhood reviewer tested one copy. What I do isn’t the end all-be all, it’s a good start to what should be.

Ed Bambrick ·

A single bad lens is a tragedy; a million bad lenses is a statistic.

Brandon Dube ·

When the initial pool of lenses are tested, e.g. 10 copies, if any of them are more than 2 sigmas from the mean they are discarded as outliers. The population is not large enough to distinguish an outlier from the tail of the distribution, and we err on the side of caution.

At 40 copies, we would use 3 sigmas because we can make a better argument that our sample represents the population.

Your reading of the first post in this series is not really correct — the plot you are looking at is more of a justification for the variance number I had created at the time. It is saying that, basically, the V# stopped changing after (say) 4 copies and we don’t need to test 4,000 for the number to “settle down.” It turns out that V# is not a very good metric after all, so we stopped using it… something like over a year ago.

The standard practice is to pull 10 (or more, as necessary) new copies of the lens when they are received by Lensrentals. We aren’t looking for the variance to stabilize. If we did that, we would stop at about 5 for most lenses.

The mechanism of misalignment in a lens determines how its MTF departs from the average or nominal value. A spacing error will cause a relatively uniform error across the field of view because it changes only spherical aberration and axial color, aberrations that are constant over the field. There is a balance between aberrations so it is not precisely uniform over the field, but for small changes the aberrations are reasonably orthogonal.

In the case of despace, if a lens is -2sig on axis it is probably just about -2sig over the full field of view. That is an obvious reject (at 10 samples).

In the case of decenter or tilt, a lens may be +2sig (excellent) in some places and -2sig (very bad) in other places. It’s clearly misaligned, but is it abnormally so? That is a much more difficult judgement call.

Roger’s comment mentions Monte Carlo. We are of course not doing computer simulations, but the underlying maths is the same and I haven’t thought of a sexy name for it yet. Pseudo monte carlo just doesn’t have a good ring. We’re probably 6-8mo away from anyone seeing it on the LR blog, both my schedule and our programmers’ are quite full.

Robson Robson ·

Have heard pseudo monte carlo more than once though from some guys simulating. But not in the field of optics obviously…

Ed Bambrick ·

2 sigmas from the mean…..no apologies?

Brandon Dube ·

Absolutely none. If you’re not paying Olaf for MTF data, you get no say in what we do.

Ed Bambrick ·

Apologies for the pun !!

Bob B. ·

OK…I broke down like a child yesterday and ordered the lens without seeing Father Roger’s full-range MTF tests. OH MY!

So..I just checked UPS tracking (ETA: Fri. End of day :-))…and then stopped by here to see if any good articles about anything photographic had posted. GULP! …my palms immediately got just slightly sweaty and my stomach was getting sour and gurgling. Did I just drop $1400 on a lens that Roger is going to show me MTF’s that look like a Vivitar zoom from the 1970’s? (I tend to react in a fear-based way to almost everything).

….(yes…I hurriedly skipped the part about “How I Do These Tests…Sorry Rog…and raced to the meat of the matter…the MTF’s and the comparisons!)……..Phew…my mind is at ease and now I may head off the UPS courier at his lunch spot. (Don’t even ask me how I know where that is!…but it has to do with G.A.S. And it’s not in the brown truck’s fuel tank.).

I think that I am going to skip into the weekend! YAY!

This time I can say THANKS! 🙂

Roger Cicala ·

I can’t imagine you’re going to be anything other than happy with this one.

Bob B. ·

Got the lens Friday…and put it on my recently purchased 5D Mark IV. WOW! What a combo…the AF is very, very spot on.

Omesh Singh ·

On TDP’s LR-linked MTF charts I found some stopped down data for Zeiss lenses. Have you ever done stopped down MTF tests for the 35L-II?

Roger Cicala ·

No, I’m sorry, we haven’t.

Tevin Limon ·

Love these blogs. Can’t wait for an excuse to rent this lens to try it out.

Patrick Chase ·

For the weight and price the Canon still looks very attractive. I’m sure Canon could do better with the same weight/size/cost budgets today, but it’s not completely out of the game IMO.

On a related note, my first copy of the Sigma 85 had fairly noticeable astigmatism in the center, this becoming only the second lens I’ve ever sent back. I think that there may be some manufacturing challenges that Sigma hasn’t quite mastered with these long and fast primes.

Patrick Chase ·

My visceral reaction is: Fast primes with this sort of DoF take terrific images of flat targets, but I can’t help but wonder if we’re into “overkill territory” for real-world images.

One way to look at this is to think about the depths over which those spectacular MTF values are achievable. The markings on lens focus scales typically assume a 30 micron acceptable blur diameter. That amount of blur causes a 50% reduction in MTF at 24 lp/mm, an 80% reduction at 33 lp/mm, and a 90% reduction at 37 lp/mm. In other words, those spectacular 30, 40, and 50 lp/mm MTF values are completely irrelevant at the limits of DoF as commonly defined, because the lens point spread functions are much smaller than the defocus blur.

I suspect that you would need to work within a ~10 um CoC (and certainly no more than 15 um) to see or take advantage of the MTF difference between, say, a Canon 135/2 and a Sigma 135/1.8. For a head shot at 1:15 magnification at f/2 that would give you a “relevant DoF” of ~9 millimeters. Everything outside of that sweet spot will look more or less identical between the two lenses in terms of sharpness.

Patrick Chase ·

Reposting something that apparently hit your spam filter…

My visceral reaction is: Fast primes with this sort of DoF take terrific images of flat targets, but I can’t help but wonder if we’re into “overkill” for real-world (i.e. three-dimensional) subjects.

One way to look at this is to think about the depths over which those spectacular MTF values are achievable. The markings on lens focus scales typically assume a 30 micron acceptable blur diameter. That amount of blur causes a 50% reduction in MTF at 24 lp/mm, an 80% reduction at 33 lp/mm, and a 90% reduction at 37 lp/mm. In other words, those spectacular 30, 40, and 50 lp/mm MTF values are completely irrelevant at the limits of DoF as commonly defined, because the lens point spread functions are much smaller than the defocus blur.
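As a rough check on those percentages, here is a minimal sketch. It assumes the defocus blur is a uniform disk (the purely geometric model, ignoring diffraction and lens aberrations), whose MTF multiplier is |2·J1(x)/x| with x = π × blur diameter × spatial frequency. The function names are hypothetical, and J1 is computed from its power series so that only the standard library is needed.

```python
import math

def bessel_j1(x, terms=40):
    """Bessel function of the first kind, order 1, from its power series.
    Adequate for the small arguments used here (x below about 10)."""
    total = 0.0
    for k in range(terms):
        coeff = (-1) ** k / (math.factorial(k) * math.factorial(k + 1))
        total += coeff * (x / 2) ** (2 * k + 1)
    return total

def defocus_mtf(freq_lp_mm, blur_diameter_mm):
    """MTF multiplier of a uniform blur disk of the given diameter."""
    x = math.pi * blur_diameter_mm * freq_lp_mm
    if x == 0:
        return 1.0
    return abs(2 * bessel_j1(x) / x)

# 30 micron blur disk = 0.030 mm
for freq in (24, 33, 37):
    loss = 1 - defocus_mtf(freq, 0.030)
    print(f"{freq} lp/mm: MTF reduced by about {loss:.0%}")
```

Under this model a 30 micron disk removes roughly half of the MTF at 24 lp/mm, about four fifths at 33 lp/mm, and about nine tenths at 37 lp/mm, in line with the figures quoted in the comment.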

I suspect that you would need to work within a ~10 um CoC to see or take advantage of the MTF difference between, say, a Canon 135/2 and a Sigma 135/1.8. For a head shot at 1:15 magnification at f/2 that would give you a “relevant DoF” of ~9 millimeters. Everything outside of that range will look more or less identical between the two lenses in terms of sharpness. The bottom line is that the range of subjects that are “shallow” enough to exploit these sorts of MTF differences at large apertures seems fairly small.
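The ~9 mm figure can be sanity-checked with the usual close-up approximation for total depth of field, DoF ≈ 2·N·c·(1 + m)/m², where N is the f-number, c the circle of confusion, and m the magnification. This is a sketch under that assumption, and the function name is hypothetical. The commenter may have used a slightly different approximation, such as dropping the (1 + m) factor.

```python
def total_dof_mm(f_number, coc_mm, magnification):
    """Approximate total depth of field (mm) for a close subject:
    2 * N * c * (1 + m) / m**2."""
    m = magnification
    return 2 * f_number * coc_mm * (1 + m) / m ** 2

# Head shot at 1:15 magnification, f/2
tight = total_dof_mm(2.0, 0.010, 1 / 15)   # 10 micron CoC
usual = total_dof_mm(2.0, 0.030, 1 / 15)   # conventional 30 micron CoC
print(f"10 um CoC: {tight:.1f} mm, 30 um CoC: {usual:.1f} mm")
```

With these inputs the 10 micron case comes out a little under 10 mm, the same ballpark as the ~9 mm quoted. Depth of field scales linearly with the CoC in this formula, so the 10 micron zone is one third of the conventional 30 micron depth of field.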

Greg Dunn ·

That’s a good assessment. I’ve often wondered what these people who crave razor-thin DoF are actually shooting in the field; I strongly suspect many of them are just shooting wide open as a bragging point. I saw a landscape photo recently where the subject was a beach, with sand in the foreground and horizon/clouds in the background. The photographer focused on a diagonal line of breaker foam at f/1.8, ensuring that only just part of it was in focus, and more than 90% of the photo was badly OOF – ensuring there was no subject to draw the viewer’s attention.

Even for portraits – my subjects would be very puzzled if I shot them with half of their face OOF; they like seeing details of hair and clothing.

Patrick Chase ·

IMO there are a fair number of cases where a razor thin DoF does make artistic sense, to create isolation or to otherwise draw attention to a subject. That isn’t my own aesthetic for the most part [*], but I understand and respect where people that do so are coming from.

The catch is that even in those cases only ~1/3 of that “razor thin DoF” (assuming the traditional picture_height/800 criterion for permissible CoC) is sufficiently sharp to expose the sorts of differences we’re talking about here. The other 2/3 might appear “sharp” to a casual viewer, but is actually defocussed to the point where these sorts of lens differences are moot.

There is also the minor complication of focus accuracy. For a real-world, handheld shot of a non-static subject even the best photographer may find themselves hard-pressed to nail focus accurately enough to make the difference between a lens that scores 65% at 50 lp/mm and one that “only” scores 50% visually relevant.

[*] If I were shooting a nearly-flat subject receding to infinity like a beach and ocean, my first instinct might be to reach for one of my tilt-shifts so that I could get everything in focus without stopping down too far.

Gracie ·

Astrophotography–zero depth of field needed, high speed needed, full field illumination needed, sharpness–especially in the corners– paramount. I wish these reviews provided better data/graphics on field illumination. A lens with 2 stops of falloff is useless for astrophotography. 1 stop is the max tolerable.

Ketan Gajria ·

Well written. I’m a bit surprised there’s no comparison with the 135mm Batis, despite the difference in maximum aperture and aperture you could compare them at, if only because both are new releases which LensRentals published MTF charts for and both have autofocus. No?

Roger Cicala ·

Ketan, I’ve only tested one prototype copy of the Batis, so I didn’t want to make a comparison until I have 10 copy data.

Ketan Gajria ·

Ah, that’s right. Makes total sense.

bdbender4 ·

Hi Roger and Aaron –

Very minor typo at the very end: it’s April 2017, not 2015. This might confuse the multitudes who will be reading this years from now. Then again, maybe not…

I have a slightly off-topic question about Sigma. You used to do a least-reliable-lens analysis from time to time, but I haven’t seen one for quite a while. I have also heard third-hand on the internet that even though Sigma’s quality control has gotten better over time, problems with focusing accuracy can arise after “a while”, presumably due to wear of some sort.

I don’t own any Sigma lenses but they are obviously tempting these days. So the question is, does your experience support any of the “focusing non-reliability issues”? Or have they adopted six-sigma to deal with all the quality control issues (nice pun, eh?).

And if you don’t want to touch this question that’s OK too.

Roger Cicala ·

Sigma has gone from really not reliable 6 years ago to very reliable now. There are the occasional autofocus reports as you say, but 95% of them can be completely fixed (and firmware updates made) via the Sigma dock, which is a marvelous tool. If not, Sigma repair is now among the better ones and they take care of it quickly and reliably.

Interestingly, by far, very far, the worst repair service now is Leica.

Aaron Collins ·

Are all Sigmas more reliable now or just the models designed/released recently? IE would a 35 Art bought new today be more likely to be trouble-free than one bought on release?

Markus Baumgartner ·

I’d really love to see a comparison with the Sony Zeiss 135/1.8.

Roger Cicala ·

Unfortunately we don’t have a Sony alpha mount for our machine

CheshireCat ·

Thanks for the test.

What about longitudinal chromatic correction and color rendering ?

(MTF are too… MTF-ish 🙂

Roger Cicala ·

Well, yeah! That’s why there are reviewers 🙂

Although if I buy a spectrometer, then color rendering will become a number too.

CheshireCat ·

That would be great. I think lens color rendering is overlooked by most users, because “colors can be perfectly fixed in post” (which is a myth in my opinion).

Lee ·

Are they going to have to confiscate your Purchase Order pad to stop you??

Samuel H ·

Great test, as always. Thanks a lot.

My take is that this 135mm f/1.8 is just as good as their awesome 85mm f/1.4 (comparing your MTF graphs, actually a little bit better, but you’ve taught us not to split hairs). It also seems just as heavy, and, surprisingly, a bit smaller.

It’s about time they started making glass for Sony FE (and not just “same glass with different mount”, but actually designed for mirrorless, meaning smaller and lighter but with similar performance).

Paulo Basseto ·

Roger, how does it compare to the 105mm 1.4 in sharpness at widest aperture? The 105 has been a beast every time, no hiccups and always sharp, even when you expect it not to be.

Roger Knight ·

Roger, In Zack’s 5/24/17 review of the Sigma 135 Art, he mentions having had back focusing issues with his Canon 135L. His comment was, ” 2. It was backfocusing, but pretty inconsistently. When I sent it into Roger and Aaron, they found that it was decentered pretty bad, a somewhat common issue with that lens from what I could understand”.

Is decentering a problem with the 135L? I’ve never read of such an issue in any of your reviews or comments on that lens.

Regards.

Roger

Zach Sutton Photography ·

For clarity, I’d first like to go on record by saying I absolutely beat the crap out of my gear, so it has to get serviced somewhat often.

Since a few have asked me on that topic, I was told that my front group was coming unscrewed, and that it needed to be recentered. I was told it wasn’t a problem, a fairly easy fix, and not an uncommon occurrence over time. That’s not to suggest that the Canon 135L are all going to become decentered, but that after wear and tear, all lenses will need to be inspected and repaired. I’ll revise the piece to give more clarity on the topic…just wanted to address it here as well.

Don Farra ·

Roger, thank you for the interesting write up. A couple of questions: can a lens be de-centered by misuse? Like dropping the lens or during shipment. Second, how can folks at home test their lenses for being decentered? I hope that makes sense.

Marc P. ·

Even though it’s out of my price range, and I have no need for this focal range, it’s much appreciated that you do these kinds of tests; special thanks to Roger and Aaron. Photozone gave this new Sigma the whole shebang, a full 5 stars and a “highly recommended” rating. I’ve never seen this from Photozone for as long as I’ve been reading their site, which is more than 10 years now…

Anyway, I do hope Sigma finally releases the new Art 24-70/2.8 to the public, and then a comparison between this lens and the “mighty” Canon 24-70/2.8 L II USM, but also the (expected) G2 generation of Tamron’s 24-70/2.8 this late fall. I’d be very interested to read such an article from you lens-loving guys. 🙂

kind regards,

Marc

MEJazz ·

‘zooms aren’t primes’ but you did admit that the Canon 70-200/2.8 IS II is sharper than the Canon 200/2.8L II prime. It was a bit of a letdown that a zoom bested the prime…

Earl of Lade ·

I got this lens a few months back and it is superb!

https://uploads.disquscdn.com/images/4a243287cc3fbcdc245f76c58794089f971e1092a8f57303070f65171f2f0640.jpg https://uploads.disquscdn.com/images/b60b4ee52ec3ebf57563ef8c42861d122b94beea369cfe453c417f05b6ed163f.jpg