How to Expose Raw Files – Part 2

This is Part 2 of an article published last week. For the best understanding, please read part one by clicking here.

Last week, we talked a bit about how the camera exposes raw files and used an analogy of rainwater and buckets to explain that. Today, we’re going to dive into the topic more and discuss it with more practical techniques and understanding.

Putting this into Practice

The net of all this is that you want to set your camera to base ISO and get as much light on the sensor as you can without saturating it in areas where you want detail. How successful you are at doing that will depend on how bright the lighting is, how far you must stop down to get the depth of field you desire, and how fast a shutter speed you need to deal with camera and subject motion. But there’s an essential problem: how do you know when you’re saturating the camera sensor or clipping the highlights?

The news on that front is bad. None of your camera metering functions can reliably detect sensor saturation. If you have a mirrorless interchangeable lens camera (MILC), your two best tools are the highlight zebras and the histogram, but even they are flawed. Both the histogram and the zebras have the right idea. If you want to detect clipping, set the zebra threshold just below the clipping level and adjust your exposure – remember, we are at base ISO now – so that no meaningful highlights have the zebra warning. Or look at the right side of the histogram and adjust the exposure until the histogram contains data close to the right edge, but there’s no little spike at the right edge that would indicate that some of the image is clipped. The zebras have an advantage over the histogram in that they indicate where in the image the clipping occurs, and I find them easier to see in the finder.
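Both tools boil down to the same check: how many pixels sit at or above some threshold. Here is a minimal sketch of that idea, with made-up 8-bit preview values and a hypothetical function name; it says nothing about how any particular camera implements its zebras or histogram.

```python
def clipped_fraction(pixel_values, threshold):
    """Return the fraction of pixel values at or above the threshold."""
    values = list(pixel_values)
    if not values:
        return 0.0
    clipped = sum(1 for v in values if v >= threshold)
    return clipped / len(values)


# Illustrative 8-bit preview values (invented for this example).
preview = [12, 80, 150, 230, 250, 255, 255, 255]

# A "spike at the right edge" corresponds to a nonzero fraction at the top code.
print(clipped_fraction(preview, 255))  # 3 of 8 values are at 255 -> 0.375
```

Zebras apply the same comparison per pixel and paint the result on the image, which is why they show where the clipping is.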

Both the zebras and the histogram suffer from the same basic problem: they are derived from the preview image that you see in the finder of your MILC, or on the LCD screen when you review photos in your DSLR. The JPEG preview image is of lower resolution than the raw file, so you might miss some small blown-out areas. But that’s not the big problem; the wrong data is being displayed. You want to know when the raw file experiences clipping, and the zebras and histograms tell you when an sRGB or Adobe RGB JPEG image, processed as you’ve defined in your camera menus, is clipping.

Usually, but by no means always, the JPEG-derived histograms and zebras are conservative – they show clipping before the raw file is actually clipped. How conservative they are depends on the spectrum of the lighting and the white balance you’re using in the camera. Some manufacturers are more conservative than others; if you’re using a Nikon Z7 and you’re judging your exposure from the JPEG histogram (the Z7 doesn’t support zebras except in video mode), you’re likely to have raw files that are quite underexposed compared to what you could get away with if you set your exposure by the raw histogram.

Similarly, when you get your files into your raw developer, the histogram you see there won’t tell you how close you were to clipping a channel in the raw file. The histogram you see in Lightroom or Adobe Camera Raw is the histogram of the developed image. If you develop an image with all the controls left at their default settings, it’s the histogram of an image developed the way the program creators think most people will like most of the time.

To know how well you’re doing with your raw exposures, you must use a tool that lets you see the raw data directly. I use two such tools: RawDigger and Fast Raw Viewer. Both will show you raw histograms, but their, ahem, focus is different. Fast Raw Viewer is a culling tool with a raw histogram that makes it very useful for sorting. But you can also use it for quality control, to see if you are getting enough light on the sensor or if you have clipped some high values. RawDigger is a one-trick pony, and its trick is deep and varied analysis of raw files. It requires some learning, but it can yield lots of information.

Here’s an example of a raw histogram and the histogram you see in Lightroom. I shot this scene with a Nikon Z7.

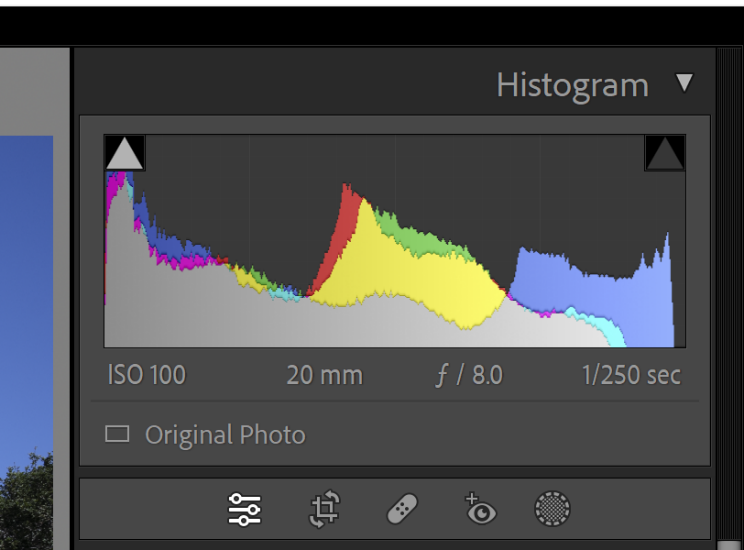

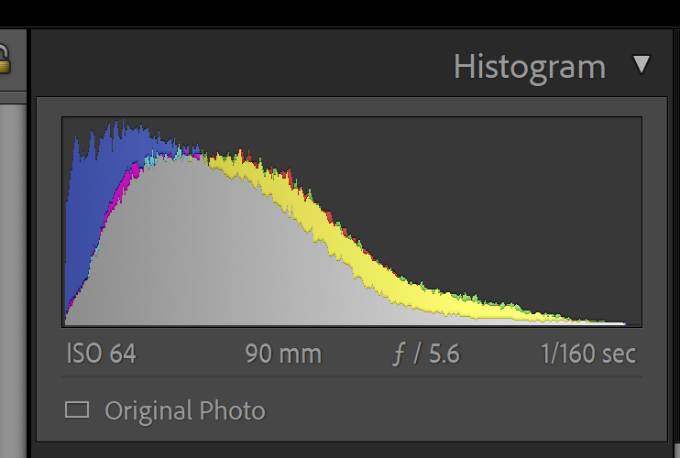

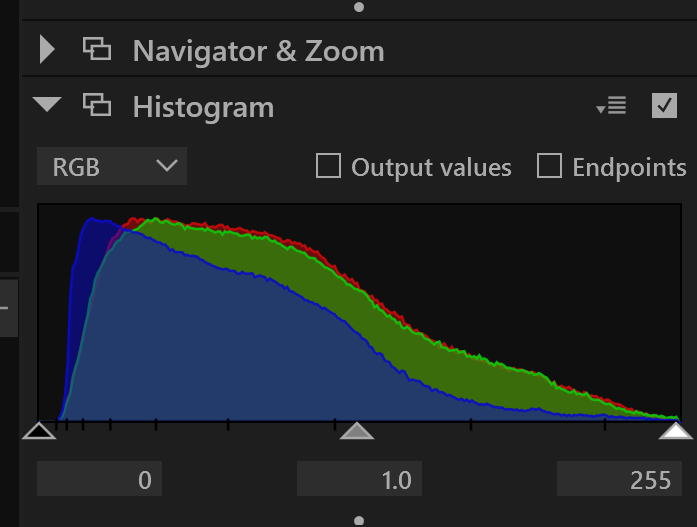

Here’s what the histogram in Adobe Camera Raw looks like with the default settings:

Looks like the blue channel is blown, doesn’t it?

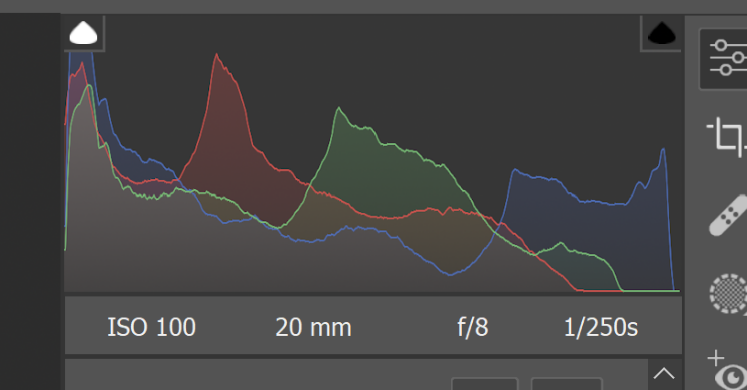

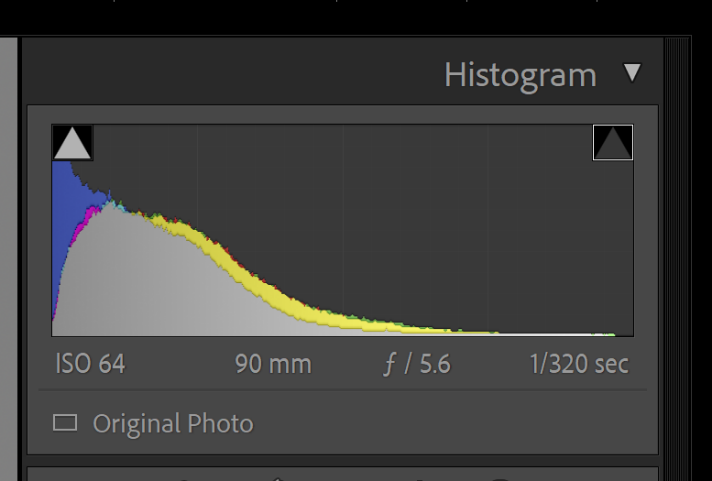

Here’s the Lightroom histogram with default settings:

Near the clipping point, it looks similar, as well it should, since Lightroom and ACR share the same core software; the differences are due to the different color spaces used by the two programs for histograms.

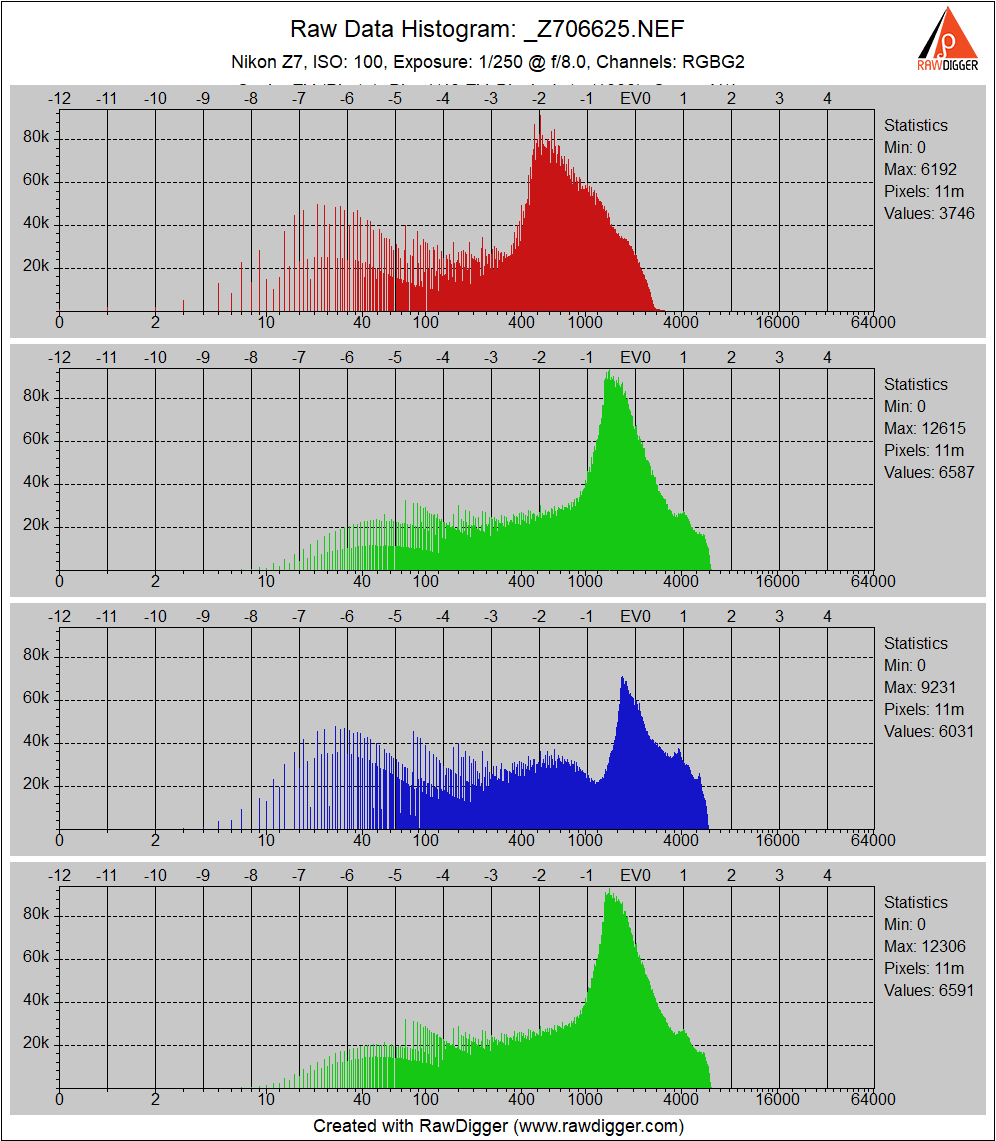

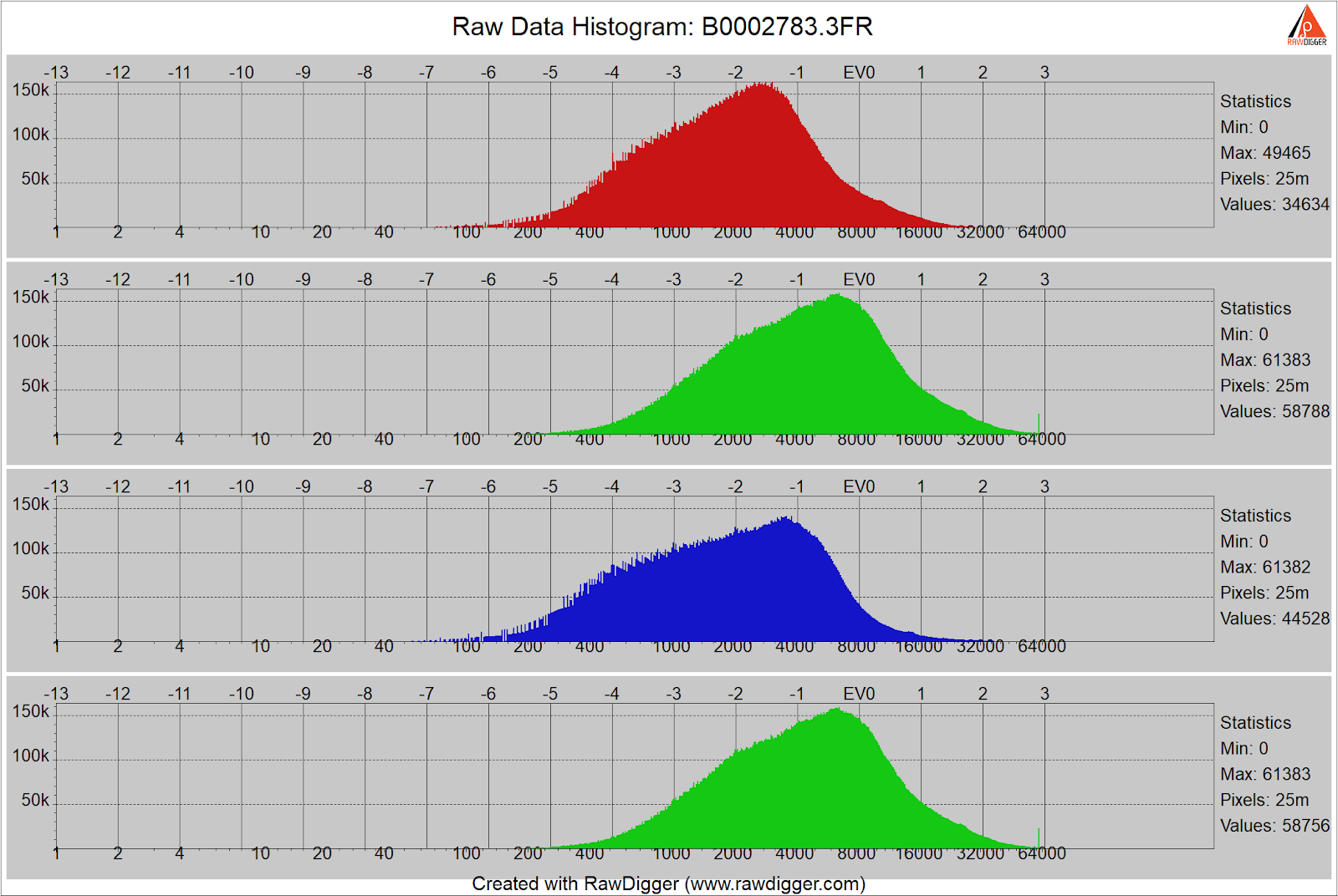

And here’s the raw histogram, courtesy of RawDigger:

Now we see that the blue raw channel is not the one with the highest values; that honor goes to the green raw channels. And most of the highlights in the blue and green raw channels are about a stop and a half from clipping, which occurs around 16000.
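To put a number on "how far from clipping," you can convert a raw value and the clipping level into stops of headroom. The sketch below is only an illustration: the function name is hypothetical, and the black-level parameter is an assumption (many cameras add an offset to raw values; set it to 0 if your raw tool already subtracts it).

```python
import math


def headroom_stops(raw_value, clip_level, black_level=0):
    """Stops of exposure that could be added before raw_value reaches clip_level."""
    signal = raw_value - black_level
    full_scale = clip_level - black_level
    if signal <= 0 or full_scale <= 0:
        raise ValueError("raw_value and clip_level must be above black_level")
    return math.log2(full_scale / signal)


# Clipping around 16000, as in the histogram above; 5657 is an illustrative
# highlight value chosen to sit about a stop and a half below that.
print(round(headroom_stops(5657, 16000), 2))
```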

There are things that can be done to make the in-camera histogram more like the raw histogram than it usually is. The most powerful of these is called Uni-WB. These techniques make the finder image look green on most cameras, and, though these approaches have been around for a while, they have not gained much traction in the photographic community.

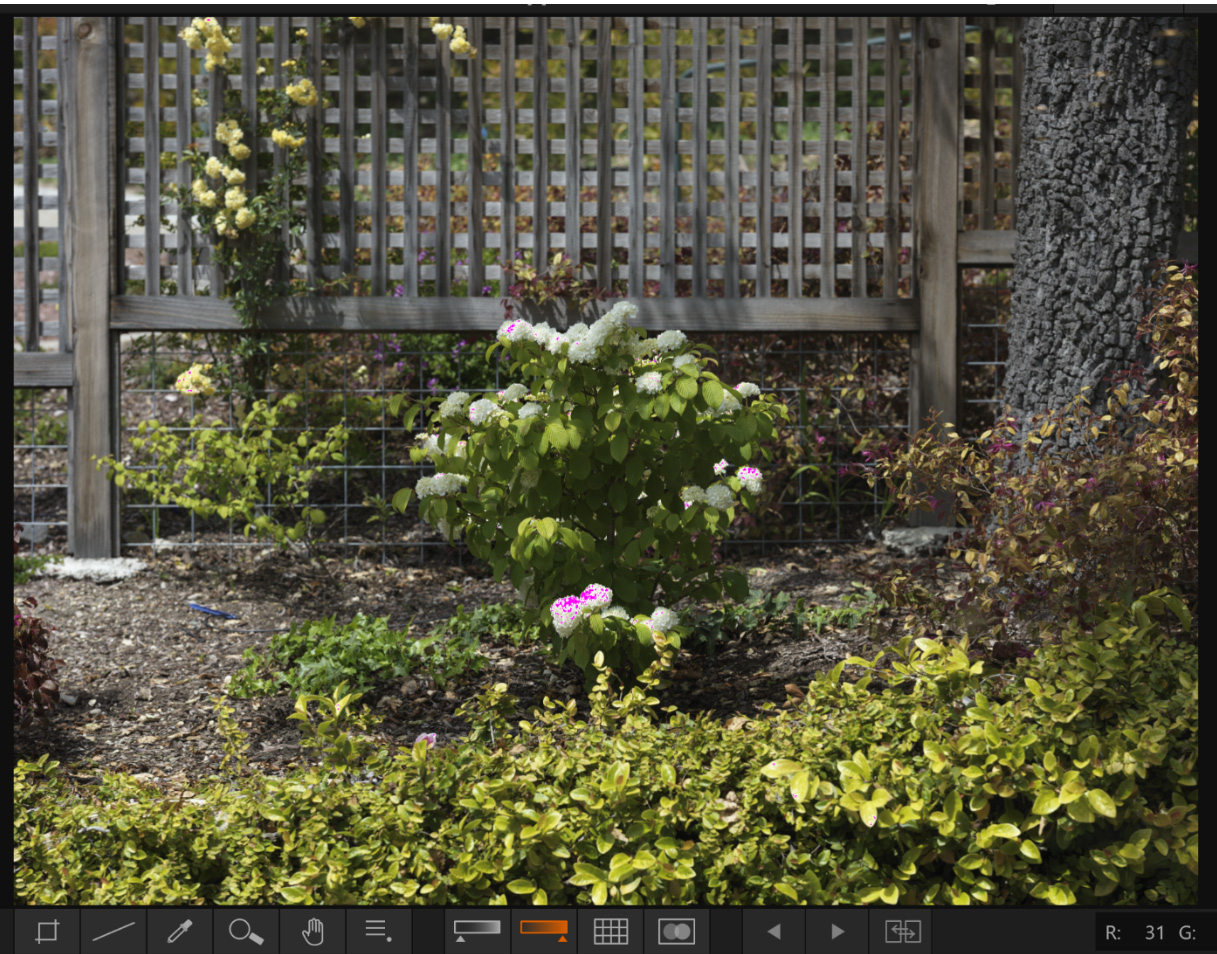

How much margin you have depends on the camera and the scene. Here’s an exposed-to-the-right (ETTR) shot with the Hasselblad X2D:

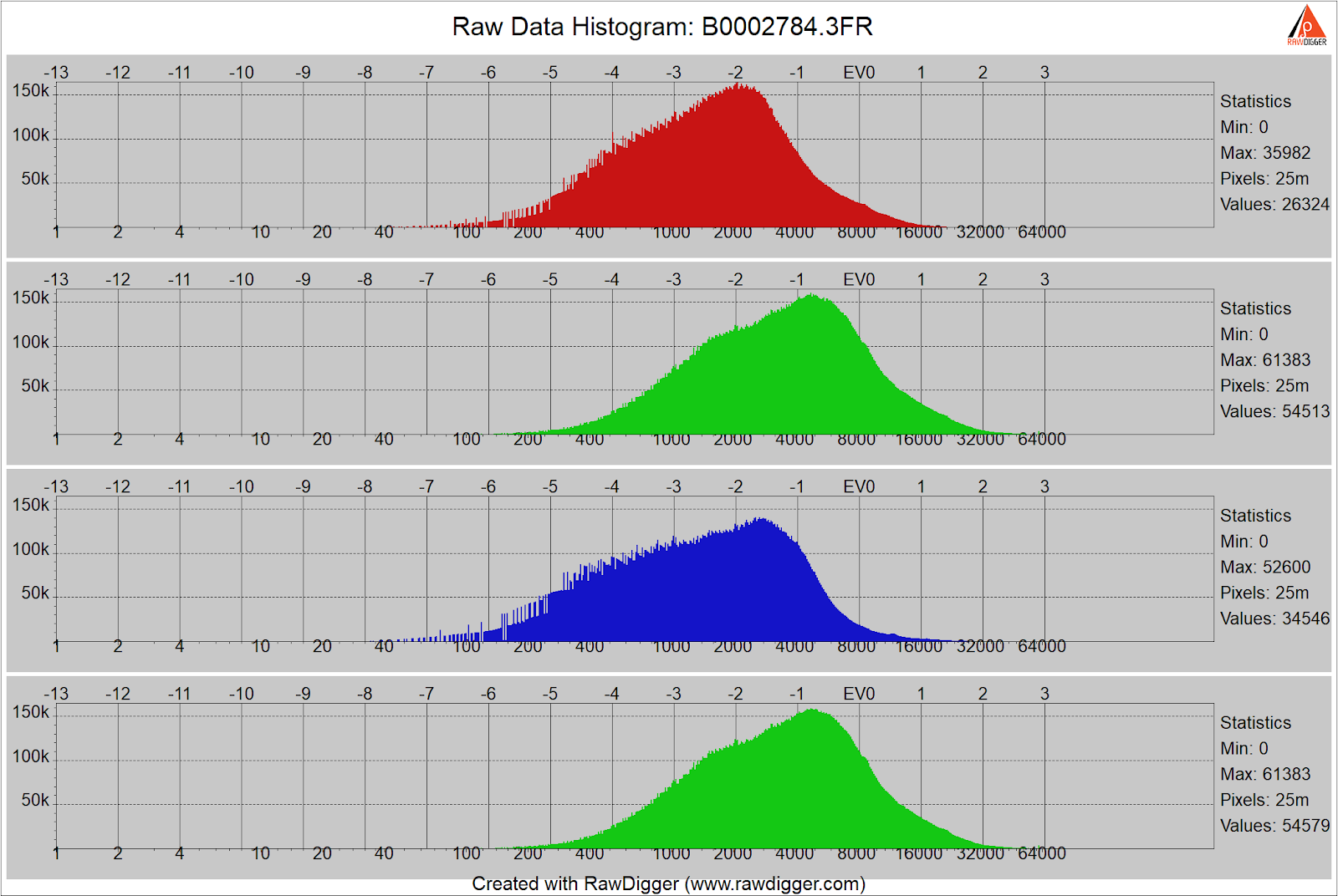

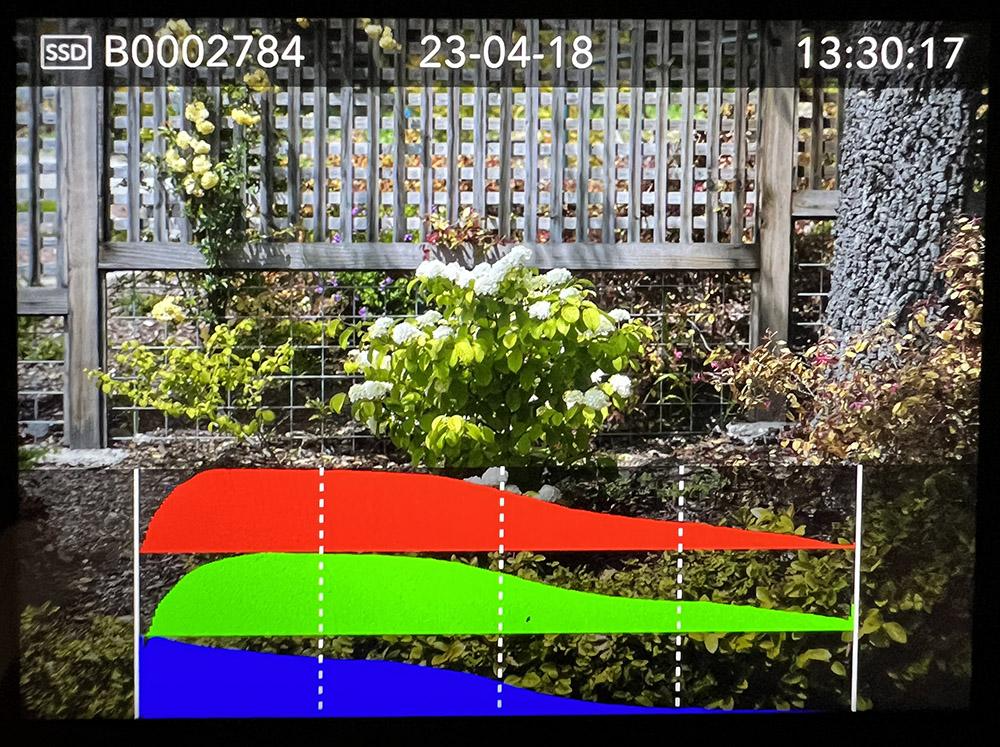

Here’s the raw histogram for that shot:

Just a third of a stop more exposure than that shows clipping in the green channels:

This camera saturates at just below 64000; see the spike in the green channels above?

Here’s the in-camera histogram for the ETTR image:

It looks like there is quite a bit of clipping, but the actual raw clipping is minimal. In fact, you must go to an image that is 1 2/3 stops underexposed from the ETTR image to get to the point where the in-camera histogram says there’s no clipping.

If we bring that image into Lightroom with default settings, here’s what we see:

In this case, the Lightroom histogram clipping point isn’t much different from the raw file clipping point. But the shot one stop underexposed from that also shows the histogram going all the way to the right.

Clearly, Lightroom is applying a shoulder to the highlights in the ETTR image.

If we look at the file with Hasselblad’s raw converter, Phocus, here’s the histogram we see with the default settings:

But when we turn the clipping warning on, some of the flowers are tinged in purple:

The take-home lesson is that you can’t be sure whether the raw file is blown – that’s bad – or just close to it – that’s good – without looking with some program that lets you see the raw data itself.

If you don’t want to look at the raw histograms, you probably won’t have any raw clipping for near-neutral objects lit with common light sources, though you’ll likely be underexposed from true raw ETTR. Be careful around bright colorful objects like flowers, though.

To review, here’s how to get optimum raw exposures:

- Set your ISO to base ISO.

- Set your f-stop to the stop you need to get the depth of field you want.

- Set your shutter speed to get as much or as little blur as you want.

- Check the histogram or the zebras to make sure you’re not going to clip any important highlights. If you are, stop down or set a faster shutter speed.

- If the histogram shows no information on the right side, consider boosting the ISO setting, but don’t be aggressive about that. If your camera offers dual conversion gain and you want to boost the detail in the deep shadows, you might consider setting the ISO to the lowest setting that gets the high conversion gain. Don’t plan for doing more than a four or five-stop push in postproduction.

Raw Development Implications

If you’re new to this kind of thinking about raw exposure, when you get your images into your favorite raw developer, you may – or may not – be surprised at what you see.

If you are making images of a typical scene and have not played games with your JPEG preview image controls, your JPEG preview image, its histogram, and the histogram of your raw converter at default settings will reflect a normal-looking capture with histograms that span almost the entire x-axis. Normal processing in your raw developer will work pretty much the way you’d expect it to work.

If you are shooting a low-contrast scene, you’ll end up with little information on the left side of the in-camera histogram (and the histogram of your raw converter at default settings), and you’ll probably have to darken the midtones and shadow areas in postproduction. But that should work out fine if you’ve gotten enough light on the sensor.

A high-contrast scene with bright highlights that need preserving will probably result in a capture whose JPEG preview image (and the histogram of your raw converter at default settings) is dark, and you’ll need to boost the shadows and midtones in post.

If you’re not interested in some technical details about noise, stop right here, grab your camera, and go make some well-exposed photographs.

Technical Discussion on Quantizing and Read Noise

I know that some of you won’t be satisfied by the raindrop analogy and associated hand-waving. Let’s look quantitatively at how much of a win the good part of increasing ISO buys us. This section assumes some technical knowledge.

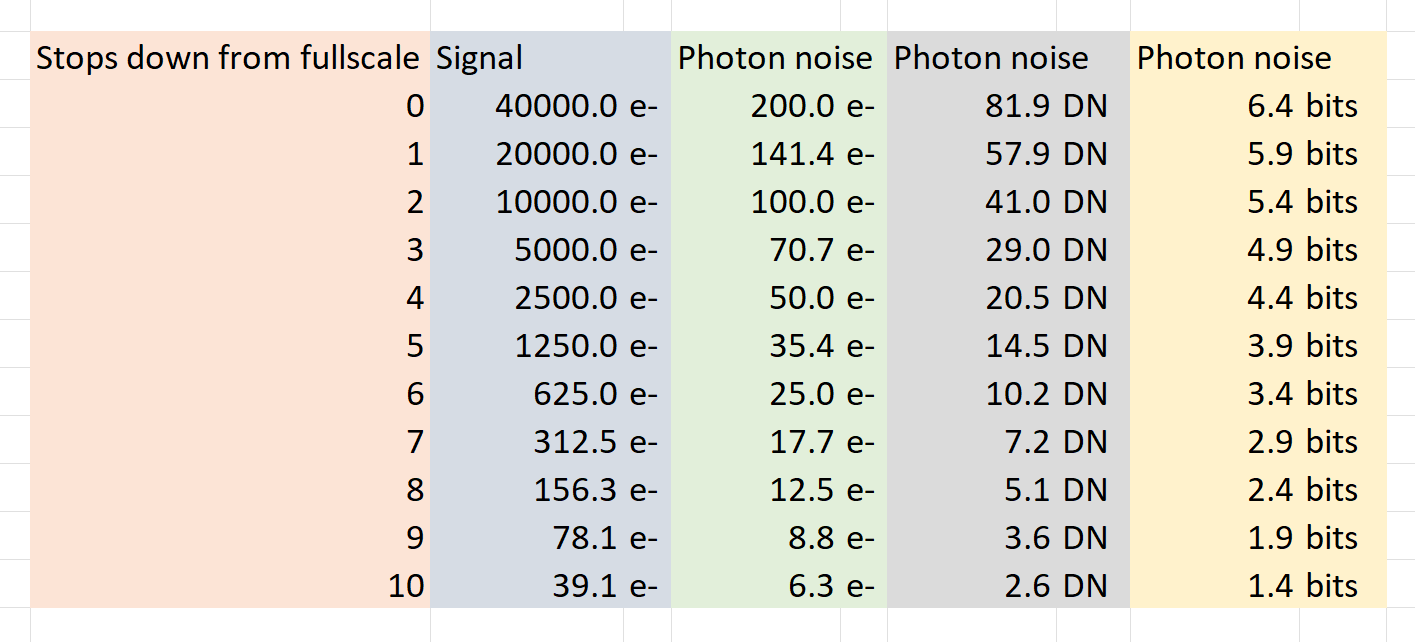

First, we’ll look at quantization. I’ll give numbers for a typical modern CMOS sensor: full well capacity (FWC) 40000 electrons (e-), base ISO 100, 14-bit ADC.

The first column is how far the signal level is below full scale – in stops. The second is the number of electrons in the signal. The third is the root mean square photon noise associated with that electron count. The fourth is the rms value of the photon noise in counts or data numbers. The last column is how many binary digits it would take to capture the rms value of the photon noise. The amount of noise necessary for adequate dither of an analog to digital converter is around 1 bit. Even 10 stops down from full scale the photon noise is dithering the ADC properly. The precision (number of bits) in the ADC is not a limiting factor in the accuracy of the camera. Since we have no read noise in the above analysis, if we looked at more stops down from full scale, we’d eventually get to the point where the photon noise does not provide adequate dither for the ADC.
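The column definitions above can be turned into a short calculation. This is a sketch under two assumptions that are not stated in the text: that ADC full scale corresponds to the full well capacity at base ISO, and that the last column is the base-2 logarithm of the noise in data numbers. The published table may round or define things slightly differently.

```python
import math

FULL_WELL_E = 40000  # electrons, from the typical-sensor numbers above
ADC_BITS = 14        # ADC precision, also from the text


def photon_noise_row(stops_down, full_well_e=FULL_WELL_E, adc_bits=ADC_BITS):
    """Return (signal e-, photon noise e-, photon noise DN, noise bits)."""
    signal_e = full_well_e / 2 ** stops_down
    noise_e = math.sqrt(signal_e)           # photon (shot) noise is sqrt(N)
    dn_per_e = 2 ** adc_bits / full_well_e  # assumed: full well maps to full scale
    noise_dn = noise_e * dn_per_e
    noise_bits = math.log2(noise_dn)        # assumed meaning of the last column
    return signal_e, noise_e, noise_dn, noise_bits


print("stops   signal_e  noise_e  noise_dn   bits")
for stops in range(0, 11):
    signal_e, noise_e, noise_dn, noise_bits = photon_noise_row(stops)
    print(f"{stops:>5} {signal_e:>10.1f} {noise_e:>8.2f} {noise_dn:>9.2f} {noise_bits:>6.2f}")
```

Under these assumptions, ten stops down the photon noise is still about 2.6 data numbers rms, comfortably above the roughly 1 bit needed for dither.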

I’ll look at read noise next, using the Fujifilm GFX 100S as an example. This camera uses a Sony backside illuminated CMOS sensor and is representative of modern sensor pixel designs. Sony, the dominant supplier of sensors for interchangeable lens cameras, uses virtually the same pixel design in sensors for APS-C, full-frame 35mm, 33x44mm medium format, and larger medium format cameras.

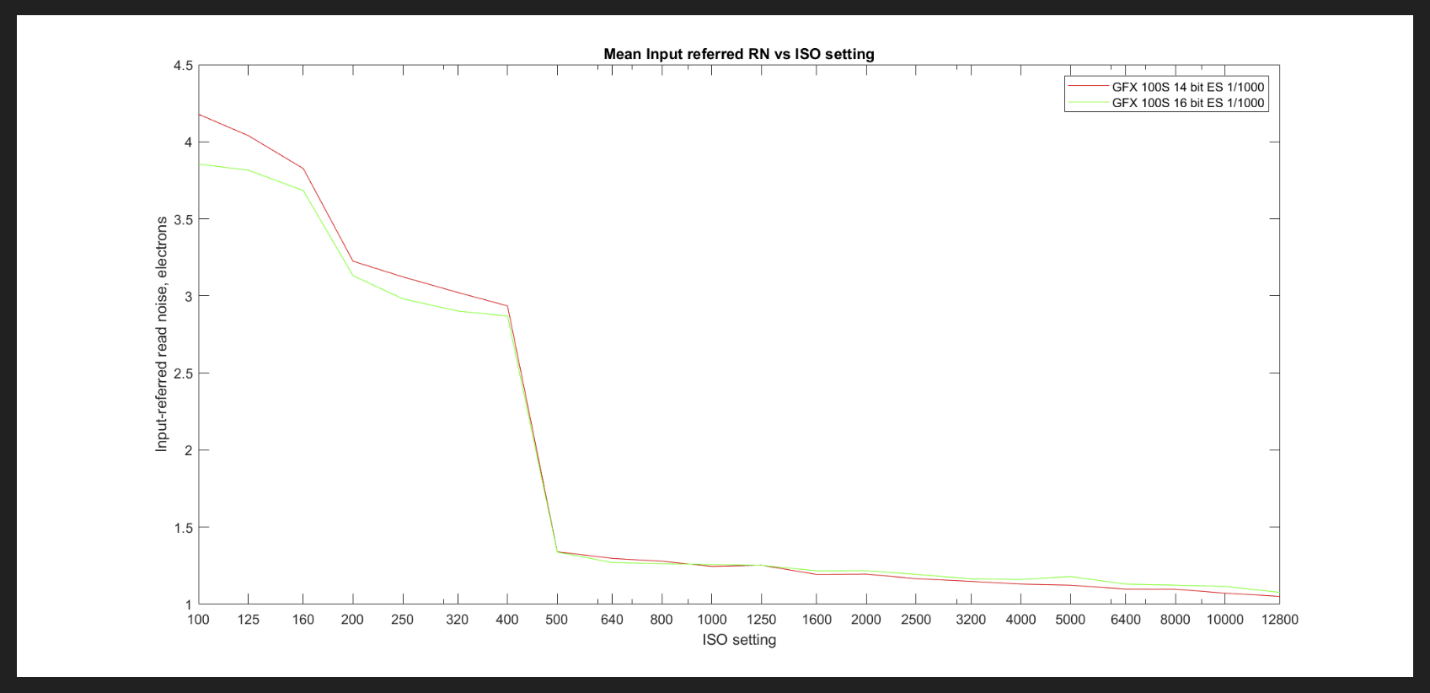

Here’s a graph of the input-referred read noise of the GFX 100S versus the ISO setting incremented in 1/3 stop steps, as measured by yours truly with the camera set for 14-bit and 16-bit precision:

What is input-referred read noise? It is a result of a calculation that uses a black field for the subject and analyzes the noise in the resultant raw file, then calculates how much electron noise on the capacitor in the photodiode there would have to be if that were the only noise in the system. The rain gauge analogy would be calculating the ripple noise in the various-sized buckets in terms of the number of raindrops that would have to fall into the funnel to create that effect. The numbers on the y-axis of this graph are directly comparable to the green column in the photon noise table above.

When you have two uncorrelated noise sources – and photon noise and read noise are uncorrelated – they add as the square root of the sum of the squares. That means that if one source of noise is quite a bit greater than the other, virtually all the noise is coming from that source. Nine stops down from full scale, the photon noise is 8.8 electrons. At ISO 100, the read noise is 4.2 electrons. The total noise is sqrt(8.8^2+4.2^2) or 9.75 electrons.
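The root-sum-of-squares rule is easy to check numerically. A minimal sketch using the figures from the paragraph above; the function name is just for illustration.

```python
import math


def total_noise(photon_noise_e, read_noise_e):
    """Combine two uncorrelated noise sources in quadrature (electrons rms)."""
    return math.hypot(photon_noise_e, read_noise_e)


# Nine stops down from full scale at ISO 100, using the numbers in the text.
print(round(total_noise(8.8, 4.2), 2))  # 9.75

# When one source dominates, the smaller one barely moves the total.
print(round(total_noise(8.8, 1.0), 2))
```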

What if the image is completely dark? Then there’s no photon noise at all. The ISO 100 read noise is 4.4 electrons; that’s almost 2 least significant bits, which is plenty for dithering the ADC. The other place to look on the curve is ISO 500, where the higher of the two conversion gains the sensor offers kicks in and the read noise drops to around 1.4 electrons. That’s almost 3 least significant bits, which is more than enough dither.
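The conversion from electrons to least significant bits can be sketched as follows. It assumes the typical-sensor numbers used earlier (40000 e- full well, 14-bit ADC, base ISO 100) and that the electrons-per-LSB figure scales inversely with the ISO setting; both are simplifying assumptions rather than measured properties of the GFX 100S.

```python
def read_noise_lsb(read_noise_e, iso, full_well_e=40000, adc_bits=14, base_iso=100):
    """Express input-referred read noise (electrons) in ADC least significant bits."""
    e_per_lsb_at_base = full_well_e / 2 ** adc_bits  # about 2.44 e- per LSB
    e_per_lsb = e_per_lsb_at_base * base_iso / iso   # assumed: gain tracks ISO
    return read_noise_e / e_per_lsb


print(round(read_noise_lsb(4.4, 100), 2))  # almost 2 LSB
print(round(read_noise_lsb(1.4, 500), 2))  # almost 3 LSB
```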

Glossary

Dual conversion gain. Conversion gain is the ratio of the voltage on the photodiode capacitor to the number of electrons captured. The conversion gain is set by the capacitance, with lower capacitance producing higher gain. High capacitance allows lower photon noise if enough light falls on the sensor and is desirable at base ISO. Lower capacitance allows lower read noise and is desirable at higher ISOs. Dual conversion gain sensors became popular about 10 years ago, and allow the capacitance to change with ISO setting, thus in some sense allowing the best of both worlds.

Full well capacity. Full well capacity (FWC) is the charge that can be stored in a sensor pixel before the pixel saturates. It is measured in electrons (e-). Modern CMOS sensors have FWCs of about 3000 electrons per square micrometer.
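As a rough sanity check, the per-area figure can be turned into a per-pixel estimate. The sketch assumes a square pixel whose whole area counts toward the well, and the 3.76 µm pitch is only an illustrative value.

```python
def estimate_fwc(pixel_pitch_um, electrons_per_um2=3000):
    """Estimate full well capacity (electrons) from pixel pitch in micrometers."""
    area_um2 = pixel_pitch_um ** 2  # assumed: square pixel, full area usable
    return area_um2 * electrons_per_um2


# Illustrative pitch; the result lands near the 40000 e- "typical" figure above.
print(round(estimate_fwc(3.76)))
```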

Histogram. A graphical representation of the distribution of numerical data. The x-axis contains intervals, sometimes called buckets. The y-axis plots how many items have values in the range of each bucket. In the histograms presented by cameras, what’s plotted is either scene luminance for the whole frame, or each of the JPEG color channels, also for every pixel in the JPEG preview image. Usually, the in-camera histogram x-axis is linear in the tone curve of the JPEG color space the user selected, which means that middle grays are more or less in the middle of the x-axis. In-camera histograms have linear y-axes. The histogram in Photoshop is in the working color space. The histogram in Lightroom is in the ProPhoto RGB color space, except that it uses the sRGB tone curve. Raw file analyzers like RawDigger offer many ways to bin the image values into buckets and display the results.

Pixel. Short for picture element; the most basic component of an image. In a sensor, the photodiode electronics associated with the resetting, detection, conversion of electrons to voltage, buffering, and the first stage of switching for one picture element are collectively referred to as a pixel.

Precision. In computer science, the precision of a numerical quantity is a measure of the detail in which the quantity is expressed. The units are usually binary digits, or bits. The analog to digital converters in cameras output an unsigned binary number. The length of that number in bits is the precision of the ADC. Some people refer to precision as bit depth.

23 Comments

Ted Orland ·

Thanks for the wonderful pair of essays on this subject! I do have one question (albeit still poorly-formed, so treat it as semi-rhetorical). As I read it, my Fuji APS camera’s live EVF displays a scene’s image tones as they would appear in a jpeg file. So, if I modify my camera’s live EVF settings — e.g. setting highlights to -2, and shadows to +3 via the onscreen menu — does that also modify the resulting live histogram (or the zebra-stripes) that is displayed via the EVF? Basically, what I’m looking for — in the go/no-go moment of decision before tripping the shutter — is the clearest display of all the shadow & highlight detail that will ALSO be available in the RAW file being generated by that exposure.

b____k ·

A couple of thoughts:

1) are you modifying your EVF settings as you stated, or are you modifying your actual JPG processing settings? Most of the time the EVF brightness and color changes have nothing to do with the histogram & zebras, whereas the JPG settings WILL affect the EVF feed, unless you have “natural live view” or its equivalent turned on. So as long as your EVF is still WYSIWYG JPG-wise, your live zebras and histograms should respect your JPG settings.

2) different cameras may handle these nuances in different ways, especially depending on other chosen settings. It would be best to find an unchanging scene and test this yourself with a fixed exposure, using a few shots taken with highlights and shadows set to their low and high extremes.

Ted Orland ·

Yes, thanks, I agree — a control test is the logical next step (and fairly straightforward in this instance).

Jim Kasson ·

Yes, the histogram in the EVF is computed from the EVF preview image. So are the zebras. So anything that affects the EVF preview image will also affect the histograms and the zebras. But adjustments to the EVF preview image don’t affect the raw data.

Frank Kolwicz ·

I’ll have to wait and see where Jim Kasson goes with this in his 3rd installment to determine if it’s useful for me, but, I’ve read all of Roger N. Clark’s similarly comprehensive technical exposition of how the system works and have only found it to be of limited practicality.

I did extract one gem from Clark’s work on image noise: for my purposes it is more useful to simply find the highest ISO that fits my workflow and final use without requiring too much effort at noise reduction. What that amounts to is the ability to use default Lightroom noise/sharpening settings for all but a few images to be printed at 300dpi and 16×20 inch size. For my old 5dSr that was ISO 1600 and for my R5 it’s ISO 6400. One ISO stop higher and I have to do one or more additional selective noise reductions and re-sharpen to get a good printable image.

I’ve seen people destroy images with really bad blurring because they’re trying to hold to base ISO because of some rule they mistakenly apply religiously without understanding the implications for overall image quality.

For me, a noiseless blur is less acceptable than a sharp, somewhat noisy image. There’s a balance.

Jim Kasson ·

For raw files, at the same exposure, increasing ISO setting on the camera does not increase noise in the image.

I said to pick the exposure first, then pick the ISO setting.

Jim Kasson ·

Right now there is no third installment. If I do one, what should I cover? I’m thinking about some postproduction examples.

Frank Kolwicz ·

I think a variety of examples would be a good idea.

Translating theory and data into concrete imagery works best for me and, assuming I’m not alone, others like me.

Specifically, examples might include all the hardware choices, ambient conditions and post-processing decisions that will affect different photographic image types: lens and camera sensor; subject; lighting; intended final use. For my case that means a long focal length lens, high megapixel body, an often evasive subject, lighting that can vary drastically from frame to frame and turning it all into a satisfying final print. YMMV.

Jim Kasson ·

That sounds more like a book than a blog post.

Jim Kasson ·

>I think a variety of examples would be a good idea.

Try these:

https://blog.kasson.com/the...

https://blog.kasson.com/the...

https://blog.kasson.com/the...

Civilitas ·

Thanks for two really great essays!

A few years ago, on the RawDigger blog, there was a discussion of the differences between digital gain in a raw converter like Lightroom, and the analog gain that happens in camera. (please see https://www.rawdigger.com/h... ) . Since reading that article, I have not been following the "use base iso or dual-gain iso and then push in lightroom" strategy. Instead, I have been trying to get the ISO "correct" in camera. Wondering what your thoughts are on this issue?

[Of course, your point about the camera histogram not being correct with respect to RAW clipping is absolutely well-taken!]

Civilitas ·

Thanks for two really great essays!

A few years ago, on the RawDigger blog, there was a discussion of the differences between digital gain in a RAW converter like Lightroom, and the analog gain that happens in camera. (please see the RawDigger website, where you can find the article – I don’t think I can post a link here without violating a comment restriction) Since reading that article, I have not been following the “use base iso or dual-gain iso and then push in lightroom” strategy. Instead, I have been trying to get the ISO “correct” in camera, to take the greatest possible advantage of analog gain. Wondering what your thoughts are on this issue?

[Of course, your point about the camera histogram not being correct with respect to RAW clipping is absolutely well-taken!]

Jim Kasson ·

Look at the read noise vs ISO graph above. After the camera switches to the high conversion gain, there is little improvement in read noise obtainable by turning up the ISO further. This is typical behavior for dual conversion gain cameras.

grubernd ·

Very nice overview. Well written, thank you.

For general photography, reportage etc I set my camera to something between -1/3 to -2 EV and be done.

This has been working rather well all the time, but since I switched to using darktable with the scene-referred workflow results have been absolutely mind blowing. I have been able to balance images in ways with just a few settings that would require local adjustments or other extra steps in the traditional raw converters.

The new darktable pipeline does not have hard stops at black and white anymore but those are floating. There are tools in the pipeline to match the overall dynamic into a viewable experience, either filmic (fiddly and overly complicated) or sigmoid (amazingly simple with even better results).

And with this pipeline the colors stay absolutely consistent across the board. With a so-called iso-invariant camera there is not even a downside in heavy underexposure and correcting in post instead of raising the ISO in camera. Which seems to go against what you wrote in your article, but here we stay with the low ISOs no matter what.

Of course for critical work you want to photograph a bracket and then pick the image that just gives enough headroom and have reasonable highlights after using “inpaint opposed”.

Jim Kasson ·

“And with this pipeline the colors stay absolutely consistent across the board. With a so-called iso-invariant camera there is not even a downside in heavy underexposure and correcting in post instead of raising the ISO in camera.”

Sounds like there’s no profile twist with the Darktable profiles. That’s good for the more extreme pushes.

grubernd ·

I have pushed the files from my D500 up to 5 stops with no noticeable color shifts compared to more moderate settings. Of course the noise reduction has to follow suit, because at iso100 +5EV one is effectively dealing with iso3200 data.

Jim Kasson ·

“Of course for critical work you want to photograph a bracket and then pick the image that just gives enough headroom and have reasonable highlights after using “inpaint opposed”.”

At base ISO, right? I don’t know what “inpaint opposed” is.

grubernd ·

Sorry for the jargon. “Inpaint opposed” is one of the highlight recovery algorithms available in darktable. In my experience it makes for a very seamless transition to the blown parts even with tiny specular highlights – which are rather often a giveaway for digital processing.

Matt M ·

Thank you for the informative posts. I have been trying to develop a better sense of how many 1/3rds of a stop I can expose beyond the right edge of my camera histogram at base ISO and lowest dual-gain ISO without going the UniWB route. Would you consider commenting on my strategy for using my in-camera histogram and Fast Raw Viewer (FRV) to achieve ETTR images on a consistent basis? Let’s assume that most of my images are outdoor medium to high contrast scenes, and my camera’s histogram is based on a neutral picture control setting and auto (natural light) white balance.

Step 1: I start by making multiple images at base ISO of the same static scene beginning with the histogram pushed to the right, but not beyond. For each subsequent image, I decrease shutter speed by 1/3 stop until I’m exposing well beyond the right side of my camera’s histogram.

Step 2: Open the raw files in FRV and use the FRV Raw Histogram and Exposure Stats to determine how many stops beyond the right side of my in-camera histogram I can go before I saturate the channels.

Step 3: Repeat steps 1-2 using the lowest dual-gain ISO setting.

Again, the idea is to develop a general sense of how far beyond the right edge of my camera histogram I can go without truly blowing the highlights in a “typical” scene for my shooting. In practice I might choose to reduce exposure by about 1/3rd of a stop from the estimated maximum if I don’t have the opportunity to bracket. What am I missing?

Jim Kasson ·

That sounds good, but you should know if the lighting changes or the highlights are not quasi-neutral, the differences between the in-camera histogram and the raw histogram will change. I usually don’t try to get past half a stop down from true raw ETTR if I’m not bracketing.

By the way, don’t use automatic white balance if you want the relationship between the in-camera histogram and the raw histogram to be fixed.

T N Args ·

I find the zebra exposure set to 109+ on my Sony FF camera acts as a raw clipping indicator, live on screen while shooting. It is incredibly useful. And whenever I check it against RawDigger I see that it is accurate for practical purposes.

cheers

Deniz ·

This is what I do with my Sony A1. It is EVEN more accurate if you use the B&W profile, which is a nicer way to visualise the lighting in your scene too.

Deniz ·

Why is it that no modern camera has an option to show a RAW histogram when RawDigger can manage it?